Hands-On: A High-Availability Cluster with Hadoop 2.6.3 + ZooKeeper 3.4.6 + HBase 1.0.2

I. Pre-installation preparation

1. Environment: five servers

2. Edit the hosts file

[root@hadoop01 ~]# cat /etc/hosts

192.168.10.201 hadoop01

192.168.10.202 hadoop02

192.168.10.203 hadoop03

192.168.10.204 hadoop04

192.168.10.205 hadoop05
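
Because the five addresses and host names follow a regular pattern, the entries can also be generated rather than typed. The following is a minimal sketch; the function name `gen_hosts` is made up for illustration and is not part of any tool.

```shell
# Print /etc/hosts entries for hadoop01..hadoop05 (192.168.10.201-205).
gen_hosts() {
    i=1
    while [ "$i" -le 5 ]; do
        printf '192.168.10.%d hadoop%02d\n' "$((200 + i))" "$i"
        i=$((i + 1))
    done
}

gen_hosts
```

After reviewing the output, it could be appended on each node with `gen_hosts >> /etc/hosts`.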

3. Passwordless SSH login

Run on every node:

[root@hadoop01 ~]# mkdir -p ~/.ssh

[root@hadoop01 ~]# chmod 700 ~/.ssh

[root@hadoop01 ~]# cd ~/.ssh/

[root@hadoop01 .ssh]# ssh-keygen -t rsa

Once all five nodes have generated their keys, collect the public keys into a single authorized_keys file on hadoop01:

[root@hadoop01 .ssh]# ssh hadoop02 cat /root/.ssh/id_rsa.pub >> authorized_keys

[root@hadoop01 .ssh]# ssh hadoop03 cat /root/.ssh/id_rsa.pub >> authorized_keys

[root@hadoop01 .ssh]# ssh hadoop04 cat /root/.ssh/id_rsa.pub >> authorized_keys

[root@hadoop01 .ssh]# ssh hadoop05 cat /root/.ssh/id_rsa.pub >> authorized_keys

[root@hadoop01 .ssh]# ssh hadoop01 cat /root/.ssh/id_rsa.pub >> authorized_keys

[root@hadoop01 .ssh]# chmod 600 authorized_keys

[root@hadoop01 .ssh]# scp authorized_keys hadoop02:/root/.ssh/

[root@hadoop01 .ssh]# scp authorized_keys hadoop03:/root/.ssh/

[root@hadoop01 .ssh]# scp authorized_keys hadoop04:/root/.ssh/

[root@hadoop01 .ssh]# scp authorized_keys hadoop05:/root/.ssh/
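
The four scp commands above differ only in the host name, so they can be written as a loop. This is a sketch only: the `RUN` variable and the `distribute_keys` function are illustrative names, and by default the commands are printed instead of executed.

```shell
# RUN defaults to "echo", so the commands are only printed (dry run).
# Set RUN= (empty) before running to execute them for real.
RUN=${RUN-echo}

# Copy the combined authorized_keys file from hadoop01 to the other nodes.
distribute_keys() {
    for n in 02 03 04 05; do
        $RUN scp authorized_keys "hadoop$n:/root/.ssh/"
    done
}

distribute_keys
```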

Test the SSH trust:

[root@hadoop01 .ssh]# ssh hadoop02 date

Mon Aug 8 11:07:23 CST 2016

[root@hadoop01 .ssh]# ssh hadoop03 date

Mon Aug 8 11:07:26 CST 2016

[root@hadoop01 .ssh]# ssh hadoop04 date

Mon Aug 8 11:07:29 CST 2016

[root@hadoop01 .ssh]# ssh hadoop05 date

5. Time synchronization (all five nodes)

yum -y install ntpdate

[root@hadoop01 .ssh]# crontab -l

0 * * * * /usr/sbin/ntpdate 0.rhel.pool.ntp.org && /sbin/clock -w

Any other time-synchronization method can be used instead.

6. Raise the open-file limit (all five nodes)

[root@hadoop01 ~]# vi /etc/security/limits.conf

root soft nofile 65535

root hard nofile 65535

root soft nproc 32000

root hard nproc 32000

[root@hadoop01 ~]# vi /etc/pam.d/login

session required pam_limits.so

Reboot the system after making these changes.
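
After the reboot, the limit can be checked from a fresh root login. A small sketch, assuming the 65535 value from limits.conf above; the helper `check_limit` is a hypothetical name.

```shell
# Compare a current limit with the value expected from limits.conf.
# Usage: check_limit <label> <current> <expected>
check_limit() {
    if [ "$2" = "unlimited" ] || [ "$2" -ge "$3" ]; then
        echo "$1 ok ($2)"
    else
        echo "$1 too low ($2 < $3)"
        return 1
    fi
}

# Report only; "|| true" keeps the script going when the limit is low.
check_limit nofile "$(ulimit -n)" 65535 || true
```

In bash, the nproc setting can be checked the same way by passing `"$(ulimit -u)"` and 32000.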

II. Install Hadoop + ZooKeeper HA

1. Install the JDK (all five nodes)

Unpack the JDK:

[root@hadoop01 ~]# cd /opt

[root@hadoop01 opt]# tar zxvf jdk-7u21-linux-x64.tar.gz

[root@hadoop01 opt]# mv jdk1.7.0_21 jdk

Add the JDK to the environment variables in /etc/profile:

[root@hadoop01 opt]# vi /etc/profile

#java

JAVA_HOME=/opt/jdk

PATH=$JAVA_HOME/bin:$PATH

CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

export JAVA_HOME

export PATH

export CLASSPATH

Apply the configuration:

[root@hadoop01 opt]# source /etc/profile

[root@hadoop01 opt]# java -version

java version "1.7.0_21"

Java(TM) SE Runtime Environment (build 1.7.0_21-b11)

Java HotSpot(TM) 64-Bit Server VM (build 23.21-b01, mixed mode)

The output above confirms that the JDK is active.

2. Unpack Hadoop and set the environment variables

[root@hadoop01 ~]# tar zxvf hadoop-2.6.3.tar.gz

[root@hadoop01 ~]# mkdir /data

[root@hadoop01 ~]# mv hadoop-2.6.3 /data/hadoop

[root@hadoop01 data]# vi /etc/profile

##hadoop

export HADOOP_HOME=/data/hadoop

export PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

[root@hadoop01 data]# source /etc/profile

3. Edit the Hadoop configuration files

[root@hadoop01 data]# cd /data/hadoop/etc/hadoop/

[root@hadoop01 hadoop]# vi slaves

hadoop01

hadoop02

hadoop03

hadoop04

hadoop05

hadoop01 and hadoop02 are listed here too, so that their disk space is also used; adding them is optional.

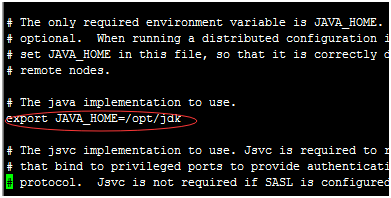

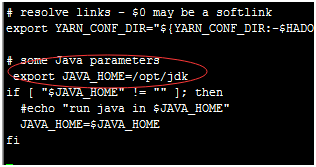

[root@hadoop01 hadoop]# vi hadoop-env.sh

[root@hadoop01 hadoop]# vi yarn-env.sh

Edit core-site.xml:

[root@hadoop01 hadoop]# vi core-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://cluster</value>

<description>The name of the default file system.</description>

<final>true</final>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/data/hadoop/tmp</value>

</property>

<property>

<name>ha.zookeeper.quorum</name>

<value>hadoop01:2190,hadoop02:2190,hadoop03:2190,hadoop04:2190,hadoop05:2190</value>

</property>

<property>

<name>io.file.buffer.size</name>

<value>2048</value>

</property>

<property>

<name>dfs.ha.fencing.methods</name>

<value>sshfence</value>

</property>

<property>

<name>dfs.ha.fencing.ssh.private-key-files</name>

<value>/root/.ssh/id_rsa</value>

</property>

</configuration>
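
The `ha.zookeeper.quorum` value is a comma-separated list of host:port pairs, and the port must match `clientPort` in zoo.cfg (2190 in this setup). As a sketch, the string can be built from a host list so that separators are placed consistently; `zk_quorum` is an illustrative helper name, not a Hadoop or ZooKeeper command.

```shell
# Join host names into "host1:port,host2:port,..." with no trailing comma.
# Usage: zk_quorum <port> <host>...
zk_quorum() {
    port=$1; shift
    out=""
    for h in "$@"; do
        out="${out:+$out,}$h:$port"
    done
    printf '%s\n' "$out"
}

zk_quorum 2190 hadoop01 hadoop02 hadoop03 hadoop04 hadoop05
```

The same string is what `yarn.resourcemanager.zk-address` in yarn-site.xml expects.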

Edit hdfs-site.xml:

[root@hadoop01 hadoop]# vi hdfs-site.xml

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>dfs.nameservices</name>

<value>cluster</value>

</property>

<property>

<name>dfs.ha.namenodes.cluster</name>

<value>nn1,nn2</value>

</property>

<property>

<name>dfs.namenode.rpc-address.cluster.nn1</name>

<value>hadoop01:8020</value>

</property>

<property>

<name>dfs.namenode.rpc-address.cluster.nn2</name>

<value>hadoop02:8020</value>

</property>

<property>

<name>dfs.namenode.http-address.cluster.nn1</name>

<value>hadoop01:50070</value>

</property>

<property>

<name>dfs.namenode.http-address.cluster.nn2</name>

<value>hadoop02:50070</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.cluster.nn1</name>

<value>hadoop01:53333</value>

</property>

<property>

<name>dfs.namenode.servicerpc-address.cluster.nn2</name>

<value>hadoop02:53333</value>

</property>

<property>

<name>dfs.namenode.shared.edits.dir</name>

<value>qjournal://hadoop01:8485;hadoop02:8485;hadoop03:8485;hadoop04:8485;hadoop05:8485/cluster</value>

</property>

<property>

<name>dfs.client.failover.proxy.provider.cluster</name>

<value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>

</property>

<property>

<name>dfs.journalnode.edits.dir</name>

<value>/data/hadoop/mydata/journal</value>

</property>

<property>

<name>dfs.replication</name>

<value>3</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>file:/data/hadoop/mydata/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>file:/data/hadoop/mydata/data</value>

</property>

<property>

<name>dfs.ha.automatic-failover.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.webhdfs.enabled</name>

<value>true</value>

</property>

<property>

<name>dfs.journalnode.http-address</name>

<value>0.0.0.0:8480</value>

</property>

<property>

<name>dfs.journalnode.rpc-address</name>

<value>0.0.0.0:8485</value>

</property>

<property>

<name>dfs.permissions</name>

<value>false</value>

</property>

</configuration>
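
The `dfs.namenode.shared.edits.dir` value lists the JournalNodes separated by semicolons, followed by the nameservice ID. A quorum of N JournalNodes tolerates (N - 1) / 2 failures, so the five used here tolerate two. The sketch below reproduces both; the function names are illustrative only.

```shell
# Build "qjournal://h1:8485;h2:8485;.../<nameservice>" from a host list.
# Usage: qjournal_uri <nameservice> <host>...
qjournal_uri() {
    ns=$1; shift
    list=""
    for h in "$@"; do
        list="${list:+$list;}$h:8485"
    done
    printf 'qjournal://%s/%s\n' "$list" "$ns"
}

# Number of JournalNode failures a quorum of N can tolerate: (N - 1) / 2.
tolerated_failures() {
    echo $(( ($1 - 1) / 2 ))
}

qjournal_uri cluster hadoop01 hadoop02 hadoop03 hadoop04 hadoop05
tolerated_failures 5
```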

Edit mapred-site.xml:

[root@hadoop01 hadoop]# vi mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<!-- Put site-specific property overrides in this file. -->

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

<property>

<name>mapreduce.cluster.temp.dir</name>

<value>/data/hadoop/mydata/mr_temp</value>

</property>

<property>

<name>mapreduce.jobhistory.address</name>

<value>hadoop01:10020</value>

</property>

<property>

<name>mapreduce.jobhistory.webapp.address</name>

<value>hadoop01:19888</value>

</property>

</configuration>

Edit yarn-site.xml:

[root@hadoop01 hadoop]# vi yarn-site.xml

<?xml version="1.0"?>

<!--

Licensed under the Apache License, Version 2.0 (the "License");

you may not use this file except in compliance with the License.

You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License. See accompanying LICENSE file.

-->

<configuration>

<!-- Site specific YARN configuration properties -->

<property>

<name>yarn.resourcemanager.connect.retry-interval.ms</name>

<value>60000</value>

</property>

<property>

<name>yarn.resourcemanager.ha.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.cluster-id</name>

<value>rm-cluster</value>

</property>

<property>

<name>yarn.resourcemanager.ha.rm-ids</name>

<value>rm1,rm2</value>

</property>

<property>

<name>yarn.resourcemanager.ha.id</name>

<value>rm1</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm1</name>

<value>hadoop01</value>

</property>

<property>

<name>yarn.resourcemanager.hostname.rm2</name>

<value>hadoop02</value>

</property>

<property>

<name>yarn.resourcemanager.recovery.enabled</name>

<value>true</value>

</property>

<property>

<name>yarn.resourcemanager.store.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>

</property>

<property>

<name>yarn.resourcemanager.zk-address</name>

<value>hadoop01:2190,hadoop02:2190,hadoop03:2190,hadoop04:2190,hadoop05:2190</value>

</property>

<property>

<name>yarn.resourcemanager.address.rm1</name>

<value>${yarn.resourcemanager.hostname.rm1}:23140</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address.rm1</name>

<value>${yarn.resourcemanager.hostname.rm1}:23130</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.https.address.rm1</name>

<value>${yarn.resourcemanager.hostname.rm1}:23189</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm1</name>

<value>${yarn.resourcemanager.hostname.rm1}:23188</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address.rm1</name>

<value>${yarn.resourcemanager.hostname.rm1}:23125</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address.rm1</name>

<value>${yarn.resourcemanager.hostname.rm1}:23141</value>

</property>

<property>

<name>yarn.resourcemanager.address.rm2</name>

<value>${yarn.resourcemanager.hostname.rm2}:23140</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address.rm2</name>

<value>${yarn.resourcemanager.hostname.rm2}:23130</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.https.address.rm2</name>

<value>${yarn.resourcemanager.hostname.rm2}:23189</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address.rm2</name>

<value>${yarn.resourcemanager.hostname.rm2}:23188</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address.rm2</name>

<value>${yarn.resourcemanager.hostname.rm2}:23125</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address.rm2</name>

<value>${yarn.resourcemanager.hostname.rm2}:23141</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.class</name>

<value>org.apache.hadoop.yarn.server.resourcemanager.scheduler.fair.FairScheduler</value>

</property>

<property>

<name>yarn.scheduler.fair.allocation.file</name>

<value>${yarn.home.dir}/etc/hadoop/fairscheduler.xml</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>/data/hadoop/mydata/yarn_local</value>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value>/data/hadoop/mydata/yarn_log</value>

</property>

<property>

<name>yarn.nodemanager.remote-app-log-dir</name>

<value>/data/hadoop/mydata/yarn_remotelog</value>

</property>

<property>

<name>yarn.app.mapreduce.am.staging-dir</name>

<value>/data/hadoop/mydata/yarn_userstag</value>

</property>

<property>

<name>mapreduce.jobhistory.intermediate-done-dir</name>

<value>/data/hadoop/mydata/yarn_intermediatedone</value>

</property>

<property>

<name>mapreduce.jobhistory.done-dir</name>

<value>/data/hadoop/mydata/yarn_done</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<name>yarn.nodemanager.resource.memory-mb</name>

<value>2048</value>

</property>

<property>

<name>yarn.nodemanager.vmem-pmem-ratio</name>

<value>4.2</value>

</property>

<property>

<name>yarn.nodemanager.resource.cpu-vcores</name>

<value>2</value>

</property>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<description>Classpath for typical applications.</description>

<name>yarn.application.classpath</name>

<value>

$HADOOP_HOME/etc/hadoop,

$HADOOP_HOME/share/hadoop/common/*,

$HADOOP_HOME/share/hadoop/common/lib/*,

$HADOOP_HOME/share/hadoop/hdfs/*,

$HADOOP_HOME/share/hadoop/hdfs/lib/*,

$HADOOP_HOME/share/hadoop/mapreduce/*,

$HADOOP_HOME/share/hadoop/mapreduce/lib/*,

$HADOOP_HOME/share/hadoop/yarn/*,

$HADOOP_HOME/share/hadoop/yarn/lib/*

</value>

</property>

</configuration>

Edit fairscheduler.xml:

[root@hadoop01 hadoop]# vi fairscheduler.xml

<?xml version="1.0"?>

<allocations>

<queue name="news">

<minResources>1024 mb, 1 vcores </minResources>

<maxResources>1536 mb, 1 vcores </maxResources>

<maxRunningApps>5</maxRunningApps>

<minSharePreemptionTimeout>300</minSharePreemptionTimeout>

<weight>1.0</weight>

<aclSubmitApps>root,yarn,search,hdfs</aclSubmitApps>

</queue>

<queue name="crawler">

<minResources>1024 mb, 1 vcores</minResources>

<maxResources>1536 mb, 1 vcores</maxResources>

</queue>

<queue name="map">

<minResources>1024 mb, 1 vcores</minResources>

<maxResources>1536 mb, 1 vcores</maxResources>

</queue>

</allocations>

Create the directories referenced in the XML configuration:

mkdir -p /data/hadoop/mydata/yarn
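
The configuration files above also name several other local directories under /data/hadoop/mydata (name, data, journal, yarn_local, yarn_log, mr_temp). The daemons normally create them on first use, so the following is only a sketch for readers who prefer to create them up front. `make_local_dirs` is a made-up helper, and the paths for remote logs, staging and job history are left out on the assumption that they refer to HDFS rather than the local disk.

```shell
# Create the local directories named in the configuration under <base>.
# Usage: make_local_dirs <base>
make_local_dirs() {
    base=$1
    for d in name data journal yarn_local yarn_log mr_temp; do
        mkdir -p "$base/$d" || return 1
    done
}

# Demonstration against a throwaway directory; on the cluster the base
# would be /data/hadoop/mydata.
demo=$(mktemp -d)
make_local_dirs "$demo"
ls "$demo"
```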

4. Unpack ZooKeeper and set the environment variables

[root@hadoop01 ~]# tar zxvf zookeeper-3.4.6.tar.gz

[root@hadoop01 ~]# mv zookeeper-3.4.6 /data/zookeeper

[root@hadoop01 ~]# vi /etc/profile

##zookeeper

export ZOOKEEPER_HOME=/data/zookeeper

export PATH=$PATH:$ZOOKEEPER_HOME/bin:$ZOOKEEPER_HOME/conf

[root@hadoop01 ~]# source /etc/profile

5. Edit the ZooKeeper configuration file

[root@hadoop01 ~]# cd /data/zookeeper/conf/

[root@hadoop01 conf]# cp zoo_sample.cfg zoo.cfg

[root@hadoop01 conf]# vi zoo.cfg

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=/data/hadoop/mydata/zookeeper

dataLogDir=/data/hadoop/mydata/zookeeperlog

# the port at which the clients will connect

clientPort=2190

server.1=hadoop01:2888:3888

server.2=hadoop02:2888:3888

server.3=hadoop03:2888:3888

server.4=hadoop04:2888:3888

server.5=hadoop05:2888:3888

# the maximum number of client connections.

# increase this if you need to handle more clients

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

Create the directories:

mkdir /data/hadoop/mydata/zookeeper

mkdir /data/hadoop/mydata/zookeeperlog

6. Copy the configured hadoop and zookeeper directories to the other four nodes

[root@hadoop01 ~]# scp -r /data/hadoop hadoop02:/data/

[root@hadoop01 ~]# scp -r /data/hadoop hadoop03:/data/

[root@hadoop01 ~]# scp -r /data/hadoop hadoop04:/data/

[root@hadoop01 ~]# scp -r /data/hadoop hadoop05:/data/

[root@hadoop01 ~]# scp -r /data/zookeeper hadoop02:/data/

[root@hadoop01 ~]# scp -r /data/zookeeper hadoop03:/data/

[root@hadoop01 ~]# scp -r /data/zookeeper hadoop04:/data/

[root@hadoop01 ~]# scp -r /data/zookeeper hadoop05:/data/

Edit yarn-site.xml on hadoop02:

[root@hadoop02 hadoop]# cd /data/hadoop/etc/hadoop/

Change rm1 to rm2:

[root@hadoop02 hadoop]# vi yarn-site.xml

<name>yarn.resourcemanager.ha.id</name>

<value>rm2</value>

[root@hadoop01 ~]# vi /data/hadoop/mydata/zookeeper/myid

1

[root@hadoop02 ~]# vi /data/hadoop/mydata/zookeeper/myid

2

[root@hadoop03 ~]# vi /data/hadoop/mydata/zookeeper/myid

3

[root@hadoop04 ~]# vi /data/hadoop/mydata/zookeeper/myid

4

[root@hadoop05 ~]# vi /data/hadoop/mydata/zookeeper/myid

5
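
Each myid value must match the `server.N` number for that host in zoo.cfg. Since the host names here end in that number, the ID can be derived from the host name. This is a sketch that assumes the hadoopNN naming used in this article; `myid_for_host` is an illustrative name.

```shell
# Map a host name such as "hadoop03" to its ZooKeeper id "3".
myid_for_host() {
    n=${1#hadoop}   # strip the "hadoop" prefix -> "03"
    n=${n#0}        # strip one leading zero    -> "3"
    printf '%s\n' "$n"
}

myid_for_host hadoop03
# On each node this could feed the myid file, for example:
#   myid_for_host "$(hostname -s)" > /data/hadoop/mydata/zookeeper/myid
```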

7. Start ZooKeeper

Run zkServer.sh start on all five nodes:

[root@hadoop01 ~]# zkServer.sh start

[root@hadoop01 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /data/zookeeper/bin/../conf/zoo.cfg

Mode: follower

[root@hadoop03 ~]# zkServer.sh status

ZooKeeper JMX enabled by default

Using config: /data/zookeeper/bin/../conf/zoo.cfg

Mode: leader

Under normal conditions exactly one node reports the leader mode; the others are followers.
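
The single-leader condition can be checked by counting the `Mode: leader` lines across the status output of all nodes. A minimal sketch follows; `count_leaders` is a hypothetical helper that reads the collected output on standard input.

```shell
# Count "Mode: leader" lines in concatenated `zkServer.sh status` output.
# "|| true" keeps the exit status at 0 when grep finds no match.
count_leaders() {
    grep -c '^Mode: leader' || true
}

# Demonstration with canned output from three nodes.
printf 'Mode: follower\nMode: leader\nMode: follower\n' | count_leaders

# On the cluster the input could be gathered over SSH, for example:
#   for h in hadoop01 hadoop02 hadoop03 hadoop04 hadoop05; do
#       ssh "$h" /data/zookeeper/bin/zkServer.sh status 2>/dev/null
#   done | count_leaders
```

A result other than 1 suggests the ensemble has not formed a quorum yet or that a node is misconfigured.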

8. Format the HA state in the ZooKeeper cluster

Run the command on hadoop01:

[root@hadoop01 ~]# hdfs zkfc -formatZK

9. Start the JournalNode processes

Start one on every node (all five):

[root@hadoop01 ~]# cd /data/hadoop/sbin/

[root@hadoop01 sbin]# ./hadoop-daemon.sh start journalnode

10. Format the NameNode

Run the command on hadoop01:

[root@hadoop01 ~]# hdfs namenode -format

11. Start the NameNode

Run the commands on hadoop01:

[root@hadoop01 ~]# cd /data/hadoop/sbin/

[root@hadoop01 sbin]# ./hadoop-daemon.sh start namenode

12. Sync the freshly formatted NameNode metadata to the standby NameNode (run on hadoop02)

[root@hadoop02 ~]# hdfs namenode -bootstrapStandby

13. Start the NameNode on hadoop02

[root@hadoop02 ~]# cd /data/hadoop/sbin/

[root@hadoop02 sbin]# ./hadoop-daemon.sh start namenode

14. Start all DataNodes

Run on every node listed in slaves:

[root@hadoop01 ~]# cd /data/hadoop/sbin/

[root@hadoop01 sbin]# ./hadoop-daemon.sh start datanode

15. Start YARN

Run the commands on hadoop01:

[root@hadoop01 ~]# cd /data/hadoop/sbin/

[root@hadoop01 sbin]# ./start-yarn.sh

16. Start ZKFC

Start it on both hadoop01 and hadoop02:

[root@hadoop01 ~]# cd /data/hadoop/sbin/

[root@hadoop01 sbin]# ./hadoop-daemon.sh start zkfc

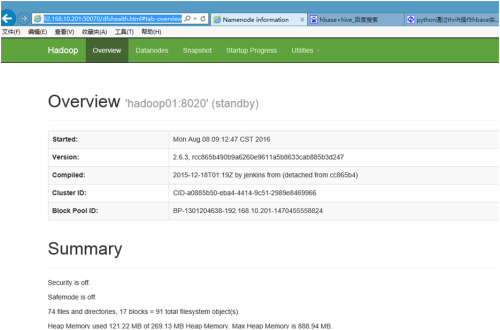

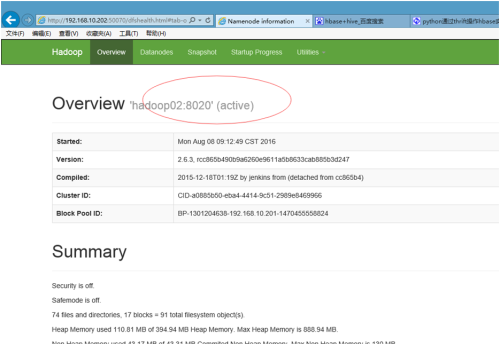

17. Result of a successful start
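
Besides the web pages, the HA state can be confirmed from the command line: `hdfs haadmin -getServiceState nn1` (and `nn2`) prints `active` or `standby`, and exactly one NameNode should be active. The sketch below checks that condition; `exactly_one_active` is an illustrative helper, and the demonstration uses fixed values instead of calling the cluster.

```shell
# Succeed only when exactly one of the given states is "active".
exactly_one_active() {
    active=0
    for state in "$@"; do
        if [ "$state" = "active" ]; then
            active=$((active + 1))
        fi
    done
    [ "$active" -eq 1 ]
}

# On the cluster the states would come from the real CLI, for example:
#   exactly_one_active "$(hdfs haadmin -getServiceState nn1)" \
#                      "$(hdfs haadmin -getServiceState nn2)"
if exactly_one_active active standby; then
    echo "HA state looks healthy"
fi
```

The same check applies to the ResourceManagers with `yarn rmadmin -getServiceState rm1` and `rm2`.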

III. Install HBase HA

1. Unpack HBase and edit the configuration files

[root@hadoop01 ~]# tar zxvf hbase-1.0.2-bin.tar.gz

[root@hadoop01 ~]# mv hbase-1.0.2 /data/hbase

Set the environment variables:

[root@hadoop01 ~]# vi /etc/profile

##hbase

export HBASE_HOME=/data/hbase

export PATH=$PATH:$HBASE_HOME/bin

[root@hadoop01 ~]# source /etc/profile

[root@hadoop01 ~]# cd /data/hbase/conf/

[root@hadoop01 conf]# vi hbase-env.sh

# The java implementation to use. Java 1.7+ required.

export JAVA_HOME="/opt/jdk"

# Extra Java CLASSPATH elements. Optional.

# Be sure to set the following; otherwise HMaster will fail to start

export HBASE_CLASSPATH=/data/hadoop/etc/hadoop

# Where log files are stored. $HBASE_HOME/logs by default.

export HBASE_LOG_DIR=/data/hbase/logs

# Tell HBase whether it should manage it's own instance of Zookeeper or not.

export HBASE_MANAGES_ZK=false

Edit hbase-site.xml:

[root@hadoop01 conf]# vi hbase-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

/**

*

* Licensed to the Apache Software Foundation (ASF) under one

* or more contributor license agreements. See the NOTICE file

* distributed with this work for additional information

* regarding copyright ownership. The ASF licenses this file

* to you under the Apache License, Version 2.0 (the

* "License"); you may not use this file except in compliance

* with the License. You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

-->

<configuration>

<property>

<name>hbase.rootdir</name>

<value>hdfs://cluster/hbase</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.tmp.dir</name>

<value>/data/hbase/tmp</value>

</property>

<property>

<name>hbase.master.port</name>

<value>60000</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/data/hadoop/mydata/zookeeper</value>

</property>

<property>

<name>hbase.zookeeper.quorum</name>

<value>hadoop01,hadoop02,hadoop03,hadoop04,hadoop05</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2190</value>

</property>

<property>

<name>zookeeper.session.timeout</name>

<value>120000</value>

</property>

<property>

<name>hbase.regionserver.restart.on.zk.expire</name>

<value>true</value>

</property>

</configuration>

[root@hadoop01 conf]# vi regionservers

hadoop01

hadoop02

hadoop03

hadoop04

hadoop05

Create the directory:

mkdir /data/hbase/tmp

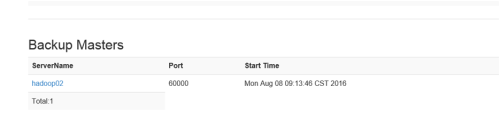

Add a backup master:

[root@hadoop01 conf]# vi backup-masters

hadoop02

Configuration is now complete.

2. Copy the files to the other servers

[root@hadoop01 conf]# scp -r /data/hbase hadoop02:/data/

[root@hadoop01 conf]# scp -r /data/hbase hadoop03:/data/

[root@hadoop01 conf]# scp -r /data/hbase hadoop04:/data/

[root@hadoop01 conf]# scp -r /data/hbase hadoop05:/data/

3. Start HBase

Run the command on hadoop01:

[root@hadoop01 conf]# start-hbase.sh

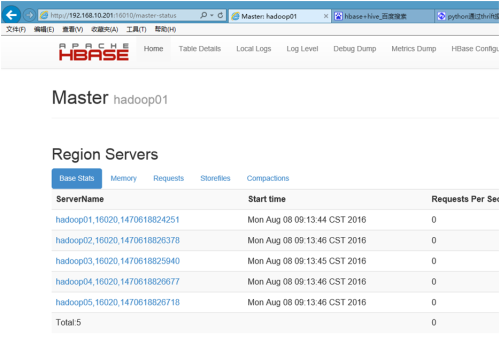

4. Startup result

The running processes can be checked with jps:

[root@hadoop01 conf]# jps

2540 NodeManager

1686 QuorumPeerMain

2134 JournalNode

2342 DFSZKFailoverController

3041 HMaster

1933 DataNode

3189 HRegionServer

2438 ResourceManager

7848 Jps

1827 NameNode
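
The jps listing above can be compared against the daemons expected on hadoop01. The following is a sketch; `missing_daemons` is a made-up helper and its daemon list is taken from the output shown above, so it applies to hadoop01 only (other nodes run fewer services).

```shell
# Print each expected daemon that is absent from `jps` output on stdin.
missing_daemons() {
    out=$(cat)
    for d in NameNode DataNode JournalNode DFSZKFailoverController \
             ResourceManager NodeManager QuorumPeerMain HMaster HRegionServer; do
        # -w matches whole words, so "NameNode" does not match a longer name.
        printf '%s\n' "$out" | grep -qw "$d" || echo "$d"
    done
}

# Demonstration with a shortened listing; on hadoop01 use: jps | missing_daemons
printf '1827 NameNode\n1933 DataNode\n' | missing_daemons
```

An empty result means every expected daemon was found.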

Subsequent startup procedure

Run on every node (all five):

[root@hadoop01 ~]# zkServer.sh start

Then start HDFS and YARN from hadoop01:

[root@hadoop01 ~]# cd /data/hadoop/sbin/

[root@hadoop01 sbin]# ./start-dfs.sh

[root@hadoop01 sbin]# ./start-yarn.sh

Finally, start HBase:

[root@hadoop01 sbin]# start-hbase.sh

Shutdown procedure

Stop HBase first:

stop-hbase.sh

Then stop YARN and HDFS from hadoop01:

[root@hadoop01 ~]# cd /data/hadoop/sbin/

[root@hadoop01 sbin]# ./stop-yarn.sh

[root@hadoop01 sbin]# ./stop-dfs.sh
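
The start and stop sequences mirror each other: HDFS, YARN, HBase on the way up, and the reverse on the way down. The sketch below wraps both in functions, assuming the install paths used in this article. `RUN`, `start_cluster` and `stop_cluster` are illustrative names, and by default the commands are printed rather than executed.

```shell
# RUN defaults to "echo" (dry run); set RUN= (empty) to execute for real.
RUN=${RUN-echo}

start_cluster() {
    # ZooKeeper must already be running on all five nodes.
    $RUN /data/hadoop/sbin/start-dfs.sh
    $RUN /data/hadoop/sbin/start-yarn.sh
    $RUN /data/hbase/bin/start-hbase.sh
}

stop_cluster() {
    # Reverse order: HBase first, then YARN, then HDFS.
    $RUN /data/hbase/bin/stop-hbase.sh
    $RUN /data/hadoop/sbin/stop-yarn.sh
    $RUN /data/hadoop/sbin/stop-dfs.sh
}

start_cluster
stop_cluster
```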