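Before crawling this site, I tried crawling comics from several others, but ran into a lot of anti-scraping restrictions. Some sites append a dynamic parameter to the image URL that changes every second, so a link fetched one second is already invalid the next. On others the image URL stays the same, but frequent requests get a 403 response. I finally found a comic site without these restrictions, so here is a demonstration of a selenium crawler.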

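    # -*- coding:utf-8 -*-
    # crawl kuku漫畫 (Python 2, selenium + PhantomJS)
    __author__ = 'fengzhankui'

    import os
    import urllib2

    from selenium import webdriver
    from selenium.webdriver.common.desired_capabilities import DesiredCapabilities

    # Local helper module (not a library): pcUserAgent is expected to map a
    # browser name to a 'Header-Name:value' string.
    import chrom


    class getManhua(object):

        def __init__(self):
            self.num = 5  # number of pages to download
            self.starturl = 'http://comic.kukudm.com/comiclist/2154/51850/1.htm'
            self.browser = self.getBrowser()
            self.getPic(self.browser)

        def getBrowser(self):
            # Give PhantomJS a desktop Chrome User-Agent.
            dcap = dict(DesiredCapabilities.PHANTOMJS)
            dcap["phantomjs.page.settings.userAgent"] = ("Mozilla/5.0 (Windows NT 6.1; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/59.0.3071.115 Safari/537.36")
            browser = webdriver.PhantomJS(desired_capabilities=dcap)
            # Set once: later element lookups wait up to 20 seconds.
            browser.implicitly_wait(20)
            try:
                browser.get(self.starturl)
            except Exception:
                print 'open url fail'
            return browser

        def getPic(self, browser):
            cartoonTitle = browser.title.split('_')[0]
            self.createDir(cartoonTitle)
            os.chdir(cartoonTitle)
            # Split 'Header-Name:value' into the header name and its value.
            agent = chrom.pcUserAgent.get('Firefox 4.0.1 - Windows')
            headerName, headerValue = agent.split(':', 1)
            # range(1, self.num + 1) covers pages 1..num, i.e. all 5 pages.
            for i in range(1, self.num + 1):
                i = str(i)
                imgurl = browser.find_element_by_tag_name('img').get_attribute('src')
                print imgurl
                request = urllib2.Request(imgurl)
                request.add_header(headerName, headerValue)
                response = urllib2.urlopen(request)
                with open('page' + i + '.jpg', 'wb') as fp:
                    fp.write(response.read())
                print 'page' + i + 'success'
                # The script treats the last <a> on the page as the next-page link.
                NextTag = browser.find_elements_by_tag_name('a')[-1].get_attribute('href')
                browser.get(NextTag)

        def createDir(self, cartoonTitle):
            if os.path.exists(cartoonTitle):
                print 'exists'
            else:
                os.mkdir(cartoonTitle)


    if __name__ == '__main__':
        getManhua()

To cope with anti-scraping mechanisms, I added request parameters on both sides: a User-Agent setting for the selenium browser and a request header for urllib2, since sites generally weed out crawlers by filtering requests. Here I only crawl 5 pages, starting from the start URL. To allow for slow image loading and network latency, I set a wait time of 20 seconds. The script takes a little while to get going when it first runs, so be patient.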
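As a rough sketch of the urllib2 half of this, here is the header handling in isolation. It is written for Python 3's `urllib.request` rather than `urllib2` (an assumption; the script above targets Python 2), and it assumes the user-agent entry is a single `Name:value` string. The helper names `parse_header` and `build_image_request`, the example user-agent string, and the example URL are all hypothetical.

```python
import urllib.request


def parse_header(header_line):
    """Split a 'Name:value' string into a (name, value) pair.

    Only the first colon is treated as the separator, so colons
    inside the value are preserved.
    """
    name, value = header_line.split(":", 1)
    return name.strip(), value.strip()


def build_image_request(image_url, header_line):
    """Build a Request for image_url carrying the given header.

    Nothing is sent here; pass the result to urllib.request.urlopen
    to actually download the image.
    """
    name, value = parse_header(header_line)
    request = urllib.request.Request(image_url)
    request.add_header(name, value)
    return request


# Hypothetical values, for illustration only.
EXAMPLE_AGENT = "User-Agent:Mozilla/5.0 (Windows NT 6.1; rv:2.0.1) Gecko/20100101 Firefox/4.0.1"
EXAMPLE_URL = "http://example.com/page1.jpg"

request = build_image_request(EXAMPLE_URL, EXAMPLE_AGENT)
# urllib stores header names capitalized, so look it up as 'User-agent'.
print(request.get_header("User-agent"))
```

The point of splitting once and unpacking both halves is that the header name and the header value come from different parts of the string; passing the same half for both would send a header whose value is just its own name.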

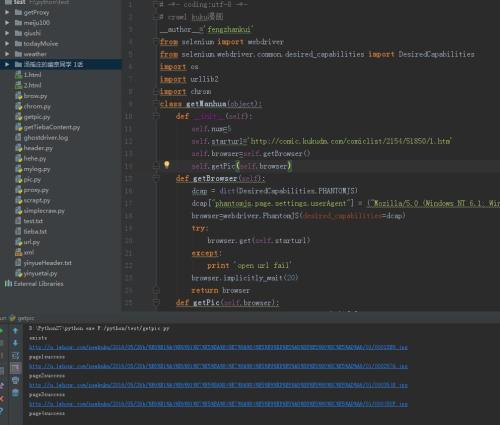

The screenshot above shows the script running.