This post continues from the previous one, "Deploying and Scaling a Kubernetes Cluster with Rancher: Basics, Part 1".

7. Configuring Redis with a ConfigMap

redis-config

maxmemory 2mb
maxmemory-policy allkeys-lru

# kubectl create configmap example-redis-config --from-file=./redis-config

# kubectl get configmap example-redis-config -o yaml
apiVersion: v1
data:
  redis-config: |
    maxmemory 2mb
    maxmemory-policy allkeys-lru
kind: ConfigMap
metadata:
  creationTimestamp: 2017-07-12T13:27:56Z
  name: example-redis-config
  namespace: default
  resourceVersion: "45707"
  selfLink: /api/v1/namespaces/default/configmaps/example-redis-config
  uid: eab522fd-6705-11e7-94da-02672b869d7f

redis-pod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: redis
spec:
  containers:
  - name: redis
    image: kubernetes/redis:v1
    env:
    - name: MASTER
      value: "true"
    ports:
    - containerPort: 6379
    resources:
      limits:
        cpu: "0.1"
    volumeMounts:
    - mountPath: /redis-master-data
      name: data
    - mountPath: /redis-master
      name: config
  volumes:
    - name: data
      emptyDir: {}
    - name: config
      configMap:
        name: example-redis-config
        items:
        - key: redis-config
          path: redis.conf

# kubectl create -f redis-pod.yaml
pod "redis" created

# kubectl exec -it redis redis-cli
127.0.0.1:6379>
127.0.0.1:6379>
127.0.0.1:6379> config get maxmemory
1) "maxmemory"
2) "2097152"
127.0.0.1:6379> config get maxmemory-policy
1) "maxmemory-policy"
2) "allkeys-lru"
127.0.0.1:6379>
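The value 2097152 returned by config get maxmemory is simply the 2mb from redis-config expressed in bytes. As a minimal sketch, the conversion can be reproduced in shell. The helper name redis_size_to_bytes is hypothetical (it is not part of Redis or kubectl), and it assumes the unit convention documented in redis.conf, where kb/mb/gb are powers of 1024 and k/m/g are powers of 1000.

```shell
#!/bin/sh
# redis_size_to_bytes SIZE
# Hypothetical helper: convert a Redis memory size such as "2mb" to bytes.
# Assumed convention (from redis.conf): 1k = 1000, 1kb = 1024,
# 1m = 1000*1000, 1mb = 1024*1024, 1g = 1000^3, 1gb = 1024^3.
redis_size_to_bytes() {
    # Units are case-insensitive, so normalise to lower case first.
    size=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
    number=${size%%[a-z]*}      # leading digits, e.g. "2"
    unit=${size#"$number"}      # trailing unit, e.g. "mb" (may be empty)

    # Reject anything whose numeric part is not a plain integer.
    case "$number" in
        ''|*[!0-9]*) echo "not a size: $1" >&2; return 1 ;;
    esac

    case "$unit" in
        "")  factor=1 ;;
        k)   factor=1000 ;;
        kb)  factor=1024 ;;
        m)   factor=1000000 ;;
        mb)  factor=1048576 ;;
        g)   factor=1000000000 ;;
        gb)  factor=1073741824 ;;
        *)   echo "unknown unit: $unit" >&2; return 1 ;;
    esac

    echo $((number * factor))
}
```

Calling redis_size_to_bytes 2mb prints 2097152, which matches what redis-cli reported above.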

8. Managing Kubernetes objects with kubectl commands

# kubectl run nginx --image nginx
deployment "nginx" created

or

# kubectl create deployment nginx --image nginx

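Use kubectl create to create the objects defined in a configuration file

# kubectl create -f nginx.yaml

Delete the objects defined in two configuration files

# kubectl delete -f nginx.yaml -f redis.yaml

Update an object by overwriting its live configuration with the one in the file

# kubectl replace -f nginx.yaml

Process all object configuration files in the configs directory, creating new objects or patching existing ones

# kubectl apply -f configs/

Recursively process the object configuration files in subdirectories as well

# kubectl apply -R -f configs/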
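To get a feel for the difference between the last two commands before running them, it can help to list which files each one would pick up. The following is only a sketch: list_manifests is a hypothetical helper, and it assumes that kubectl considers files ending in .yaml, .yml or .json when given a directory.

```shell
#!/bin/sh
# list_manifests DIR [recursive]
# Hypothetical helper: print the files under DIR that look like object
# configuration files. Assumption: only .yaml, .yml and .json files count.
# Without "recursive" only DIR itself is searched (like `apply -f DIR`);
# with it, subdirectories are searched too (like `apply -R -f DIR`).
list_manifests() {
    dir=$1
    if [ "${2:-}" = "recursive" ]; then
        find "$dir" -type f \
            \( -name '*.yaml' -o -name '*.yml' -o -name '*.json' \) | LC_ALL=C sort
    else
        find "$dir" -maxdepth 1 -type f \
            \( -name '*.yaml' -o -name '*.yml' -o -name '*.json' \) | LC_ALL=C sort
    fi
}
```

For example, list_manifests configs shows the top-level files only, while list_manifests configs recursive also includes anything in nested directories.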

9. Deploying stateless applications

9.1 Running a stateless application with a Deployment

deployment.yaml

apiVersion: apps/v1beta1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  replicas: 2 # tells deployment to run 2 pods matching the template
  template: # create pods using pod definition in this template
    metadata:
      # unlike pod-nginx.yaml, the name is not included in the meta data as a unique name is
      # generated from the deployment name
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.7.9
        ports:
        - containerPort: 80

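# kubectl create -f https://k8s.io/docs/tasks/run-application/deployment.yaml

Note that Rancher 1.6.2 deploys Kubernetes 1.5.4, so the apiVersion in this file has to be changed to extensions/v1beta1 before it can be created on this cluster.

Kubernetes introduced apps/v1beta1.Deployment in 1.6 as the replacement for extensions/v1beta1.Deployment.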
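The version rule above can be written down as a small check. This is a sketch that encodes only what this post states (1.5 needs extensions/v1beta1, 1.6 introduced apps/v1beta1); deployment_api_version is a hypothetical helper name, and newer Kubernetes releases moved the Deployment API again, which the sketch deliberately ignores.

```shell
#!/bin/sh
# deployment_api_version SERVER_VERSION
# Hypothetical helper: print the Deployment apiVersion to use for a given
# Kubernetes server version, following the rule described in this post.
# Later API moves in newer Kubernetes releases are NOT modelled here.
deployment_api_version() {
    version=${1#v}                 # accept "v1.5.4" as well as "1.5.4"
    major=${version%%.*}           # "1"
    rest=${version#*.}             # "5.4"
    minor=${rest%%.*}              # "5"
    minor=${minor%%[!0-9]*}        # drop suffixes such as "+" if present

    if [ "$major" -gt 1 ] || [ "$minor" -ge 6 ]; then
        echo "apps/v1beta1"
    else
        echo "extensions/v1beta1"
    fi
}
```

With the cluster from this post, deployment_api_version 1.5.4 prints extensions/v1beta1.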

Display information about this Deployment

# kubectl describe deployment nginx-deployment

# kubectl get pods -l app=nginx

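Updating the Deployment

deployment-update.yaml

This file is identical to deployment.yaml except for the image:

image: nginx:1.8

# kubectl apply -f deployment-update.yaml

After the update is applied, Kubernetes first creates the new pods, then stops and deletes the old ones.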

# kubectl get pods -l app=nginx
# kubectl get pod nginx-deployment-148880595-9l07p -o yaml|grep image:
  - image: nginx:1.8
    image: nginx:1.8

The update succeeded.

Scale the application by increasing the number of replicas

# cp deployment-update.yaml deployment-scale.yaml

Edit deployment-scale.yaml:

replicas: 4

# kubectl apply -f deployment-scale.yaml
# kubectl get pods -l app=nginx
NAME                               READY     STATUS    RESTARTS   AGE
nginx-deployment-148880595-1tb5m   1/1       Running   0          26s
nginx-deployment-148880595-9l07p   1/1       Running   0          10m
nginx-deployment-148880595-lc113   1/1       Running   0          26s
nginx-deployment-148880595-z8l51   1/1       Running   0          10m
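When scripting a scale-up, it is handy to count how many pods are actually up rather than reading the table by eye. A minimal sketch follows; count_running is a hypothetical helper that parses the plain-text table printed by kubectl get pods, and it assumes the default column order shown above (NAME, READY, STATUS, ...).

```shell
#!/bin/sh
# count_running
# Hypothetical helper: read `kubectl get pods` table output on stdin and
# print how many pods are in STATUS "Running" with all containers ready.
# Assumes the default columns: NAME READY STATUS RESTARTS AGE.
count_running() {
    awk 'NR > 1 && $3 == "Running" {
             # READY looks like "1/1": ready containers / total containers
             split($2, ready, "/")
             if (ready[1] == ready[2]) n++
         }
         END { print n + 0 }'
}
```

A possible use is kubectl get pods -l app=nginx | count_running, which for the listing above would print 4, the value of replicas in deployment-scale.yaml.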

Delete the Deployment

# kubectl delete deployment nginx-deployment
deployment "nginx-deployment" deleted

The preferred way to create a replicated application is a Deployment, which in turn uses a ReplicaSet. Before Deployment and ReplicaSet were added to Kubernetes, replicated applications were configured with a ReplicationController.

9.2 Example: deploying a PHP guestbook backed by Redis

Step 1: Start a Redis master service

redis-master-deployment.yaml

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: redis-master
spec:
  replicas: 1
  template:
    metadata:
      labels:
        app: redis
        role: master
        tier: backend
    spec:
      containers:
      - name: master
        image: gcr.io/google_containers/redis:e2e  # or just image: redis
        resources:
          requests:
            cpu: 100m
            memory: 100Mi
        ports:
        - containerPort: 6379

# kubectl create -f redis-master-deployment.yaml

redis-master-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: redis-master
  labels:
    app: redis
    role: master
    tier: backend
spec:
  ports:
  - port: 6379
    targetPort: 6379
  selector:
    app: redis
    role: master
    tier: backend

# kubectl create -f redis-master-service.yaml

# kubectl get services redis-master
NAME           CLUSTER-IP    EXTERNAL-IP   PORT(S)    AGE
redis-master   10.43.10.96   <none>        6379/TCP   2m

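targetPort is the port on which the backend container receives traffic; port is the port through which other pods access the Service.

Kubernetes supports two primary modes of discovering a Service: environment variables and DNS.

Check the cluster DNS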

# kubectl --namespace=kube-system get rs -l k8s-app=kube-dns
NAME                  DESIRED   CURRENT   READY     AGE
kube-dns-1208858260   1         1         1         1d

# kubectl get pods -n=kube-system -l k8s-app=kube-dns
NAME                        READY     STATUS    RESTARTS   AGE
kube-dns-1208858260-c8dbv   4/4       Running   8          1d
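The two discovery modes map a Service name to a lookup key in different ways. For environment variables, Kubernetes upper-cases the Service name, turns dashes into underscores and appends _SERVICE_HOST or _SERVICE_PORT; for DNS, the Service is reachable under its own name. The sketch below illustrates both. The function names are hypothetical, and the cluster.local suffix is an assumption (it is the usual default cluster domain, but a cluster can be configured differently).

```shell
#!/bin/sh
# Hypothetical helpers illustrating how a Service name maps to the
# environment variables and the DNS name used for discovery.

# service_env_prefix NAME: "redis-master" -> "REDIS_MASTER"
service_env_prefix() {
    printf '%s' "$1" | tr '[:lower:]' '[:upper:]' | tr '-' '_'
}

# service_host_var NAME: name of the variable holding the cluster IP
service_host_var() {
    echo "$(service_env_prefix "$1")_SERVICE_HOST"
}

# service_port_var NAME: name of the variable holding the Service port
service_port_var() {
    echo "$(service_env_prefix "$1")_SERVICE_PORT"
}

# service_dns_name NAME [NAMESPACE]: fully qualified DNS name.
# Assumption: the cluster domain is the default "cluster.local".
service_dns_name() {
    echo "$1.${2:-default}.svc.cluster.local"
}
```

For the Service created above, service_host_var redis-master prints REDIS_MASTER_SERVICE_HOST, and service_dns_name redis-master prints redis-master.default.svc.cluster.local. Pods in the same namespace can also use the short name redis-master.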

Step 2: Start the Redis slave service

redis-slave.yaml

apiVersion: v1
kind: Service
metadata:
  name: redis-slave
  labels:
    app: redis
    role: slave
    tier: backend
spec:
  ports:
  - port: 6379
  selector:
    app: redis
    role: slave
    tier: backend
---
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: redis-slave
spec:
  replicas: 2
  template:
    metadata:
      labels:
        app: redis
        role: slave
        tier: backend
    spec:
      containers:
      - name: slave
        image: gcr.io/google_samples/gb-redisslave:v1
        resources:
          requests:
            cpu: 100m
            memory: 100Mi
        env:
        - name: GET_HOSTS_FROM
          value: dns
          # If your cluster config does not include a dns service, then to
          # instead access an environment variable to find the master
          # service's host, comment out the 'value: dns' line above, and
          # uncomment the line below:
          # value: env
        ports:
        - containerPort: 6379

# kubectl create -f redis-slave.yaml
service "redis-slave" created
deployment "redis-slave" created

# kubectl get deployments -l app=redis
NAME           DESIRED   CURRENT   UP-TO-DATE   AVAILABLE   AGE
redis-master   1         1         1            1           35m
redis-slave    2         2         2            2           5m

# kubectl get services -l app=redis
NAME           CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
redis-master   10.43.10.96     <none>        6379/TCP   31m
redis-slave    10.43.231.209   <none>        6379/TCP   6m

# kubectl get pods -l app=redis
NAME                           READY     STATUS    RESTARTS   AGE
redis-master-343230949-sc78h   1/1       Running   0          37m
redis-slave-132015689-rc1xf    1/1       Running   0          7m
redis-slave-132015689-vb0vl    1/1       Running   0          7m

Step 3: Start the guestbook frontend

frontend.yaml

apiVersion: v1
kind: Service
metadata:
  name: frontend
  labels:
    app: guestbook
    tier: frontend
spec:
  # if your cluster supports it, uncomment the following to automatically create
  # an external load-balanced IP for the frontend service.
  # type: LoadBalancer
  ports:
  - port: 80
  selector:
    app: guestbook
    tier: frontend
---
apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: frontend
spec:
  replicas: 3
  template:
    metadata:
      labels:
        app: guestbook
        tier: frontend
    spec:
      containers:
      - name: php-redis
        image: gcr.io/google-samples/gb-frontend:v4
        resources:
          requests:
            cpu: 100m
            memory: 100Mi
        env:
        - name: GET_HOSTS_FROM
          value: dns
          # If your cluster config does not include a dns service, then to
          # instead access environment variables to find service host
          # info, comment out the 'value: dns' line above, and uncomment the
          # line below:
          # value: env
        ports:
        - containerPort: 80

# kubectl create -f frontend.yaml
service "frontend" created
deployment "frontend" created

# kubectl get services -l tier
NAME           CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
frontend       10.43.39.188    <none>        80/TCP     3m
redis-master   10.43.10.96     <none>        6379/TCP   47m
redis-slave    10.43.231.209   <none>        6379/TCP   22m

# kubectl get deployments -l tier
NAME           DESIRED   CURRENT   UP-TO-DATE   AVAILABLE   AGE
frontend       3         3         3            3           3m
redis-master   1         1         1            1           53m
redis-slave    2         2         2            2           22m

# kubectl get pods -l tier
NAME                           READY     STATUS    RESTARTS   AGE
frontend-88237173-b7x03        1/1       Running   0          13m
frontend-88237173-qg6d7        1/1       Running   0          13m
frontend-88237173-zb827        1/1       Running   0          13m
redis-master-343230949-sc78h   1/1       Running   0          1h
redis-slave-132015689-rc1xf    1/1       Running   0          32m
redis-slave-132015689-vb0vl    1/1       Running   0          32m

guestbook.php

<?php

error_reporting(E_ALL);
ini_set('display_errors', 1);

require 'Predis/Autoloader.php';

Predis\Autoloader::register();

if (isset($_GET['cmd']) === true) {
  $host = 'redis-master';
  if (getenv('GET_HOSTS_FROM') == 'env') {
    $host = getenv('REDIS_MASTER_SERVICE_HOST');
  }
  header('Content-Type: application/json');
  if ($_GET['cmd'] == 'set') {
    $client = new Predis\Client([
      'scheme' => 'tcp',
      'host'   => $host,
      'port'   => 6379,
    ]);

    $client->set($_GET['key'], $_GET['value']);
    print('{"message": "Updated"}');
  } else {
    $host = 'redis-slave';
    if (getenv('GET_HOSTS_FROM') == 'env') {
      $host = getenv('REDIS_SLAVE_SERVICE_HOST');
    }
    $client = new Predis\Client([
      'scheme' => 'tcp',
      'host'   => $host,
      'port'   => 6379,
    ]);

    $value = $client->get($_GET['key']);
    print('{"data": "' . $value . '"}');
  }
} else {
  phpinfo();
} ?>

The redis-slave container contains a /run.sh script:

if [[ ${GET_HOSTS_FROM:-dns} == "env" ]]; then
  redis-server --slaveof ${REDIS_MASTER_SERVICE_HOST} 6379
else
  redis-server --slaveof redis-master 6379
fi

In the DNS case, the name redis-master is resolved through kube-dns.
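Both guestbook.php and /run.sh follow the same pattern: writes go to the master, reads go to the slaves, and GET_HOSTS_FROM decides whether the host comes from an environment variable or from a DNS name. The sketch below restates that decision as one shell function so it can be tried outside a container. redis_host_for is a hypothetical name and is not part of the guestbook images.

```shell
#!/bin/sh
# redis_host_for CMD
# Hypothetical helper mirroring the host selection in guestbook.php:
#   - CMD "set" talks to the master, anything else reads from a slave
#   - GET_HOSTS_FROM=env uses the *_SERVICE_HOST variables injected by
#     Kubernetes; any other value (default "dns") uses the Service name
redis_host_for() {
    if [ "$1" = "set" ]; then
        role=master
        env_host=${REDIS_MASTER_SERVICE_HOST:-}
    else
        role=slave
        env_host=${REDIS_SLAVE_SERVICE_HOST:-}
    fi

    if [ "${GET_HOSTS_FROM:-dns}" = "env" ]; then
        echo "$env_host"
    else
        echo "redis-$role"
    fi
}
```

With GET_HOSTS_FROM unset, redis_host_for set prints redis-master and redis_host_for get prints redis-slave, which are the names kube-dns resolves.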

There are two ways to reach the guestbook from outside the cluster: NodePort and LoadBalancer.

Update frontend.yaml

Set type: NodePort

# kubectl apply -f frontend.yaml

# kubectl get services -l tier
NAME           CLUSTER-IP      EXTERNAL-IP   PORT(S)        AGE
frontend       10.43.39.188    <nodes>       80:31338/TCP   4h
redis-master   10.43.10.96     <none>        6379/TCP       5h
redis-slave    10.43.231.209   <none>        6379/TCP       5h

The frontend Service now exposes port 31338 outside the cluster, and the guestbook can be reached on that port through any cluster node.
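In the PORT(S) column, 80:31338/TCP reads as service port, then node port, then protocol. A small sketch for pulling the node port out of that column follows. Both function names are hypothetical, and the 30000-32767 range is the Kubernetes default for NodePort allocation, which a cluster administrator may have changed.

```shell
#!/bin/sh
# node_port PORTS_COLUMN
# Hypothetical helper: extract the node port from a PORT(S) value such as
# "80:31338/TCP". Returns non-zero when there is no node port ("6379/TCP").
node_port() {
    mapping=${1%%/*}                    # "80:31338/TCP" -> "80:31338"
    case "$mapping" in
        *:*) echo "${mapping#*:}" ;;    # "80:31338"     -> "31338"
        *)   return 1 ;;
    esac
}

# in_default_nodeport_range PORT
# True when PORT lies in the default NodePort range (30000-32767).
# Assumption: the cluster uses the default range.
in_default_nodeport_range() {
    [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}
```

For the frontend Service above, node_port "80:31338/TCP" prints 31338, so the guestbook would be opened at port 31338 on the address of any node.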

Update frontend.yaml again

Set type: LoadBalancer

# kubectl apply -f frontend.yaml

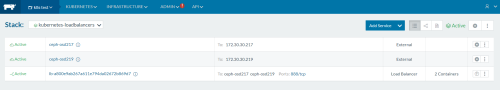

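When Kubernetes is deployed with Rancher, setting type to LoadBalancer makes the load balancer show up in the Rancher UI.

The default external port is 80. It can be changed, and the number of Rancher LB containers can be adjusted as well.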

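10. Deploying stateful applications

StatefulSets are used to deploy stateful applications and distributed systems. Before deploying a stateful application, dynamically provisioned persistent storage has to be set up first.

10.1 StatefulSet basics

Using a Ceph cluster as dynamically provisioned persistent storage for Kubernetes

10.2 Running a single-instance stateful application

10.3 Running a replicated, multi-instance stateful application

10.4 Example: deploying WordPress and MySQL

10.5 Example: deploying Cassandra

10.6 Example: deploying ZooKeeper

References: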

http://blog.kubernetes.io/2016/10/dynamic-provisioning-and-storage-in-kubernetes.html