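Stage 3: this time we test abnormal exits

Ways to simulate a crash:

Method 1: suspend the virtual machine

Method 2: echo c > /proc/sysrq-trigger

Restore all nodes (steps omitted)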

[root@web1 ~]# cman_tool status

……

Nodes: 4

Expected votes: 8

Total votes: 8

Node votes: 2

Quorum: 5

……

=====Step 1: simulate a failure on node web3=====

[root@web1 ~]# ssh root@web3 'echo c > /proc/sysrq-trigger'

Sep 22 22:40:18 web1 openais[4253]: [TOTEM] The token was lost in the OPERATIONAL state.

# No message about web3 leaving was received before this; compare with the log from the clean shutdown in Stage 2.

Sep 22 22:40:18 web1 openais[4253]: [TOTEM] Receive multicast socket recv buffer size (320000 bytes).

Sep 22 22:40:18 web1 openais[4253]: [TOTEM] Transmit multicast socket send buffer size

……

Sep 22 22:40:30 web1 openais[4253]: [SYNC ] This node is within the primary component and will provide service.

Sep 22 22:40:30 web1 openais[4253]: [TOTEM] entering OPERATIONAL state.

Sep 22 22:40:30 web1 openais[4253]: [CLM ] got nodejoin message 192.168.1.201

Sep 22 22:40:30 web1 openais[4253]: [CLM ] got nodejoin message 192.168.1.202

Sep 22 22:40:30 web1 openais[4253]: [CLM ] got nodejoin message 192.168.1.204

Sep 22 22:40:30 web1 openais[4253]: [CPG ] got joinlist message from node 1

Sep 22 22:40:30 web1 openais[4253]: [CPG ] got joinlist message from node 2

Sep 22 22:40:30 web1 openais[4253]: [CPG ] got joinlist message from node 4

Sep 22 22:40:35 web1 fenced[4272]: fencing node "web3.rocker.com"

Sep 22 22:40:35 web1 fenced[4272]: fence "web3.rocker.com" failed

Sep 22 22:40:40 web1 fenced[4272]: fencing node "web3.rocker.com"

Sep 22 22:40:40 web1 fenced[4272]: fence "web3.rocker.com" failed

Sep 22 22:40:45 web1 fenced[4272]: fencing node "web3.rocker.com"

Sep 22 22:40:45 web1 fenced[4272]: fence "web3.rocker.com" failed

# We use a manual fence device, so when the cluster detects that web3 has lost contact it asks the administrator to fence web3.

First, let's look at the node status:

[root@web2 ~]# clustat

Cluster Status for mycluster @ Mon Sep 22 22:42:33 2014

Member Status: Quorate # the cluster is available

Member Name ID Status

------ ---- ----------

web1.rocker.com 1 Online,rgmanager

web2.rocker.com 2 Online,Local, rgmanager

web3.rocker.com 3 Offline

web4.rocker.com 4 Online,rgmanager

Service Name Owner (Last) State

------- ---- ----- ------ -----

service:myservice web3.rocker.com started

# But the resource has not failed over yet!

Check the quorum:

[root@web2 ~]# cman_tool status

……

Nodes: 3

Expected votes: 8

Total votes: 6

Node votes: 2

Quorum: 5 # this is where the difference lies

# Total votes changed, meaning web3's votes no longer count, yet Quorum did not change.

……
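Comparing Total votes with Quorum by eye gets tedious across several nodes, so here is a minimal sketch that reads `cman_tool status` text on stdin and prints the comparison. `check_quorate` is a hypothetical helper, and the field labels are assumed to match the output shown above.

```shell
#!/bin/sh
# Read `cman_tool status` text on stdin and report whether Total votes
# still reaches Quorum. check_quorate is a hypothetical helper; the field
# labels are assumed to look like the output shown in this article.
check_quorate() {
    awk -F': *' '
        /^Total votes/ { total = $2 + 0 }
        /^Quorum:/     { quorum = $2 + 0 }  # "+ 0" drops trailing text such as "Activity blocked"
        END {
            if (total >= quorum) print "quorate total=" total " quorum=" quorum
            else                 print "inquorate total=" total " quorum=" quorum
        }'
}

# Sample values copied from the web2 output after web3 crashed.
printf '%s\n' 'Nodes: 3' 'Expected votes: 8' 'Total votes: 6' \
    'Node votes: 2' 'Quorum: 5' | check_quorate
```

On a live node the same function could be fed with `cman_tool status | check_quorate`.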

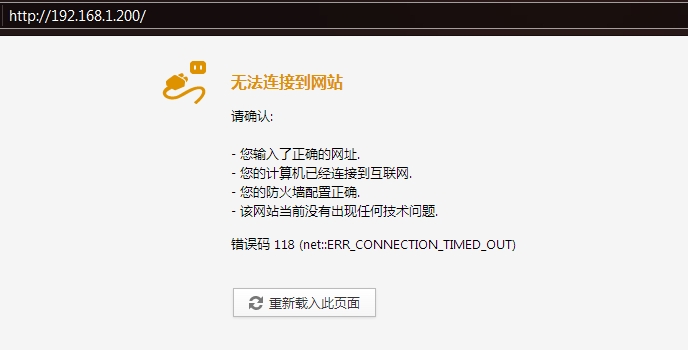

Quick test

=====Step 2: manually fence node web3=====

[root@web2 ~]# fence_ack_manual -n web3.rocker.com

Warning: If the node "web3.rocker.com" has not been manually fenced

(i.e. power cycled or disconnected from shared storage devices)

the GFS file system may become corrupted and all its data

unrecoverable! Please verify that the node shown above has

been reset or disconnected from storage.

Are you certain you want to continue? [yN] y

can't open /tmp/fence_manual.fifo: No such file or directory

[root@web2 ~]# touch /tmp/fence_manual.fifo

[root@web2 ~]# fence_ack_manual -n web3.rocker.com -e

Warning: If the node "web3.rocker.com" has not been manually fenced

(i.e. power cycled or disconnected from shared storage devices)

the GFS file system may become corrupted and all its data

unrecoverable! Please verify that the node shown above has

been reset or disconnected from storage.

Are you certain you want to continue? [yN] y

done

# The fence succeeded

# tail /var/log/messages

Sep 22 22:52:25 web1 fenced[4272]: fence "web3.rocker.com" overridden by administrator intervention

Check the node status again:

[root@web1 ~]# clustat

Cluster Status for mycluster @ Mon Sep 22 22:54:47 2014

Member Status: Quorate

Member Name ID Status

------ ---- ----------

web1.rocker.com 1 Online,Local, rgmanager

web2.rocker.com 2 Online,rgmanager

web3.rocker.com 3 Offline

web4.rocker.com 4 Online,rgmanager

Service Name Owner (Last) State

------- ---- ----- ------ -----

service:myservice web2.rocker.com started

# The resource has failed over

Test

Check the quorum:

[root@web1 ~]# cman_tool status

……

Nodes: 3

Expected votes: 8

Total votes: 6

Node votes: 2

Quorum: 5 # Quorum is unchanged because Expected votes is unchanged too

……

=====Step 3: knock out another one! This time we suspend the web2 virtual machine=====

Sep 22 22:57:14 web1 openais[4253]: [TOTEM] The token was lost in the OPERATIONAL state.

Sep 22 22:57:14 web1 openais[4253]: [TOTEM] Receive multicast socket recv buffer size (320000 bytes).

Sep 22 22:57:14 web1 openais[4253]: [TOTEM] Transmit multicast socket send buffer size (221184 bytes).

Sep 22 22:57:14 web1 openais[4253]: [TOTEM] entering GATHER state from 2.

……

Sep 22 22:57:26 web1 openais[4253]: [CMAN ] quorum lost, blocking activity

……

Sep 22 22:57:26 web1 openais[4253]: [TOTEM] entering OPERATIONAL state.

Sep 22 22:57:26 web1 openais[4253]: [CLM ] got nodejoin message 192.168.1.201

Sep 22 22:57:26 web1 ccsd[4247]: Cluster is not quorate. Refusing connection.

Sep 22 22:57:26 web1 openais[4253]: [CLM ] got nodejoin message 192.168.1.204

Sep 22 22:57:26 web1 ccsd[4247]: Error while processing connect: Connection refused

Sep 22 22:57:26 web1 openais[4253]: [CPG ] got joinlist message from node 1

Sep 22 22:57:26 web1 ccsd[4247]: Invalid descriptor specified (-111).

Sep 22 22:57:26 web1 openais[4253]: [CPG ] got joinlist message from node 4

Sep 22 22:57:26 web1 ccsd[4247]: Someone may be attempting something evil.

Sep 22 22:57:26 web1 ccsd[4247]: Error while processing get: Invalid request descriptor

# Finally, there it is! The cluster is blocked.

The shell on web1 shows this error:

Message from syslogd@ at Mon Sep 22 22:57:26 2014 ...

web1 clurgmgrd[4312]: <emerg> #1: Quorum Dissolved

[root@web1 ~]# clustat

Service states unavailable: Operation requires quorum

Cluster Status for mycluster @ Mon Sep 22 22:58:05 2014

Member Status: Inquorate # the cluster is blocked!

Member Name ID Status

------ ---- ----------

web1.rocker.com 1 Online,Local

web2.rocker.com 2 Offline

web3.rocker.com 3 Offline

web4.rocker.com 4 Online

# The resource was not failed over

[root@web1 ~]# cman_tool status

……

Nodes: 2

Expected votes: 8

Total votes: 4

Node votes: 2

Quorum: 5 Activity blocked

# Quorum > Total votes, so the cluster is blocked

……
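The vote counts from the Stage 3 steps can be replayed with a few lines of shell arithmetic. This is only a sketch: `cluster_state` is a hypothetical helper, and the Quorum of 5 is simply the value cman_tool reported above, not something the script derives.

```shell
#!/bin/sh
# Replay of the Stage 3 vote counts (4 nodes, 2 votes each, no qdisk).
# cluster_state is a hypothetical helper, not part of cman.
cluster_state() {   # $1 = nodes still alive, $2 = votes per node, $3 = quorum
    total=$(( $1 * $2 ))
    if [ "$total" -ge "$3" ]; then
        echo "total=$total Quorate"
    else
        echo "total=$total Inquorate"
    fi
}

# Quorum stays at 5 throughout because crashed nodes did not lower Expected votes.
echo "all up:    $(cluster_state 4 2 5)"
echo "web3 down: $(cluster_state 3 2 5)"
echo "web2 down: $(cluster_state 2 2 5)"
```

The last line reports Inquorate with 4 votes, which matches the "Activity blocked" status above.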

************************************************************************

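Stage 4: configuring qdisk

To guard against exactly this situation we need to configure a qdisk (we already did so earlier, when creating the cluster with system-config-cluster) and start the qdiskd service.

On the ss node, create two partitions on /dev/sdb (steps omitted).

On the ss node, edit the configuration file /etc/tgt/targets.conf and add a target: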

<target iqn.2008-09.com.example:rocker.use.target>

backing-store /dev/sdb

</target>

Start the iSCSI target service on ss:

[root@ss ~]# service tgtd start

Starting SCSI target daemon: Starting target framework daemon

Start the iSCSI initiator on all web nodes and have them discover and log in to the target:

[root@web1 ~]# for i in web1 web2 web3 web4;do ssh root@$i 'service iscsi start ; iscsiadm -m discovery -t sendtargets -p 192.168.1.205 ; iscsiadm -m node -p 192.168.1.205 -l';done

Check which block device the new disk was attached as:

[root@web1 ~]# dmesg

sd 1:0:0:1: Attached scsi disk sdb

sd 1:0:0:1: Attached scsi generic sg6 type 0

[root@web1 ~]# fdisk /dev/sdb -l

Disk /dev/sdb: 21.4 GB, 21474836480 bytes

255 heads, 63 sectors/track, 2610 cylinders

Units = cylinders of 16065 * 512 = 8225280 bytes

Device Boot Start End Blocks Id System

/dev/sdb1 1 63 506016 83 Linux

/dev/sdb2 64 126 506047+ 83 Linux

Create the qdisk partition:

Doing this on any one of the web nodes is enough.

[root@web1 ~]# mkqdisk -c /dev/sdb1 -l myqdisk

mkqdisk v0.6.0

Writing new quorum disk label 'myqdisk' to /dev/sdb1.

WARNING: About to destroy all data on /dev/sdb1; proceed [N/y] ? y

Initializing status block for node 1...

……

Initializing status block for node 16...

Start the qdiskd service on all nodes:

[root@web1 ~]# for i in web1 web2 web3 web4;do ssh root@$i 'service qdiskd start';done

Starting the Quorum Disk Daemon:[ OK ]

Starting the Quorum Disk Daemon:[ OK ]

Starting the Quorum Disk Daemon:[ OK ]

Starting the Quorum Disk Daemon:[ OK ]

Check the quorum:

[root@web1 ~]# cman_tool status

……

Nodes: 4

Expected votes: 8

Quorum device votes: 2 # the qdisk now contributes votes

Total votes: 10

Node votes: 2

Quorum: 6

……

=====Step 1: crash web3=====

[root@web3 ~]# echo c>/proc/sysrq-trigger

[root@web1 ~]# clustat

Cluster Status for mycluster @ Tue Sep 23 08:55:41 2014

Member Status: Quorate # the cluster is available

Member Name ID Status

------ ---- ---- ------

web1.rocker.com 1 Online, Local, rgmanager

web2.rocker.com 2 Online, rgmanager

web3.rocker.com 3 Offline

web4.rocker.com 4 Online, rgmanager

/dev/disk/by-id/scsi-1IET_00010001-p 0 Online, Quorum Disk

# The qdisk is up

Service Name Owner (Last) State

------- ---- ----- ------ -----

service:myservice web2.rocker.com started

# The resource has failed over

[root@web1 ~]# cman_tool status

……

Nodes: 3

Expected votes: 8

Quorum device votes: 2

Total votes: 8

Node votes: 2

Quorum: 6

……

=====Step 2: crash web2=====

[root@web2 ~]# echo c>/proc/sysrq-trigger

[root@web1 ~]# clustat

Cluster Status for mycluster @ Tue Sep 23 08:58:25 2014

Member Status: Quorate

Member Name ID Status

------ ---- ---- ------

web1.rocker.com 1 Online, Local,rgmanager

web2.rocker.com 2 Offline

web3.rocker.com 3 Offline

web4.rocker.com 4 Online, rgmanager

/dev/disk/by-id/scsi-1IET_00010001-p 0 Online, Quorum Disk

Service Name Owner (Last) State

------- ---- ----- ------ -----

service:myservice web1.rocker.com started

[root@web1 ~]# cman_tool status

……

Nodes: 2

Expected votes: 8

Quorum device votes: 2

Total votes: 6

Node votes: 2

Quorum: 6 # this time the cluster does not block

……

=====Step 3: crash node web1=====

[root@web1 ~]# echo c>/proc/sysrq-trigger

web4 clurgmgrd[4505]: <emerg> #1: Quorum Dissolved

[root@web4 ~]# clustat

Service states unavailable: Operation requires quorum

Cluster Status for mycluster @ Tue Sep 23 09:02:03 2014

Member Status: Inquorate # the cluster is blocked

Member Name ID Status

------ ---- ---- ------

web1.rocker.com 1 Offline

web2.rocker.com 2 Offline

web3.rocker.com 3 Offline

web4.rocker.com 4 Online, Local

/dev/disk/by-id/scsi-1IET_00010001-p 0 Online

# The resource was not failed over

[root@web4 ~]# cman_tool status

……

Nodes: 1

Expected votes: 8

Quorum device votes: 2

Total votes: 4

Node votes: 2

Quorum: 6 Activity blocked # blocked

……

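5. Conclusions:

1) On a clean shutdown, whether or not a resource failover is needed, the node that shut down is automatically removed from the cluster and Quorum is recalculated, so the cluster does not block just because fewer than half of the nodes remain;

2) On an abnormal crash, the cluster calls fence to isolate the node as soon as it detects the loss of contact; however, only Total votes is recalculated while Quorum stays where it was, so the cluster blocks once the surviving nodes no longer form a majority;

3) Once configured, the qdisk acts as a backstop for the vote count: it adds its votes to Total votes.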

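On the relationship between Quorum, votes and Total votes:

Take 4 nodes with 2 votes each and a qdisk with 2 votes.

Total votes = node votes + qdisk votes; here Total votes = 4×2+2 = 10.

Expected votes = the sum of all node votes when every node is healthy, plus the qdisk votes (cman_tool status lists the two parts separately, as "Expected votes: 8" and "Quorum device votes: 2").

Quorum = expected votes/2 + 1; here Quorum = 10/2+1 = 6.

When a node is detected as cleanly shut down, the cluster recalculates both Total votes and Quorum. For example, if node3 shuts down, the cluster recalculates Total votes = 2×3+2 = 8 and Quorum = 8/2+1 = 5.

When a node loses contact with the cluster because of an abnormal shutdown, Total votes is recalculated but Quorum is not. For example, if node2 crashes, the cluster notifies the fence device to isolate it and then fails the resource over within the Failover Domain. Total votes = 2×3+2 = 8, but Quorum stays at 6. Once further crashes push Total votes below Quorum, the whole cluster blocks.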
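The two cases above, clean shutdown versus crash, can be written out as shell arithmetic. This is a sketch of the formulas as stated in this article, not of cman's actual implementation; `total_votes` and `quorum_for` are hypothetical names, and the vote values are the ones from the example.

```shell
#!/bin/sh
# Vote arithmetic as described in this article (illustrative names only).
NODE_VOTES=2
QDISK_VOTES=2

total_votes() {   # $1 = number of nodes still alive
    echo $(( $1 * NODE_VOTES + QDISK_VOTES ))
}

quorum_for() {    # $1 = vote count the quorum is derived from
    echo $(( $1 / 2 + 1 ))
}

full=$(total_votes 4)   # every node up
echo "all up:         total=$full quorum=$(quorum_for "$full")"

# Clean shutdown of one node: both values are recalculated.
t=$(total_votes 3)
echo "clean shutdown: total=$t quorum=$(quorum_for "$t")"

# Crash of one node: Total votes drops, Quorum keeps its original value.
echo "crash:          total=$t quorum=$(quorum_for "$full")"
```

The clean-shutdown line gives Quorum 5 and the crash line keeps Quorum 6, which is the difference the experiments showed.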

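From this one can infer that giving the qdisk enough votes should let the cluster keep working with only one server left. By the formula above, qdisk votes = 4 falls just short: Quorum = (8+4)/2+1 = 7, while one node plus the qdisk holds 2+4 = 6 votes. With qdisk votes = 5, Quorum = 13/2+1 = 7 (integer division) and the last node plus the qdisk reach 2+5 = 7.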
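That inference can be checked against the Quorum formula given above. A minimal sketch, assuming integer division and assuming the lone survivor still holds the qdisk votes; `min_qdisk_votes` is a hypothetical helper. For 4 nodes with 2 votes each it prints 5, because a 4-vote qdisk gives Quorum 7 against only 6 surviving votes.

```shell
#!/bin/sh
# Smallest qdisk vote count that keeps a single surviving node quorate,
# using Quorum = (all node votes + qdisk votes)/2 + 1 with integer division.
# min_qdisk_votes is a hypothetical helper for illustration only.
min_qdisk_votes() {   # $1 = number of nodes, $2 = votes per node
    q=0
    while :; do
        quorum=$(( ($1 * $2 + q) / 2 + 1 ))
        survivor=$(( $2 + q ))      # one node plus the qdisk
        if [ "$survivor" -ge "$quorum" ]; then
            echo "$q"
            return 0
        fi
        q=$((q + 1))
    done
}

min_qdisk_votes 4 2
```

With 1 vote per node the same search gives 3 for a 4-node cluster, that is, one less than the node count.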

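Some sources say that a cluster on a GFS file system will block once it is down to a single node. I don't understand the principle behind this, or how to set up an experiment to test it, so any pointers are welcome.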