Logstash log collection and analysis system

This version of the article is outdated; please see http://bbotte.com/ for the current one.

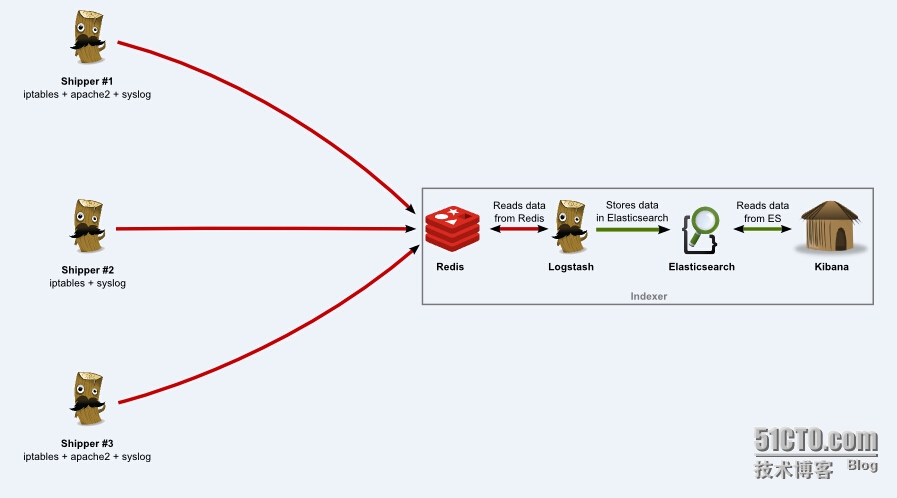

Logstash provides a powerful pipeline for storing, querying, and analyzing your logs. When using Elasticsearch as a backend data store and Kibana as a frontend reporting tool, Logstash acts as the workhorse. It includes an arsenal of built-in inputs, filters, codecs, and outputs, enabling you to harness some powerful functionality with a small amount of effort.

http://semicomplete.com/files/logstash/ Logstash collects the logs; it requires a Java runtime

logstash-1.4.2.tar.tar jdk-7u67-linux-x64.rpm

http://www.elasticsearch.org/overview/elkdownloads Elasticsearch search engine; this page also links to the documentation

http://www.elasticsearch.org/overview/kibana/installation/ Kibana provides the web interface

http://redis.io/download Redis (redis-2.8.19.tar.gz)

http://www.elasticsearch.org/guide/en/elasticsearch/reference/current/modules-plugins.html Elasticsearch plugins

https://github.com/logstash-plugins/logstash-patterns-core/tree/master/patterns Logstash grok patterns (regular expressions)

Documentation:

http://www.elasticsearch.org/guide/

http://logstash.net/docs/1.4.2/

https://github.com/elasticsearch/kibana/blob/master/README.md

http://kibana.logstash.es/content/

http://shgy.gitbooks.io/mastering-elasticsearch/content/

System: CentOS 6.5, 64-bit

Installed packages:

jdk-7u67-linux-x64.rpm

redis-2.8.19.tar.gz

logstash-1.4.2.tar.tar

elasticsearch-1.4.2.zip # please install the newer 1.4.4 instead (it fixes a security vulnerability); ideally keep the Logstash and Elasticsearch versions in step

kibana-3.1.2.zip

# Install Java and Redis

# rpm -ivh jdk-7u67-linux-x64.rpm

# /usr/java/jdk1.7.0_67/bin/java -version

# vim ~/.bashrc

export JAVA_HOME=/usr/java/jdk1.7.0_67

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=${JAVA_HOME}/bin:$PATH

# . ~/.bashrc

# java -version # verify Java

java version "1.7.0_67"

Java(TM) SE Runtime Environment (build 1.7.0_67-b01)

Java HotSpot(TM) 64-Bit Server VM (build 24.65-b04, mixed mode)

# tar -xzf redis-2.8.19.tar.gz

# cd redis-2.8.19

# make

# make install

# ./utils/install_server.sh

Port : 6379

Config file : /etc/redis/6379.conf

Log file : /var/log/redis_6379.log

Data dir : /var/lib/redis/6379

Executable : /usr/local/bin/redis-server

Cli Executable : /usr/local/bin/redis-cli

# service redis_6379 restart # (re)start Redis

# redis-cli ping # should reply PONG

# Install Logstash and Elasticsearch

# mkdir /var/www/logstash

# unzip elasticsearch-1.4.2.zip -d /var/www/logstash

# cd /var/www/logstash

# ln -s elasticsearch-1.4.2/ elasticsearch

# cd elasticsearch

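# ./bin/elasticsearch # start Elasticsearch in the foreground with the default config; 1.x runs in the foreground by default and rejects the old -f flag with "getopt: invalid option -- 'f'"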

[2015-02-09 16:15:24,502][INFO ][node ] [Amergin] version[1.4.2], pid[4718], build[927caff/2014-12-16T14:11:12Z]

[2015-02-09 16:15:24,502][INFO ][node ] [Amergin] initializing ...

[2015-02-09 16:15:24,518][INFO ][plugins ] [Amergin] loaded [], sites []

[2015-02-09 16:15:27,945][INFO ][node ] [Amergin] initialized

[2015-02-09 16:15:27,945][INFO ][node ] [Amergin] starting ...

[2015-02-09 16:15:28,232][INFO ][transport ] [Amergin] bound_address {inet[/0:0:0:0:0:0:0:0:9300]}, publish_address {inet[/192.168.10.1:9300]}

[2015-02-09 16:15:28,300][INFO ][discovery ] [Amergin] elasticsearch/mvrxUfixSPKQKzb3s_nFug

[2015-02-09 16:15:32,091][INFO ][cluster.service ] [Amergin] new_master [Amergin][mvrxUfixSPKQKzb3s_nFug][manager][inet[/192.168.10.1:9300]], reason: zen-disco-join (elected_as_master)

[2015-02-09 16:15:32,143][INFO ][http ] [Amergin] bound_address {inet[/0:0:0:0:0:0:0:0:9200]}, publish_address {inet[/192.168.10.1:9200]}

[2015-02-09 16:15:32,143][INFO ][node ] [Amergin] started

[2015-02-09 16:15:32,162][INFO ][gateway ] [Amergin] recovered [0] indices into cluster_state

# curl -X GET http://localhost:9200 # or open http://192.168.10.1:9200/ in a browser

{

"status" : 200,

"name" : "Amergin",

"cluster_name" : "elasticsearch",

"version" : {

"number" : "1.4.2",

"build_hash" : "927caff6f05403e936c20bf4529f144f0c89fd8c",

"build_timestamp" : "2014-12-16T14:11:12Z",

"build_snapshot" : false,

"lucene_version" : "4.10.2"

},

"tagline" : "You Know, for Search"

}
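If you want to script this check, here is a minimal Python 3 sketch (standard library only) that reads `version.number` from the root endpoint. The default URL and the function names are assumptions for illustration. `same_minor` compares only major.minor; that is also an assumption, chosen because the article itself suggests Elasticsearch 1.4.4 alongside Logstash 1.4.2.

```python
import json
import urllib.request


def parse_version(body: str) -> str:
    """Return version.number from the JSON body of GET /."""
    return json.loads(body)["version"]["number"]


def fetch_version(url: str = "http://localhost:9200", timeout: float = 5.0) -> str:
    """Query the root endpoint and return the Elasticsearch version string."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return parse_version(resp.read().decode("utf-8"))


def same_minor(es_version: str, logstash_version: str) -> bool:
    """True when both versions share major.minor (e.g. 1.4.x and 1.4.y)."""
    return es_version.split(".")[:2] == logstash_version.split(".")[:2]
```

Usage: with the node above running, `same_minor(fetch_version(), "1.4.2")` should be `True`.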

# tar -xzf logstash-1.4.2.tar.tar

# cd logstash-1.4.2

# ./bin/logstash -h # show help

# The following tests show how Logstash works:

# echo "`date` hello world"

Mon Feb 9 16:36:15 CST 2015 hello world

# Test Logstash with a stdin input and a stdout output:

# bin/logstash -e 'input { stdin { } } output { stdout {} }'

Mon Feb 9 16:36:15 CST 2015 hello world # paste this line in rather than typing it by hand

2015-02-09T08:36:23.190+0000 manager Mon Feb 9 16:36:15 CST 2015 hello world # the event as printed by Logstash
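To make the round trip concrete, here is a hedged Python sketch of the event Logstash builds from each input line. The field names (`message`, `@version`, `@timestamp`, `host`) are the ones visible in the Elasticsearch `_source` output further down; the helper names and the exact timestamp formatting are assumptions, not Logstash's own code.

```python
import socket
from datetime import datetime, timezone


def iso_millis(ts: datetime) -> str:
    """UTC time with millisecond precision, e.g. 2015-02-09T08:48:48.747Z."""
    return ts.strftime("%Y-%m-%dT%H:%M:%S.") + f"{ts.microsecond // 1000:03d}Z"


def make_event(line: str, host: str) -> dict:
    """Wrap one input line in the fields seen in the _source output."""
    return {
        "message": line.rstrip("\n"),
        "@version": "1",
        "@timestamp": iso_millis(datetime.now(timezone.utc)),
        "host": host,
    }


def format_line(event: dict) -> str:
    """Render timestamp, host and message, with +0000 as in the terminal output above."""
    timestamp = event["@timestamp"].replace("Z", "+0000")
    return f'{timestamp} {event["host"]} {event["message"]}'


print(format_line(make_event("Mon Feb 9 16:36:15 CST 2015 hello world\n", socket.gethostname())))
```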

# Test sending stdin input to Elasticsearch and viewing the stored data:

# /var/www/logstash/elasticsearch/bin/elasticsearch # start Elasticsearch at the same time (it runs in the foreground, so use another terminal)

# bin/logstash -e 'input { stdin { } } output { elasticsearch { host => localhost } }'

you know, for logs # type this line

# curl 'http://localhost:9200/_search?pretty' # show the data stored in Elasticsearch

{

"took" : 64,

"timed_out" : false,

"_shards" : {

"total" : 5,

"successful" : 5,

"failed" : 0

},

"hits" : {

"total" : 1,

"max_score" : 1.0,

"hits" : [ {

"_index" : "logstash-2015.02.09",

"_type" : "logs",

"_id" : "IFmPqi0dQjSNZR5-94NuHg",

"_score" : 1.0,

"_source":{"message":"you know, for logs","@version":"1","@timestamp":"2015-02-09T08:48:48.747Z","host":"manager"}

} ]

}

}
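The search response can also be read programmatically. A minimal sketch, assuming the body has been fetched (with curl as above, or with the hypothetical `search` helper): it pulls each hit's `_source.message` and reconstructs the daily index name, which Logstash by default derives from the UTC `@timestamp` as `logstash-%{+YYYY.MM.dd}`. All helper names are hypothetical.

```python
import json
import urllib.request
from datetime import datetime


def search(base_url: str = "http://localhost:9200") -> str:
    """Fetch the raw JSON body of GET /_search."""
    with urllib.request.urlopen(base_url + "/_search", timeout=5) as resp:
        return resp.read().decode("utf-8")


def messages_from_search(body: str) -> list:
    """Extract _source.message from each hit in a _search response."""
    hits = json.loads(body)["hits"]["hits"]
    return [hit["_source"].get("message") for hit in hits]


def daily_index(timestamp: str, prefix: str = "logstash") -> str:
    """Daily index name for a UTC @timestamp such as 2015-02-09T08:48:48.747Z."""
    ts = datetime.strptime(timestamp, "%Y-%m-%dT%H:%M:%S.%fZ")
    return f"{prefix}-{ts:%Y.%m.%d}"
```

Because the index date follows UTC, events logged in China (UTC+8) before 08:00 local time land in the previous day's index.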

# You’ve successfully stashed logs in Elasticsearch via Logstash

# Install an Elasticsearch plugin and try it out:

# cd /var/www/logstash/elasticsearch/bin/ # install the kopf plugin

# ./plugin -install lmenezes/elasticsearch-kopf

# Now test the kopf plugin:

# /var/www/logstash/elasticsearch/bin/elasticsearch

# bin/logstash -e 'input { stdin { } } output { elasticsearch { host => localhost } stdout { } }'

hello world

2015-02-09T09:07:35.590+0000 manager hello world

hello logstash

2015-02-09T09:09:26.981+0000 manager hello logstash

# curl 'http://localhost:9200/_search?pretty' # shows the events just entered, in structured form

# curl 'http://localhost:9200/_plugin/kopf/' # fetches the plugin page, but from the command line you will not see anything useful

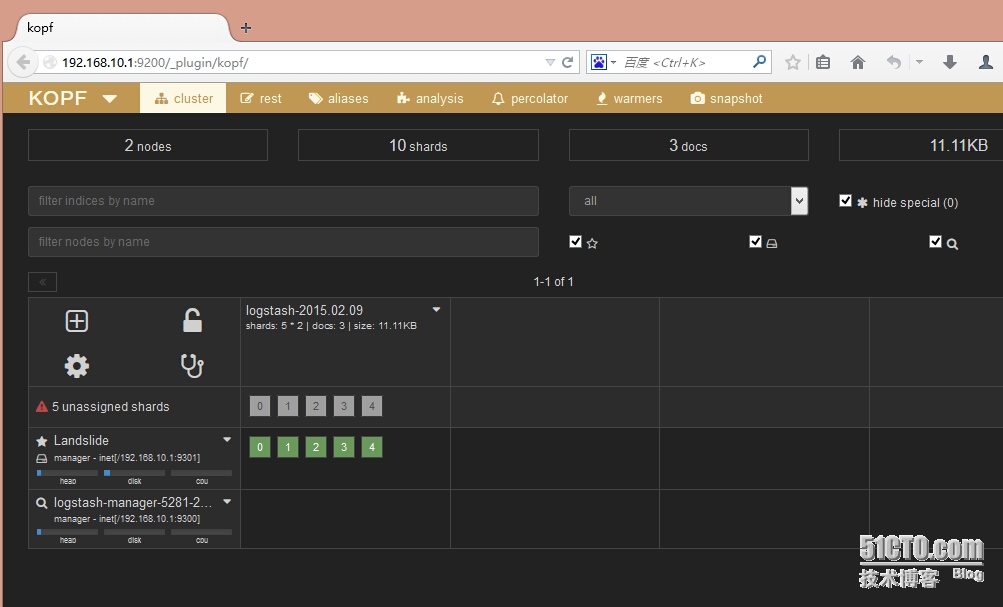

# Opening 192.168.10.1:9200/_plugin/kopf/ in a browser shows the interface above, where you can browse the data, settings, and mappings stored in Elasticsearch

There are many other good plugins you can install as well:

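es_head: mainly shows cluster health; its tabs also offer a simple form for submitting API requests. es_head can now be installed directly with elasticsearch/bin/plugin -install mobz/elasticsearch-head; then open http://$eshost:9200/_plugin/head/ in a browser to see the state of the cluster, nodes, indices, and shards.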

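bigdesk: provides real-time monitoring of node status, including the JVM, the Linux host, and Elasticsearch itself. It is very useful when tracking down performance problems, and can now also be installed directly with elasticsearch/bin/plugin -install lukas-vlcek/bigdesk; then open http://$eshost:9200/_plugin/bigdesk/ in a browser. Note that if you use bulk indexing and pick a long refresh interval, the indexing-per-second figure is inaccurate.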
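The cluster, node, and shard status that es_head displays can also be read from the `_cluster/health` API. A small Python sketch, assuming a node at http://localhost:9200; the function names and the wording of the summaries are illustrative only.

```python
import json
import urllib.request

STATUS_NOTES = {
    "green": "all primary and replica shards are allocated",
    "yellow": "all primaries allocated, some replicas are not (normal on a single node)",
    "red": "at least one primary shard is unallocated",
}


def health_summary(health: dict) -> str:
    """Summarise the JSON returned by GET /_cluster/health."""
    status = health["status"]
    return f'{health["cluster_name"]}: {status} ({STATUS_NOTES.get(status, "unknown status")})'


def fetch_health(base_url: str = "http://localhost:9200") -> dict:
    """Query the cluster health API and decode its JSON body."""
    with urllib.request.urlopen(base_url + "/_cluster/health", timeout=5) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

On a single node with the default of one replica per shard, `yellow` is expected, because the replicas have no second node to be allocated to.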

# Processing logs with Elasticsearch

# /var/www/logstash/elasticsearch/bin/elasticsearch -d -p /var/run/elasticsearch.pid # start Elasticsearch as a daemon (-d) and write its PID to the file given with -p

# Processing the Apache error log with Logstash:

# vi logstash-apache.conf

input {

file {

path => "/var/log/httpd/error_log"

start_position => beginning

}

}

filter {

if [path] =~ "error" {

mutate { replace => { "type" => "apache_error" } }

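# COMBINEDAPACHELOG is an access-log pattern: error_log lines will not match it, so these events are tagged _grokparsefailure and no timestamp field is extracted for the date filter below

grok {

match => { "message" => "%{COMBINEDAPACHELOG}" }

}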

}

date {

match => [ "timestamp" , "dd/MMM/yyyy:HH:mm:ss Z" ]

}

}

output {

elasticsearch {

host => localhost

}

stdout { codec => rubydebug }

}
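The date filter replaces `@timestamp` with the time parsed from the log line. Python's `strptime` format `%d/%b/%Y:%H:%M:%S %z` is a rough equivalent of the Joda pattern `dd/MMM/yyyy:HH:mm:ss Z` used above; the sketch below (the function name is hypothetical) shows the conversion to a UTC timestamp.

```python
from datetime import datetime, timezone


def apache_time_to_timestamp(value: str) -> str:
    """Parse e.g. '09/Feb/2015:16:36:15 +0800' into a UTC @timestamp string."""
    # %b expects English month abbreviations (default C locale)
    parsed = datetime.strptime(value, "%d/%b/%Y:%H:%M:%S %z")
    utc = parsed.astimezone(timezone.utc)
    return utc.strftime("%Y-%m-%dT%H:%M:%S.") + f"{utc.microsecond // 1000:03d}Z"
```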

# bin/logstash -f logstash-apache.conf

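# Wait about twenty seconds; if nothing is printed, open the log in vim, copy the last line and paste it at the end to simulate a new log entry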

# Logstash now reads the Apache error log and prints each event in the terminal; the events also appear at http://192.168.10.1:9200/_search?pretty in a browser
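Appending a line works because the file input remembers how far it has read in each file (stored in a sincedb file) and only emits lines written after that position; `start_position => beginning` only applies to files it has not seen before. The following is a simplified Python illustration of that bookkeeping, not Logstash's implementation; `read_new_lines` is a hypothetical name.

```python
import os


def read_new_lines(path: str, offset: int):
    """Return (complete lines written since offset, new offset)."""
    size = os.path.getsize(path)
    if size < offset:  # file truncated or rotated: start from the top again
        offset = 0
    with open(path, "rb") as fh:
        fh.seek(offset)
        data = fh.read()
    # consume only complete lines; a partial last line waits for its newline
    end = data.rfind(b"\n") + 1
    lines = data[:end].decode("utf-8", errors="replace").splitlines()
    return lines, offset + end
```

Persisting the returned offset between runs plays the role of the sincedb entry.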

# Continue testing: processing all Apache logs with Logstash:

# vi logstash-apache.conf

input {

file {

path => "/var/log/httpd/*_log"

}

}

filter {

if [path] =~ "access" {

mutate { replace => { type => "apache_access" } }

grok {

match => { "message" => "%{COMBINEDAPACHELOG}" }

}

date {

match => [ "timestamp" , "dd/MMM/yyyy:HH:mm:ss Z" ]

}

} else if [path] =~ "error" {

mutate { replace => { type => "apache_error" } }

} else {

mutate { replace => { type => "random_logs" } }

}

}

output {

elasticsearch { host => localhost }

stdout { codec => rubydebug }

}
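To see what the conditionals and grok do to a single event, here is a Python approximation. The regular expression is a simplified stand-in for `%{COMBINEDAPACHELOG}` (the real grok pattern is built from many sub-patterns and keeps the quotes around referrer and agent), and `route` only mirrors the `if / else if / else` branches above; both are assumptions for illustration.

```python
import re

# Simplified stand-in for %{COMBINEDAPACHELOG}
COMBINED = re.compile(
    r'(?P<clientip>\S+) (?P<ident>\S+) (?P<auth>\S+) '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?:(?P<verb>\S+) (?P<request>\S+)(?: HTTP/(?P<httpversion>[\d.]+))?|(?P<rawrequest>[^"]*))" '
    r'(?P<response>\d{3}) (?P<bytes>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<agent>[^"]*)"'
)


def route(event: dict) -> dict:
    """Set type from the file path and grok access-log lines, like the filter block."""
    path = event.get("path", "")
    if "access" in path:
        event["type"] = "apache_access"
        m = COMBINED.match(event["message"])
        if m:
            event.update({k: v for k, v in m.groupdict().items() if v is not None})
        else:
            event.setdefault("tags", []).append("_grokparsefailure")
    elif "error" in path:
        event["type"] = "apache_error"
    else:
        event["type"] = "random_logs"
    return event
```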

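# bin/logstash -f logstash-apache.conf

Explanation: the event lifecycle

Inputs, Outputs, Codecs, and Filters are the core configuration sections of Logstash. Logstash builds an event-processing pipeline that extracts data from your logs and stores it in Elasticsearch, which is the basis for querying that data efficiently.

Inputs

Inputs are how log data gets into Logstash. Commonly used inputs:

file: reads a file on the filesystem, much like the UNIX command "tail -0F".

syslog: listens on port 514 for syslog messages and parses them according to RFC3164.

redis: reads from a Redis server, using either channels (publish/subscribe) or lists. In a centralized Logstash setup Redis often acts as a "broker", holding a queue of events for Logstash to consume.

Filters

Filters are the intermediate processing stage of the Logstash pipeline. They are often combined to implement specific behavior for events that match particular conditions. Commonly used filters:

grok: parses unstructured text into structured fields. Grok is currently the best way to turn unstructured log data into something structured and queryable; with more than 120 built-in patterns, one will likely fit your needs.

mutate: changes an event's fields while it is being processed; you can rename, remove, replace, and modify fields.

drop: discards an event entirely, for example debug events.

clone: makes a copy of an event, optionally adding or removing fields.

geoip: adds geographic information about IP addresses (used for map visualizations in Kibana).

Outputs

Outputs are the final stage of the Logstash pipeline. An event can pass through multiple outputs, and once all outputs have finished, the event's lifecycle is complete. Commonly used outputs:

elasticsearch: the choice if you want to store your data efficiently in a form that is convenient and simple to query.

file: writes event data to a file on disk.

graphite: sends event data to Graphite (http://graphite.wikidot.com/), a popular open-source tool for storing and graphing metrics.

statsd: sends data to statsd, a service that listens for statistics such as counters and timers over UDP and sends aggregates to one or more backend services. If you already use statsd, this output is likely useful to you.

Codecs

Codecs are stream filters that can be configured as part of an input or an output. They let you separate the transport of your messages from their serialization. Popular codecs include json, msgpack, and plain (text).

json: encodes and decodes data in JSON format.

multiline: merges multiple lines into a single event, for example a Java exception message and its stack trace.

For the full configuration details, see the "plugin configuration" section of the Logstash documentation.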
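The lifecycle described above can be sketched as a tiny pipeline in Python: lines pass through a codec, then through each filter in order (a filter may drop an event or emit copies), and every surviving event is handed to every output. The multiline rule here (indented lines continue the previous one) and all function names are assumptions; the real multiline codec is configured with `pattern` and `what` options.

```python
from typing import Callable, Iterable, Iterator, List, Optional

Event = dict
Filter = Callable[[Event], List[Event]]  # 0 events = drop, 1 = pass, 2+ = clone


def multiline_codec(lines: Iterable[str]) -> Iterator[str]:
    """Join indented continuation lines (e.g. Java stack frames) onto the previous line."""
    buffer: Optional[str] = None
    for line in lines:
        if line[:1].isspace() and buffer is not None:
            buffer += "\n" + line
        else:
            if buffer is not None:
                yield buffer
            buffer = line
    if buffer is not None:
        yield buffer


def drop_debug(event: Event) -> List[Event]:
    """Like a drop filter guarded by a condition on the message."""
    return [] if "DEBUG" in event["message"] else [event]


def set_type(event: Event) -> List[Event]:
    """Like mutate { replace => { "type" => "app" } }."""
    event["type"] = "app"
    return [event]


def run_pipeline(lines: Iterable[str], filters: List[Filter],
                 outputs: List[Callable[[Event], None]]) -> None:
    for message in multiline_codec(lines):          # input + codec
        events = [{"message": message}]
        for f in filters:                            # filters, in order
            events = [out for ev in events for out in f(ev)]
        for ev in events:                            # every output sees every event
            for output in outputs:
                output(ev)
```

Usage: `run_pipeline(lines, [drop_debug, set_type], [print])` prints each surviving event.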

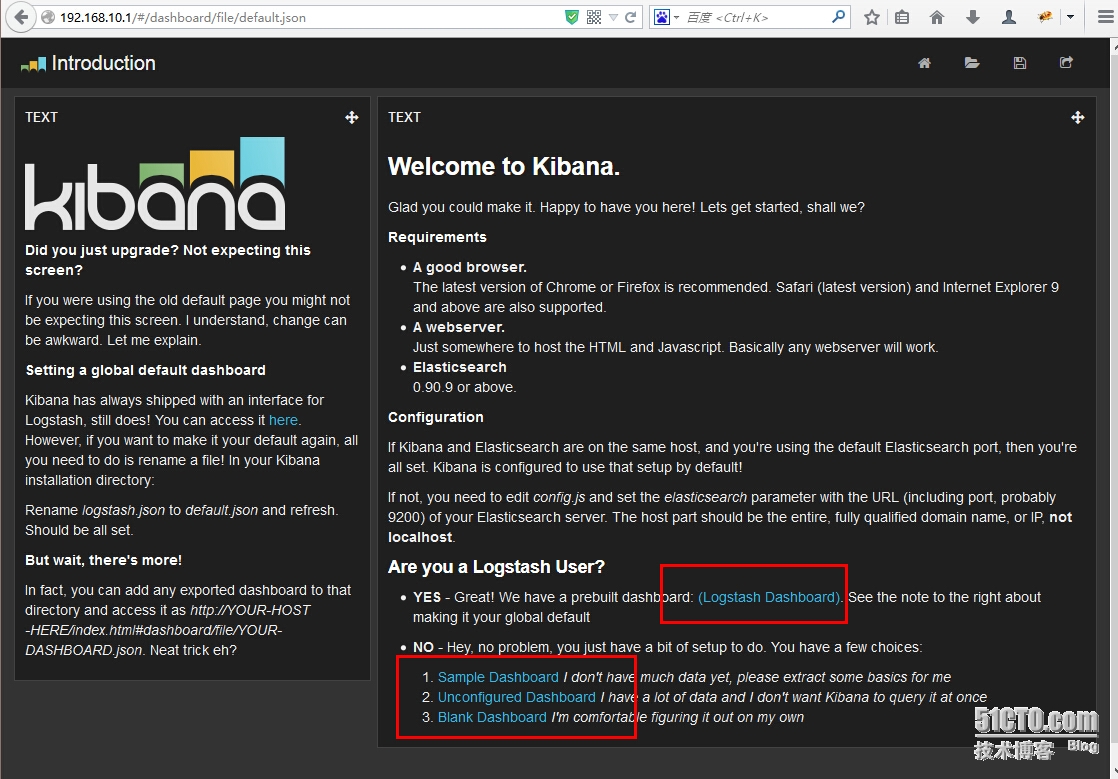

# That covers how Logstash works; next, view the data in a web page with Kibana

# Kibana: Logstash already bundles Kibana in its vendor/kibana/ directory, but you can also download kibana-3.1.2.zip and unpack it

# unzip kibana-3.1.2.zip -d /var/www/logstash/kibana

# ln -s /var/www/logstash/kibana/kibana-3.1.2 /var/www/logstash/kibana/kibana

# vim /var/www/logstash/kibana/kibana/config.js

/* elasticsearch: "http://"+window.location.hostname+":9200",

*/

elasticsearch: "http://192.168.10.1:9200",

# vim /etc/httpd/conf.d/kibana.conf

<VirtualHost *:80>

DocumentRoot /var/www/logstash/kibana/kibana

ServerName 192.168.10.1

<Directory "/var/www/logstash/kibana/kibana">

Options FollowSymLinks

AllowOverride None

Order allow,deny

Allow from all

php_value max_execution_time 300

php_value memory_limit 128M

php_value post_max_size 16M

php_value upload_max_filesize 2M

php_value max_input_time 300

php_value date.timezone Asia/Shanghai

</Directory>

</VirtualHost>

# vim logstash.conf

input {

file {

type => "syslog"

# path => [ "/var/log/*.log", "/var/log/messages", "/var/log/syslog" ]

path => [ "/var/log/messages", "/var/log/syslog" ]

sincedb_path => "/var/sincedb"

}

redis {

host => "192.168.10.1"

type => "redis-input"

data_type => "list"

key => "logstash"

}

syslog {

type => "syslog"

port => "5544"

}

}

filter {

if [type] == "syslog" {

grok {

match => [ "message", "%{SYSLOGBASE2}" ]

add_tag => [ "syslog", "grokked" ]

}

}

}

output {

elasticsearch { host => "192.168.10.1" }

}
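Here is a rough Python equivalent of the grok filter above, including the same type check. The regex only covers the classic `Feb  9 16:36:15 host program[pid]:` form, while the real `%{SYSLOGBASE2}` is more permissive (for example it also allows an optional facility/priority prefix). Like the real pattern, it does not capture the text after the program name, so that text stays only in `message`. The pattern and function names are hypothetical.

```python
import re

# Rough stand-in for %{SYSLOGBASE2}: timestamp, logsource, program[pid]:
SYSLOG_BASE = re.compile(
    r"(?P<timestamp>[A-Z][a-z]{2} +\d{1,2} \d{2}:\d{2}:\d{2}) "
    r"(?P<logsource>\S+) "
    r"(?P<program>[^\s\[:]+)(?:\[(?P<pid>\d+)\])?:"
)


def grok_syslog(event: dict) -> dict:
    """Apply the pattern only to type 'syslog' events; tag success or failure."""
    if event.get("type") != "syslog":
        return event
    m = SYSLOG_BASE.search(event["message"])  # grok matches unanchored by default
    if m:
        event.update({k: v for k, v in m.groupdict().items() if v is not None})
        event.setdefault("tags", []).extend(["syslog", "grokked"])
    else:
        event.setdefault("tags", []).append("_grokparsefailure")
    return event
```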

# service httpd restart

# vim /etc/redis/6379.conf

bind 192.168.10.1

# service redis_6379 restart

# ps aux|grep redis|grep -v grep

root 8340 0.1 0.7 40536 7448 ? Ssl 07:23 0:00 /usr/local/bin/redis-server 192.168.10.1:6379
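With Redis now listening on 192.168.10.1, another host can feed the redis input by pushing JSON events onto the list named `logstash` (`data_type => "list"`, `key => "logstash"`). Here is a minimal Python sketch that speaks the Redis wire protocol (RESP) directly with the standard library. It assumes the redis input decodes entries with its default json codec, and the helper names are hypothetical. In practice a Redis client library would normally be used instead.

```python
import json
import socket


def encode_command(*args: str) -> bytes:
    """Encode a Redis command as a RESP array of bulk strings."""
    out = [f"*{len(args)}\r\n".encode()]
    for arg in args:
        data = arg.encode("utf-8")
        out.append(f"${len(data)}\r\n".encode() + data + b"\r\n")
    return b"".join(out)


def push_event(event: dict, host: str = "192.168.10.1", port: int = 6379,
               key: str = "logstash") -> bytes:
    """RPUSH one JSON-encoded event onto the list read by the redis input."""
    payload = encode_command("RPUSH", key, json.dumps(event))
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(payload)
        return sock.recv(64)  # integer reply with the new list length, e.g. b":1\r\n"
```

Usage: with Logstash running on logstash.conf, `push_event({"message": "hello from redis"})` should produce a new document in Elasticsearch.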

# vim /var/www/logstash/elasticsearch/config/elasticsearch.yml

http.cors.enabled: true # add this line

# see https://github.com/elastic/kibana/issues/1637

# /var/www/logstash/elasticsearch/bin/elasticsearch -d -p /var/run/elasticsearch.pid # restart Elasticsearch as well
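Kibana 3 runs entirely in the browser. Apache serves the page on port 80, but its JavaScript queries Elasticsearch on port 9200, which is a different origin, so CORS has to be enabled. Here is a small Python sketch to check the setting after the restart, assuming the addresses used in this article. It sends an `Origin` header and inspects `Access-Control-Allow-Origin`. The function names are hypothetical.

```python
import urllib.request


def cors_allowed(headers, origin: str) -> bool:
    """True if the response headers let a page served from `origin` read the reply."""
    return headers.get("Access-Control-Allow-Origin") in ("*", origin)


def check_cors(es_url: str = "http://192.168.10.1:9200",
               origin: str = "http://192.168.10.1") -> bool:
    """Request the root endpoint as the Kibana page would and check the CORS header."""
    req = urllib.request.Request(es_url, headers={"Origin": origin})
    with urllib.request.urlopen(req, timeout=5) as resp:
        return cors_allowed(resp.headers, origin)
```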

# ./bin/logstash --configtest -f logstash.conf # test the config file

Configuration OK

# ./bin/logstash -v -f logstash.conf &

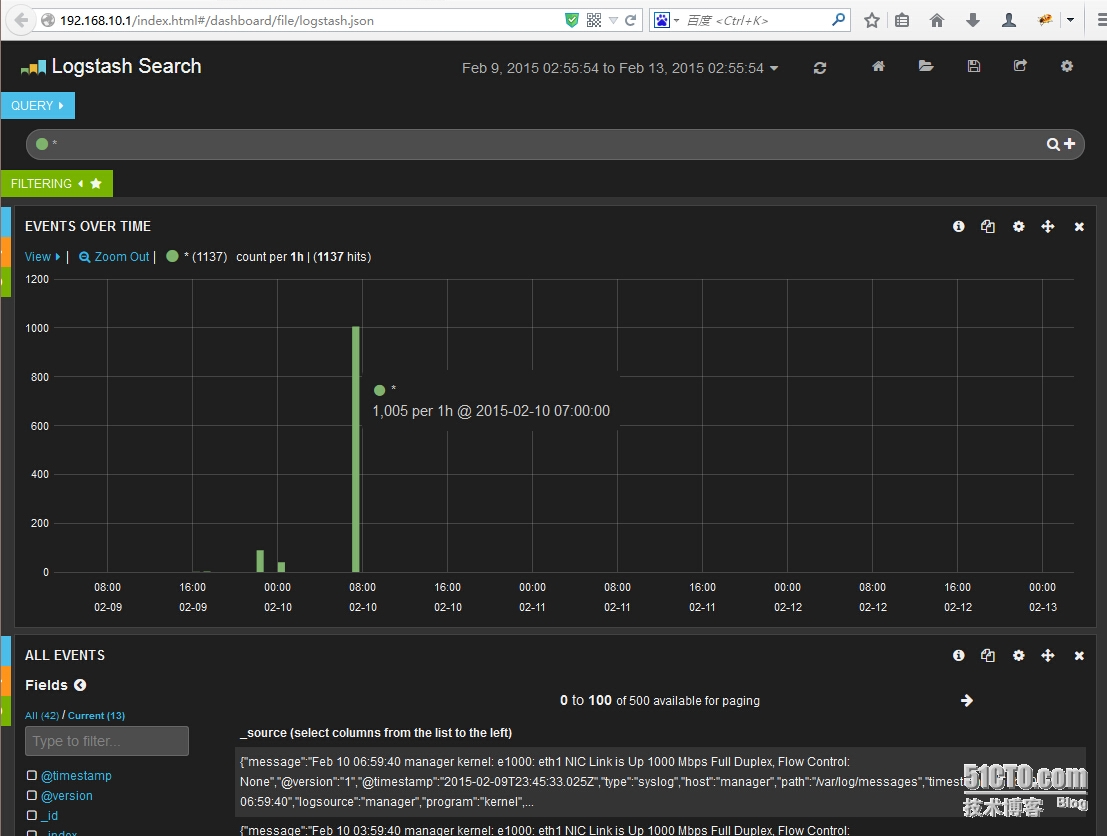

# Once all services are running, open http://192.168.10.1 in a browser to see the default Kibana page

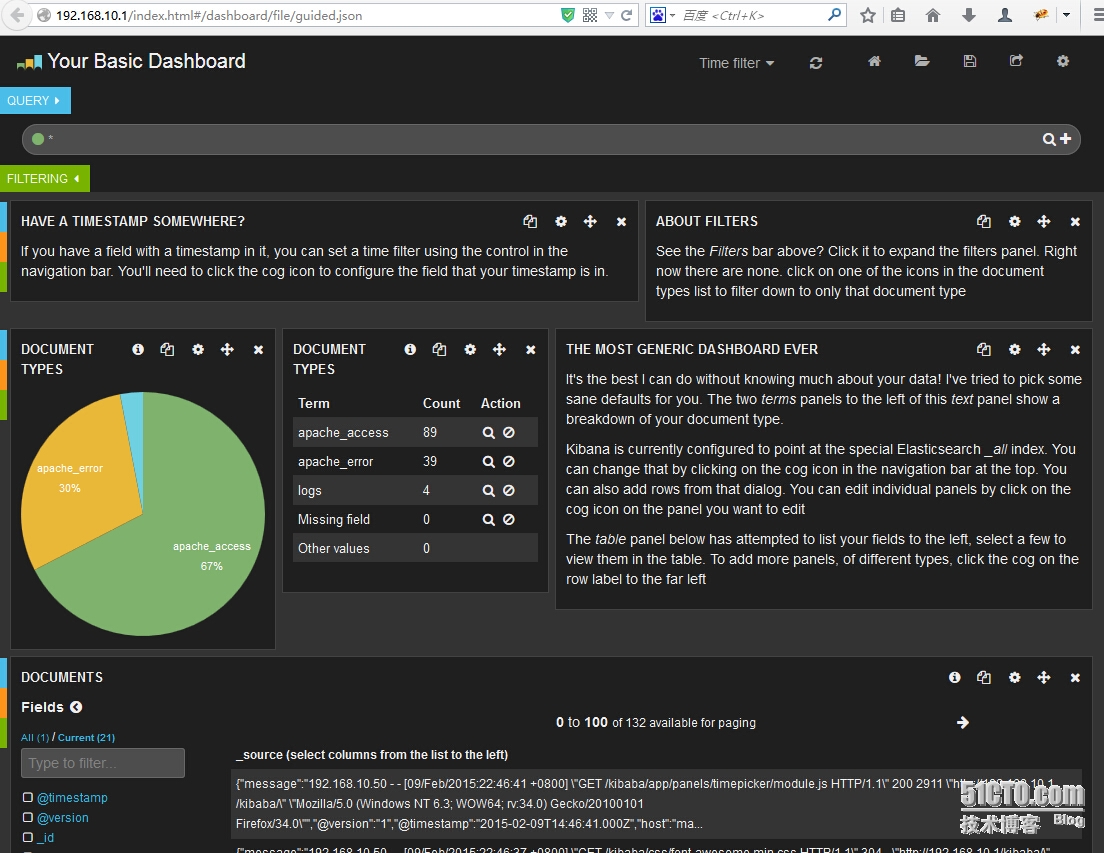

As new log entries are written, the data on http://192.168.10.1/index.html#/dashboard/file/guided.json updates accordingly. The next step is to dig into Elasticsearch search.

Directory where Elasticsearch stores the indexed logs:

/var/www/logstash/elasticsearch/data/elasticsearch/nodes/0/indices