Source: http://rfyiamcool.blog.51cto.com/1030776/1340057

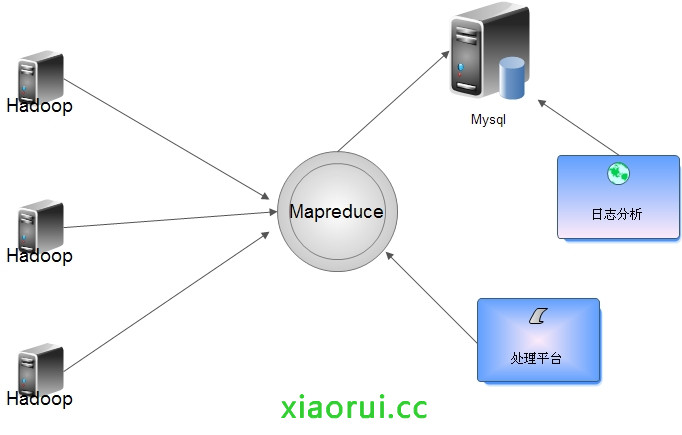

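Writing high-volume logs straight into Hadoop puts too much load on the NameNode, so merge them before loading: combine the logs from each node into a single file and write that file to HDFS. Merge on whatever schedule fits your situation, then write the result into HDFS.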
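As a rough illustration of the merge step, here is a minimal Python sketch that concatenates several per-node log files into one file before upload. The function name `merge_logs` and the file names are made up for this example, and it assumes every source file ends with a newline.

```python
import shutil
from pathlib import Path


def merge_logs(sources, merged_path):
    """Concatenate the log files in `sources` into one file.

    Returns the size of the merged file in bytes.
    `merge_logs` and its arguments are hypothetical names for this sketch.
    """
    merged_path = Path(merged_path)
    with merged_path.open("wb") as out:
        for src in sources:
            with Path(src).open("rb") as part:
                # Copy in chunks so a large log never sits fully in memory.
                shutil.copyfileobj(part, out)
    return merged_path.stat().st_size
```

The merged file could then be uploaded in one go, for example with `hadoop fs -put`, so HDFS stores one large file instead of many small ones.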

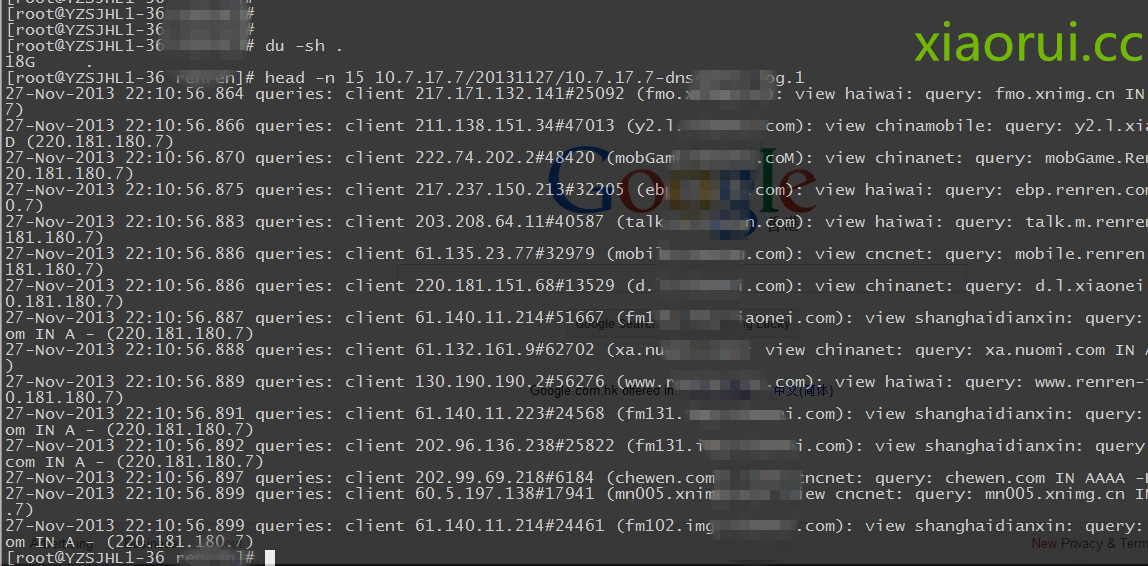

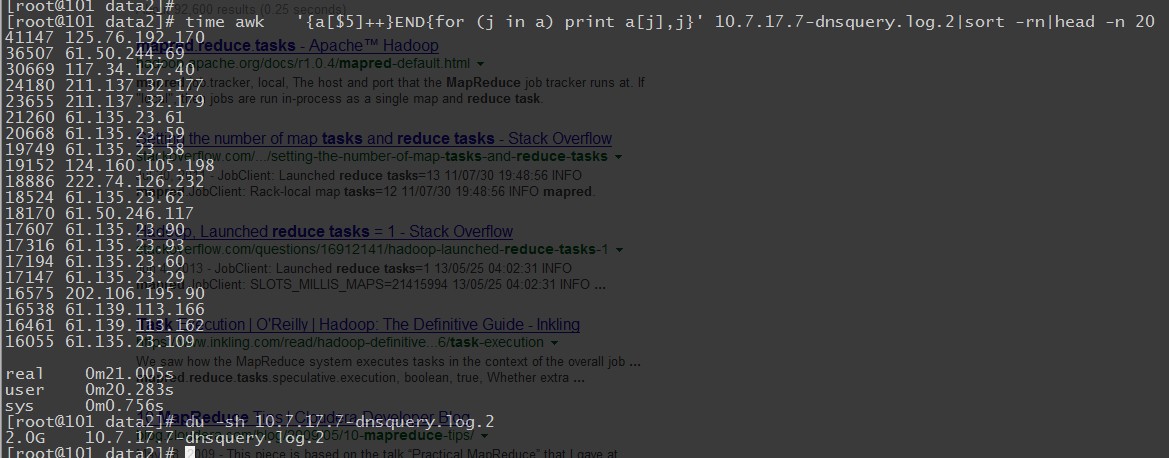

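Let's look at the log size: a 200 GB DNS log file, which I compressed down to 18 GB. You could certainly process it with awk or perl, but that will never be as fast as a distributed job.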

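How Hadoop Streaming works

The mapper and reducer read user data from standard input, process it line by line, and write the results to standard output. The Streaming utility creates a MapReduce job, submits it to the tasktrackers, and monitors the job as it runs.

Any language that can conveniently read standard input and write standard output can be used for mapreduce.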
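To make that contract concrete, here is a small in-process sketch of the map, sort, reduce flow. It is only a simulation under simplifying assumptions: the function names (`map_words`, `reduce_counts`, `run_local`) are invented for this example, and a plain `sorted` call stands in for Hadoop's shuffle.

```python
from itertools import groupby


def map_words(line):
    """Mapper: emit one "word<TAB>1" record per word."""
    for word in line.split():
        yield "%s\t%d" % (word, 1)


def reduce_counts(sorted_records):
    """Reducer: sum the counts of consecutive records sharing a key."""
    pairs = (rec.split("\t", 1) for rec in sorted_records)
    for key, group in groupby(pairs, key=lambda kv: kv[0]):
        yield "%s\t%d" % (key, sum(int(value) for _, value in group))


def run_local(lines):
    """Mimic map -> sort (shuffle) -> reduce in a single process."""
    mapped = [rec for line in lines for rec in map_words(line)]
    # Sort by key only, as the shuffle would, so equal keys are adjacent.
    mapped.sort(key=lambda rec: rec.split("\t", 1)[0])
    return list(reduce_counts(mapped))


print(run_local(["a b a", "b c"]))
```

The reducer only works because its input arrives sorted by key; that is the same guarantee Streaming gives a real reducer script.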

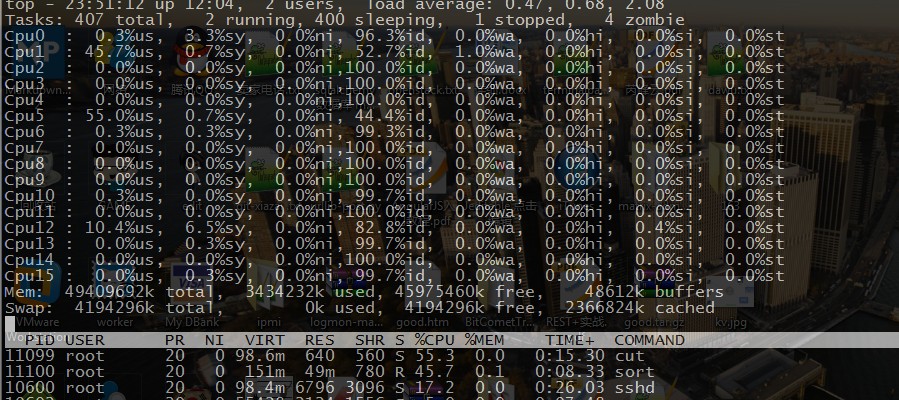

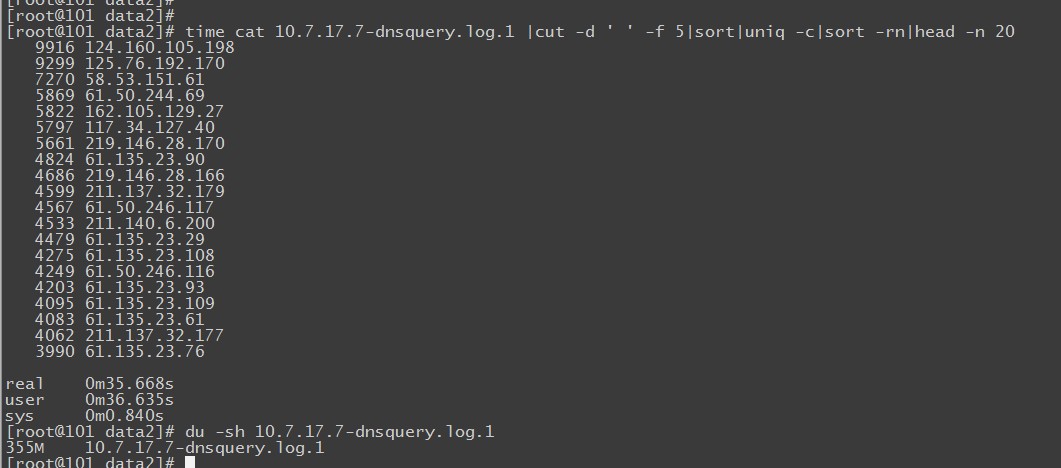

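Before getting into it, let's run a quick test of how fast a shell simulation of mapreduce is.

Here's the result: a 350 MB file took about 35 seconds.

This one is a 2 GB log file, and it took a full 3 minutes. Part of that is down to my script, of course: we are simulating the mapreduce approach here rather than calling the shell's excellent awk or gawk to do the work.

And here is awk's speed: impressive, as expected. I like using awk for log processing too; it is just harder to learn, and not as flexible and simple as the other shell tools.

These are the two official demos: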

map.py

```python
#!/usr/bin/env python
"""A more advanced Mapper, using Python iterators and generators."""

import sys


def read_input(file):
    for line in file:
        # split the line into words
        yield line.split()


def main(separator='\t'):
    # input comes from STDIN (standard input)
    data = read_input(sys.stdin)
    for words in data:
        # write the results to STDOUT (standard output);
        # what we output here will be the input for the
        # Reduce step, i.e. the input for reducer.py
        #
        # tab-delimited; the trivial word count is 1
        for word in words:
            print('%s%s%d' % (word, separator, 1))


if __name__ == "__main__":
    main()
```

reduce.py, modified the same way:

```python
#!/usr/bin/env python
"""A more advanced Reducer, using Python iterators and generators."""

from itertools import groupby
from operator import itemgetter
import sys


def read_mapper_output(file, separator='\t'):
    for line in file:
        yield line.rstrip().split(separator, 1)


def main(separator='\t'):
    # input comes from STDIN (standard input)
    data = read_mapper_output(sys.stdin, separator=separator)
    # groupby groups multiple word-count pairs by word,
    # and creates an iterator that returns consecutive keys and their group:
    #   current_word - string containing a word (the key)
    #   group - iterator yielding all ["<current_word>", "<count>"] items
    for current_word, group in groupby(data, itemgetter(0)):
        try:
            total_count = sum(int(count) for current_word, count in group)
            print("%s%s%d" % (current_word, separator, total_count))
        except ValueError:
            # count was not a number, so silently discard this item
            pass


if __name__ == "__main__":
    main()
```

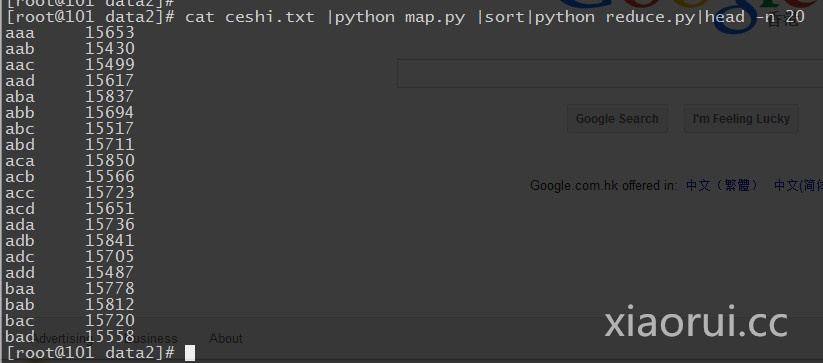

Let's make it simpler:

```python
#!/usr/bin/env python
import sys

# Emit "word<TAB>1" for every word read from standard input.
for line in sys.stdin:
    line = line.strip()
    words = line.split()
    for word in words:
        print('%s\t%s' % (word, 1))
```

```python
#!/usr/bin/env python
from operator import itemgetter
import sys

current_word = None
current_count = 0
word = None

# Input arrives sorted by key, so equal words are on consecutive lines.
for line in sys.stdin:
    line = line.strip()
    word, count = line.split('\t', 1)
    try:
        count = int(count)
    except ValueError:
        # count was not a number, skip this line
        continue
    if current_word == word:
        current_count += count
    else:
        if current_word:
            print('%s\t%s' % (current_word, current_count))
        current_count = count
        current_word = word

# Emit the last word, if there was any input at all.
if current_word:
    print('%s\t%s' % (current_word, current_count))
```

Let's mock up some data and run a test:

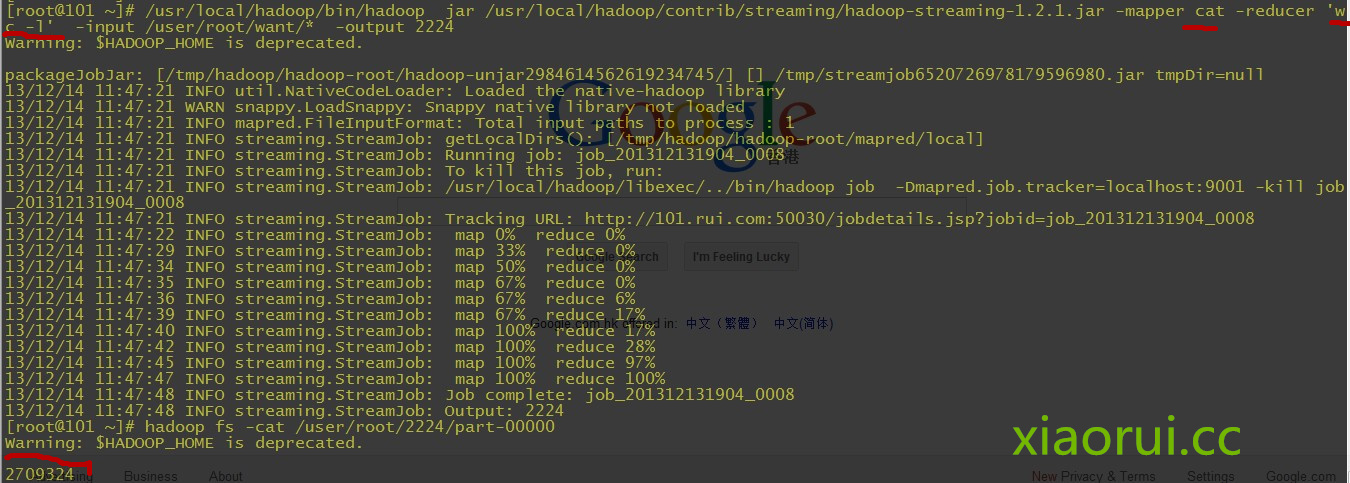

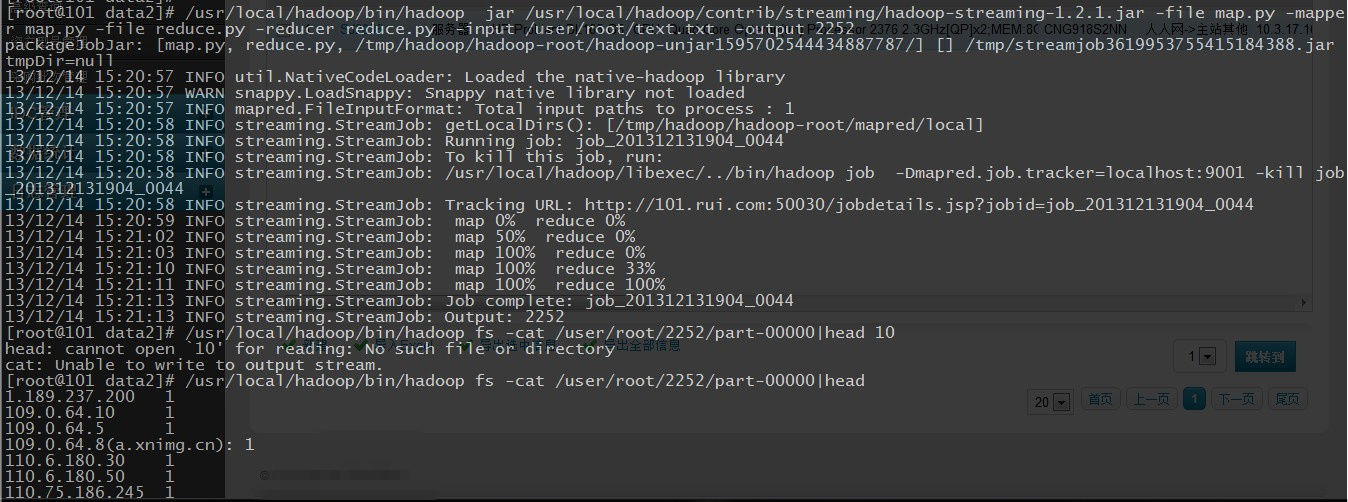

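That's about all there is to it. On a Hadoop cluster, run Hadoop's streaming jar, pass in the mapreduce scripts, and specify the output location. The example below uses plain shell commands as the mapper and reducer.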

```bash
[root@101cron]# $HADOOP_HOME/bin/hadoop jar $HADOOP_HOME/contrib/streaming/hadoop-*-streaming.jar \
    -input myInputDirs \
    -output myOutputDir \
    -mapper cat \
    -reducer wc
```
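For the Python scripts shown earlier, the same invocation might be assembled as an argument list like this. It is a sketch only: `streaming_command` is a made-up helper, and the Hadoop and jar paths in the demo are placeholders, since the real jar name depends on the installed release.

```python
import os


def streaming_command(hadoop_bin, streaming_jar, input_path, output_path,
                      mapper_script, reducer_script):
    """Build the argv list for a streaming job that ships two scripts.

    Hypothetical helper for illustration; it does not run anything.
    """
    return [
        hadoop_bin, "jar", streaming_jar,
        "-input", input_path,
        "-output", output_path,
        # On the task nodes the scripts are referred to by base name.
        "-mapper", os.path.basename(mapper_script),
        "-reducer", os.path.basename(reducer_script),
        # -file packages each local script with the submitted job.
        "-file", mapper_script,
        "-file", reducer_script,
    ]


# Placeholder paths, assumed for this example only.
cmd = streaming_command(
    "/usr/local/hadoop/bin/hadoop",
    "/usr/local/hadoop/contrib/streaming/hadoop-streaming.jar",
    "myInputDirs", "myOutputDir", "./map.py", "./reduce.py",
)
print(" ".join(cmd))
```

On a machine with Hadoop installed the list could be handed to `subprocess.call(cmd)`. The scripts need a shebang line and the executable bit so the task nodes can run them directly.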

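On the detailed parameters: to improve performance you can raise the number of tasks. Test against your own workload, and don't set it too high or it just adds load.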

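(1) -input: input file path

(2) -output: output file path

(3) -mapper: the user's own mapper program; can be an executable or a script

(4) -reducer: the user's own reducer program; can be an executable or a script

(5) -file: packages a file into the submitted job; this can be an input file the mapper or reducer needs, such as a config file or a dictionary.

(6) -partitioner: user-defined partitioner program

(7) -combiner: user-defined combiner program (must be implemented in Java)

(8) -D: job properties (previously set with -jobconf), specifically:

1) mapred.map.tasks: number of map tasks

2) mapred.reduce.tasks: number of reduce tasks

3) stream.map.input.field.separator / stream.map.output.field.separator: separator for the map task's input/output data; both default to \t.

4) stream.num.map.output.key.fields: number of fields that make up the key in a map task's output records

5) stream.reduce.input.field.separator / stream.reduce.output.field.separator: separator for the reduce task's input/output data; both default to \t.

6) stream.num.reduce.output.key.fields: number of fields that make up the key in a reduce task's output records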
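The separator and key-field settings are easier to picture with an example. The sketch below imitates, in plain Python, how a record is split into key and value for a given separator and key-field count. `split_key_value` is a hypothetical helper written for this illustration, not part of Hadoop.

```python
def split_key_value(line, separator="\t", num_key_fields=1):
    """Split one output record into (key, value).

    The first `num_key_fields` fields form the key and the rest is the
    value. Hypothetical helper that mirrors the settings described above.
    """
    fields = line.split(separator)
    if len(fields) <= num_key_fields:
        # Too few separators: the whole line is the key, the value is empty.
        return line, ""
    key = separator.join(fields[:num_key_fields])
    value = separator.join(fields[num_key_fields:])
    return key, value


# Default behaviour: everything before the first tab is the key.
print(split_key_value("example.com\t1"))
# With separator "." and four key fields, the key spans four fields.
print(split_key_value("a.b.c.d.e", separator=".", num_key_fields=4))
```

On the command line these would be passed as, for example, `-D stream.map.output.field.separator=. -D stream.num.map.output.key.fields=4`, with the `-D` options placed before the streaming options such as `-input`.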

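Here we count how many lines the DNS log file has:

When passing commands as the mapper and reducer arguments, don't use shell that is too long or too complex; it won't understand it.

You can put the logic in shell script files instead: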

```bash
#!/bin/bash
while read -r LINE; do
    # for word in $LINE
    # do
    #     echo "$word 1"
    # done
    # Emit the fifth whitespace-separated field of the current line as the key.
    echo "$LINE" | awk '{print $5}'
done
```

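```bash
#!/bin/bash
count=0
started=0
word=""
while read -r LINE; do
    # The key is the first space-separated field of the line.
    goodk=$(echo "$LINE" | cut -d ' ' -f 1)
    if [ "x" == x"$goodk" ]; then
        continue
    fi
    if [ "$word" != "$goodk" ]; then
        # Key changed: print the finished group and start a new one.
        [ $started -ne 0 ] && echo -e "$word\t$count"
        word=$goodk
        count=1
        started=1
    else
        count=$(( count + 1 ))
    fi
done
# Print the last group, which the loop itself never emits.
[ $started -ne 0 ] && echo -e "$word\t$count"
```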

Sometimes you will hit an error like the one below. When that happens, take a careful look at your own mapreduce program.

13/12/14 13:26:52 INFO streaming.StreamJob: Tracking URL: http://101.rui.com:50030/jobdetails.jsp?jobid=job_201312131904_0030

13/12/14 13:26:53 INFO streaming.StreamJob: map 0% reduce 0%

13/12/14 13:27:16 INFO streaming.StreamJob: map 100% reduce 100%

13/12/14 13:27:16 INFO streaming.StreamJob: To kill this job, run:

13/12/14 13:27:16 INFO streaming.StreamJob: /usr/local/hadoop/libexec/../bin/hadoop job -Dmapred.job.tracker=localhost:9001 -kill job_201312131904_0030

13/12/14 13:27:16 INFO streaming.StreamJob: Tracking URL: http://101.rui.com:50030/jobdetails.jsp?jobid=job_201312131904_0030

13/12/14 13:27:16 ERROR streaming.StreamJob: Job not successful. Error: # of failed Map Tasks exceeded allowed limit. FailedCount: 1. LastFailedTask: task_201312131904_0030_m_000000

13/12/14 13:27:16 INFO streaming.StreamJob: killJob...

Streaming Command Failed!
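One plausible cause of a failed map task, offered here as an assumption rather than a diagnosis of the log above, is a mapper script that crashes on an unexpected input line. A defensive mapper might look like the sketch below; `safe_map` and the counter names are invented for this example, and the `reporter:counter:` line written to standard error is Streaming's way of updating a job counter.

```python
import sys


def safe_map(lines, field_index=4, err=sys.stderr):
    """Yield "key<TAB>1" per line, skipping lines that are too short.

    field_index=4 picks the fifth field, like awk's $5.
    Hypothetical helper; the counter group and name are made up.
    """
    for line in lines:
        fields = line.split()
        if len(fields) <= field_index:
            # Report instead of raising, so one bad line cannot fail the task.
            err.write("reporter:counter:mapper,skipped_lines,1\n")
            continue
        yield "%s\t1" % fields[field_index]
```

In a real mapper script this would be driven by `for record in safe_map(sys.stdin): print(record)`. The task logs reachable from the Tracking URL are still the first place to look for the actual error.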

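After a Python mapreduce job finishes successfully, the results and logs normally land in the output directory you specified; the results are in the part-00000 file.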
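Once the part-00000 file has been fetched from HDFS, its tab-separated lines are easy to post-process. The following is a minimal sketch, assuming the word-count style output of the reducers above; `read_counts` and `top_n` are hypothetical helper names.

```python
def read_counts(lines, separator="\t"):
    """Parse "key<TAB>count" lines into a dict of integer counts."""
    counts = {}
    for line in lines:
        line = line.rstrip("\n")
        if not line:
            continue
        key, _, value = line.partition(separator)
        # Sum in case the same key shows up in more than one part file.
        counts[key] = counts.get(key, 0) + int(value)
    return counts


def top_n(counts, n=3):
    """Return the n largest (key, count) pairs, ties broken by key."""
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))[:n]
```

With a local copy of the output, `read_counts(open("part-00000"))` followed by `top_n(...)` gives the most frequent keys.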