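Introduction to Keepalived

As the name suggests, keepalived keeps things alive. In networking terms that means keeping a service online, which is what is usually called high availability or hot standby. It is used to prevent a single point of failure (a single point whose failure makes the whole system architecture unavailable).

How Keepalived Works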

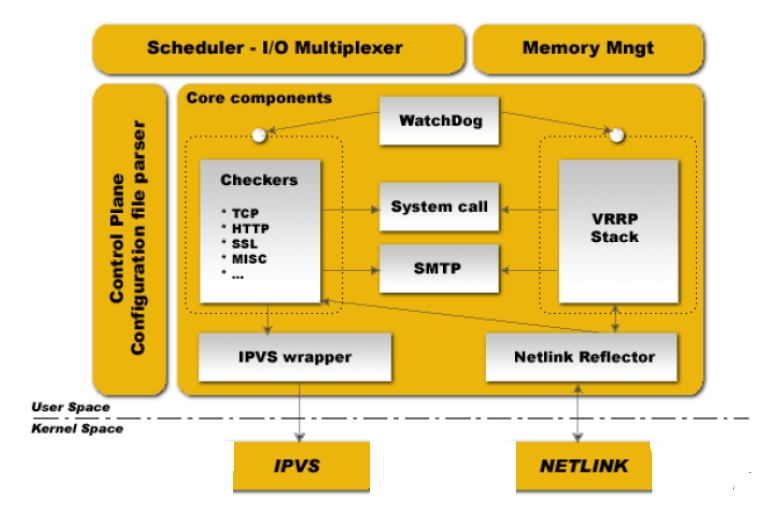

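keepalived has a modular design, with different modules responsible for different functions:

core: the core of keepalived, responsible for starting and maintaining the main process and for loading and parsing the global configuration file

check: responsible for health checking (healthchecker), including the various health-check methods and the parsing of their configuration, LVS configuration included

vrrp: the VRRPD child process, which implements the VRRP protocol

libipfwc: the iptables (ipchains) library, used when configuring LVS

libipvs*: used when configuring LVS

Note that keepalived and LVS are two entirely separate things; each has its own job and they simply cooperate.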

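After startup, keepalived runs three processes:

Parent process: memory management, child-process management, and so on

Child process: the VRRP child process

Child process: the healthchecker child process

As the figure shows, both child processes are supervised by the system WatchDog, and each handles its own work. The healthchecker child process checks the health of the services on its server, for example HTTP or LVS. If it finds that a service on the MASTER is unavailable, it notifies its sibling VRRP child process on the same machine, which then stops sending advertisements, removes the virtual IP, and switches to the BACKUP state.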

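Lab setup:

Host 1 172.16.31.10

Host 2 172.16.31.30

1. Download the rpm package locally and install it on both host 1 and host 2. This walkthrough uses keepalived-1.2.13-1.el6.x86_64.rpm.

    # yum install -y keepalived-1.2.13-1.el6.x86_64.rpm

2. Edit the configuration file /etc/keepalived/keepalived.conf. It is a good idea to back the file up before changing it:

    # cp keepalived.conf keepalived.conf.bak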
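The backup step can be wrapped in a small helper so that a second run does not overwrite the pristine copy. This is only a sketch: the function name `backup_conf` is hypothetical and not part of keepalived.

```shell
# backup_conf FILE: copy FILE to FILE.bak, preserving mode and timestamps.
# Refuses to overwrite an existing backup so the original copy is kept.
backup_conf() {
    local src="$1"
    local dst="${src}.bak"
    if [ ! -f "$src" ]; then
        echo "no such file: $src" >&2
        return 1
    fi
    if [ -e "$dst" ]; then
        echo "backup already exists: $dst" >&2
        return 1
    fi
    cp -p "$src" "$dst"
}
```

It would be called as `backup_conf /etc/keepalived/keepalived.conf` before editing.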

The configuration on host 1 (the master):

    ! Configuration File for keepalived
    # Lines starting with an exclamation mark are comments

    global_defs {                       # global configuration
        notification_email {
            [email protected]
            [email protected]
            [email protected]
        }
        notification_email_from [email protected]
        smtp_server 192.168.200.1
        smtp_connect_timeout 30
        router_id LVS_DEVEL
    }

    vrrp_instance VI_1 {                # instance 1
        state MASTER                    # initial state: master
        interface eth0                  # interface the instance runs on
        virtual_router_id 101           # virtual router ID, must match on both nodes
        priority 100                    # priority
        advert_int 1
        authentication {
            auth_type PASS              # password-based authentication
            auth_pass 123456            # password, must match on both nodes
        }
        virtual_ipaddress {             # virtual IP address group
            172.16.31.22
        }
    }

3. Configure the backup node in /etc/keepalived/keepalived.conf:

    vrrp_instance VI_1 {
        state BACKUP                    # the state becomes BACKUP
        interface eth0
        virtual_router_id 101
        priority 99                     # only needs to be lower than the master's
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 123456            # same as on the master
        }
        virtual_ipaddress {
            172.16.31.22
        }
    }

4. Start the keepalived service on host 1:

    # service keepalived start
    Starting keepalived: [ OK ]

On host 1:

    # ip addr show
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
        link/ether 00:0c:29:a7:90:c5 brd ff:ff:ff:ff:ff:ff
        inet 172.16.31.10/16 brd 172.16.255.255 scope global eth0
        inet 172.16.31.22/32 scope global eth0      <- the virtual IP has been configured
        inet6 fe80::20c:29ff:fea7:90c5/64 scope link
           valid_lft forever preferred_lft forever
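Rather than reading the `ip addr show` output by eye, the check for the virtual IP can be scripted. The sketch below assumes the output format shown in this walkthrough; the helper name `has_vip` is hypothetical.

```shell
# has_vip VIP: read `ip addr show` output on stdin and succeed if VIP
# appears as an IPv4 address on any interface.
has_vip() {
    local vip="$1"
    # -F matches the dots literally; the trailing "/" keeps 172.16.31.22
    # from also matching 172.16.31.222.
    grep -qF "inet ${vip}/"
}
```

On a node it could be used as `ip addr show eth0 | has_vip 172.16.31.22 && echo "VIP present"`.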

5. Now stop the service on host 1 and check the log file:

    # service keepalived stop
    Oct 10 17:00:49 localhost Keepalived[2791]: Stopping Keepalived v1.2.13 (09/14,2014)
    Oct 10 17:00:49 localhost Keepalived_vrrp[2793]: VRRP_Instance(VI_1) removing protocol VIPs.

On host 1:

    # ip addr show
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
        link/ether 00:0c:29:a7:90:c5 brd ff:ff:ff:ff:ff:ff
        inet 172.16.31.10/16 brd 172.16.255.255 scope global eth0
        inet6 fe80::20c:29ff:fea7:90c5/64 scope link
           valid_lft forever preferred_lft forever

172.16.31.22 is now gone.

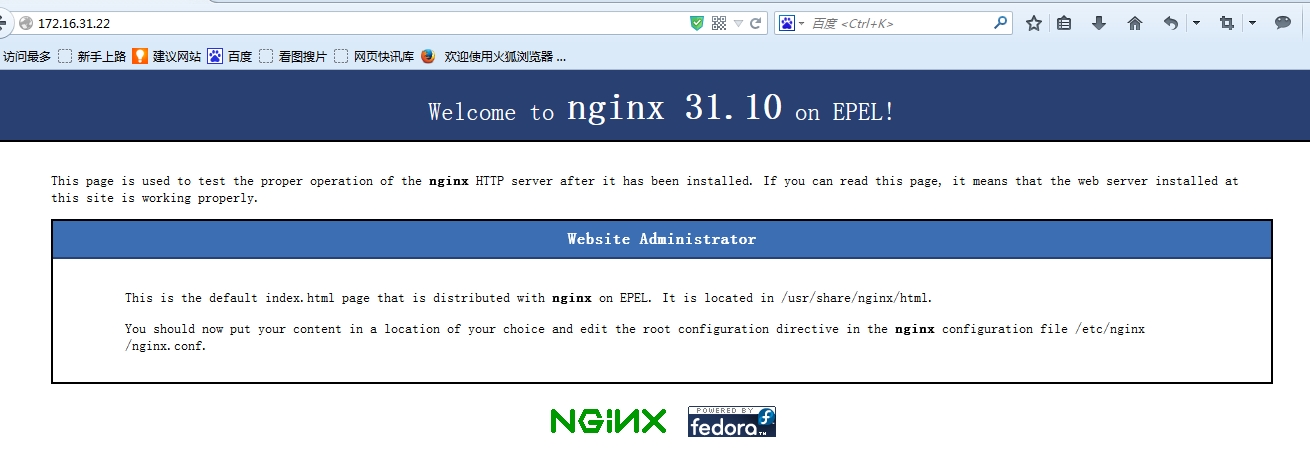

6. Now start the keepalived service on node 2 and check the log file:

    # service keepalived start
    Sep 25 08:15:21 localhost Keepalived_vrrp[3954]: VRRP_Instance(VI_1) Transition to MASTER STATE
    Sep 25 08:15:22 localhost Keepalived_vrrp[3954]: VRRP_Instance(VI_1) Entering MASTER STATE
    Sep 25 08:15:22 localhost Keepalived_vrrp[3954]: VRRP_Instance(VI_1) setting protocol VIPs.

Since no other node is competing with host 2, host 2 is promoted to master.
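The state a node ended up in can also be pulled out of the log with a short filter. This is a sketch that assumes log lines of the form shown above ("VRRP_Instance(NAME) Entering X STATE"); the helper name `last_vrrp_state` is hypothetical.

```shell
# last_vrrp_state INSTANCE: read syslog lines on stdin and print the most
# recent state that INSTANCE entered (for example MASTER or BACKUP).
# Prints nothing if the instance never logged an "Entering ... STATE" line.
last_vrrp_state() {
    local inst="$1"
    grep -F "VRRP_Instance(${inst})" \
        | sed -n 's/.*Entering \([A-Z]*\) STATE.*/\1/p' \
        | tail -n 1
}
```

The log file to feed in depends on the system's syslog configuration, so the function deliberately reads from stdin rather than from a fixed path.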

On host 2:

    # ip addr show
    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
        link/ether 00:0c:29:c8:8f:e1 brd ff:ff:ff:ff:ff:ff
        inet 172.16.31.30/16 brd 172.16.255.255 scope global eth0
        inet 172.16.31.22/32 scope global eth0      <- the IP is now on host 2
        inet6 fe80::20c:29ff:fec8:8fe1/64 scope link
           valid_lft forever preferred_lft forever

7. Stop the service on node 2, then start it again. The log on node 1 shows that node 1 received an advertisement with a lower priority than its own, forced a new election, and became master:

    Oct 10 17:40:59 localhost Keepalived_vrrp[2941]: VRRP_Instance(VI_1) Received lower prio advert, forcing new election
    Oct 10 17:41:00 localhost Keepalived_vrrp[2941]: VRRP_Instance(VI_1) Entering MASTER STATE
    Oct 10 17:41:00 localhost Keepalived_vrrp[2941]: VRRP_Instance(VI_1) setting protocol VIPs.
    Oct 10 17:41:00 localhost Keepalived_healthcheckers[2940]: Netlink reflector reports IP 172.16.31.22 added
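The decision behind "Received lower prio advert, forcing new election" comes down to a priority comparison. The sketch below models only that comparison with a hypothetical helper, `should_take_over`. It deliberately ignores equal priorities, which VRRP resolves by comparing the nodes' IP addresses, and it is not how keepalived itself is implemented.

```shell
# should_take_over LOCAL_PRIO ADVERT_PRIO: succeed when the local node's
# priority is strictly higher than the priority in a received advert.
# Equal priorities are treated as "do not take over" in this sketch.
should_take_over() {
    local local_prio="$1" advert_prio="$2"
    [ "$local_prio" -gt "$advert_prio" ]
}

# Priorities from this walkthrough: node 1 = 100, node 2 = 99.
if should_take_over 100 99; then
    echo "node 1 takes over as MASTER"
fi
```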

8. Next, configure a dual-master model by adding one more instance on each of nodes 1 and 2.

On node 1:

    vrrp_instance VI_2 {
        state BACKUP
        interface eth0
        virtual_router_id 200
        priority 99
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 123
        }
        virtual_ipaddress {
            172.16.31.222
        }
    }

On node 2:

    vrrp_instance VI_2 {
        state MASTER
        interface eth0
        virtual_router_id 200
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 123
        }
        virtual_ipaddress {
            172.16.31.222
        }
    }

Then on node 1:

    # ip addr show

    1: lo: <LOOPBACK,UP,LOWER_UP> mtu 16436 qdisc noqueue state UNKNOWN
        link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00
        inet 127.0.0.1/8 scope host lo
        inet6 ::1/128 scope host
           valid_lft forever preferred_lft forever
    2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
        link/ether 00:0c:29:a7:90:c5 brd ff:ff:ff:ff:ff:ff
        inet 172.16.31.10/16 brd 172.16.255.255 scope global eth0
        inet 172.16.31.22/32 scope global eth0
        inet6 fe80::20c:29ff:fea7:90c5/64 scope link
           valid_lft forever preferred_lft forever

On node 2:

    2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
        link/ether 00:0c:29:c8:8f:e1 brd ff:ff:ff:ff:ff:ff
        inet 172.16.31.30/16 brd 172.16.255.255 scope global eth0
        inet 172.16.31.222/32 scope global eth0
        inet6 fe80::20c:29ff:fec8:8fe1/64 scope link
           valid_lft forever preferred_lft forever

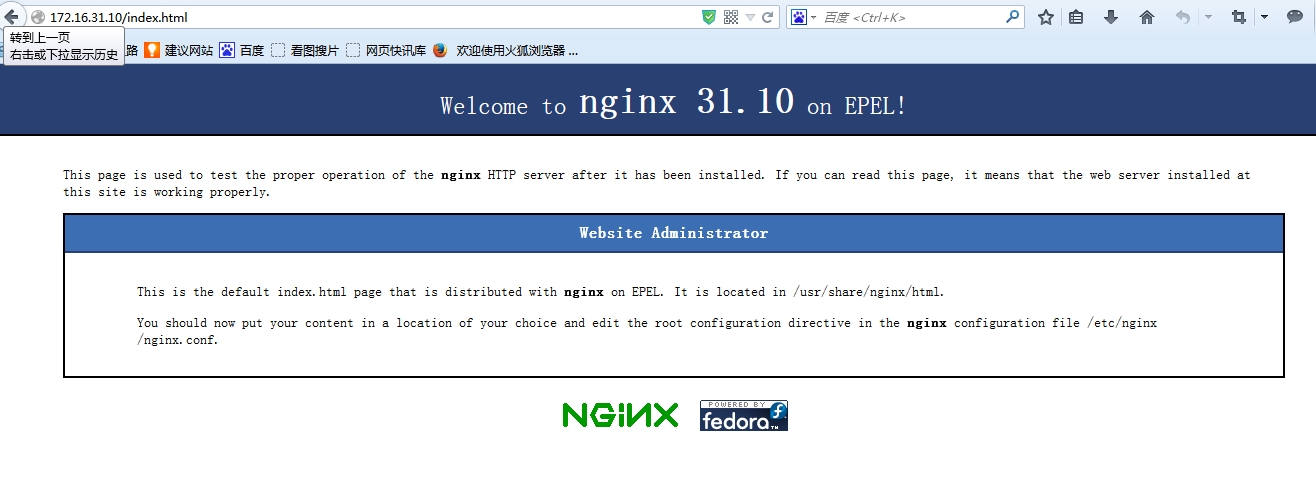

9. A simple example of using keepalived notifications to control nginx

First install nginx on nodes 1 and 2, then add the notify lines to keepalived.conf:

    vrrp_instance VI_1 {
        state MASTER
        interface eth0
        virtual_router_id 101
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 123456
        }
        virtual_ipaddress {
            172.16.31.22
        }
        notify_master "/etc/rc.d/init.d/nginx start"    # script to run when switching to master
        notify_backup "/etc/rc.d/init.d/nginx stop"
        notify_fault "/etc/rc.d/init.d/nginx stop"
    }

Node 2 gets the same configuration. Then, on both nodes:

    # service keepalived restart

nginx is now listening on port 80:

    LISTEN 0 128 *:80 *:*
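The three notify lines encode a simple mapping from VRRP state to nginx action. As a sketch, the same mapping can be written as one shell function; `nginx_action_for` is a hypothetical name used here only to make the mapping explicit.

```shell
# nginx_action_for STATE: print the init-script action this walkthrough
# attaches to each VRRP state (MASTER -> start, BACKUP or FAULT -> stop).
nginx_action_for() {
    case "$1" in
        MASTER)       echo start ;;
        BACKUP|FAULT) echo stop ;;
        *)            echo "unknown state: $1" >&2; return 1 ;;
    esac
}
```

A wrapper script could then run `/etc/rc.d/init.d/nginx "$(nginx_action_for MASTER)"`, which keeps the policy in one place if more states or services are added later.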

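Building on this, a shell command can be used to check the service:

    vrrp_script chk_nx {
        script "killall -0 nginx"
        interval 1      # interval between script runs
        weight -5       # priority change caused by the script result: -5 lowers the priority by 5, +10 raises it by 10
        fall 2
        rise 1
    }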
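`killall -0 nginx` sends signal 0, which delivers nothing and only reports whether a matching process exists. The `fall` and `rise` values then decide how many consecutive results are needed before the check changes state. The sketch below replays a sequence of results to illustrate that counting; the function name `track_state` is hypothetical, and starting in the "up" state is an assumption of this sketch, not a statement about keepalived's internals.

```shell
# track_state FALL RISE RESULT...: replay a sequence of check results
# (0 = success, anything else = failure) and print the final state,
# "up" or "down". The state flips to down after FALL consecutive failures
# and back to up after RISE consecutive successes.
track_state() {
    local fall="$1" rise="$2"
    shift 2
    local state=up ok=0 bad=0 r
    for r in "$@"; do
        if [ "$r" -eq 0 ]; then
            ok=$((ok + 1)); bad=0
            if [ "$state" = down ] && [ "$ok" -ge "$rise" ]; then state=up; fi
        else
            bad=$((bad + 1)); ok=0
            if [ "$state" = up ] && [ "$bad" -ge "$fall" ]; then state=down; fi
        fi
    done
    echo "$state"
}
```

With `fall 2` and `rise 1` as configured above, a single failed check is tolerated, two in a row mark the check as down, and one success brings it back.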

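Then add the following at the end of the vrrp_instance block in the configuration file:

    track_script {
        chk_nx      # calls the script defined above
    }

Do the same on node 2, then start the service on node 2.

Then start node 1. Because node 1 has a higher priority than node 2, it becomes the master.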
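To see why `weight -5` is enough to trigger a failover here, it helps to work out the priority after the weight is applied. The sketch below follows the rule described above (a negative weight lowers the priority while the check is failing, a positive weight raises it while the check is passing). The function name `effective_priority` is hypothetical.

```shell
# effective_priority BASE WEIGHT STATE: print the priority after applying
# a tracked script's weight. STATE is "up" or "down".
#   negative WEIGHT: applied (BASE + WEIGHT) while the check is down
#   positive WEIGHT: applied (BASE + WEIGHT) while the check is up
effective_priority() {
    local base="$1" weight="$2" state="$3"
    if [ "$weight" -lt 0 ] && [ "$state" = down ]; then
        echo $((base + weight))
    elif [ "$weight" -gt 0 ] && [ "$state" = up ]; then
        echo $((base + weight))
    else
        echo "$base"
    fi
}
```

With the values from this walkthrough, `effective_priority 100 -5 down` gives node 1 a priority of 95 when nginx is gone, which is below node 2's 99, so node 2 wins the next election, assuming the check on node 2 is passing at that moment.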