Introduction

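LNMT = Linux + Nginx + MySQL + Tomcat.

Tomcat is a free, open-source web application server and belongs to the class of lightweight application servers.

It is widely used in small and medium-sized systems where the number of concurrent users is modest, and it is the first choice for developing and debugging JSP programs.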

Architecture requirements

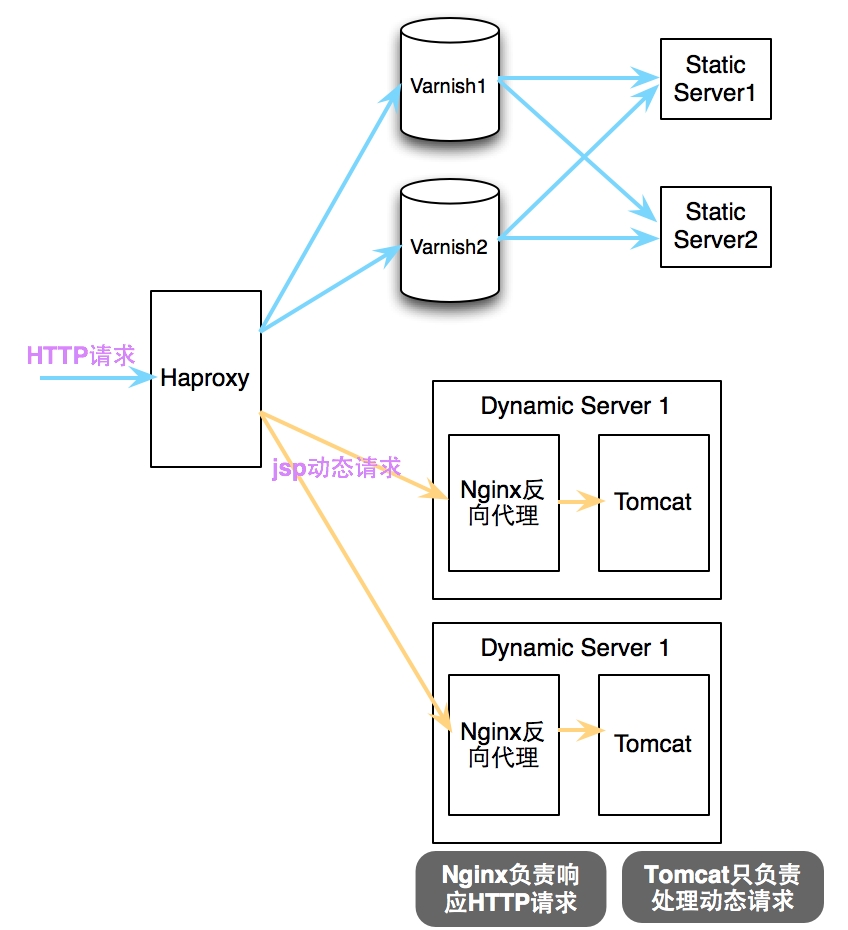

Basic architecture for handling dynamic JSP requests with Tomcat

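Notes: the back-end Tomcat parses dynamic JSP requests. To improve response performance, Nginx runs on the same host as a reverse proxy and simply forwards all requests to Tomcat.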

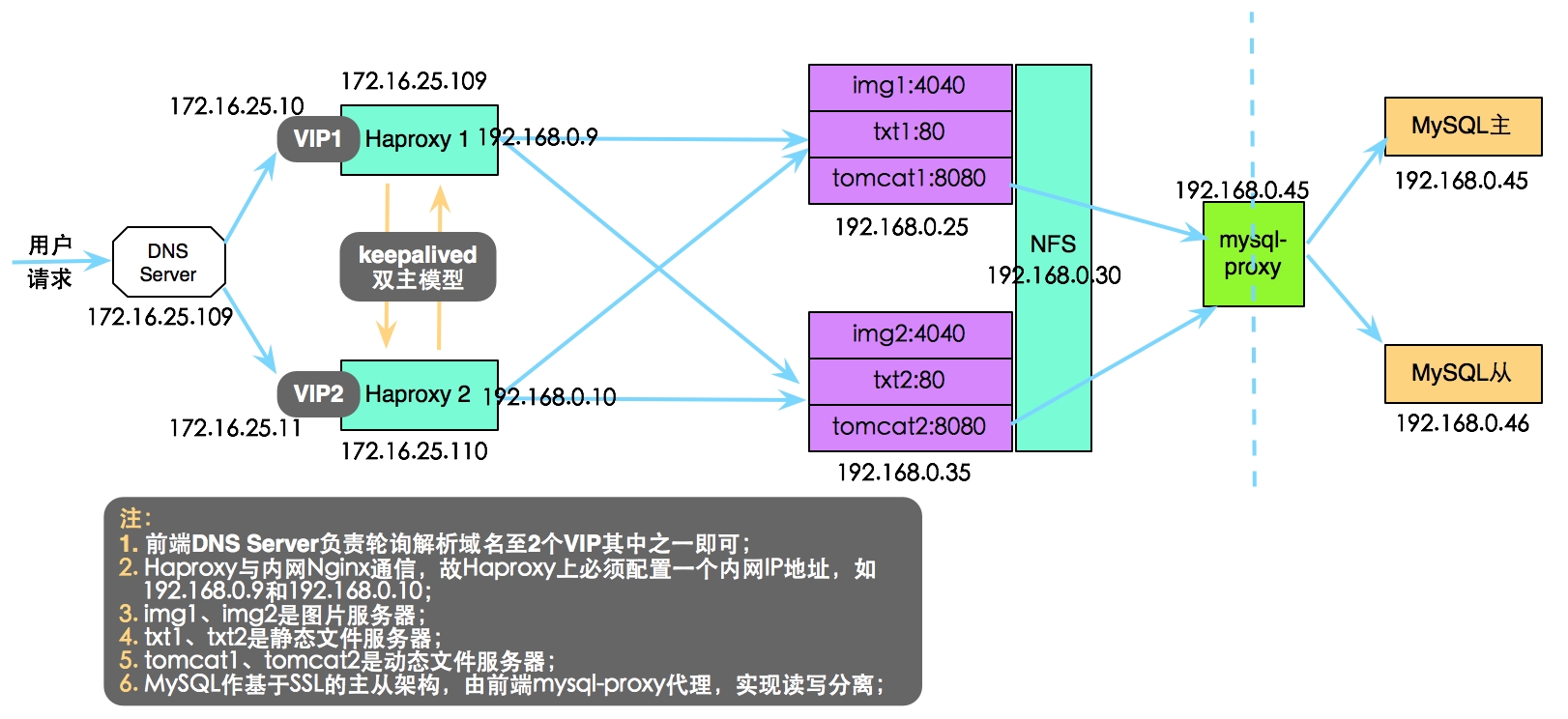

Complete LNMT architecture design

Notes: this post focuses on a single HAProxy instance in front of multiple back-end Tomcat servers.

Installation and configuration

Tomcat installation and configuration

Install the JDK

# rpm -ivh jdk-7u9-linux-x64.rpm

# vi /etc/profile.d/java.sh

export JAVA_HOME=/usr/java/latest

export PATH=$JAVA_HOME/bin:$PATH

# . /etc/profile.d/java.sh

Install Tomcat

# tar xf apache-tomcat-7.0.42.tar.gz -C /usr/local/

# cd /usr/local/

# ln -sv apache-tomcat-7.0.42/ tomcat

# vi /etc/profile.d/tomcat.sh

export CATALINA_HOME=/usr/local/tomcat

export PATH=$CATALINA_HOME/bin:$PATH

# . /etc/profile.d/tomcat.sh
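Before writing the service script, a quick sanity check can confirm that the two variables point at real installations. The sketch below is a minimal, hypothetical helper (`check_lnmt_env` is not part of Tomcat or the JDK); it assumes the layouts used above, with `java` under `$JAVA_HOME/bin` and `catalina.sh` under `$CATALINA_HOME/bin`.

```bash
# Hypothetical helper: check that JAVA_HOME and CATALINA_HOME point at usable installs.
# Arguments override the environment variables; returns 0 only if both executables exist.
check_lnmt_env() {
    local java_home="${1:-$JAVA_HOME}"
    local catalina_home="${2:-$CATALINA_HOME}"
    local ok=0
    if [ ! -x "$java_home/bin/java" ]; then
        echo "missing: $java_home/bin/java" >&2
        ok=1
    fi
    if [ ! -x "$catalina_home/bin/catalina.sh" ]; then
        echo "missing: $catalina_home/bin/catalina.sh" >&2
        ok=1
    fi
    return "$ok"
}

# Usage on the server, after sourcing both profile scripts:
#   check_lnmt_env && echo "environment OK"
```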

# Write the service script

# vi /etc/init.d/tomcat

#!/bin/sh

# Tomcat init script for Linux.

#

# chkconfig: 2345 96 14

# description: The Apache Tomcat servlet/JSP container.

# JAVA_OPTS='-Xms64m -Xmx128m'

JAVA_HOME=/usr/java/latest

CATALINA_HOME=/usr/local/tomcat

export JAVA_HOME CATALINA_HOME

case $1 in

start)

exec $CATALINA_HOME/bin/catalina.sh start ;;

stop)

exec $CATALINA_HOME/bin/catalina.sh stop;;

restart)

$CATALINA_HOME/bin/catalina.sh stop

sleep 2

exec $CATALINA_HOME/bin/catalina.sh start ;;

*)

echo "Usage: `basename $0` {start|stop|restart}"

exit 1

;;

esac

# chmod +x /etc/init.d/tomcat

Configure Tomcat

# cd /usr/local/tomcat/conf

# vi server.xml

<?xml version='1.0' encoding='utf-8'?>

<Server port="8005" shutdown="SHUTDOWN">

<Listener className="org.apache.catalina.core.AprLifecycleListener" SSLEngine="on" />

<Listener className="org.apache.catalina.core.JasperListener" />

<Listener className="org.apache.catalina.core.JreMemoryLeakPreventionListener" />

<Listener className="org.apache.catalina.mbeans.GlobalResourcesLifecycleListener" />

<Listener className="org.apache.catalina.core.ThreadLocalLeakPreventionListener" />

<GlobalNamingResources>

<Resource name="UserDatabase" auth="Container"

type="org.apache.catalina.UserDatabase"

description="User database that can be updated and saved"

factory="org.apache.catalina.users.MemoryUserDatabaseFactory"

pathname="conf/tomcat-users.xml" />

</GlobalNamingResources>

<Service name="Catalina">

<!-- HTTP connector listening on port 9000 -->

<Connector port="9000" protocol="HTTP/1.1"

connectionTimeout="20000"

redirectPort="8443" />

<Connector port="8009" protocol="AJP/1.3" redirectPort="8443" />

<Engine name="Catalina" defaultHost="localhost">

<Realm className="org.apache.catalina.realm.LockOutRealm">

<Realm className="org.apache.catalina.realm.UserDatabaseRealm"

resourceName="UserDatabase"/>

</Realm>

<!-- New Host for xxrenzhe.lnmmp.com, with its own Context -->

<Host name="xxrenzhe.lnmmp.com" appBase="webapps"

unpackWARs="true" autoDeploy="true">

<Context path="" docBase="lnmmpapp" /> <!-- the application directory is webapps/lnmmpapp -->

<Valve className="org.apache.catalina.valves.AccessLogValve" directory="logs"

prefix="lnmmp_access_log." suffix=".txt"

pattern="%h %l %u %t &quot;%r&quot; %s %b" />

</Host>

<Host name="localhost" appBase="webapps"

unpackWARs="true" autoDeploy="true">

<Valve className="org.apache.catalina.valves.AccessLogValve" directory="logs"

prefix="localhost_access_log." suffix=".txt"

pattern="%h %l %u %t &quot;%r&quot; %s %b" />

</Host>

</Engine>

</Service>

</Server>

# Create the application directories

# cd /usr/local/tomcat/webapps/

# mkdir -pv lnmmpapp/WEB-INF/{classes,lib}

# cd lnmmpapp

# vi index.jsp # write the home page

<%@ page language="java" %>

<html>

<head><title>Tomcat1</title></head> <%-- on the Tomcat2 host, change this to Tomcat2 --%>

<body>

<h1><font color="red">Tomcat1.lnmmp.com</font></h1> <%-- on the Tomcat2 host, change this to Tomcat2.lnmmp.com and the color to blue --%>

<table align="center" border="1">

<tr>

<td>Session ID</td>

<% session.setAttribute("lnmmp.com","lnmmp.com"); %>

<td><%= session.getId() %></td>

</tr>

<tr>

<td>Created on</td>

<td><%= session.getCreationTime() %></td>

</tr>

</table>

</body>

</html>

Start the Tomcat service

# chkconfig --add tomcat

# service tomcat start

Nginx configuration

For installing Nginx, see the earlier blog post "如何測試Nginx的高性能" ("How to test Nginx's high performance").

Configure Nginx

# vi /etc/nginx/nginx.conf

worker_processes 2;

error_log /var/log/nginx/nginx.error.log;

pid /var/run/nginx.pid;

events {

worker_connections 1024;

}

http {

include mime.types;

default_type application/octet-stream;

log_format main '$remote_addr - $remote_user [$time_local] "$request" '

'$status $body_bytes_sent "$http_referer" '

'"$http_user_agent" "$http_x_forwarded_for"';

sendfile on;

keepalive_timeout 65;

fastcgi_cache_path /www/cache levels=1:2 keys_zone=fcgicache:10m inactive=5m;

server { # handles image requests forwarded by the front end

listen 4040;

server_name xxrenzhe.lnmmp.com;

access_log /var/log/nginx/nginx-img.access.log main;

root /www/lnmmp.com;

valid_referers none blocked xxrenzhe.lnmmp.com *.lnmmp.com; # basic anti-hotlinking policy

if ($invalid_referer) {

rewrite ^/ http://xxrenzhe.lnmmp.com/404.html;

}

}

server {

listen 80; # handles static requests forwarded by the front end

server_name xxrenzhe.lnmmp.com;

access_log /var/log/nginx/nginx-static.access.log main;

location / {

root /www/lnmmp.com;

index index.php index.html index.htm;

}

gzip on; # enable compressed transfer of static files

gzip_comp_level 6;

gzip_buffers 16 8k;

gzip_http_version 1.1;

gzip_types text/plain text/css application/x-javascript text/xml application/xml;

gzip_disable msie6;

}

server {

listen 8080;

server_name xxrenzhe.lnmmp.com;

access_log /var/log/nginx/nginx-tomcat.access.log main;

location / {

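proxy_pass http://127.0.0.1:9000; # forward all dynamic requests to the local Tomcat

proxy_set_header Host $host; # keep the original Host header so Tomcat matches the xxrenzhe.lnmmp.com <Host>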

}

}

}

Start the service

# service nginx start
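The `valid_referers none blocked xxrenzhe.lnmmp.com *.lnmmp.com` line in the image server decides when `$invalid_referer` is set. The bash function below is a simplified model of that decision, written for illustration only: `referer_status` is a hypothetical name, and the host-matching details (the host ends at the first `/` or `:`, is compared case-insensitively, and `*.lnmmp.com` matches subdomains only) are assumptions about nginx's behaviour, not a copy of its code.

```bash
# Simplified, hypothetical model of:
#   valid_referers none blocked xxrenzhe.lnmmp.com *.lnmmp.com;
# Prints "valid" or "invalid" for a given Referer value.
referer_status() {
    local referer="$1" rest host
    # "none": no Referer header (modelled here as an empty string)
    if [ -z "$referer" ]; then echo valid; return; fi
    case "$referer" in
        http://*)  rest="${referer#http://}" ;;
        https://*) rest="${referer#https://}" ;;
        # "blocked": header present but not starting with http:// or https://
        *) echo valid; return ;;
    esac
    # Host part ends at the first "/" or ":"; compare in lower case
    host="${rest%%[/:]*}"
    host="$(printf '%s' "$host" | tr '[:upper:]' '[:lower:]')"
    case "$host" in
        xxrenzhe.lnmmp.com|*.lnmmp.com) echo valid ;;
        *) echo invalid ;;
    esac
}

# Usage: referer_status "http://evil.example.com/page.html"
```

Requests classified as `invalid` are the ones that the `rewrite` in the image server redirects to `/404.html`.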

HAProxy installation and configuration

# yum -y install haproxy

# vi /etc/haproxy/haproxy.cfg

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 4000

user haproxy

group haproxy

daemon

defaults

mode http

log global

option httplog

option dontlognull

option http-server-close

option forwardfor except 127.0.0.0/8

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 30000

listen stats # HAProxy statistics page

mode http

bind 0.0.0.0:1080

stats enable

stats hide-version

stats uri /haproxyadmin?stats

stats realm Haproxy\ Statistics

stats auth admin:admin

stats admin if TRUE

frontend http-in

bind *:80

mode http

log global

option httpclose

option logasap

option dontlognull

capture request header Host len 20

capture request header Referer len 60

acl url_img path_beg -i /images

acl url_img path_end -i .jpg .jpeg .gif .png

acl url_dynamic path_end -i .jsp .do

use_backend img_servers if url_img # send image requests to the image servers

use_backend dynamic_servers if url_dynamic # send dynamic JSP requests to the Tomcat servers

default_backend static_servers # send all other (static) requests to the static servers

backend img_servers

balance roundrobin

server img-srv1 192.168.0.25:4040 check maxconn 6000

server img-srv2 192.168.0.35:4040 check maxconn 6000

backend static_servers

cookie node insert nocache

option httpchk HEAD /health_check.html

server static-srv1 192.168.0.25:80 check maxconn 6000 cookie static-srv1

server static-srv2 192.168.0.35:80 check maxconn 6000 cookie static-srv2

backend dynamic_servers

balance roundrobin

server tomcat1 192.168.0.25:8080 check maxconn 1000

server tomcat2 192.168.0.35:8080 check maxconn 1000

Start the service

# service haproxy start
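The `http-in` frontend picks a backend from its ACLs, and `use_backend` rules are evaluated in the order written, so a path that matches both `url_img` and `url_dynamic` goes to the image servers. The hypothetical `route_request` function below models those rules in bash. It takes only the URL path (HAProxy's `path` fetch excludes the query string) and is a sketch, not HAProxy's matcher.

```bash
# Hypothetical model of the http-in frontend's backend selection.
# $1: request path without the query string. Prints the backend name.
route_request() {
    local lower
    # The -i flag makes the ACL matches case-insensitive
    lower="$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')"
    # acl url_img path_beg -i /images  OR  path_end -i .jpg .jpeg .gif .png
    case "$lower" in
        /images*|*.jpg|*.jpeg|*.gif|*.png) echo img_servers; return ;;
    esac
    # acl url_dynamic path_end -i .jsp .do
    case "$lower" in
        *.jsp|*.do) echo dynamic_servers; return ;;
    esac
    # default_backend
    echo static_servers
}

# Usage: route_request /index.jsp
```

Under these rules the bare path `/` falls through to `static_servers`, so the Tomcat test page has to be requested explicitly as `/index.jsp`.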

Local DNS resolution

xxrenzhe.lnmmp.com A 172.16.25.109 # point this at the HAProxy IP address
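If no local DNS server is available, a test client can get the same mapping from its hosts file instead. The helper below is a hypothetical sketch of that alternative (both the function name and the hosts-file approach are assumptions, not part of the original setup). It replaces any existing entry for the name, so repeated runs do not pile up duplicates.

```bash
# Hypothetical helper: map a host name to an IP in a hosts-format file, idempotently.
# $1: file (e.g. /etc/hosts), $2: IP address, $3: host name
set_hosts_entry() {
    local file="$1" ip="$2" name="$3" tmp
    tmp="$(mktemp)"
    # Keep comment lines, and any line whose name fields do not include $name
    awk -v n="$name" '
        /^[ \t]*#/ { print; next }
        { keep = 1; for (i = 2; i <= NF; i++) if ($i == n) keep = 0; if (keep) print }
    ' "$file" > "$tmp"
    printf '%s %s\n' "$ip" "$name" >> "$tmp"
    # Write back in place so the original file's ownership and permissions are kept
    cat "$tmp" > "$file"
    rm -f "$tmp"
}

# Usage (as root on the client):
#   set_hosts_entry /etc/hosts 172.16.25.109 xxrenzhe.lnmmp.com
```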

Access verification

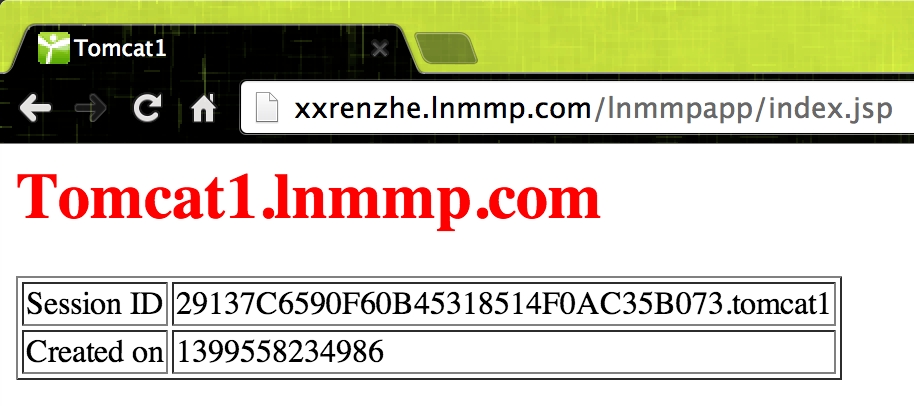

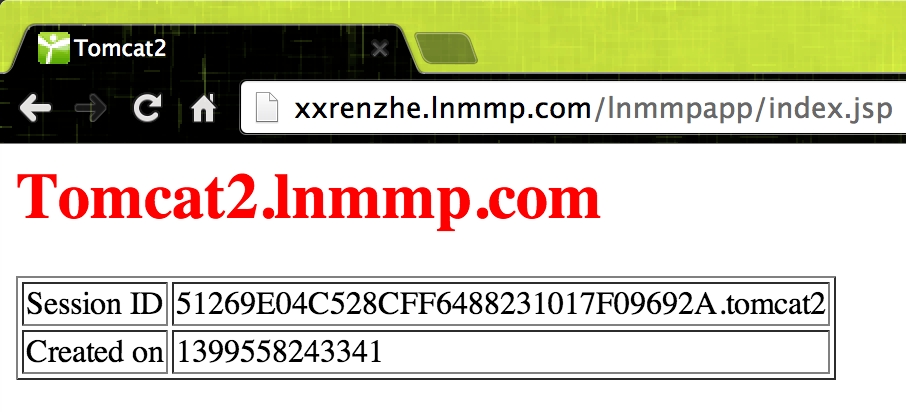

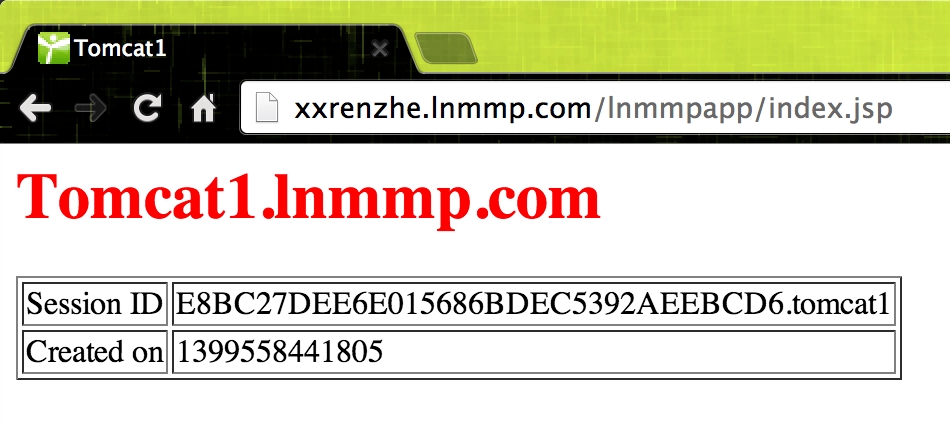

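Notes: because the front-end HAProxy schedules dynamic requests with the roundrobin algorithm, each refresh is dispatched to the next Tomcat node in turn, and a different session is obtained every time.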
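This behaviour follows directly from roundrobin: with two equally weighted servers and no persistence, consecutive requests alternate between them, and because each Tomcat keeps its own in-memory sessions, landing on the other node creates a new session. The sketch below is a hypothetical model of that alternation; starting with `tomcat1` is an assumption, since HAProxy's actual starting point may differ.

```bash
# Hypothetical model of "balance roundrobin" with equal weights and no cookies:
# request i (0-based) goes to server i mod N.
servers=(tomcat1 tomcat2)

pick_roundrobin() {
    local i="$1"
    echo "${servers[$(( i % ${#servers[@]} ))]}"
}

# Four refreshes alternate tomcat1, tomcat2, tomcat1, tomcat2
for i in 0 1 2 3; do
    printf 'request %d -> %s\n' "$((i + 1))" "$(pick_roundrobin "$i")"
done
```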

Implementing session binding

Dispatch every request from the same user to the same back-end Tomcat, so that a refresh does not lose the session.

Modify the Tomcat configuration

# vi /usr/local/tomcat/conf/server.xml # change the following line, adding the jvmRoute attribute

<Engine name="Catalina" defaultHost="localhost" jvmRoute="tomcat1"> <!-- on Tomcat2, use tomcat2 -->
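With `jvmRoute` set, Tomcat appends a dot and the route name to each session ID it issues (for example an ID ending in `.tomcat1`), so the session ID shown on the test page also tells you which node created the session. The hypothetical helper below extracts that suffix; the session IDs used with it are made-up examples.

```bash
# Hypothetical helper: print the jvmRoute suffix of a Tomcat session ID,
# or an empty line if the ID carries no route.
session_route() {
    local sid="$1"
    case "$sid" in
        *.*) echo "${sid##*.}" ;;   # text after the last dot
        *)   echo "" ;;
    esac
}

# Usage with a made-up ID: session_route 5A3C9D1E2F.tomcat1
```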

Modify the HAProxy configuration

# vi /etc/haproxy/haproxy.cfg # add cookie-based binding for the dynamic back-end nodes

backend dynamic_servers

cookie node insert nocache

balance roundrobin

server tomcat1 192.168.0.25:8080 check maxconn 1000 cookie tomcat1

server tomcat2 192.168.0.35:8080 check maxconn 1000 cookie tomcat2

Access verification

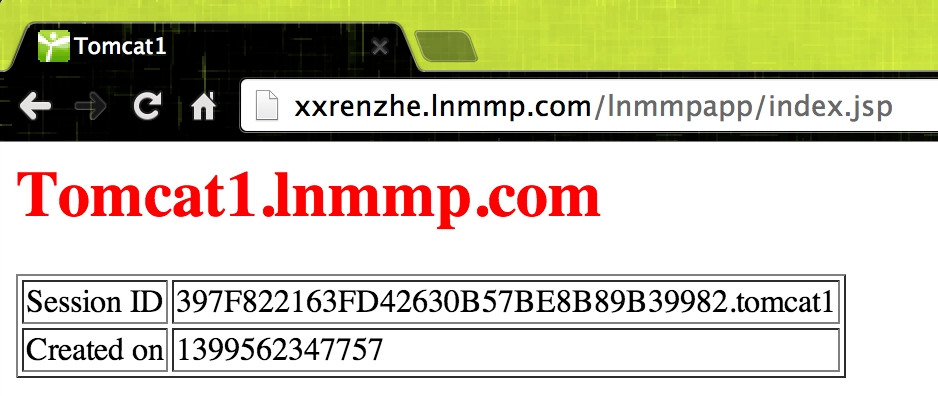

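Notes: after the first successful visit, refreshing again no longer changes the assigned Tomcat node or the session information, which shows that session binding works.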
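The binding verified above can be modelled in a few lines. This is a hypothetical bash sketch (bash 4+ for the associative array), not HAProxy's implementation: a `node` cookie naming a live server pins the request to it; without a cookie, or when that server has been marked down by health checks, the request is load-balanced with roundrobin, matching HAProxy's default of redispatching requests whose cookie points at a dead server. Each `server` line therefore needs its own distinct `cookie` value.

```bash
# Hypothetical model of "cookie node insert nocache" for backend dynamic_servers.
# It mirrors the decision order only; it is not HAProxy's code.
servers=(tomcat1 tomcat2)
declare -A server_up=([tomcat1]=1 [tomcat2]=1)
rr_next=0
PICKED=""

# $1: value of the "node" cookie sent by the browser (empty on the first visit).
# Sets PICKED to the chosen server (a global, so rr_next state survives between calls).
# HAProxy would also send "Set-Cookie: node=<server>" when the browser did not
# already hold a valid cookie for that server.
pick_server() {
    local cookie="$1" s n
    PICKED=""
    # A cookie naming a live server pins the request to that server
    if [ -n "$cookie" ] && [ "${server_up[$cookie]:-0}" = 1 ]; then
        PICKED="$cookie"
        return 0
    fi
    # No cookie, or its server is down: roundrobin over the live servers
    for (( n = 0; n < ${#servers[@]}; n++ )); do
        s="${servers[rr_next]}"
        rr_next=$(( (rr_next + 1) % ${#servers[@]} ))
        if [ "${server_up[$s]}" = 1 ]; then
            PICKED="$s"
            return 0
        fi
    done
    return 1
}

# Usage sketch:
#   pick_server "";        echo "$PICKED"   # first visit: chosen by roundrobin
#   pick_server "$PICKED"; echo "$PICKED"   # refresh with the cookie: same node
#   server_up[tomcat1]=0; pick_server tomcat1; echo "$PICKED"   # node down: redispatched
```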

Implementing session persistence

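Tomcat supports session clustering, which replicates all session information among the Tomcat servers. When one back-end Tomcat server goes down and HAProxy reschedules the user's requests, the user's original session information still exists on the remaining healthy Tomcat servers.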

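A session cluster is suitable only while the number of Tomcat servers is small (generally fewer than 10); beyond that, the replication cost becomes too high.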
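The size limit comes from the all-to-all replication done by `DeltaManager`: every session change on one node is sent to each of the other N-1 nodes, so if every node handles the same number of changes, cluster-wide replication traffic grows roughly with N(N-1). The arithmetic below is a rough, hypothetical cost model; the figure of 100 changes per second per node is purely illustrative.

```bash
# Rough, hypothetical cost model for all-to-all session replication.
# $1: number of Tomcat nodes, $2: session changes per second handled by EACH node
replication_msgs_per_sec() {
    local nodes="$1" changes_per_node="$2"
    echo $(( nodes * changes_per_node * (nodes - 1) ))
}

# Prints 200, 1200, 9000 and 38000 messages/s for 2, 4, 10 and 20 nodes
for n in 2 4 10 20; do
    printf '%2d nodes: %d replication messages/s\n' "$n" "$(replication_msgs_per_sec "$n" 100)"
done
```

Doubling the node count roughly quadruples the replication traffic, which is why the cluster is kept small.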

Configuration

# vi /usr/local/tomcat/conf/server.xml # full configuration

<?xml version='1.0' encoding='utf-8'?>

<Server port="8005" shutdown="SHUTDOWN">

<Listener className="org.apache.catalina.core.AprLifecycleListener" SSLEngine="on" />

<Listener className="org.apache.catalina.core.JasperListener" />

<Listener className="org.apache.catalina.core.JreMemoryLeakPreventionListener" />

<Listener className="org.apache.catalina.mbeans.GlobalResourcesLifecycleListener" />

<Listener className="org.apache.catalina.core.ThreadLocalLeakPreventionListener" />

<GlobalNamingResources>

<Resource name="UserDatabase" auth="Container"

type="org.apache.catalina.UserDatabase"

description="User database that can be updated and saved"

factory="org.apache.catalina.users.MemoryUserDatabaseFactory"

pathname="conf/tomcat-users.xml" />

</GlobalNamingResources>

<Service name="Catalina">

<Connector port="9000" protocol="HTTP/1.1"

connectionTimeout="20000"

redirectPort="8443" />

<Connector port="8009" protocol="AJP/1.3" redirectPort="8443" />

<Engine name="Catalina" defaultHost="localhost" jvmRoute="tomcat1"> <!-- on the Tomcat2 host, use tomcat2 -->

<!-- Cluster configuration -->

<Cluster className="org.apache.catalina.ha.tcp.SimpleTcpCluster"

channelSendOptions="8">

<!-- Use DeltaManager as the cluster session manager -->

<Manager className="org.apache.catalina.ha.session.DeltaManager"

expireSessionsOnShutdown="false"

notifyListenersOnReplication="true"/>

<!-- Communication channel between the cluster nodes -->

<Channel className="org.apache.catalina.tribes.group.GroupChannel">

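<!-- McastService discovers the cluster members; address is the multicast address used for membership heartbeats (session data itself goes over TCP to each node's Receiver) -->

<Membership className="org.apache.catalina.tribes.membership.McastService"

address="228.25.25.4"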

port="45564"

frequency="500"

dropTime="3000"/>

<!-- NioReceiver accepts data from the other nodes; on the Tomcat2 host set address to 192.168.0.35 -->

<Receiver className="org.apache.catalina.tribes.transport.nio.NioReceiver"

address="192.168.0.25"

port="4000"

autoBind="100"

selectorTimeout="5000"

maxThreads="6"/>

<!-- Sender that transmits replication data -->

<Sender className="org.apache.catalina.tribes.transport.ReplicationTransmitter">

<Transport className="org.apache.catalina.tribes.transport.nio.PooledParallelSender"/>

</Sender>

<Interceptor className="org.apache.catalina.tribes.group.interceptors.TcpFailureDetector"/>

<Interceptor className="org.apache.catalina.tribes.group.interceptors.MessageDispatch15Interceptor"/>

</Channel>

<Valve className="org.apache.catalina.ha.tcp.ReplicationValve"

filter=""/>

<Valve className="org.apache.catalina.ha.session.JvmRouteBinderValve"/>

<Deployer className="org.apache.catalina.ha.deploy.FarmWarDeployer"

tempDir="/tmp/war-temp/"

deployDir="/tmp/war-deploy/"

watchDir="/tmp/war-listen/"

watchEnabled="false"/>

<ClusterListener className="org.apache.catalina.ha.session.JvmRouteSessionIDBinderListener"/>

<ClusterListener className="org.apache.catalina.ha.session.ClusterSessionListener"/>

</Cluster>

<Realm className="org.apache.catalina.realm.LockOutRealm">

<Realm className="org.apache.catalina.realm.UserDatabaseRealm"

resourceName="UserDatabase"/>

</Realm>

<Host name="xxrenzhe.lnmmp.com" appBase="webapps"

unpackWARs="true" autoDeploy="true">

<Context path="" docBase="lnmmpapp" />

<Valve className="org.apache.catalina.valves.AccessLogValve" directory="logs"

prefix="lnmmp_access_log." suffix=".txt"

pattern="%h %l %u %t &quot;%r&quot; %s %b" />

</Host>

<Host name="localhost" appBase="webapps"

unpackWARs="true" autoDeploy="true">

<Valve className="org.apache.catalina.valves.AccessLogValve" directory="logs"

prefix="localhost_access_log." suffix=".txt"

pattern="%h %l %u %t &quot;%r&quot; %s %b" />

</Host>

</Engine>

</Service>

</Server>
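Because this listing must be edited differently on each host (`jvmRoute` and the `Receiver` address), a quick check helps catch a node that was copied without being changed. The function below is a hypothetical sketch using only grep and sed; it assumes each attribute appears once in the file, as in this listing, and it is not a general XML parser.

```bash
# Hypothetical check: print "<jvmRoute> <Receiver address>" found in a server.xml.
node_identity() {
    local xml="$1" route addr
    # First jvmRoute="..." attribute (on the <Engine> element)
    route="$(grep -o 'jvmRoute="[^"]*"' "$xml" | head -n 1 | sed 's/^jvmRoute="\(.*\)"$/\1/')"
    # address="..." inside the <Receiver ... /> element, skipping the multicast address
    addr="$(sed -n '/<Receiver/,/\/>/p' "$xml" | grep -o 'address="[^"]*"' | head -n 1 | sed 's/^address="\(.*\)"$/\1/')"
    printf '%s %s\n' "$route" "$addr"
}

# Usage: node_identity /usr/local/tomcat/conf/server.xml
# Expected per the listing: "tomcat1 192.168.0.25" on Tomcat1, "tomcat2 192.168.0.35" on Tomcat2
```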

# cd /usr/local/tomcat/webapps/lnmmpapp/WEB-INF/

# cp /usr/local/tomcat/conf/web.xml .

# vi web.xml # add the following element directly inside <web-app>, not nested in any other element

<distributable/>

Check the log

# tailf /usr/local/tomcat/logs/catalina.out

May 08, 2014 11:08:13 PM org.apache.catalina.ha.tcp.SimpleTcpCluster memberAdded

INFO: Replication member added:org.apache.catalina.tribes.membership.MemberImpl[tcp://{192, 168, 0, 35}:4000,{192, 168, 0, 35},4000, alive=1029, securePort=-1, UDP Port=-1, id={106 35 -62 -54 -28 61 74 -98 -86 -11 -69 104 28 -114 32 -69 }, payload={}, command={}, domain={}, ]

# If you see messages like the above, the session cluster is active: tomcat1 has detected the tomcat2 node

Access verification

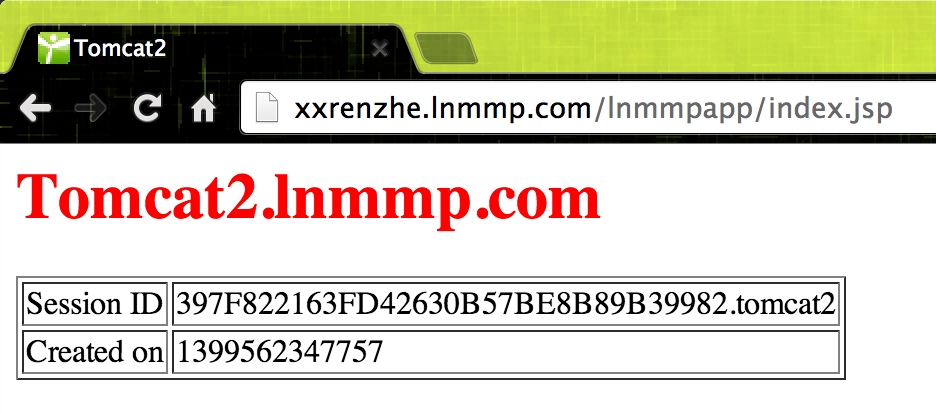

First visit

Then stop the nginx service on the tomcat1 host (service nginx stop) and visit again.

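Notes: although the tomcat1 failure caused the user's request to be dispatched to the tomcat2 node, the session ID did not change. Every node in the session cluster holds the global session information, so the user's access continues without interruption.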