Crawling novels from a novel website and saving them to a database

Step 1: Fetch the novel content

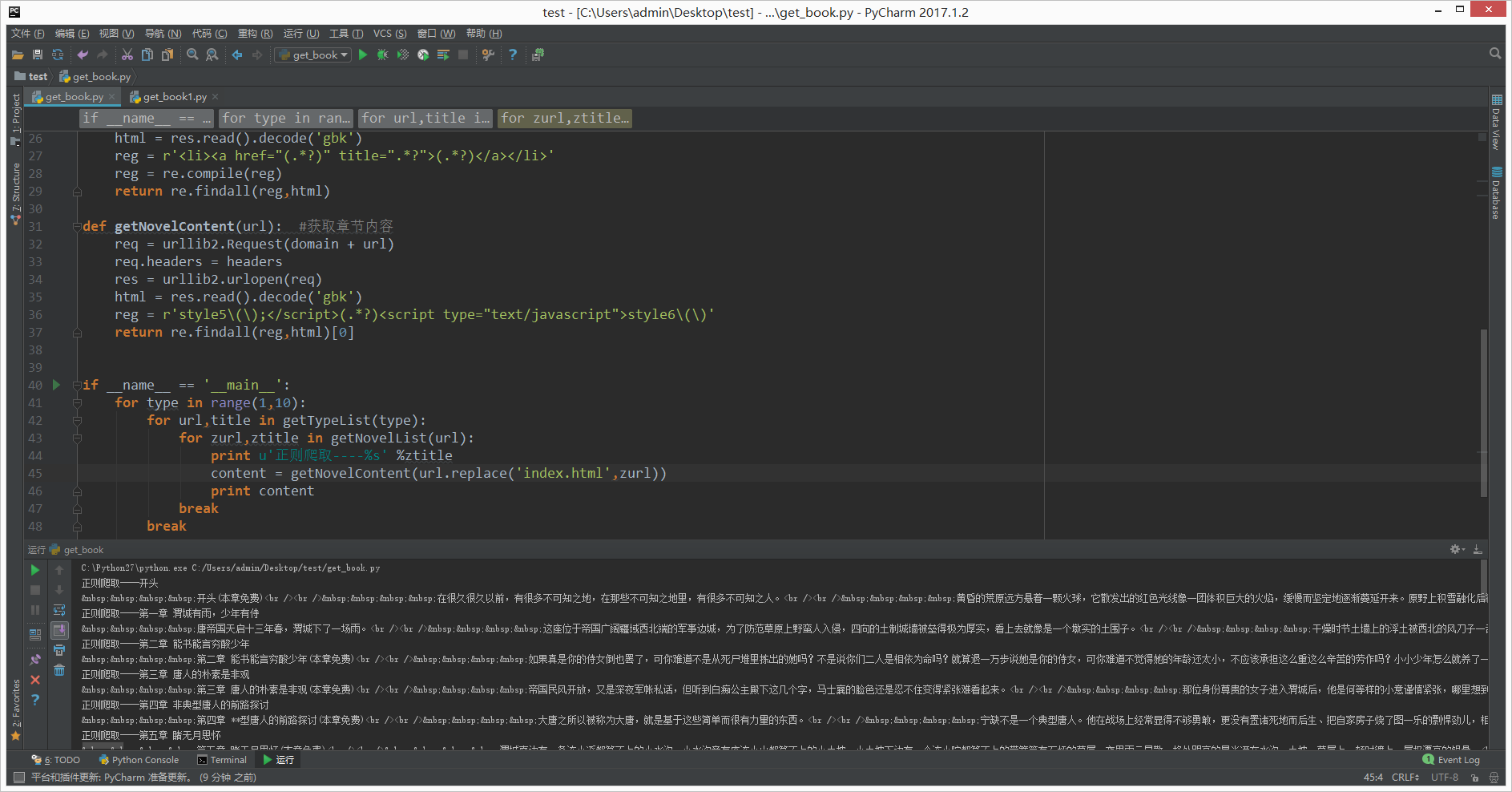
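The script in this step pulls links out of the HTML with non-greedy regex groups and then builds each chapter URL by swapping `index.html` for the chapter's file name. Here is a minimal offline sketch of that idea. The sample markup and paths are made up for illustration (the real pages may differ), and it runs on Python 3 without touching the network.

```python
import re

# Hypothetical sample markup, shaped like what the script's patterns expect.
SAMPLE_INDEX = (
    '<a href="/book/0/1/index.html" target="_blank">Novel A</a>'
    '<a href="/book/0/2/index.html" target="_blank">Novel B</a>'
)
SAMPLE_CHAPTERS = (
    '<li><a href="100.html" title="Chapter 1">Chapter 1</a></li>'
    '<li><a href="101.html" title="Chapter 2">Chapter 2</a></li>'
)

# Same patterns as the script; (.*?) is non-greedy, so each group stops
# at the first closing quote or tag instead of swallowing the whole page.
NOVEL_RE = re.compile(r'<a href="(/book/.*?)" target="_blank">(.*?)</a>')
CHAPTER_RE = re.compile(r'<li><a href="(.*?)" title=".*?">(.*?)</a></li>')

# With two groups in the pattern, findall returns a list of (url, title) tuples.
novels = NOVEL_RE.findall(SAMPLE_INDEX)
chapters = CHAPTER_RE.findall(SAMPLE_CHAPTERS)

# Chapter links are relative to the novel's index page, so the script
# replaces 'index.html' with the chapter file name.
novel_url, novel_title = novels[0]
chapter_urls = [novel_url.replace('index.html', href) for href, _ in chapters]

print(novels)
print(chapter_urls)
```

Because `findall` returns tuples, the `for url, title in ...` loops in the script can unpack each match directly.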

    #!/usr/bin/python
    # -*- coding: UTF-8 -*-
    # Python 2 script (urllib2, print statement)
    import re
    import urllib2

    domain = 'http://www.quanshu.net'
    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 6.3; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36"
    }

    def getTypeList(pn=1):  # Get the novel list for one category page
        req = urllib2.Request('http://www.quanshu.net/map/%s.html' % pn)  # Build the request object
        req.headers = headers  # Replace all request headers
        # req.add_header()  # Add a single header
        res = urllib2.urlopen(req)  # Send the request
        html = res.read().decode('gbk')  # Decode the GBK bytes into Unicode
        reg = r'<a href="(/book/.*?)" target="_blank">(.*?)</a>'
        reg = re.compile(reg)  # Precompile for faster matching; findall returns a list
        return re.findall(reg, html)

    def getNovelList(url):  # Get the chapter list of one novel
        req = urllib2.Request(domain + url)
        req.headers = headers
        res = urllib2.urlopen(req)
        html = res.read().decode('gbk')
        reg = r'<li><a href="(.*?)" title=".*?">(.*?)</a></li>'
        reg = re.compile(reg)
        return re.findall(reg, html)

    def getNovelContent(url):  # Get the content of one chapter
        req = urllib2.Request(domain + url)
        req.headers = headers
        res = urllib2.urlopen(req)
        html = res.read().decode('gbk')
        # The chapter text sits between the style5() and style6() script calls
        reg = r'style5\(\);</script>(.*?)<script type="text/javascript">style6\(\)'
        return re.findall(reg, html)[0]

    if __name__ == '__main__':
        for sort in range(1, 10):
            for url, title in getTypeList(sort):
                for zurl, ztitle in getNovelList(url):
                    print u'Crawling with regex----%s' % ztitle
                    content = getNovelContent(url.replace('index.html', zurl))
                    print content
                    break  # Only fetch the first chapter
                break  # Only fetch the first novel of each category

After running it, the result is as follows:

Step 2: Store the data in the database

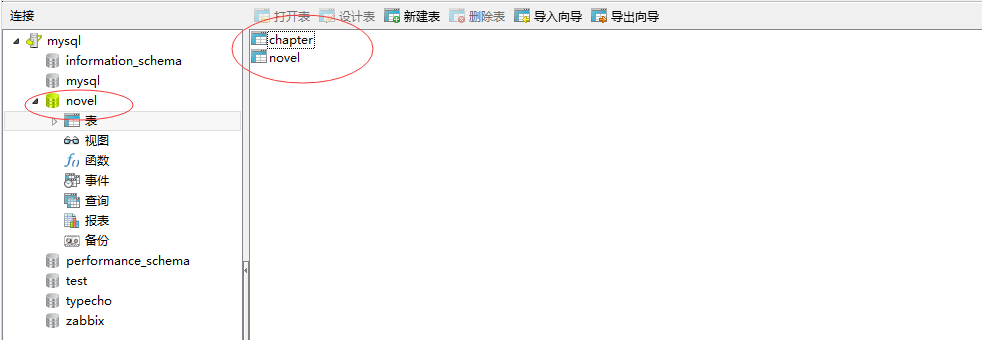

1. Design the database

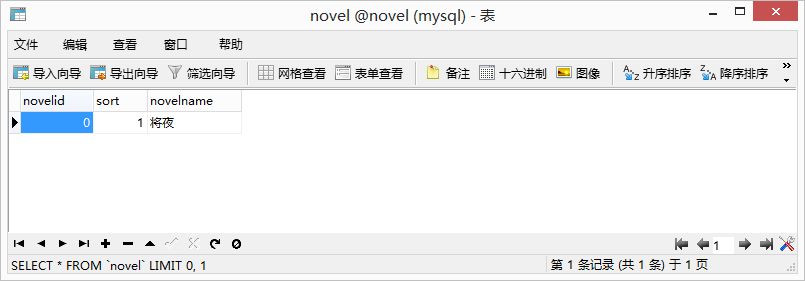

1.1 Create the database: novel

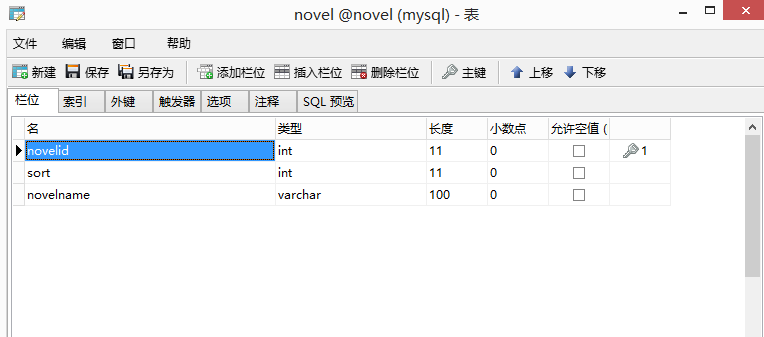

1.2 Design the table: novel

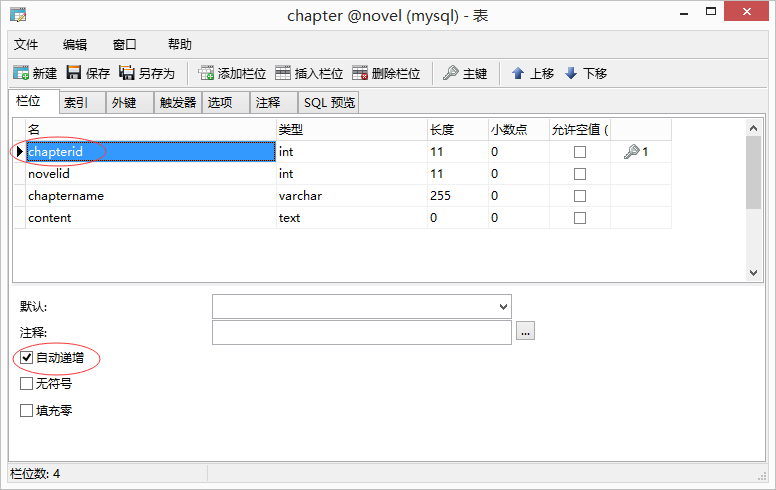

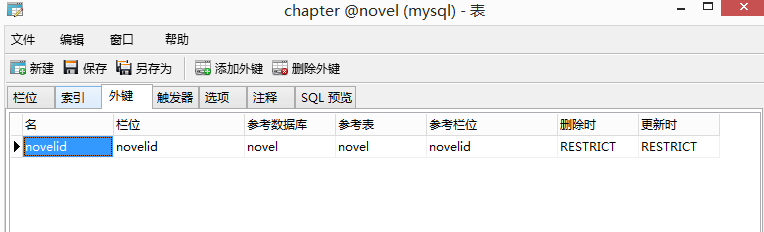

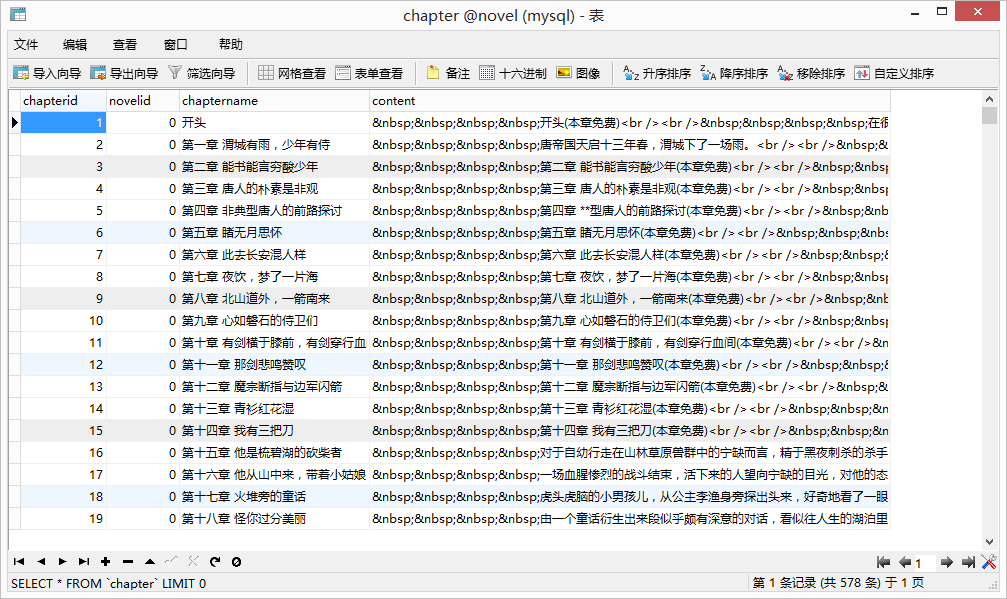

1.3 Design the table: chapter

Also set a foreign key on this table.
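The two-table layout can be sketched in code as well. The example below is only an approximation under stated assumptions: it uses Python's built-in `sqlite3` as a stand-in for MySQL so it runs anywhere, the `id` primary-key columns and all column types are hypothetical, and only `sort`, `novelname`, `novelid`, `chaptername` and `content` come from the script in this post.

```python
import sqlite3

# In-memory SQLite database as a stand-in for the MySQL 'novel' database.
conn = sqlite3.connect(':memory:')
conn.execute('PRAGMA foreign_keys = ON')  # SQLite only enforces foreign keys when enabled

conn.executescript("""
CREATE TABLE novel (
    id        INTEGER PRIMARY KEY AUTOINCREMENT,  -- hypothetical key column
    sort      INTEGER,
    novelname TEXT
);
CREATE TABLE chapter (
    id          INTEGER PRIMARY KEY AUTOINCREMENT,  -- hypothetical key column
    novelid     INTEGER NOT NULL REFERENCES novel(id),
    chaptername TEXT,
    content     TEXT
);
""")

# Insert a novel, then a chapter that points at it through novelid.
cur = conn.execute('INSERT INTO novel (sort, novelname) VALUES (?, ?)', (1, 'Novel A'))
novel_id = cur.lastrowid
conn.execute(
    'INSERT INTO chapter (novelid, chaptername, content) VALUES (?, ?, ?)',
    (novel_id, 'Chapter 1', 'text'),
)

# A chapter that references a missing novel is rejected by the foreign key.
try:
    conn.execute(
        'INSERT INTO chapter (novelid, chaptername, content) VALUES (?, ?, ?)',
        (999, 'Orphan', 'text'),
    )
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True

print(novel_id, orphan_rejected)
```

The foreign key is what ties every chapter row to an existing novel row, which is why the script keeps the `lastrowid` of each inserted novel and passes it along when storing chapters.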

2. Write the script

    #!/usr/bin/python
    # -*- coding: UTF-8 -*-
    # Python 2 script (urllib2, print statement)
    import re
    import urllib2

    import MySQLdb

    class Sql(object):
        conn = MySQLdb.connect(host='192.168.19.213', port=3306, user='root', passwd='Admin123', db='novel', charset='utf8')

        def addnovels(self, sort, novelname):
            cur = self.conn.cursor()
            # Pass values as parameters so quotes in the text cannot break the SQL
            cur.execute("insert into novel(sort,novelname) values(%s, %s)", (sort, novelname))
            lastrowid = cur.lastrowid  # id of the novel row just inserted
            cur.close()
            self.conn.commit()
            return lastrowid

        def addchapters(self, novelid, chaptername, content):
            cur = self.conn.cursor()
            cur.execute("insert into chapter(novelid,chaptername,content) values(%s, %s, %s)", (novelid, chaptername, content))
            cur.close()
            self.conn.commit()

    domain = 'http://www.quanshu.net'
    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 6.3; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36"
    }

    def getTypeList(pn=1):  # Get the novel list for one category page
        req = urllib2.Request('http://www.quanshu.net/map/%s.html' % pn)  # Build the request object
        req.headers = headers  # Replace all request headers
        # req.add_header()  # Add a single header
        res = urllib2.urlopen(req)  # Send the request
        html = res.read().decode('gbk')  # Decode the GBK bytes into Unicode
        reg = r'<a href="(/book/.*?)" target="_blank">(.*?)</a>'
        reg = re.compile(reg)  # Precompile for faster matching; findall returns a list
        return re.findall(reg, html)

    def getNovelList(url):  # Get the chapter list of one novel
        req = urllib2.Request(domain + url)
        req.headers = headers
        res = urllib2.urlopen(req)
        html = res.read().decode('gbk')
        reg = r'<li><a href="(.*?)" title=".*?">(.*?)</a></li>'
        reg = re.compile(reg)
        return re.findall(reg, html)

    def getNovelContent(url):  # Get the content of one chapter
        req = urllib2.Request(domain + url)
        req.headers = headers
        res = urllib2.urlopen(req)
        html = res.read().decode('gbk')
        # The chapter text sits between the style5() and style6() script calls
        reg = r'style5\(\);</script>(.*?)<script type="text/javascript">style6\(\)'
        return re.findall(reg, html)[0]

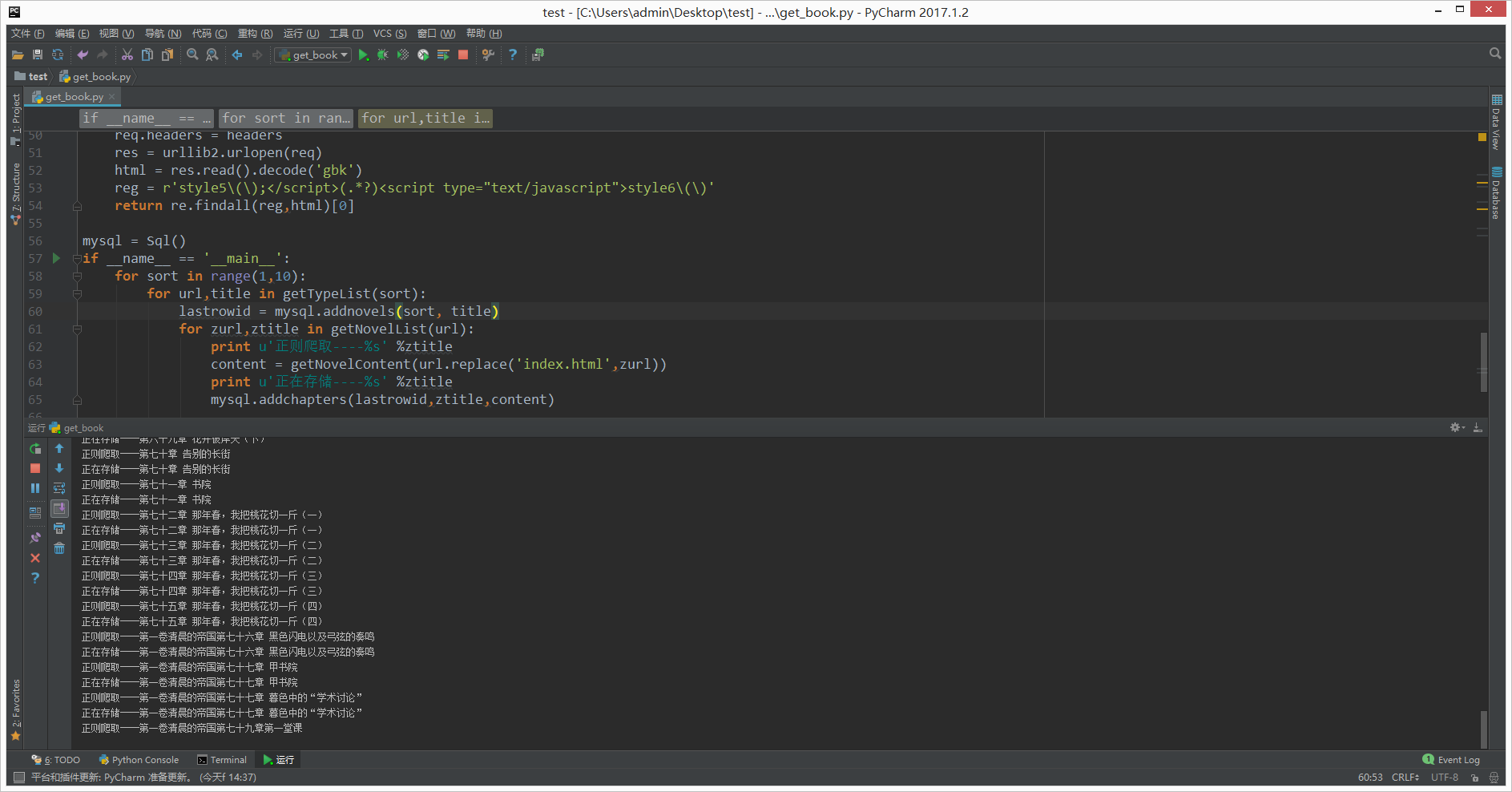

    mysql = Sql()

    if __name__ == '__main__':
        for sort in range(1, 10):
            for url, title in getTypeList(sort):
                lastrowid = mysql.addnovels(sort, title)  # Store the novel, keep its row id
                for zurl, ztitle in getNovelList(url):
                    print u'Crawling with regex----%s' % ztitle
                    content = getNovelContent(url.replace('index.html', zurl))
                    print u'Storing----%s' % ztitle
                    mysql.addchapters(lastrowid, ztitle, content)

3. Run the script

4. Check the database

You can see that the data has been stored successfully.

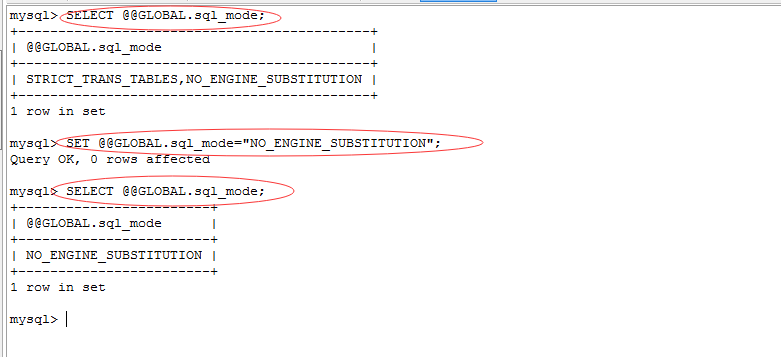
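One thing worth keeping in mind when storing chapter text: it often contains quote characters, so INSERT statements are safest when the values are passed as query parameters rather than formatted into the SQL string. The sketch below illustrates the difference. It uses Python's built-in `sqlite3` as a stand-in so it runs without a MySQL server, and the table here is a simplified, hypothetical version of `chapter`. Note that `sqlite3` uses `?` as its placeholder, while MySQLdb uses `%s`.

```python
import sqlite3

conn = sqlite3.connect(':memory:')
conn.execute('CREATE TABLE chapter (novelid INTEGER, chaptername TEXT, content TEXT)')

# Chapter text containing single quotes, as real prose often does.
content = "He said: 'it's late.'"

# 1) Formatting values into the SQL string: the quotes inside the text
#    end the string literal early and the statement becomes invalid.
bad_sql = (
    "INSERT INTO chapter(novelid, chaptername, content) VALUES (%s, '%s', '%s')"
    % (1, 'Chapter 1', content)
)
try:
    conn.execute(bad_sql)
    formatted_ok = True
except sqlite3.OperationalError:
    formatted_ok = False

# 2) Passing values as parameters: the driver handles the quoting.
conn.execute(
    'INSERT INTO chapter(novelid, chaptername, content) VALUES (?, ?, ?)',
    (1, 'Chapter 1', content),
)
conn.commit()

stored = conn.execute('SELECT content FROM chapter').fetchone()[0]
print(formatted_ok, stored == content)
```

With MySQLdb the equivalent call would look like `cur.execute("insert into chapter(novelid,chaptername,content) values(%s, %s, %s)", (novelid, chaptername, content))`, with the values in a separate tuple instead of after a `%` operator.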

Error:

_mysql_exceptions.OperationalError: (1364, "Field 'novelid' doesn't have a default value")

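Fix: run the following SQL statements. The first shows the current SQL mode; the second turns off strict mode, so a column without a default value produces a warning instead of aborting the insert.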

SELECT @@GLOBAL.sql_mode;

SET @@GLOBAL.sql_mode="NO_ENGINE_SUBSTITUTION";

Error reference: http://blog.sina.com.cn/s/blog_6d2b3e4901011j9w.html