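Overview

Using house price prediction as an example, this post implements a deep learning network, in this case linear regression, with NumPy.

Data link: https://pan.baidu.com/s/1pY5gc3g8p-IK3AutjSUUMA

Extraction code: l3oo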

Import libraries

import numpy as np
import matplotlib.pyplot as plt

Load data
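The loader in this section reshapes the flat array of numbers into one row per sample and then rescales every column as (value - mean) / (max - min). As a minimal sketch of that transformation, the block below applies it to a small made-up array, using broadcasting instead of a per-column loop. The helper name `mean_normalize` and the sample values are illustrative assumptions, not part of the original post.

```python
import numpy as np

def mean_normalize(data):
    """Rescale each column to (value - mean) / (max - min) via broadcasting."""
    col_max = data.max(axis=0)
    col_min = data.min(axis=0)
    col_avg = data.mean(axis=0)
    return (data - col_avg) / (col_max - col_min)

# Made-up values: 6 numbers reshaped into 3 samples with 2 columns each
flat = np.array([1.0, 10.0, 2.0, 20.0, 3.0, 60.0])
table = flat.reshape([-1, 2])

normalized = mean_normalize(table)
print(normalized)
```

After this rescaling every column has zero mean and a spread (max minus min) of exactly 1, which keeps features with very different units on a comparable scale for gradient descent.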

def LoadData():
    # Read the raw data (whitespace-separated numbers)
    data = np.fromfile( './housing.data', sep=' ' )

    # Reshape into one row per sample: 13 features plus the MEDV target
    feature_names = ['CRIM', 'ZN', 'INDUS', 'CHAS', 'NOX', 'RM', 'AGE', 'DIS', 'RAD', 'TAX', 'PTRATIO', 'B', 'LSTAT', 'MEDV']
    feature_num = len( feature_names )
    data = data.reshape( [-1, feature_num] )

    # Compute the per-column maximum, minimum and mean
    data_max = data.max( axis=0 )
    data_min = data.min( axis=0 )
    data_avg = data.sum( axis=0 ) / data.shape[0]

    # Normalize each column: ( value - mean ) / ( max - min )
    for i in range( feature_num ):
        data[:, i] = ( data[:, i] - data_avg[i] ) / ( data_max[i] - data_min[i] )

    # Split into training and test sets (the first 80% of rows are for training)
    ratio = 0.8
    offset = int( data.shape[0] * ratio )
    data_train = data[ :offset ]
    data_test = data[ offset: ]
    return data_train, data_test

Model design
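The comments in the class below refer to "the formula" without writing it out. Assuming the usual linear regression setup, the quantities involved can be sketched as follows. The symbols are notation chosen here, not taken from the original: $N$ is the number of samples, $X$ the $N \times 13$ feature matrix, $w$ the weight vector, $b$ the bias, and $\eta$ the learning rate.

```latex
\begin{aligned}
\hat{y} &= Xw + b \\
L(w, b) &= \frac{1}{N}\sum_{i=1}^{N}\left(\hat{y}_i - y_i\right)^2 \\
\frac{\partial L}{\partial w_j} &= \frac{2}{N}\sum_{i=1}^{N}\left(\hat{y}_i - y_i\right)x_{ij},
\qquad
\frac{\partial L}{\partial b} = \frac{2}{N}\sum_{i=1}^{N}\left(\hat{y}_i - y_i\right) \\
w &\leftarrow w - \eta\,\frac{\partial L}{\partial w},
\qquad
b \leftarrow b - \eta\,\frac{\partial L}{\partial b}
\end{aligned}
```

The Gradient method averages $(\hat{y}_i - y_i)\,x_{ij}$ without the factor 2, which is the gradient of $L/2$. The missing constant only rescales the effective learning rate.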

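class Network( object ):
    '''
    Linear regression neural network class
    '''
    def __init__( self, num_weights ):
        '''
        Initialize the weights and bias
        '''
        self.w = np.random.randn( num_weights, 1 ) # randomly initialize the weights, shape (num_weights, 1)
        self.b = 0.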

    def Forward( self, x ):
        '''
        Forward pass: compute the predictions
        '''
        y_predict = np.dot( x, self.w ) + self.b # prediction: x · w + b, shape (N, 1)
        return y_predict

    def Loss( self, y_predict, y_real ):
        '''
        Compute the loss: mean squared error (MSE)
        '''
        error = y_predict - y_real # per-sample error
        cost = np.square( error ) # squared error
        cost = np.mean( cost ) # average over all samples to get the MSE
        return cost

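    def Gradient( self, x, y_real ):
        '''
        Compute the gradients of the weights and the bias
        '''
        y_predict = self.Forward( x ) # compute the predictions
        # Per-sample weight gradient; the factor 2 from differentiating the
        # squared error is omitted, which only rescales the learning rate
        gradient_w = ( y_predict - y_real ) * x
        gradient_w = np.mean( gradient_w, axis=0 ) # average over samples, one value per feature
        gradient_w = gradient_w[:, np.newaxis] # reshape to (num_weights, 1) to match self.w
        gradient_b = ( y_predict - y_real ) # per-sample bias gradient
        gradient_b = np.mean( gradient_b ) # average over samples
        return gradient_w, gradient_b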

    def Update( self, gradient_w, gradient_b, learning_rate=0.01 ):
        '''
        Gradient descent: update the weights and the bias
        '''
        self.w = self.w - gradient_w * learning_rate # step the weights against the gradient
        self.b = self.b - gradient_b * learning_rate # step the bias against the gradient

def Train( self, x, y, num_iter=100, learning_rate=0.01 ):

'''

使用梯度下降法,訓練模型

'''

losses = []

for i in range( num_iter ): #迭代計算更新權重、偏置

#計算預測值

y_predict = self.Forward( x )

#計算損失

loss = self.Loss( y_predict, y )

#計算梯度

gradient_w, gradient_b = self.Gradient( x, y )

#根據梯度,更新權重和偏置

self.Update( gradient_w, gradient_b, learning_rate )

#打印模型當前狀態

losses.append( loss )

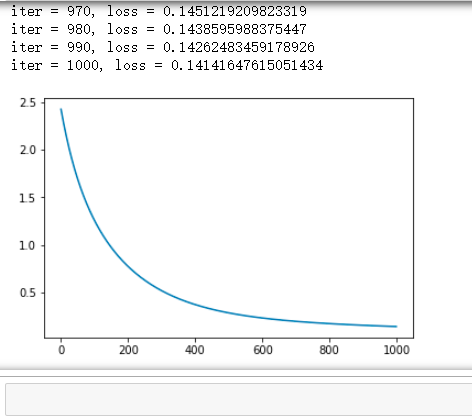

if ( i+1 ) % 10 == 0:

print( 'iter = {}, loss = {}'.format( i+1, loss ) )

return losses模型訓練
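Before running the training loop, one optional sanity check is to compare the analytic gradient against a finite-difference estimate. The sketch below is self-contained and does not use the Network class. The helper names `half_mse`, `analytic_grad` and `numeric_grad`, as well as the random test data, are hypothetical and introduced only for illustration. It uses the halved loss, mean(error²) / 2, because that is the loss whose gradient equals the mean((prediction - target) · x) expression used in Gradient.

```python
import numpy as np

def half_mse(w, b, x, y):
    """Half of the mean squared error for a linear model."""
    err = x @ w + b - y
    return 0.5 * np.mean(np.square(err))

def analytic_grad(w, b, x, y):
    """Same expressions as the Gradient method in the post."""
    err = x @ w + b - y                               # shape (N, 1)
    grad_w = np.mean(err * x, axis=0)[:, np.newaxis]  # shape (num_features, 1)
    grad_b = np.mean(err)
    return grad_w, grad_b

def numeric_grad(w, b, x, y, eps=1e-6):
    """Central finite differences, one parameter at a time."""
    grad_w = np.zeros_like(w)
    for j in range(w.shape[0]):
        step = np.zeros_like(w)
        step[j, 0] = eps
        grad_w[j, 0] = (half_mse(w + step, b, x, y)
                        - half_mse(w - step, b, x, y)) / (2 * eps)
    grad_b = (half_mse(w, b + eps, x, y) - half_mse(w, b - eps, x, y)) / (2 * eps)
    return grad_w, grad_b

# Random stand-in data: 20 samples, 3 features
rng = np.random.default_rng(0)
x = rng.normal(size=(20, 3))
y = rng.normal(size=(20, 1))
w = rng.normal(size=(3, 1))
b = 0.5

gw_a, gb_a = analytic_grad(w, b, x, y)
gw_n, gb_n = numeric_grad(w, b, x, y)
max_diff = max(np.max(np.abs(gw_a - gw_n)), abs(gb_a - gb_n))
print('largest gradient difference:', max_diff)
```

If the two gradients agree to within floating-point noise, the update rule is descending the intended loss surface. A large difference usually points to a shape or broadcasting mistake.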

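# Load the data
train_data, test_data = LoadData()

# Split into features (first 13 columns) and target (last column, MEDV)
x_data = train_data[:, :-1]
y_data = train_data[:, -1:]

# Create the network: one weight per input feature
net = Network( 13 )
num_iterations = 1000
learning_rate = 0.01

# Train the model
losses = net.Train( x_data, y_data, num_iterations, learning_rate )

# Plot how the loss changes over the iterations
plot_x = np.arange( num_iterations )
plot_y = losses
plt.plot( plot_x, plot_y )
plt.show()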
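The loss curve shows that training makes progress, but not whether it is heading towards the best possible fit. One way to check this, sketched below on synthetic data rather than the housing set, is to run the same update rule and compare the result with the least-squares solution from `np.linalg.lstsq`. The data, the "true" parameters, the learning rate and the iteration count are all assumptions chosen for illustration.

```python
import numpy as np

# Synthetic data: 200 samples, 3 features in roughly the normalized range
rng = np.random.default_rng(42)
num_samples, num_features = 200, 3
x = rng.uniform(-0.5, 0.5, size=(num_samples, num_features))
true_w = np.array([[1.5], [-2.0], [0.7]])
true_b = 0.3
y = x @ true_w + true_b + rng.normal(scale=0.01, size=(num_samples, 1))

# Gradient descent with the same gradient expressions as in the post
w = np.zeros((num_features, 1))
b = 0.0
learning_rate = 0.5
for _ in range(5000):
    err = x @ w + b - y
    w -= learning_rate * np.mean(err * x, axis=0)[:, np.newaxis]
    b -= learning_rate * np.mean(err)

# Least-squares reference: append a column of ones so the bias is fitted too
x_aug = np.hstack([x, np.ones((num_samples, 1))])
solution, *_ = np.linalg.lstsq(x_aug, y, rcond=None)
w_ls, b_ls = solution[:-1], solution[-1, 0]

print('gradient descent w:', w.ravel(), 'b:', b)
print('least squares    w:', w_ls.ravel(), 'b:', b_ls)
```

On the real housing data, with a learning rate of 0.01 and 1000 iterations, gradient descent may still be some distance from the least-squares solution, so a gap there would not necessarily indicate a bug.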