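1. Installing Jenkins

Requirements

- At least 256 MB of RAM; 512 MB or more is recommended

- 10 GB of disk space for Jenkins and for Docker images and containers

Running Jenkins in Docker

We prepare a data directory for Jenkins on the server, assumed here to be /home/data/www/jenkins.wzlinux.com. This presumes that Docker is already installed on the server.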

    docker run \
      --name jenkins \
      -u root \
      -d \
      -p 8080:8080 \
      -p 50000:50000 \
      -e TZ="Asia/Shanghai" \
      -v /home/data/www/jenkins.wzlinux.com:/var/jenkins_home \
      -v /var/run/docker.sock:/var/run/docker.sock \
      --restart=on-failure:10 \
      jenkinsci/blueocean

Configuring Jenkins

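Open port 8080 of the server in a browser and wait for the Unlock Jenkins page to appear.

The administrator password can be obtained with the following command:

    docker logs jenkins

I won't cover plugin installation here; if anything is unclear, you can reach me on WeChat.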
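The same password is also written to secrets/initialAdminPassword under the Jenkins home directory, which helps once the log output has scrolled past it; the file is typically present only until the setup wizard has been completed. A minimal sketch, assuming the host data directory from the docker run command above is bind-mounted as the Jenkins home (the print_admin_password helper is made up for illustration):

```shell
#!/bin/sh
# Hypothetical helper: print the initial admin password from a Jenkins home
# directory that is bind-mounted on the host.
#   $1 path to the Jenkins home directory on the host
print_admin_password() {
    jenkins_home="$1"
    password_file="$jenkins_home/secrets/initialAdminPassword"
    if [ ! -r "$password_file" ]; then
        echo "cannot read $password_file" >&2
        return 1
    fi
    cat "$password_file"
}

# Example (path taken from the docker run command above):
# print_admin_password /home/data/www/jenkins.wzlinux.com
```

Running docker exec jenkins cat /var/jenkins_home/secrets/initialAdminPassword reads the same file from inside the container.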

2. Configuring the pipeline

2.1 Configuring the source

We use a Node.js example from GitHub as our code source; you can of course use your own GitLab instead.

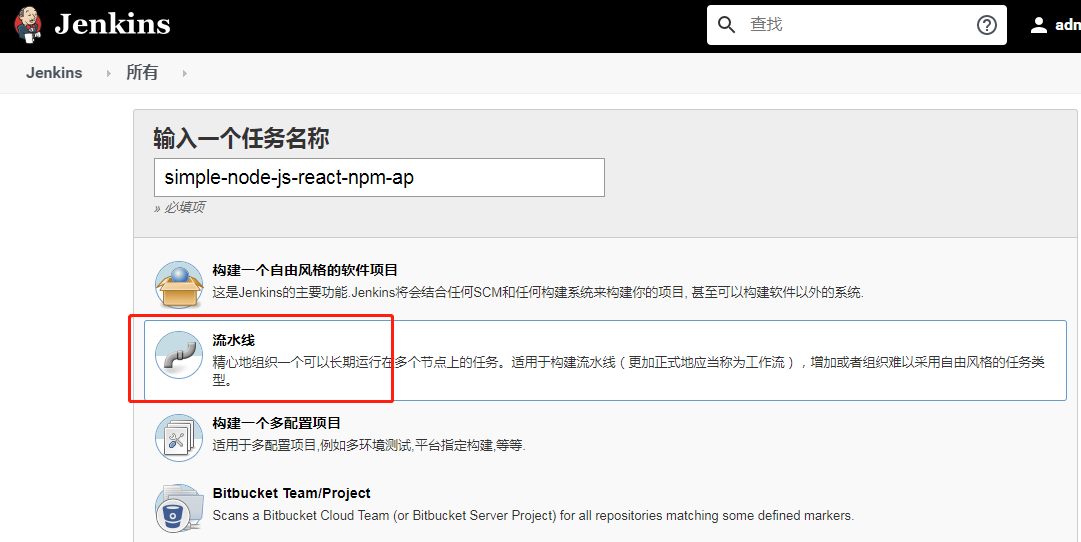

https://github.com/jenkins-docs/simple-node-js-react-npm-app

2.2 Creating our pipeline

- Go to the home page and click New Item

- In the item name field, enter simple-node-js-react-npm-ap

- For the type, select Pipeline

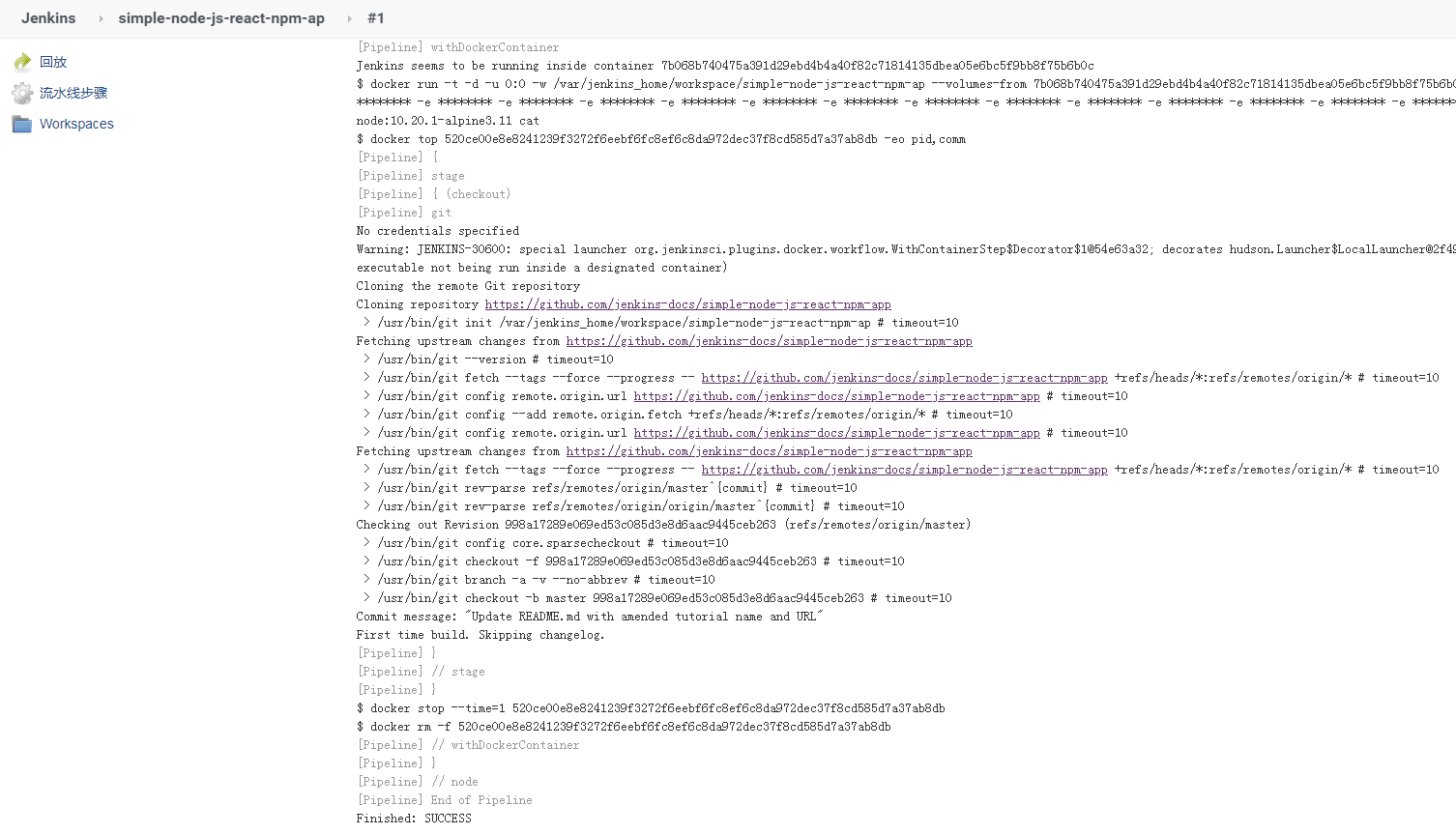

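2.3 Pulling the source code

After clicking OK we land on the project's configuration page, where we look for the Pipeline section.

We could write the pipeline to a Jenkinsfile and save it in the root of the repository, or enter it directly here; we choose to enter it here.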

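    pipeline {
        agent {
            docker {
                image 'node:10.20.1-alpine3.11'
                // mounts the host's Maven cache directory; npm itself caches under ~/.npm
                args '-v $HOME/.m2:/root/.m2'
            }
        }
        stages {
            stage('checkout') {
                steps {
                    git 'https://github.com/jenkins-docs/simple-node-js-react-npm-app'
                }
            }
        }
    }

- Because we are building a Node.js project, we choose a node image here; pick whichever version suits you. The advantage of using Docker is that we no longer have to install tools and then register them under the global tool configuration; we simply pick the Docker image of the tool we need.

- Here we pull the code from GitHub. If you are unsure of the syntax, the Pipeline Syntax generator below the input box can produce it at any time; you can also use your own GitLab.

Let's run it and look at the output.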

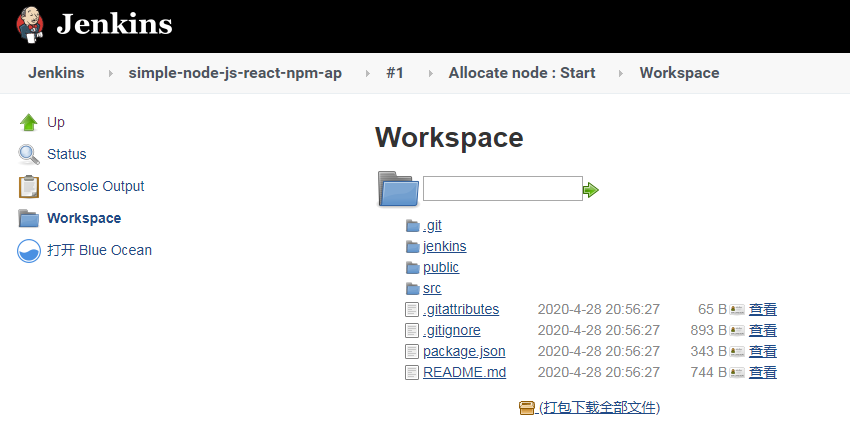

Then check the workspace to see the code that was downloaded.

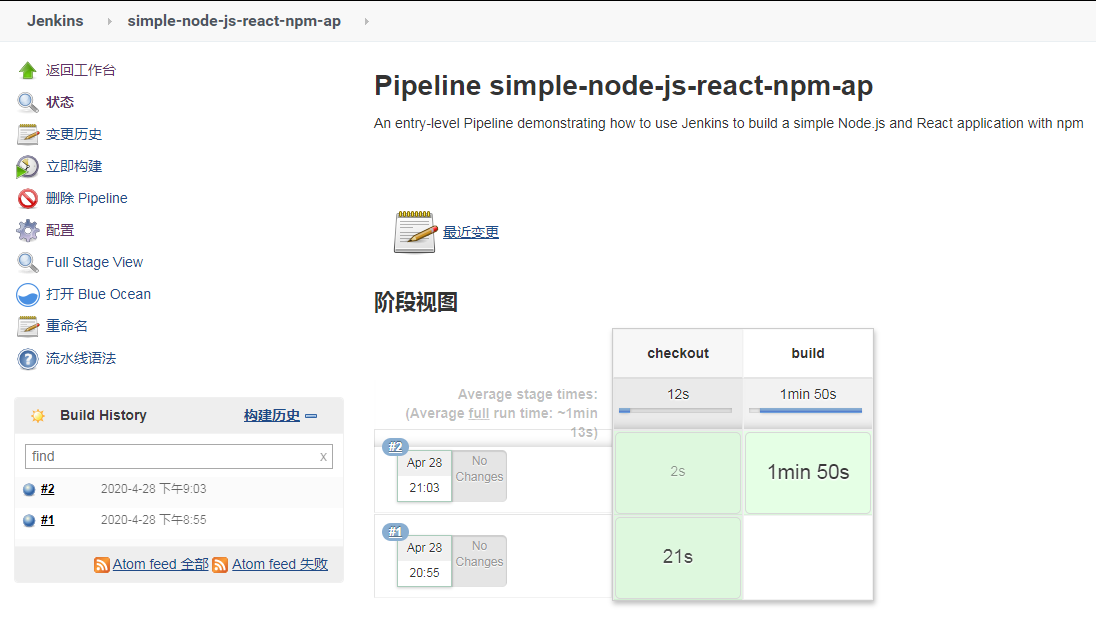

2.4 Building with Node.js

Next we extend the pipeline with a build stage. The node version I chose here is fairly old; you can pick a newer one on Docker Hub.

    pipeline {
        agent {
            docker {
                image 'node:10.20.1-alpine3.11'
                args '-v $HOME/.m2:/root/.m2'
            }
        }
        stages {
            stage('checkout') {
                steps {
                    git 'https://github.com/jenkins-docs/simple-node-js-react-npm-app'
                }
            }
            stage('build') {
                steps {
                    sh 'npm install'
                    sh 'npm run build'
                }
            }
        }
    }

Then save it and check the result of the run:

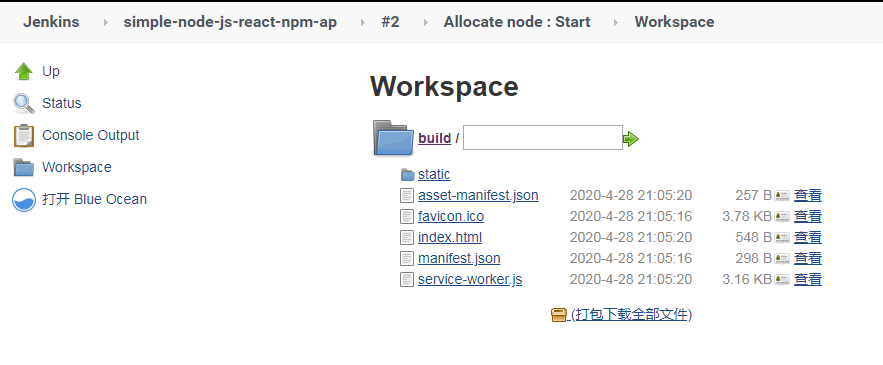

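Then look at the build output in the workspace; the built files are in the build directory. I am not very familiar with the code, and some projects seem to write their output to a dist directory instead.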
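Since the output directory differs between projects, a later stage could look for it instead of hard-coding the name. A small sketch, assuming only the two directory names mentioned above (build and dist); the find_output_dir helper is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: print the name of the first build output directory
# that exists under the given project root. The candidate names are the two
# mentioned in the text; other projects may use something else.
#   $1 project root (for example the Jenkins workspace)
find_output_dir() {
    project_root="$1"
    for candidate in build dist; do
        if [ -d "$project_root/$candidate" ]; then
            echo "$candidate"
            return 0
        fi
    done
    echo "no build or dist directory under $project_root" >&2
    return 1
}

# Example, run from the workspace after npm run build:
# find_output_dir .
```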

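2.5 Publishing to the server

Here we again use the Publish over SSH plugin; I will briefly describe how to configure it.

The Remote Directory setting matters: every file transferred later ends up beneath it, with this directory acting as the root.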

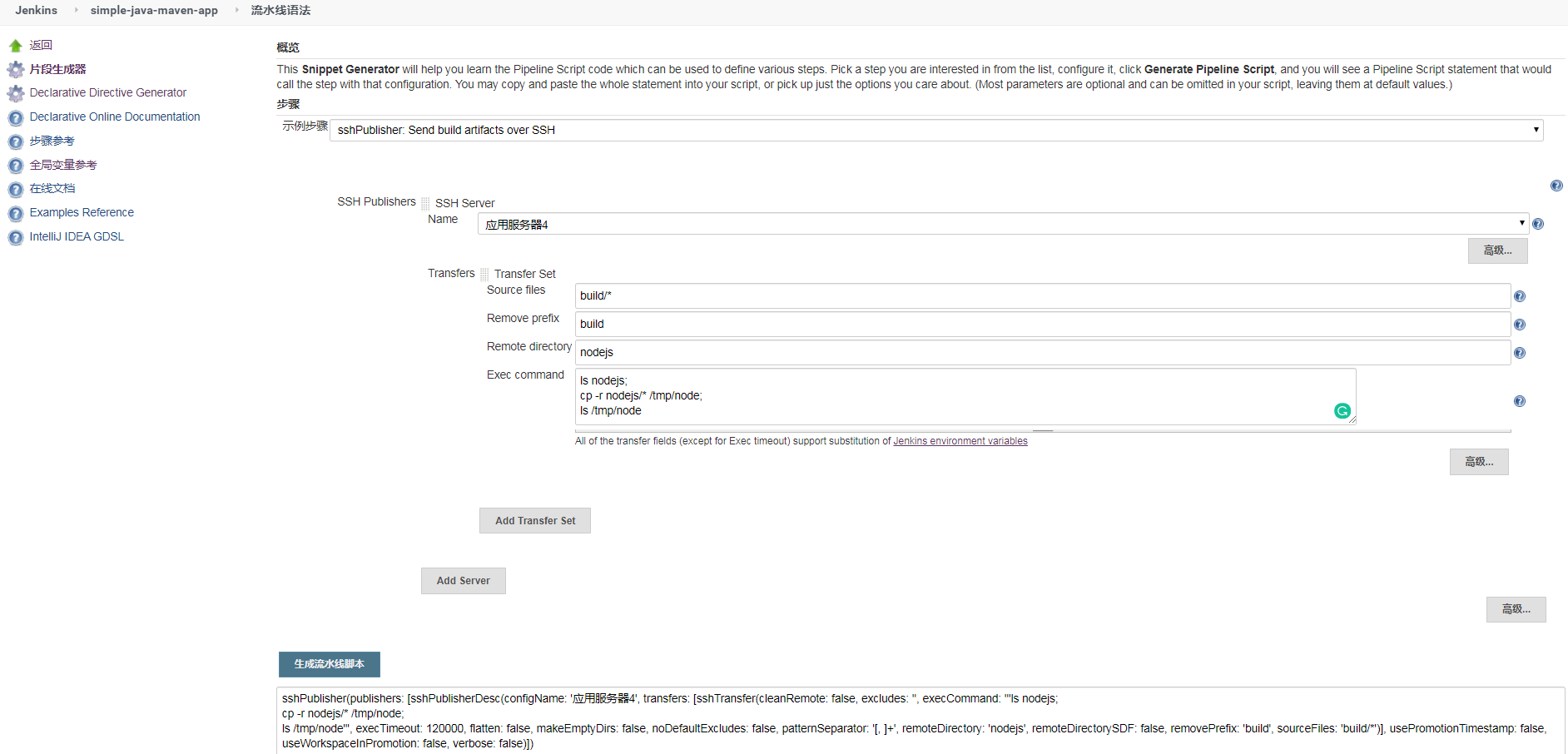

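With the plugin configured, we still have to write the pipeline step, and for that we have to rely on the Pipeline Syntax generator. The plan is to put the contents of build into the /tmp/node directory on the target server.

Source files: the files to upload (note: paths are relative to the workspace; as the generated configuration shows, several entries can be listed, separated by commas by default). To keep things simple you can also write build/*.

Remove prefix: the prefix to strip (it can only be a directory that appears in Source files).

Remote directory: the remote directory (also a relative path, this time relative to the Remote Directory entered when configuring SSH; I set that to /root, so the files are uploaded to /root/nodejs).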
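How the three fields combine into a destination path can be pictured with a small calculation. This is a simplified model of the behaviour described above, not the plugin's own code, and the remote_path helper is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: compute where one source file would land remotely.
#   $1 Remote Directory from the SSH server configuration (e.g. /root)
#   $2 Remote directory of the transfer (e.g. nodejs)
#   $3 Remove prefix (e.g. build)
#   $4 source file path relative to the workspace (e.g. build/index.html)
remote_path() {
    base="$1"
    remote_dir="$2"
    prefix="$3"
    source_file="$4"
    # Strip the prefix and the slash after it from the front of the path.
    relative=${source_file#"$prefix"/}
    echo "$base/$remote_dir/$relative"
}

remote_path /root nodejs build build/index.html
# prints: /root/nodejs/index.html
```

With an empty Remove prefix the build/ part would be kept, giving /root/nodejs/build/index.html.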

The final pipeline is as follows:

pipeline {

agent {

docker {

image 'node:10.20.1-alpine3.11'

args '-v $HOME/.m2:/root/.m2'

}

}

stages {

stage('checkout') {

steps {

git 'https://github.com/jenkins-docs/simple-node-js-react-npm-app'

}

}

stage('build') {

steps {

sh 'npm install'

sh 'npm run build'

}

}

stage('Deliver') {

steps {

sshPublisher(publishers: [sshPublisherDesc(configName: '應用服務器4', transfers: [sshTransfer(cleanRemote: false, excludes: '', execCommand: '''ls nodejs;

cp -r nodejs/* /tmp/node;

ls /tmp/node''', execTimeout: 120000, flatten: false, makeEmptyDirs: false, noDefaultExcludes: false, patternSeparator: '[, ]+', remoteDirectory: 'nodejs', remoteDirectorySDF: false, removePrefix: 'build', sourceFiles: 'build/*')], usePromotionTimestamp: false, useWorkspaceInPromotion: false, verbose: false)])

}

}

}

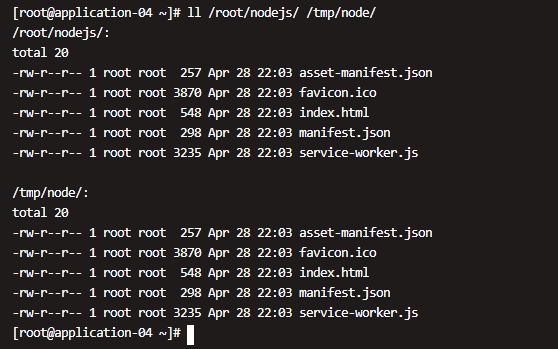

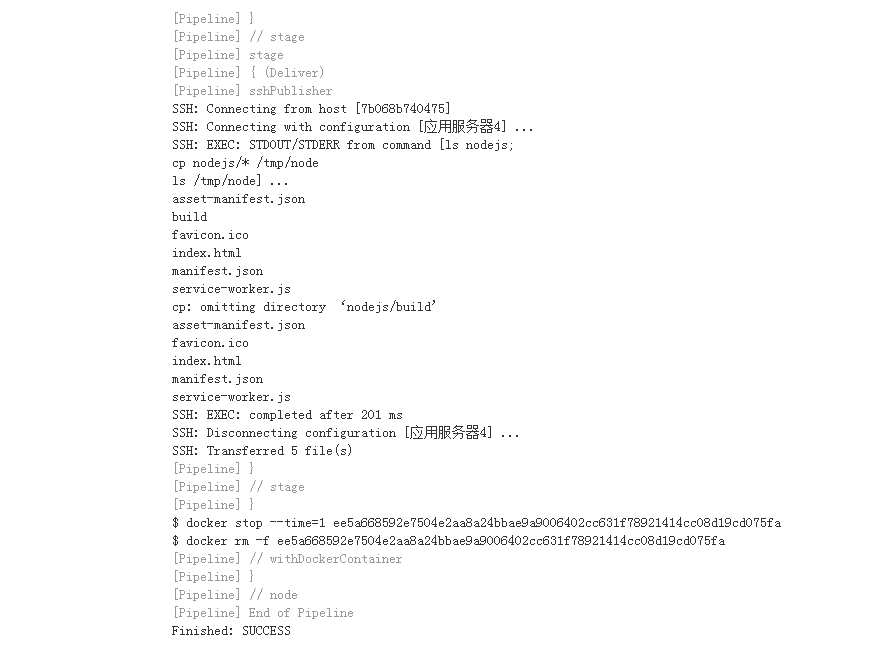

}運行,我們查看一下結果是否如我們預期。

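We ran into a small problem: only files are transferred, not folders. It may be that folders are simply not supported, or I may not have fully figured it out yet; feel free to leave a comment and let me know.

There are plenty of workarounds. After the build in the second stage completes, we can pack the folder into an archive with the zip command and then pick that archive up in the third stage. I won't demonstrate it here; please try it yourselves.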
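The packaging workaround could look roughly like the sketch below. It uses tar rather than zip, on the assumption that tar is more likely to be present in a minimal image; the package_build helper and the archive name are made up for illustration:

```shell
#!/bin/sh
# Hypothetical helper: pack a build directory, subfolders included, into a
# single gzip-compressed tar archive that can be transferred as one file.
#   $1 directory to pack (e.g. build)
#   $2 archive to create (e.g. build.tar.gz)
package_build() {
    build_dir="$1"
    archive="$2"
    if [ ! -d "$build_dir" ]; then
        echo "not a directory: $build_dir" >&2
        return 1
    fi
    # -C switches into the directory so archive paths are relative to it.
    tar -czf "$archive" -C "$build_dir" .
}

# In the build stage:    package_build build build.tar.gz
# On the target server:  mkdir -p /tmp/node && tar -xzf build.tar.gz -C /tmp/node
```

With this approach Source files would name the single archive, and the remote command would unpack it instead of copying individual files.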

3. Best practices

Customizing the execution environment

Pipeline is designed to easily use Docker images as the execution environment for a single Stage or the entire Pipeline, meaning that a user can define the tools required for their Pipeline without having to manually configure agents. Practically any tool which can be packaged in a Docker container can be used with ease by making only minor edits to a Jenkinsfile.

    pipeline {
        agent {
            docker { image 'node:7-alpine' }
        }
        stages {
            stage('Test') {
                steps {
                    sh 'node --version'
                }
            }
        }
    }

Caching data for containers

Many build tools will download external dependencies and cache them locally for future re-use. Since containers are initially created with "clean" file systems, this can result in slower Pipelines, as they may not take advantage of on-disk caches between subsequent Pipeline runs.

Pipeline supports adding custom arguments which are passed to Docker, allowing users to specify custom Docker Volumes to mount, which can be used for caching data on the agent between Pipeline runs. The following example will cache ~/.m2 between Pipeline runs utilizing the maven container, thereby avoiding the need to re-download dependencies for subsequent runs of the Pipeline.

    pipeline {
        agent {
            docker {
                image 'maven:3-alpine'
                args '-v $HOME/.m2:/root/.m2'
            }
        }
        stages {
            stage('Build') {
                steps {
                    sh 'mvn -B'
                }
            }
        }
    }

Using multiple containers

It has become increasingly common for code bases to rely on multiple, different technologies. For example, a repository might have both a Java-based back-end API implementation and a JavaScript-based front-end implementation. Combining Docker and Pipeline allows a Jenkinsfile to use multiple types of technologies by combining the agent {} directive with different stages.

    pipeline {
        agent none
        stages {
            stage('Back-end') {
                agent {
                    docker { image 'maven:3-alpine' }
                }
                steps {
                    sh 'mvn --version'
                }
            }
            stage('Front-end') {
                agent {
                    docker { image 'node:7-alpine' }
                }
                steps {
                    sh 'node --version'
                }
            }
        }
    }

Using a Dockerfile

For projects which require a more customized execution environment, Pipeline also supports building and running a container from a Dockerfile in the source repository. In contrast to the previous approach of using an "off-the-shelf" container, using the agent { dockerfile true } syntax will build a new image from a Dockerfile rather than pulling one from Docker Hub.

Re-using an example from above, with a more custom Dockerfile:

Dockerfile

    FROM node:7-alpine

    RUN apk add -U subversion

By committing this to the root of the source repository, the Jenkinsfile can be changed to build a container based on this Dockerfile and then run the defined steps using that container:

Jenkinsfile (Declarative Pipeline)

    pipeline {
        agent { dockerfile true }
        stages {
            stage('Test') {
                steps {
                    sh 'node --version'
                    sh 'svn --version'
                }
            }
        }
    }

The agent { dockerfile true } syntax supports a number of other options which are described in more detail in the Pipeline Syntax section.

Advanced Usage with Scripted Pipeline

Running "sidecar" containers

Using Docker in Pipeline can be an effective way to run a service on which the build, or a set of tests, may rely. Similar to the sidecar pattern, Docker Pipeline can run one container "in the background", while performing work in another. Utilizing this sidecar approach, a Pipeline can have a "clean" container provisioned for each Pipeline run.

Consider a hypothetical integration test suite which relies on a local MySQL database to be running. Using the withRun method, implemented in the Docker Pipeline plugin’s support for Scripted Pipeline, a Jenkinsfile can run MySQL as a sidecar:

    node {
        checkout scm
        /*
         * In order to communicate with the MySQL server, this Pipeline explicitly
         * maps the port (`3306`) to a known port on the host machine.
         */
        docker.image('mysql:5').withRun('-e "MYSQL_ROOT_PASSWORD=my-secret-pw" -p 3306:3306') { c ->
            /* Wait until mysql service is up */
            sh 'while ! mysqladmin ping -h0.0.0.0 --silent; do sleep 1; done'
            /* Run some tests which require MySQL */
            sh 'make check'
        }
    }

This example can be taken further by utilizing two containers simultaneously: one "sidecar" running MySQL and another providing the execution environment, connected by using Docker container links.

    node {
        checkout scm
        docker.image('mysql:5').withRun('-e "MYSQL_ROOT_PASSWORD=my-secret-pw"') { c ->
            docker.image('mysql:5').inside("--link ${c.id}:db") {
                /* Wait until mysql service is up */
                sh 'while ! mysqladmin ping -hdb --silent; do sleep 1; done'
            }
            docker.image('centos:7').inside("--link ${c.id}:db") {
                /*
                 * Run some tests which require MySQL, and assume that it is
                 * available on the host name `db`
                 */
                sh 'make check'
            }
        }
    }

The above example uses the object exposed by withRun, which has the running container’s ID available via the id property. Using the container’s ID, the Pipeline can create a link by passing custom Docker arguments to the inside() method.
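The polling loops in the two sidecar examples above retry forever, so a MySQL container that never becomes ready would hang the build. A bounded variant might look like the following sketch; the wait_for name and the attempt limit are assumptions for illustration, not part of the Docker Pipeline plugin:

```shell
#!/bin/sh
# Hypothetical helper: retry a command once per second until it succeeds or
# the attempt limit is reached.
#   $1 maximum number of attempts; the remaining arguments are the command
wait_for() {
    max_attempts="$1"
    shift
    attempt=1
    while ! "$@"; do
        if [ "$attempt" -ge "$max_attempts" ]; then
            echo "gave up after $attempt attempts: $*" >&2
            return 1
        fi
        attempt=$((attempt + 1))
        sleep 1
    done
}

# Example, mirroring the loop above with a limit of 60 attempts:
# wait_for 60 mysqladmin ping -hdb --silent
```

Because the function returns a non-zero status on timeout, an sh step that calls it would fail the stage instead of waiting indefinitely.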

The id property can also be useful for inspecting logs from a running Docker container before the Pipeline exits:

    sh "docker logs ${c.id}"

Building containers

In order to create a Docker image, the Docker Pipeline plugin also provides a build() method for creating a new image, from a Dockerfile in the repository, during a Pipeline run.

One major benefit of using the syntax docker.build("my-image-name") is that a Scripted Pipeline can use the return value for subsequent Docker Pipeline calls, for example:

    node {
        checkout scm
        def customImage = docker.build("my-image:${env.BUILD_ID}")
        customImage.inside {
            sh 'make test'
        }
    }

The return value can also be used to publish the Docker image to Docker Hub, or a custom Registry, via the push() method, for example:

    node {
        checkout scm
        def customImage = docker.build("my-image:${env.BUILD_ID}")
        customImage.push()
    }

One common usage of image "tags" is to specify a latest tag for the most recently validated version of a Docker image. The push() method accepts an optional tag parameter, allowing the Pipeline to push the customImage with different tags, for example:

    node {
        checkout scm
        def customImage = docker.build("my-image:${env.BUILD_ID}")
        customImage.push()
        customImage.push('latest')
    }

The build() method builds the Dockerfile in the current directory by default. This can be overridden by providing a directory path containing a Dockerfile as the second argument of the build() method, for example:

    node {
        checkout scm
        def testImage = docker.build("test-image", "./dockerfiles/test")
        testImage.inside {
            sh 'make test'
        }
    }

Builds test-image from the Dockerfile found at ./dockerfiles/test/Dockerfile.

It is possible to pass other arguments to docker build by adding them to the second argument of the build() method. When passing arguments this way, the last value in that string must be the path to the Dockerfile and should end with the folder to use as the build context.

This example overrides the default Dockerfile by passing the -f flag:

    node {
        checkout scm
        def dockerfile = 'Dockerfile.test'
        def customImage = docker.build("my-image:${env.BUILD_ID}", "-f ${dockerfile} ./dockerfiles")
    }

Builds my-image:${env.BUILD_ID} from the Dockerfile found at ./dockerfiles/Dockerfile.test.
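One way to picture the second argument of build() is as a string appended to the docker build command line after the image tag, which is why it has to end with the build context. The sketch below only prints such a command line and does not run Docker; the docker_build_command helper is hypothetical, and the exact command the plugin assembles may differ in detail:

```shell
#!/bin/sh
# Hypothetical helper: print the docker build command line that roughly
# corresponds to docker.build(image, args), without running it.
#   $1 image name
#   $2 argument string whose last word is the build context (default: .)
docker_build_command() {
    image="$1"
    args="${2:-.}"
    echo "docker build -t $image $args"
}

docker_build_command "my-image:42" "-f Dockerfile.test ./dockerfiles"
# prints: docker build -t my-image:42 -f Dockerfile.test ./dockerfiles
```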

Using a remote Docker server

By default, the Docker Pipeline plugin will communicate with a local Docker daemon, typically accessed through /var/run/docker.sock.

To select a non-default Docker server, such as with Docker Swarm, the withServer() method should be used, passing it a URI and, optionally, the Credentials ID of a Docker Server Certificate Authentication pre-configured in Jenkins:

    node {
        checkout scm
        docker.withServer('tcp://swarm.example.com:2376', 'swarm-certs') {
            docker.image('mysql:5').withRun('-p 3306:3306') {
                /* do things */
            }
        }
    }

Using a custom registry

By default, the Docker Pipeline plugin assumes the default Docker Registry of Docker Hub.

In order to use a custom Docker Registry, users of Scripted Pipeline can wrap steps with the withRegistry() method, passing in the custom Registry URL, for example:

    node {
        checkout scm
        docker.withRegistry('https://registry.example.com') {
            docker.image('my-custom-image').inside {
                sh 'make test'
            }
        }
    }

For a Docker Registry which requires authentication, add a "Username/Password" Credentials item from the Jenkins home page and use the Credentials ID as a second argument to withRegistry():

    node {
        checkout scm
        docker.withRegistry('https://registry.example.com', 'credentials-id') {
            def customImage = docker.build("my-image:${env.BUILD_ID}")
            /* Push the container to the custom Registry */
            customImage.push()
        }
    }

https://www.jenkins.io/doc/book/pipeline/docker/