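Pacemaker is a cluster resource manager. It achieves maximum availability for cluster services (also called resources) by detecting and recovering from node-level and resource-level failures, using the messaging and membership capabilities provided by your preferred cluster infrastructure (OpenAIS or Heartbeat).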

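It can handle clusters of almost any size and comes with a powerful dependency model that lets administrators accurately express the relationships between cluster resources (including ordering and location). Almost anything that can be scripted can be managed as part of a Pacemaker cluster.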

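Pacemaker features:

Failure detection and recovery at the host and application level

Supports almost any redundancy configuration

Supports multiple cluster configuration modes at the same time

Configurable policies for handling quorum loss (when multiple machines fail)

Supports application startup/shutdown ordering

Supports applications that must or must not run on the same machine

Supports applications with multiple modes (such as master/slave)

Responses to any failure or cluster state can be tested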

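Resource types:

primitive (native): a basic (primitive) resource

group: a resource group

clone: a cloned resource (can run on several nodes at the same time); it must first be defined as a primitive before it can be cloned. Mainly used for STONITH and cluster filesystems

master/slave: a master/slave resource, such as DRBD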

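Resource agent classes:

lsb: Linux Standard Base; service scripts that support arguments such as start|stop|status, usually located under /etc/rc.d/init.d/, are all LSB scripts

ocf: Open Cluster Framework

heartbeat: resource agents from Heartbeat V1

stonith: used specifically for configuring STONITH devices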
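To illustrate what an lsb-class script has to provide, here is a minimal sketch of a start|stop|status handler. The service name `demo`, the function `demo_service`, and the `STATE_FILE` path are hypothetical and stand in for a real daemon; a real init script would end by calling the function with `"$1"`. The return codes follow the LSB convention (status returns 0 when running and 3 when stopped).

```shell
#!/bin/sh
# Hypothetical LSB-style handler; "demo" and STATE_FILE are illustrative only.
STATE_FILE="${STATE_FILE:-/tmp/demo-lsb-service.state}"

demo_service() {
    case "$1" in
        start)
            # Starting an already running service must still succeed.
            : > "$STATE_FILE"
            return 0
            ;;
        stop)
            # Stopping an already stopped service must still succeed.
            rm -f "$STATE_FILE"
            return 0
            ;;
        status)
            # LSB status codes: 0 = running, 3 = not running.
            if [ -e "$STATE_FILE" ]; then
                echo "demo is running"
                return 0
            fi
            echo "demo is stopped"
            return 3
            ;;
        *)
            echo "Usage: demo {start|stop|status}" >&2
            return 2
            ;;
    esac
}
```

The cluster relies on these return codes to decide whether a resource is running, so a script that returns 0 from status while the service is stopped would not be managed correctly.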

172.25.85.2 server2.example.com(1024)

172.25.85.3 server3.example.com(1024)

1. server2/3:

/etc/init.d/ldirectord stop

chkconfig ldirectord off

yum install pacemaker -y

rpm -q corosync

server2:

cd /etc/corosync

cp corosync.conf.example corosync.conf

vim /etc/corosync/corosync.conf

bindnetaddr: 172.25.85.0

service {

name: pacemaker

ver: 0

}

scp /etc/corosync/corosync.conf [email protected]:/etc/corosync/

/etc/init.d/corosync start

crm_verify -LV ## check whether the configuration is valid
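As a quick sanity check of the edited file, the following sketch tests whether a corosync.conf contains a `service { ... }` block with `name: pacemaker`, as added above. The helper name `has_pacemaker_service` is hypothetical, and the parsing is deliberately simple (it assumes one directive per line).

```shell
# Hypothetical helper: returns 0 if the file has "name: pacemaker" inside a
# service { } block, 1 if not, 2 if the file cannot be read.
has_pacemaker_service() {
    conf="$1"
    [ -r "$conf" ] || return 2
    awk '
        /^[ \t]*service[ \t]*[{]/ { in_service = 1; next }
        in_service && /[}]/       { in_service = 0; next }
        in_service && /^[ \t]*name:[ \t]*pacemaker[ \t]*$/ { found = 1 }
        END { exit(found ? 0 : 1) }
    ' "$conf"
}
```

On the nodes above it could be called as `has_pacemaker_service /etc/corosync/corosync.conf && echo ok`.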

server3:

/etc/init.d/corosync start

tail -f /var/log/messages

2. server2:

yum install crmsh-1.2.6-0.rc2.2.1.x86_64.rpm pssh-2.3.1-2.1.x86_64.rpm

crm

crm(live)configure# property no-quorum-policy=ignore

crm(live)configure# commit ## sync the change to the other nodes
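The reason no-quorum-policy=ignore is needed on a two-node cluster can be sketched with the usual quorum rule: a partition has quorum only when more than half of the known nodes are online, that is, when total_nodes < 2 * active_nodes. The function name `has_quorum` is hypothetical and only illustrates the arithmetic.

```shell
# Hypothetical helper: succeeds when active_nodes is a majority of total_nodes.
has_quorum() {
    total_nodes="$1"
    active_nodes="$2"
    [ "$total_nodes" -lt $((2 * active_nodes)) ]
}

# Two-node cluster with one node down: 2 < 2 is false, so quorum is lost.
if has_quorum 2 1; then
    echo "quorum"
else
    echo "no quorum"
fi
```

With two nodes, losing either one always loses quorum, so without the ignore policy the surviving node would not run the resources.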

vim /etc/httpd/conf/httpd.conf

<Location /server-status>

SetHandler server-status

Order deny,allow

Deny from all

Allow from 127.0.0.1

</Location>

crm

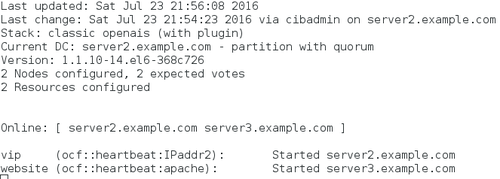

crm(live)configure# primitive vip ocf:heartbeat:IPaddr2 params ip=172.25.85.100 cidr_netmask=32 op monitor interval=30s

crm(live)configure# primitive website ocf:heartbeat:apache params configfile=/etc/httpd/conf/httpd.conf op monitor interval=60s

crm(live)configure# commit

crm(live)configure# collocation website-with-ip inf: website vip ## keep these two resources on the same host

crm(live)configure# commit

crm(live)configure# delete website-with-ip

crm(live)configure# commit

crm(live)configure# group apache vip website ## create a resource group named apache

crm(live)configure# commit
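A group keeps its members on the same node and starts them in the listed order, which is roughly what a colocation constraint plus an order constraint per adjacent pair would express. The sketch below only prints such constraints as text for a given member list; the helper `print_group_constraints` and the generated constraint IDs are hypothetical, and nothing is sent to the cluster.

```shell
# Hypothetical helper: prints, for each adjacent pair of group members, the
# colocation and order constraints that the group roughly stands for.
print_group_constraints() {
    prev=""
    for res in "$@"; do
        if [ -n "$prev" ]; then
            echo "colocation ${res}-with-${prev} inf: ${res} ${prev}"
            echo "order ${res}-after-${prev} inf: ${prev} ${res}"
        fi
        prev="$res"
    done
}

print_group_constraints vip website
```

For the group above this prints one colocation line and one order line, which is why the earlier website-with-ip constraint could be deleted once the group was created.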

crm(live)node# show

server2.example.com: normal

server3.example.com: normal

crm(live)node# standby server2.example.com

crm(live)resource# stop apache

crm(live)resource# show

Resource Group: apache

vip (ocf::heartbeat:IPaddr2): Stopped

website (ocf::heartbeat:apache): Stopped

crm(live)configure# delete apache

crm(live)configure# delete website

crm(live)configure# commit

[Note]

To delete a resource, stop it first and then delete it:

crm(live)resource# stop website

crm(live)configure# delete website

server3:

yum install crmsh-1.2.6-0.rc2.2.1.x86_64.rpm pssh-2.3.1-2.1.x86_64.rpm

vim /etc/httpd/conf/httpd.conf

<Location /server-status>

SetHandler server-status

Order deny,allow

Deny from all

Allow from 127.0.0.1

</Location>

crm_mon

3. On the physical host:

systemctl start fence_virtd.service

server2:

stonith_admin -I

stonith_admin -M -a fence_xvm

crm

crm(live)configure# property stonith-enabled=true

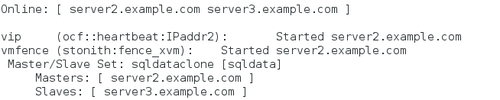

primitive vmfence stonith:fence_xvm params pcmk_host_map="server2.example.com:ricci1;server3.example.com:heartbeat1" op monitor interval=1min

primitive sqldata ocf:linbit:drbd params drbd_resource=sqldata op monitor interval=30s

ms sqldataclone sqldata meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 notify=true

crm(live)configure# commit
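The pcmk_host_map value used above is a semicolon-separated list of node:port pairs; for fence_xvm the port is the name of the virtual machine as the physical host knows it. As a small illustration of that format, this sketch looks up the VM name for a cluster node. The helper `lookup_fence_port` is hypothetical and is not part of Pacemaker.

```shell
# Hypothetical helper: prints the port (VM name) mapped to a node in a
# pcmk_host_map string of the form "node1:port1;node2:port2".
lookup_fence_port() {
    host_map="$1"
    node="$2"
    old_ifs="$IFS"
    IFS=';'
    for entry in $host_map; do
        if [ "${entry%%:*}" = "$node" ]; then
            IFS="$old_ifs"
            echo "${entry#*:}"
            return 0
        fi
    done
    IFS="$old_ifs"
    return 1
}

lookup_fence_port "server2.example.com:ricci1;server3.example.com:heartbeat1" server3.example.com
```

If a node name is missing from the map the lookup fails, which mirrors the practical problem: the fence device would not know which virtual machine to power off for that node.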

crm_mon

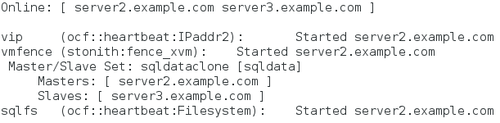

primitive sqlfs ocf:heartbeat:Filesystem params device=/dev/drbd1 directory=/var/lib/mysql fstype=ext4

colocation sqlfs_on_drbd inf: sqlfs sqldataclone:Master

order sqlfs-after-sqldata inf: sqldataclone:promote sqlfs:start

crm(live)configure# commit

crm_mon

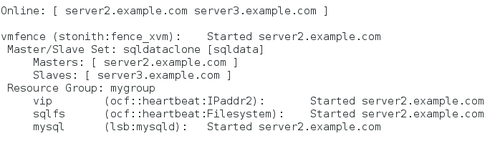

crm(live)configure# primitive mysql lsb:mysqld op monitor interval=30s

crm(live)configure# group mygroup vip sqlfs mysql

INFO: resource references in colocation:sqlfs_on_drbd updated

INFO: resource references in order:sqlfs-after-sqldata updated

crm(live)configure# commit

crm_mon

crm(live)resource# refresh mygroup

server3:

crm_mon ## monitor node status
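For scripted checks, crm_mon can print the status once and exit with `crm_mon -1`. The sketch below filters such status text for resources reported as Stopped. The helper `list_stopped_resources` is hypothetical, and it assumes resource lines look like the ones shown earlier, for example `vip (ocf::heartbeat:IPaddr2): Stopped`.

```shell
# Hypothetical helper: reads crm_mon-style status text on stdin and prints
# the name of every resource whose line ends in "): Stopped".
list_stopped_resources() {
    awk '/\): Stopped/ { print $1 }'
}
```

On a cluster node it could be used as `crm_mon -1 | list_stopped_resources`; empty output would mean no resource is reported as stopped.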