I: Corosync+Pacemaker

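Pacemaker is the most popular CRM (Cluster Resource Manager). It was split out of Heartbeat v3 as a standalone resource manager, and Corosync+Pacemaker is the most popular high-availability cluster stack.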

II: DRBD

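DRBD (Distributed Replicated Block Device) consists of a kernel module and a set of supporting scripts, and is used to build high-availability clusters. It works by mirroring an entire block device over the network, so you can think of it as a network RAID 1.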

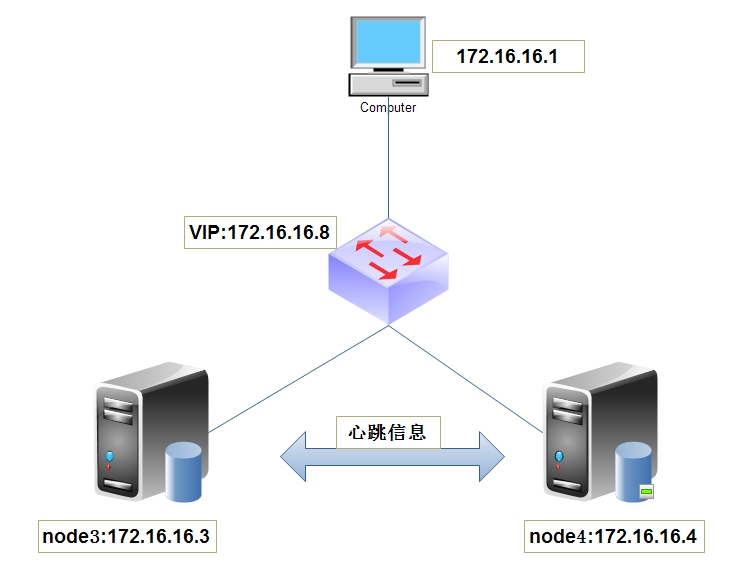

III: Lab topology

IV: Lab environment (CentOS 6.5, x86_64)

drbd-8.4.3-33.el6.x86_64.rpm

drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm

crmsh-1.2.6-4.el6.x86_64.rpm

mariadb-5.5.36-linux-x86_64.tar.gz

corosync.x86_64-1.4.1-17.el6

V: Lab configuration

1) Configure name resolution between the nodes

Configure node3

[root@node3 ~]# uname -n

node3

[root@node3 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.16.0.1 server.magelinux.com server

172.16.16.1 node1

172.16.16.2 node2

172.16.16.3 node3

172.16.16.4 node4

172.16.16.5 node5

Configure node4

[root@node4 ~]# uname -n

node4

[root@node4 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

172.16.0.1 server.magelinux.com server

172.16.16.1 node1

172.16.16.2 node2

172.16.16.3 node3

172.16.16.4 node4

172.16.16.5 node5
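Pacemaker and DRBD refer to the nodes by the name that uname -n prints. DRBD's on sections, for example, must match it, so each node's own name should be resolvable through /etc/hosts. Below is a minimal sketch of that check. It assumes a POSIX shell and awk, and the function name check_node_entry is hypothetical.

```shell
#!/bin/sh
# check_node_entry HOSTS_FILE NAME
# Succeeds if NAME appears as a host name (second or later field) on a
# non-comment line of HOSTS_FILE, fails otherwise.
check_node_entry() {
    awk -v name="$2" '
        /^[[:space:]]*#/ { next }                      # skip comment lines
        { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit found ? 0 : 1 }
    ' "$1"
}

# Usage on each node: check that the node's own name is listed.
# check_node_entry /etc/hosts "$(uname -n)" && echo "$(uname -n) is resolvable"
```

Only host-name fields are compared, so an IP address passed as NAME does not match.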

Synchronize the clocks on all nodes

[root@node3 ~]# ntpdate 172.16.0.1

[root@node4 ~]# ntpdate 172.16.0.1 # 172.16.0.1 is the time server

Set up SSH key trust between the nodes

On node4:

[root@node4 ~]# ssh-keygen -t rsa -f /root/.ssh/id_rsa -P ''

[root@node4 ~]# ssh-copy-id -i .ssh/id_rsa.pub root@node3 # copy the public key to node3

On node3:

[root@node3 ~]# ssh-keygen -t rsa -f /root/.ssh/id_rsa -P ''

[root@node3 ~]# ssh-copy-id -i .ssh/id_rsa.pub root@node4 # copy the public key to node4
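To confirm that the key trust works without a password prompt, you can run a command on the peer with ssh in batch mode. BatchMode=yes makes ssh fail instead of asking for a password, so the check cannot hang. This is a sketch only, and verify_ssh_trust is a hypothetical name.

```shell
#!/bin/sh
# verify_ssh_trust NODE...
# For each NODE, run `uname -n` on it over ssh without any password prompt
# and check that the remote host name equals the name we connected to.
verify_ssh_trust() {
    rc=0
    for node in "$@"; do
        remote=$(ssh -o BatchMode=yes -o ConnectTimeout=5 "root@$node" uname -n 2>/dev/null)
        if [ "$remote" = "$node" ]; then
            echo "ok: $node"
        else
            echo "FAIL: $node (got '${remote}')" >&2
            rc=1
        fi
    done
    return $rc
}

# On node3: verify_ssh_trust node4
# On node4: verify_ssh_trust node3
```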

2) Install and configure corosync

node3

[root@node3 ~]# yum install corosync

node4

[root@node4 ~]# yum install corosync
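In the corosync.conf below, bindnetaddr is the network address of the interface that carries the heartbeat, that is, a node's IP address ANDed with its netmask. The article does not state the netmask. Here 255.255.0.0 is assumed, because it turns the node addresses 172.16.16.x into the 172.16.0.0 used below. The sketch shows the calculation; network_addr is a hypothetical helper.

```shell
#!/bin/sh
# network_addr IP NETMASK
# Prints the network address (IP AND NETMASK), octet by octet.
# The body runs in a subshell so the IFS change does not leak to the caller.
network_addr() (
    IFS=.                 # split dotted quads on "."
    set -- $1 $2          # $1..$4 = IP octets, $5..$8 = netmask octets
    echo "$(( $1 & $5 )).$(( $2 & $6 )).$(( $3 & $7 )).$(( $4 & $8 ))"
)

# network_addr 172.16.16.3 255.255.0.0     # -> 172.16.0.0 (assumed /16 netmask)
# network_addr 172.16.16.3 255.255.255.0   # -> 172.16.16.0
```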

Configure corosync on node3

[root@node3 ~]# cd /etc/corosync/

[root@node3 corosync]# cp corosync.conf.example corosync.conf

[root@node3 corosync]# vim corosync.conf

# Please read the corosync.conf.5 manual page

compatibility: whitetank

totem {

version: 2

secauth: on # enable authentication

threads: 0

interface {

ringnumber: 0

bindnetaddr: 172.16.0.0 # network address of the heartbeat network

mcastaddr: 226.94.16.1 # multicast address used to send heartbeat messages

mcastport: 5405

ttl: 1

}

}

logging {

fileline: off

to_stderr: no

to_logfile: yes

to_syslog: yes

logfile: /var/log/cluster/corosync.log # log file location

debug: off

timestamp: on

logger_subsys {

subsys: AMF

debug: off

}

}

amf {

mode: disabled

}

service {

ver: 0

name: pacemaker

}

aisexec {

user: root

group: root

}

Generate the key file

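corosync-keygen reads its key material from /dev/random, which blocks until the kernel has gathered enough entropy (for example from keyboard and interrupt timing). On an idle server that can mean pressing keys for a long time. To save time, the first two commands below temporarily replace /dev/random with a symlink to the non-blocking /dev/urandom. Once the key has been written, the original device can be put back with rm /dev/random and mv /dev/random.bak /dev/random.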

[root@node3 corosync]# mv /dev/{random,random.bak}

[root@node3 corosync]# ln -s /dev/urandom /dev/random

[root@node3 corosync]# corosync-keygen

Corosync Cluster Engine Authentication key generator.

Gathering 1024 bits for key from /dev/random.

Press keys on your keyboard to generate entropy.

Writing corosync key to /etc/corosync/authkey.

Check that the key file has been generated

[root@node3 corosync]# ll

total 24

-r-------- 1 root root 128 Oct 11 19:52 authkey # this is the key file

-rw-r--r-- 1 root root 537 Oct 11 19:49 corosync.conf

-rw-r--r-- 1 root root 445 Nov 22 2013 corosync.conf.example

-rw-r--r-- 1 root root 1084 Nov 22 2013 corosync.conf.example.udpu

drwxr-xr-x 2 root root 4096 Nov 22 2013 service.d

drwxr-xr-x 2 root root 4096 Nov 22 2013 uidgid.d
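Before you copy the key to node4, you can confirm that it matches the listing above: 128 bytes, readable only by root (mode 0400). This is a sketch that assumes GNU coreutils stat, as shipped with CentOS; check_authkey is a hypothetical name.

```shell
#!/bin/sh
# check_authkey FILE
# Succeeds if FILE exists, is 128 bytes long and has mode 0400 (-r--------).
check_authkey() {
    info=$(stat -c '%a %s' "$1" 2>/dev/null) || return 1   # "<octal mode> <size>"
    [ "$info" = "400 128" ]
}

# check_authkey /etc/corosync/authkey && echo "authkey looks fine"
```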

Copy the configuration file and the key to node4

[root@node3 corosync]# scp authkey corosync.conf node4:/etc/corosync/

authkey 100% 128 0.1KB/s 00:00

corosync.conf 100% 537 0.5KB/s 00:00

3) Install and configure pacemaker and crmsh

[root@node3 ~]# yum install pacemaker

First obtain the crmsh-1.2.6-4.el6.x86_64.rpm package

[root@node3 ~]# rpm -ivh crmsh-1.2.6-4.el6.x86_64.rpm

error: Failed dependencies:

pssh is needed by crmsh-1.2.6-4.el6.x86_64

python-dateutil is needed by crmsh-1.2.6-4.el6.x86_64

python-lxml is needed by crmsh-1.2.6-4.el6.x86_64

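Resolve the dependencies: install python-dateutil and python-lxml with yum, then install crmsh with --nodeps so that the remaining pssh dependency is ignored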

[root@node3 ~]# yum install python-dateutil python-lxml

[root@node3 ~]# rpm -ivh crmsh-1.2.6-4.el6.x86_64.rpm --nodeps

Preparing... ########################################### [100%]

1:crmsh ########################################### [100%]

4) Install pacemaker and crmsh on node4 the same way as on node3

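Start pacemaker on node3 and node4. Because corosync.conf loads pacemaker as a plugin through the service { ver: 0 } block, starting corosync also starts pacemaker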

[root@node3 ~]# service corosync start

[root@node4 ~]# service corosync start

Check that the corosync engine started successfully

[root@node3 ~]# grep -e "Corosync Cluster Engine" -e "configuration file" /var/log/cluster/corosync.log

Oct 11 20:19:35 corosync [MAIN ] Corosync Cluster Engine ('1.4.1'): started and ready to provide service. # the engine has started and is ready

Oct 11 20:19:35 corosync [MAIN ] Successfully read main configuration file '/etc/corosync/corosync.conf'

Check that the initial membership notifications were sent correctly

[root@node3 ~]# grep TOTEM /var/log/cluster/corosync.log

Oct 11 20:19:35 corosync [TOTEM ] Initializing transport (UDP/IP Multicast).

Oct 11 20:19:35 corosync [TOTEM ] Initializing transmit/receive security: libtomcrypt SOBER128/SHA1HMAC (mode 0).

Oct 11 20:19:35 corosync [TOTEM ] The network interface [172.16.16.3] is now up.

Check that pacemaker started correctly

[root@node3 ~]# grep pcmk_startup /var/log/cluster/corosync.log

Oct 11 20:19:35 corosync [pcmk ] info: pcmk_startup: CRM: Initialized

Oct 11 20:19:35 corosync [pcmk ] Logging: Initialized pcmk_startup

Oct 11 20:19:35 corosync [pcmk ] info: pcmk_startup: Maximum core file size is: 18446744073709551615

Oct 11 20:19:35 corosync [pcmk ] info: pcmk_startup: Service: 9

Oct 11 20:19:35 corosync [pcmk ] info: pcmk_startup: Local hostname: node3
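The three grep checks above can be combined into one pass over the log. This is a sketch: the patterns are taken from the log lines shown above and assume the corosync 1.4 / pacemaker plugin message format. check_corosync_log is a hypothetical name.

```shell
#!/bin/sh
# check_corosync_log LOGFILE
# Reports whether each startup milestone from the log excerpts above appears
# in LOGFILE; returns non-zero if any of them is missing.
check_corosync_log() {
    log=$1 rc=0
    for pattern in \
        'Corosync Cluster Engine .*started and ready to provide service' \
        'Successfully read main configuration file' \
        'TOTEM .*The network interface .* is now up' \
        'pcmk_startup: CRM: Initialized'; do
        if grep -q -e "$pattern" "$log"; then
            echo "found:   $pattern"
        else
            echo "missing: $pattern" >&2
            rc=1
        fi
    done
    return $rc
}

# check_corosync_log /var/log/cluster/corosync.log
```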

Check the cluster status

[root@node3 ~]# crm status

Last updated: Sat Oct 11 20:29:24 2014

Last change: Sat Oct 11 20:19:35 2014 via crmd on node4

Stack: classic openais (with plugin)

Current DC: node4 - partition with quorum

Version: 1.1.10-14.el6-368c726

2 Nodes configured, 2 expected votes

0 Resources configured

Online: [ node3 node4 ] # both nodes are online

5) Install DRBD

First obtain the DRBD packages: drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm and drbd-8.4.3-33.el6.x86_64.rpm

[root@node3 ~]# rpm -ivh drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm # install the kmdl (kernel module) package first

[root@node3 ~]# rpm -ivh drbd-8.4.3-33.el6.x86_64.rpm

6) Install DRBD on node4 the same way as on node3
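The kmdl package contains the DRBD kernel module, and it only works with the kernel it was built for. That kernel version is part of the file name. The sketch below compares the name with a kernel release string such as the output of uname -r. It assumes an x86_64 system, and kmdl_matches_kernel is a hypothetical name.

```shell
#!/bin/sh
# kmdl_matches_kernel RPM KERNEL_RELEASE
# drbd-kmdl packages are named drbd-kmdl-<kernel>-<drbd version>..., e.g.
# drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm is built for 2.6.32-431.el6.
# Succeeds if RPM was built for KERNEL_RELEASE (as printed by `uname -r`).
kmdl_matches_kernel() {
    kernel=${2%.x86_64}               # 2.6.32-431.el6.x86_64 -> 2.6.32-431.el6
    case ${1##*/} in                  # compare against the file name only
        "drbd-kmdl-$kernel-"*) return 0 ;;
        *) return 1 ;;
    esac
}

# kmdl_matches_kernel drbd-kmdl-2.6.32-431.el6-8.4.3-33.el6.x86_64.rpm "$(uname -r)"
```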

7) Configure DRBD

[root@node3 ~]# cat /etc/drbd.d/global_common.conf

global {

usage-count no; # whether LINBIT may collect DRBD usage statistics (yes = take part); we do not take part, so it is set to no

# minor-count dialog-refresh disable-ip-verification

}

common {

handlers {

gency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

gency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";

wn.sh; echo o > /proc/sysrq-trigger ; halt -f";

}

startup {

# wfc-timeout degr-wfc-timeout outdated-wfc-timeout wait-after-sb

}

options {

# cpu-mask on-no-data-accessible

}

disk {

on-io-error detach; # added: if the backing disk reports an I/O error, detach it

}

net {

cram-hmac-alg "sha1";

shared-secret "mydrbdlab"; # added: authentication algorithm and shared secret for the peer connection

}

}

Add the resource

On node3, define the resource in /etc/drbd.d/web.res:

resource web {

device /dev/drbd0;

disk /dev/sda3; # sda3 is a partition created beforehand; node4 has a matching one

on node3 { # the section name is the node's host name (uname -n)

address 172.16.16.3:7788;

meta-disk internal;

}

on node4 {

address 172.16.16.4:7788;

meta-disk internal;

}

}

Copy the DRBD configuration files to node4

[root@node3 drbd.d]# scp global_common.conf web.res node4:/etc/drbd.d/
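DRBD uses the on section whose name equals the local uname -n, which is why the comment in web.res says the section names are host names. This sketch lists the host names in a resource file so each node can confirm that it is included. It assumes each on host { header sits on one line, as in web.res above, and res_hosts is a hypothetical name.

```shell
#!/bin/sh
# res_hosts FILE
# Prints the host name of every "on <host> {" section in a DRBD resource file.
res_hosts() {
    awk '$1 == "on" { name = $2; sub(/[{].*/, "", name); print name }' "$1"
}

# On each node:
# res_hosts /etc/drbd.d/web.res | grep -qx "$(uname -n)" && echo "this node is in web.res"
```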

Initialize the resource metadata on node3 and node4

[root@node3 drbd.d]# drbdadm create-md web

[root@node4 drbd.d]# drbdadm create-md web

Start DRBD

[root@node3 drbd.d]# service drbd start # start it on both nodes at about the same time; the init script waits for the peer to connect

[root@node4 drbd.d]# service drbd start

Check the status on both nodes

Initially both nodes are Secondary

[root@node3 drbd.d]# drbd-overview

0:web/0 Connected Secondary/Secondary Inconsistent/Inconsistent C r-----

[root@node4 drbd.d]# drbd-overview

0:web/0 Connected Secondary/Secondary Inconsistent/Inconsistent C r-----

Make node3 the primary node

[root@node3 drbd.d]# drbdadm -- --overwrite-data-of-peer primary web

[root@node3 drbd.d]# drbd-overview

0:web/0 Connected Primary/Secondary UpToDate/UpToDate C r-----

[root@node4 drbd.d]# drbd-overview

0:web/0 Connected Secondary/Secondary Inconsistent/Inconsistent C r-----

[root@node4 drbd.d]# drbd-overview

0:web/0 Connected Secondary/Primary UpToDate/UpToDate C r-----

node3 has changed from Secondary to Primary, which means node3 is now the primary node.
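The initial full sync started with --overwrite-data-of-peer can take a while on a large partition. The status only reads UpToDate/UpToDate once that sync has finished. This sketch tests a single drbd-overview line for that state. It assumes the field layout shown above, and drbd_is_synced is a hypothetical name.

```shell
#!/bin/sh
# drbd_is_synced LINE
# LINE is one line of drbd-overview output, e.g.
#   0:web/0 Connected Primary/Secondary UpToDate/UpToDate C r-----
# Succeeds if the connection state is Connected and both disks are UpToDate.
drbd_is_synced() {
    set -- $1                     # split the line into whitespace-separated fields
    [ "$2" = "Connected" ] && [ "$4" = "UpToDate/UpToDate" ]
}

# Wait for the initial sync of resource "web" before running mke2fs:
# until drbd_is_synced "$(drbd-overview | grep ':web/')"; do sleep 10; done
```

During the sync, the second field reads SyncSource or SyncTarget rather than Connected, so the check keeps failing until the sync is complete.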

Create a filesystem and mount it

[root@node3 ~]# mke2fs -t ext4 /dev/drbd0 # format and mount on the primary node

[root@node3 ~]# mount /dev/drbd0 /mnt

Make node4 the primary node

On node3:

[root@node3 ~]# umount /mnt

[root@node3 ~]# drbdadm secondary web

[root@node3 ~]# drbd-overview

0:web/0 Connected Secondary/Secondary UpToDate/UpToDate C r-----

node3 has changed from Primary to Secondary.

On node4:

[root@node4 ~]# drbdadm primary web

[root@node4 ~]# drbd-overview

0:web/0 Connected Primary/Secondary UpToDate/UpToDate C r-----

[root@node4 ~]# mount /dev/drbd0 /mnt

[root@node4 ~]# cd /mnt

[root@node4 mnt]# ls

lost+found

node4 has become Primary, and the filesystem created on node3 can be mounted on node4.

This shows that DRBD is working correctly.

8) Install MySQL

Create the mysql user and group on node3

[root@node3 ~]# groupadd -g 3306 mysql

[root@node3 ~]# useradd -u 3306 -g mysql -s /sbin/nologin -M mysql

[root@node3 ~]# id mysql

uid=3306(mysql) gid=3306(mysql) groups=3306(mysql)

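Create the same user and group on node4 with the same UID and GID (3306). The files on the shared DRBD device are owned by numeric IDs, so the IDs must match on both nodes.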
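To confirm that the mysql IDs really match, compare the output of id mysql on the local node with the peer's, using the SSH trust set up in step 1. This is a sketch only, and same_mysql_ids is a hypothetical name.

```shell
#!/bin/sh
# same_mysql_ids PEER
# Succeeds if `id mysql` prints the same UID/GID/groups locally and on PEER.
same_mysql_ids() {
    local_ids=$(id mysql 2>/dev/null) || return 1
    peer_ids=$(ssh -o BatchMode=yes "root@$1" id mysql 2>/dev/null) || return 1
    [ "$local_ids" = "$peer_ids" ]
}

# On node3: same_mysql_ids node4 && echo "mysql IDs match"
```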

Install MySQL on node3

First obtain the MySQL (MariaDB) package: mariadb-5.5.36-linux-x86_64.tar.gz

[root@node3 ~]# tar xf mariadb-5.5.36-linux-x86_64.tar.gz -C /usr/local/

[root@node3 ~]# cd /usr/local/

[root@node3 local]# ln -sv mariadb-5.5.36-linux-x86_64 mysql

`mysql' -> `mariadb-5.5.36-linux-x86_64'

[root@node3 local]# cd mysql

[root@node3 mysql]# chown root.mysql ./*

[root@node3 mysql]# ll

total 212

drwxr-xr-x 2 root mysql 4096 Oct 11 21:54 bin

-rw-r--r-- 1 root mysql 17987 Feb 24 2014 COPYING

-rw-r--r-- 1 root mysql 26545 Feb 24 2014 COPYING.LESSER

drwxr-xr-x 3 root mysql 4096 Oct 11 21:54 data

drwxr-xr-x 2 root mysql 4096 Oct 11 21:55 docs

drwxr-xr-x 3 root mysql 4096 Oct 11 21:55 include

-rw-r--r-- 1 root mysql 8694 Feb 24 2014 INSTALL-BINARY

drwxr-xr-x 3 root mysql 4096 Oct 11 21:55 lib

drwxr-xr-x 4 root mysql 4096 Oct 11 21:54 man

drwxr-xr-x 11 root mysql 4096 Oct 11 21:55 mysql-test

-rw-r--r-- 1 root mysql 108813 Feb 24 2014 README

drwxr-xr-x 2 root mysql 4096 Oct 11 21:55 scripts

drwxr-xr-x 27 root mysql 4096 Oct 11 21:55 share

drwxr-xr-x 4 root mysql 4096 Oct 11 21:55 sql-bench

drwxr-xr-x 4 root mysql 4096 Oct 11 21:54 support-files

Provide a configuration file

[root@node3 mysql]# cp support-files/my-large.cnf /etc/my.cnf

cp: overwrite `/etc/my.cnf'? y

[root@node3 mysql]# vim /etc/my.cnf

Add the following line to the [mysqld] section: datadir = /mydata/data
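The same edit can be made without an editor. The sketch below adds the datadir line right after the [mysqld] header and skips the edit if a datadir line is already present. It assumes GNU sed, because the one-line form of the a command and -i are GNU extensions. set_datadir is a hypothetical name.

```shell
#!/bin/sh
# set_datadir CNF DIR
# Inserts "datadir = DIR" directly after the [mysqld] header of CNF,
# unless CNF already contains a datadir line. Requires GNU sed.
set_datadir() {
    if grep -q '^[[:space:]]*datadir[[:space:]]*=' "$1"; then
        return 0                                  # already configured
    fi
    sed -i "/^\[mysqld\]/a datadir = $2" "$1"
}

# set_datadir /etc/my.cnf /mydata/data
```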

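Mount DRBD on /mydata/data. node3 must be the DRBD primary again for this: on node4, unmount /mnt and run drbdadm secondary web, then run drbdadm primary web on node3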

[root@node3 mysql]# mkdir -pv /mydata/data

[root@node3 mysql]# mount /dev/drbd0 /mydata/data

[root@node3 mysql]# chown -R mysql.mysql /mydata

Initialize the MySQL data directory

[root@node3 mysql]# scripts/mysql_install_db --datadir=/mydata/data/ --basedir=/usr/local/mysql --user=mysql

Provide an init script for MySQL

[root@node3 mysql]# cp /usr/local/mysql/support-files/mysql.server /etc/init.d/mysqld

[root@node3 mysql]# chmod +x /etc/init.d/mysqld

[root@node3 mysql]# service mysqld start

Starting MySQL.... [ OK ]

Make the MySQL client available

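Install MySQL on node4 the same way as on node3. The data directory already lives on the DRBD device, so it does not need to be initialized again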

Make node4 the primary node

[root@node3 ~]# service mysqld stop

[root@node3 ~]# umount /mydata/data

[root@node3 ~]# drbdadm secondary web

[root@node3 ~]# drbd-overview

0:web/0 Connected Secondary/Secondary UpToDate/UpToDate C r-----

[root@node4 ~]# drbdadm primary web

[root@node4 ~]# drbd-overview

0:web/0 Connected Primary/Secondary UpToDate/UpToDate C r-----

Mount DRBD

[root@node4 mysql]# mkdir -pv /mydata/data

[root@node4 mysql]# mount /dev/drbd0 /mydata/data

[root@node4 mysql]# chown -R mysql.mysql /mydata

Copy the MySQL configuration file and init script from node3 to the corresponding directories on node4.

[root@node3 mysql]# scp /etc/my.cnf node4:/etc/

my.cnf 100% 4924 4.8KB/s 00:00

[root@node3 mysql]# scp /etc/init.d/mysqld node4:/etc/init.d/

mysqld 100% 12KB 11.6KB/s 00:00

Test whether MySQL starts

[root@node4 ~]# service mysqld start

Starting MySQL... [ OK ]

OK means that MySQL started successfully.

The MySQL setup is now complete.

9) Configure cluster resources with crmsh

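Before configuring resources with crmsh, stop DRBD. From now on the cluster starts and promotes DRBD through the ocf:linbit:drbd resource agent, so the drbd init script must not start it at boot. Stop mysqld and unmount /mydata/data on node4 first, because DRBD cannot be stopped while the device is in use.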

Stop DRBD and disable it at boot

On node3:

[root@node3 ~]# service drbd stop

Stopping all DRBD resources: .

[root@node3 ~]# chkconfig drbd off

[root@node3 ~]# chkconfig drbd --list

drbd 0:off 1:off 2:off 3:off 4:off 5:off 6:off

On node4:

[root@node4 ~]# service drbd stop

Stopping all DRBD resources: .

[root@node4 ~]# chkconfig drbd off

[root@node4 ~]# chkconfig drbd --list

drbd 0:off 1:off 2:off 3:off 4:off 5:off 6:off

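Disable STONITH and ignore loss of quorum. This lab has no fencing device. Also, in a two-node cluster the remaining node loses quorum as soon as the other one fails, and by default it would then stop its resources.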

[root@node3 ~]# crm

crm(live)# configure

crm(live)configure# property stonith-enabled=false

crm(live)configure# property no-quorum-policy=ignore

crm(live)configure# verify

crm(live)configure# commit

Add the DRBD resource

[root@node3 ~]# crm

crm(live)# configure

crm(live)configure# primitive mysqldrbd ocf:linbit:drbd params drbd_resource=web op start timeout=240 op stop timeout=100 op monitor role=Master interval=20 timeout=30 op monitor role=Slave interval=30 timeout=30

crm(live)configure# ms ms_mysqldrbd mysqldrbd meta master-max=1 master-node-max=1 clone-max=2 clone-node-max=1 notify=true

Add the filesystem resource

crm(live)configure# primitive mystore ocf:heartbeat:Filesystem params device=/dev/drbd0 directory=/mydata/data fstype=ext4 op start timeout=60 op stop timeout=60

crm(live)configure# verify

crm(live)configure# colocation mystore_with_ms_mysqldrbd inf: mystore ms_mysqldrbd:Master # run mystore on the node where ms_mysqldrbd is Master

crm(live)configure# order mystore_after_ms_mysqldrbd mandatory: ms_mysqldrbd:promote mystore:start # start mystore only after ms_mysqldrbd has been promoted

crm(live)configure# verify

Add the MySQL resource

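crm(live)configure# primitive mysqld lsb:mysqld

crm(live)configure# colocation mysqld_with_mystore inf: mysqld mystore # keep mysqld on the same node as mystore

crm(live)configure# order mysqld_after_mystore inf: mystore mysqld # start mysqld only after mystore is mounted

crm(live)configure# verify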

Add the VIP resource

crm(live)configure# primitive myip ocf:heartbeat:IPaddr params ip=172.16.16.8 op monitor interval=30s timeout=20s

crm(live)configure# colocation myip_with_ms_mysqldrbd_master inf: myip ms_mysqldrbd:Master # keep the VIP on the node where DRBD is Master

crm(live)configure# verify

crm(live)configure# commit

Review the configuration

crm(live)configure# show

node node3 \

attributes standby="on"

node node4

primitive myip ocf:heartbeat:IPaddr \

params ip="172.16.16.8" \

op monitor interval="30s" timeout="20s"

primitive mysqld lsb:mysqld

primitive mysqldrbd ocf:linbit:drbd \

params drbd_resource="web" \

op start timeout="240" interval="0" \

op stop timeout="100" interval="0" \

op monitor role="Master" interval="20" timeout="30" \

op monitor role="Slave" interval="30" timeout="30"

primitive mystore ocf:heartbeat:Filesystem \

params device="/dev/drbd0" directory="/mydata/data" fstype="ext4" \

op start timeout="60" interval="0" \

op stop timeout="60" interval="0"

ms ms_mysqldrbd mysqldrbd \

meta master-max="1" master-node-max="1" clone-max="2" clone-node-max="1" notify="true" target-role="Started"

colocation myip_with_ms_mysqldrbd inf: ms_mysqldrbd:Master myip

colocation mysqld_with_mystore inf: mysqld mystore

colocation mystore_with_ms_mysqldrbd inf: mystore ms_mysqldrbd:Master

order mysqld_after_mystore inf: mystore mysqld

order mystore_after_ms_mysqldrbd inf: ms_mysqldrbd:promote mystore:start

property $id="cib-bootstrap-options" \

stonith-enabled="false" \

no-quorum-policy="ignore" \

dc-version="1.1.10-14.el6-368c726" \

cluster-infrastructure="classic openais (with plugin)" \

expected-quorum-votes="2" \

last-lrm-refresh="1413090998"

rsc_defaults $id="rsc-options" \

resource-stickiness="100"

10) Test whether the highly available MySQL works

First check the status of the HA cluster

crm(live)# status

Last updated: Sun Oct 12 14:27:51 2014

Last change: Sun Oct 12 14:27:47 2014 via crm_attribute on node3

Stack: classic openais (with plugin)

Current DC: node3 - partition with quorum

Version: 1.1.10-14.el6-368c726

2 Nodes configured, 2 expected votes

5 Resources configured

Online: [ node3 node4 ]

Master/Slave Set: ms_mysqldrbd [mysqldrbd]

Masters: [ node3 ]

Slaves: [ node4 ]

mystore (ocf::heartbeat:Filesystem): Started node3

myip (ocf::heartbeat:IPaddr): Started node3

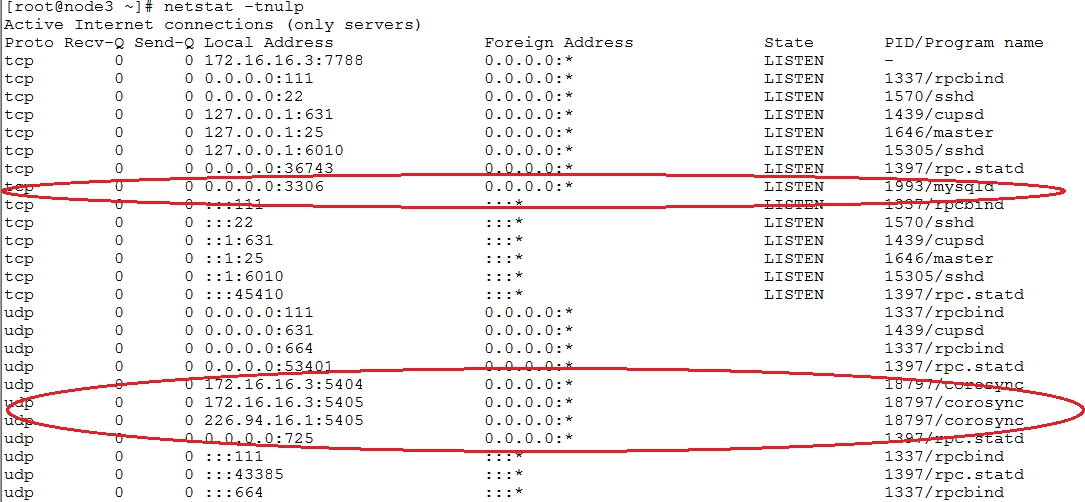

Both node3 and node4 are online. node3 is currently the primary node, and all resources run on it. Next, check whether MySQL has been started on node3.

MySQL is running on node3.

Now simulate node3 going offline by putting it in standby

[root@node3 ~]# crm

crm(live)# node

crm(live)node# standby

crm(live)node#

Now check the status of the MySQL HA cluster again

[root@node4 ~]# crm status

Last updated: Sun Oct 12 14:39:38 2014

Last change: Sun Oct 12 14:39:22 2014 via crm_attribute on node3

Stack: classic openais (with plugin)

Current DC: node3 - partition with quorum

Version: 1.1.10-14.el6-368c726

2 Nodes configured, 2 expected votes

5 Resources configured

Node node3: standby

Online: [ node4 ]

Master/Slave Set: ms_mysqldrbd [mysqldrbd]

Masters: [ node4 ]

Stopped: [ node3 ]

mystore (ocf::heartbeat:Filesystem): Started node4

myip (ocf::heartbeat:IPaddr): Started node4

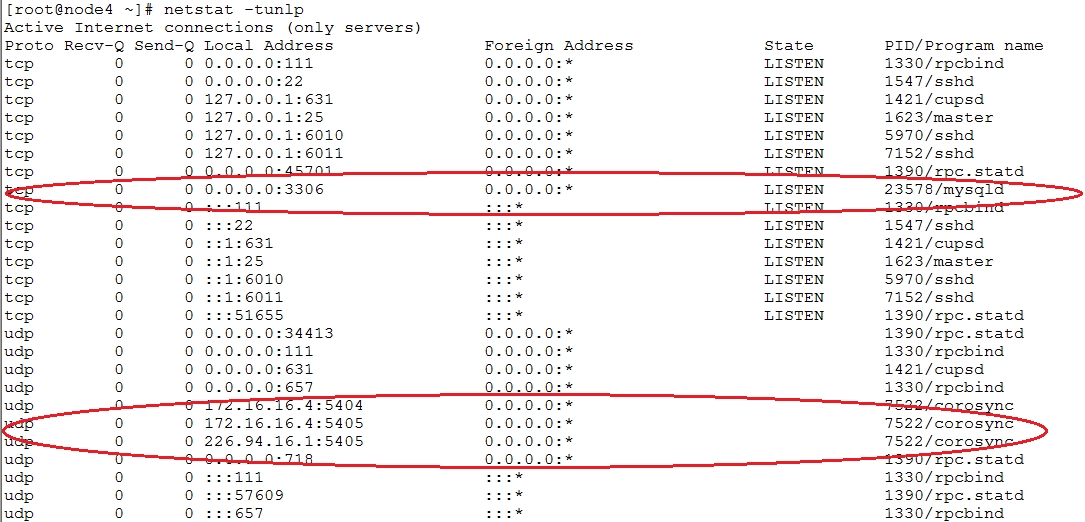

node4 has become the primary node, node3 is in standby, and all resources have moved to node4.

MySQL keeps working on node4.

11) Test whether data can be written

Grant access to clients from the allowed IP range

[root@node4 ~]# /usr/local/mysql/bin/mysql

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 2

Server version: 5.5.36-MariaDB-log MariaDB Server

Copyright (c) 2000, 2014, Oracle, Monty Program Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> grant all on *.* to root@"172.16.16.%" identified by "123456";

Query OK, 0 rows affected (0.12 sec)

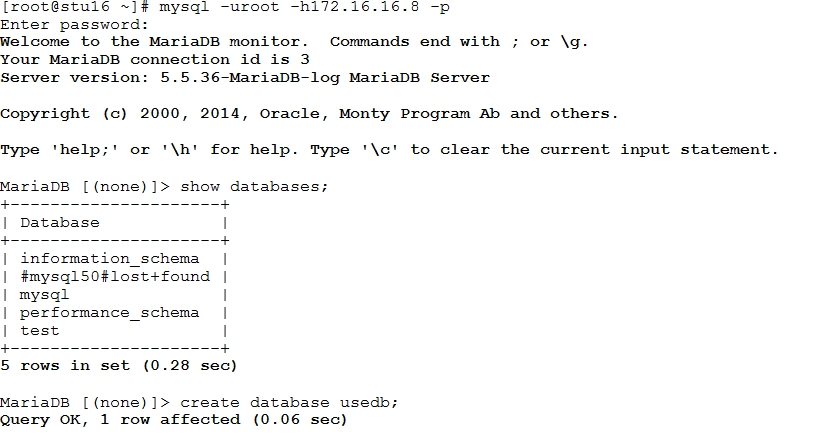

Test connecting to the database from another host

On stu16 (IP 172.16.16.1), connecting, querying data and creating data all work.
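To see which node is actually answering on the VIP, ask the server for its host name before and after putting node3 in standby. This sketch assumes the MariaDB/MySQL client is in the PATH on the client host. 172.16.16.8 is the VIP configured above, and the credentials are the ones granted above. which_node is a hypothetical name.

```shell
#!/bin/sh
# which_node VIP USER PASSWORD
# Prints the host name of the MySQL/MariaDB server answering on VIP.
which_node() {
    # -N: no column names, -B: batch (tab-separated) output, -e: run one statement
    mysql -h "$1" -u "$2" -p"$3" -N -B -e 'SELECT @@hostname'
}

# which_node 172.16.16.8 root 123456   # prints the serving node, e.g. node3 or node4
```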

If crm status reports errors, enter resource mode and run cleanup on the affected resource. To change a resource with edit in configure mode, first stop it in resource mode (stop), run cleanup, and then edit and save it.

Note:

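If the mysqld service is killed, it is not restarted automatically. The node itself has not failed, so its resources are not moved. Pacemaker only checks the state of a resource that has a monitor operation defined, and the mysqld primitive above has none. As long as the node is healthy, nothing happens even though the service is down. For pacemaker to detect the failure and recover the resource (restart it or move it to the other node), you must define a monitor operation for it.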
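For example, the mysqld primitive could be defined with a monitor operation, as sketched below. The 20s interval and timeout are illustrative values, not tuned ones. Because mysqld already exists, you would apply this by editing it in configure mode (edit mysqld) after stopping it as described above, rather than by entering a new primitive.

```text
primitive mysqld lsb:mysqld \
    op monitor interval=20s timeout=20s
```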

That's all for our highly available MySQL cluster.