Cluster environment

| hostname | ip | vip | role |
| --- | --- | --- | --- |
| master1 | 192.168.6.101 | 192.168.6.110 | etcd cluster, k8s-master, keepalived |
| master2 | 192.168.6.102 | 192.168.6.110 | etcd cluster, k8s-master, keepalived |
| master3 | 192.168.6.103 | 192.168.6.110 | etcd cluster, k8s-master, keepalived |
| node1 | 192.168.6.104 | | k8s-node |
| node2 | 192.168.6.105 | | k8s-node |

Software versions

OS version: CentOS 7.4.1708

Kernel version: 3.10.0-693.17.1.el7.x86_64

etcd version: 3.2.11

docker version: 1.12.6

kubernetes version: 1.9.2

Preparation

1. Update the package repositories

yum -y install epel-release

yum -y update

yum -y install wget net-tools

2. Stop the firewall

systemctl stop firewalld

systemctl disable firewalld

3. Synchronize the clock

/usr/sbin/ntpdate asia.pool.ntp.org

4. Disable swap

swapoff -a
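To confirm that `swapoff -a` took effect, the active swap areas listed in /proc/swaps can be counted. This is a minimal sketch and not part of the original procedure: the helper name `active_swap_count` is hypothetical, and it assumes the usual /proc/swaps layout of one header line followed by one line per active swap area.

```shell
# Hypothetical helper: count active swap areas in a /proc/swaps-style file.
# Assumes the first line is a header and every further non-empty line is one area.
active_swap_count() {
    awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "${1:-/proc/swaps}"
}

# Usage on a real host (no argument reads /proc/swaps):
#   active_swap_count
```

A result of 0 means no swap area is active, which is the state kubelet expects.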

sed -i 's/.*swap.*/#&/' /etc/fstab

PS: If you skip this step, kubeadm init fails with the following error:

kubelet: error: failed to run Kubelet: Running with swap on is not supported, please disable swap! or set --fail-swap-on

5. Let iptables process bridged traffic

cat <<EOF > /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
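Before loading the settings, the file that was just written can be double-checked. The sketch below is an illustration only; the helper name `bridge_sysctls_enabled` is hypothetical, and it assumes the simple `key = value` layout used in the file above.

```shell
# Hypothetical helper: succeed only if both bridge keys are set to 1 in the given file.
bridge_sysctls_enabled() {
    for key in net.bridge.bridge-nf-call-iptables net.bridge.bridge-nf-call-ip6tables; do
        # Each key must appear on its own line as "<key> = 1" (spacing is flexible).
        grep -Eq "^[[:space:]]*${key}[[:space:]]*=[[:space:]]*1[[:space:]]*$" "$1" || return 1
    done
}

# Usage:
#   bridge_sysctls_enabled /etc/sysctl.d/k8s.conf && echo "bridge settings look right"
```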

sysctl -p /etc/sysctl.d/k8s.conf

PS: If you skip this step, kubeadm init fails with the following error:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables

6. Edit the hosts file

cat >> /etc/hosts << EOF
192.168.6.101 master1
192.168.6.102 master2
192.168.6.103 master3
192.168.6.104 node1
192.168.6.105 node2
EOF

7. Set up passwordless SSH login

ssh-keygen -t rsa

ssh-copy-id [email protected]

ssh-copy-id [email protected]

ssh-copy-id [email protected]

ssh-copy-id [email protected]

ssh-copy-id [email protected]

8. Disable SELinux

sed -i '/^SELINUX=/s/SELINUX=.*/SELINUX=disabled/g' /etc/selinux/config

9. Reboot the server

reboot

etcd cluster installation

1. Download cfssl, cfssljson, and cfssl-certinfo

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

chmod +x cfssl_linux-amd64

mv cfssl_linux-amd64 /usr/local/bin/cfssl

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

chmod +x cfssljson_linux-amd64

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

chmod +x cfssl-certinfo_linux-amd64

mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

2. Create the files needed to generate the keys

cat > ca-csr.json <<EOF
{
  "CN": "kubernetes",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "BeiJing",
      "L": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "8760h"
    },
    "profiles": {
      "kubernetes": {
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ],
        "expiry": "8760h"
      }
    }
  }
}
EOF

cat > etcd-csr.json <<EOF
{
  "CN": "etcd",
  "hosts": [
    "127.0.0.1",
    "192.168.6.101",
    "192.168.6.102",
    "192.168.6.103",
    "192.168.6.110"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "BeiJing",
      "L": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

3. Generate the keys
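Before signing, it may be worth checking that every etcd member IP (and the VIP) appears in the hosts list of etcd-csr.json, since these addresses end up in the certificate that all three members share. The following is a minimal sketch; the helper name `csr_lists_hosts` is hypothetical, and it simply looks for each address as a quoted string in the file rather than parsing the JSON.

```shell
# Hypothetical helper: succeed only if every given address is quoted in the CSR file.
# This is a plain text search, not a JSON parser.
csr_lists_hosts() {
    csr_file=$1
    shift
    for ip in "$@"; do
        # The surrounding quotes keep 192.168.6.10 from matching 192.168.6.101.
        grep -Fq "\"${ip}\"" "$csr_file" || { echo "missing: ${ip}" >&2; return 1; }
    done
}

# Usage:
#   csr_lists_hosts etcd-csr.json 192.168.6.101 192.168.6.102 192.168.6.103 192.168.6.110
```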

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

cfssl gencert -ca=ca.pem \
  -ca-key=ca-key.pem \
  -config=ca-config.json \
  -profile=kubernetes etcd-csr.json | cfssljson -bare etcd

mkdir -p /etc/etcd/ssl

cp etcd.pem etcd-key.pem ca.pem /etc/etcd/ssl/

4. Download etcd

wget https://github.com/coreos/etcd/releases/download/v3.2.11/etcd-v3.2.11-linux-amd64.tar.gz

tar -xvf etcd-v3.2.11-linux-amd64.tar.gz

cp etcd-v3.2.11-linux-amd64/etcd* /usr/local/bin

5. Create the etcd systemd service file

cat > /etc/systemd/system/etcd.service <<EOF
[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target
Documentation=https://github.com/coreos

[Service]
Type=notify
WorkingDirectory=/var/lib/etcd/
ExecStart=/usr/local/bin/etcd \
  --name=etcd-host1 \
  --cert-file=/etc/etcd/ssl/etcd.pem \
  --key-file=/etc/etcd/ssl/etcd-key.pem \
  --peer-cert-file=/etc/etcd/ssl/etcd.pem \
  --peer-key-file=/etc/etcd/ssl/etcd-key.pem \
  --trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --peer-trusted-ca-file=/etc/etcd/ssl/ca.pem \
  --initial-advertise-peer-urls=https://192.168.6.101:2380 \
  --listen-peer-urls=https://192.168.6.101:2380 \
  --listen-client-urls=https://192.168.6.101:2379,http://127.0.0.1:2379 \
  --advertise-client-urls=https://192.168.6.101:2379 \
  --initial-cluster-token=etcd-cluster-0 \
  --initial-cluster=etcd-host1=https://192.168.6.101:2380,etcd-host2=https://192.168.6.102:2380,etcd-host3=https://192.168.6.103:2380 \
  --initial-cluster-state=new \
  --data-dir=/var/lib/etcd
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target
EOF

6. Sync to the other etcd nodes
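Each etcd member needs its own --name and its own IP in the peer and client URL flags, while the --initial-cluster list must stay identical on all members. Editing the copied unit file by hand works; the sketch below shows one way to script it instead. The helper name `render_etcd_unit` is hypothetical and not part of the original procedure.

```shell
# Hypothetical helper: print a copy of an etcd unit file adjusted for one member.
#   $1 = source unit file, $2 = member name, $3 = member IP
# Only --name and the four per-member URL flags are rewritten;
# --initial-cluster is deliberately left untouched.
render_etcd_unit() {
    src=$1 name=$2 ip=$3
    sed -E \
        -e "s#(--name=)[^ ]+#\1${name}#" \
        -e "s#(--initial-advertise-peer-urls=https://)[0-9.]+#\1${ip}#" \
        -e "s#(--listen-peer-urls=https://)[0-9.]+#\1${ip}#" \
        -e "s#(--listen-client-urls=https://)[0-9.]+#\1${ip}#" \
        -e "s#(--advertise-client-urls=https://)[0-9.]+#\1${ip}#" \
        "$src"
}

# Usage (writes a per-node copy that can then be sent with scp):
#   render_etcd_unit /etc/systemd/system/etcd.service etcd-host2 192.168.6.102 > etcd.service.master2
```

The substitutions match on the flag names rather than on line positions, so they behave the same whether the ExecStart flags sit on separate lines or on one long line.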

scp -r /etc/etcd/ master2:/etc/

scp -r /etc/etcd/ master3:/etc/

scp /usr/local/bin/etcd* master2:/usr/local/bin/

scp /usr/local/bin/etcd* master3:/usr/local/bin/

scp /etc/systemd/system/etcd.service master2:/etc/systemd/system/

scp /etc/systemd/system/etcd.service master3:/etc/systemd/system/

PS: Remember to adjust the following flags in etcd.service on each node:

--name

--initial-advertise-peer-urls

--listen-peer-urls

--listen-client-urls

--advertise-client-urls

7. Start the etcd cluster

mkdir -pv /var/lib/etcd

systemctl daemon-reload

systemctl enable etcd

systemctl start etcd

8. Verify the etcd cluster status

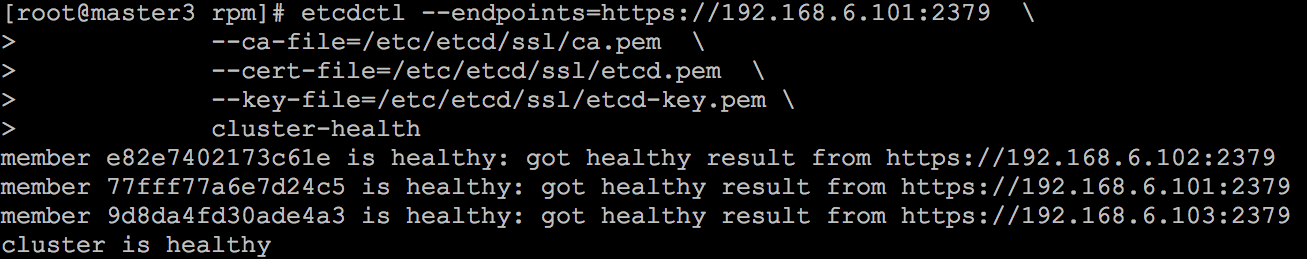

etcdctl --endpoints=https://192.168.6.101:2379 \

--ca-file=/etc/etcd/ssl/ca.pem \

--cert-file=/etc/etcd/ssl/etcd.pem \

--key-file=/etc/etcd/ssl/etcd-key.pem \

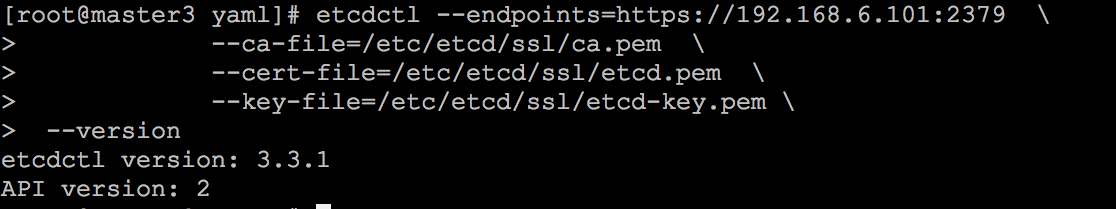

cluster-health說明:目前ETCDCTL_API版本號爲2,需要更改版本號3,否則無法操作集羣信息

更改API版本,具體操作如下

export ETCDCTL_API=3keepalived安裝

1.分別在三臺master服務器上安裝keepalived

yum -y install keepalived2.生成配置文件,並同步到其它節點,注意修改註釋部分

cat > /etc/keepalived/keepalived.conf << EOF

global_defs {

router_id LVS_k8s

}

vrrp_script CheckK8sMaster {

script "curl -k https://192.168.6.110:6443" # vip

interval 3

timeout 9

fall 2

rise 2

}

vrrp_instance VI_1 {

state MASTER

interface ens160 # 本地網卡名稱

virtual_router_id 61

priority 120 # 權重,要唯一

advert_int 1

mcast_src_ip 192.168.6.101 # 本地IP

nopreempt

authentication {

auth_type PASS

auth_pass sqP05dQgMSlzrxHj

}

unicast_peer {

#192.168.6.101 # 註釋本地IP

192.168.6.102

192.168.6.103

}

virtual_ipaddress {

192.168.6.110/24 # VIP

}

track_script {

CheckK8sMaster

}

}

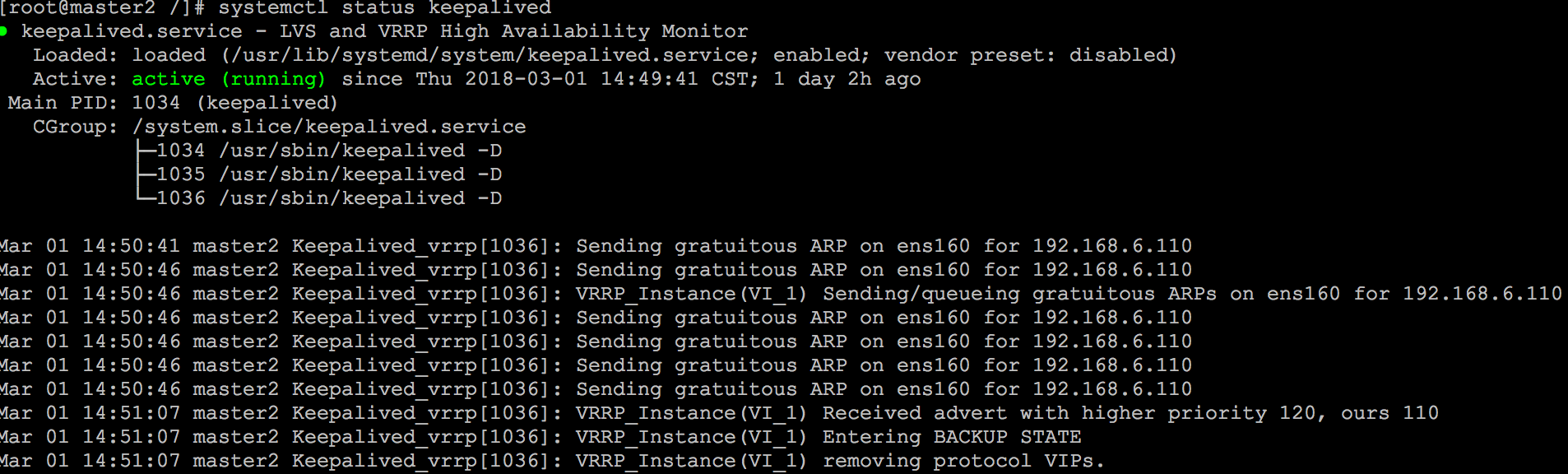

EOF3.分別在三臺服務器上啓動keepalived
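Before starting keepalived, each node's copy of keepalived.conf needs its own unicast_peer list: every master IP except the local one. The sketch below prints that list for a given node. The helper name `unicast_peers_for` and the `MASTER_IPS` variable are hypothetical; the addresses are the three masters from the cluster environment.

```shell
# Assumed list of master IPs, taken from the cluster environment of this guide.
MASTER_IPS=${MASTER_IPS:-"192.168.6.101 192.168.6.102 192.168.6.103"}

# Hypothetical helper: print the unicast_peer entries for the node whose IP is $1,
# that is, every master IP except the local one, indented for pasting into the block.
unicast_peers_for() {
    for ip in $MASTER_IPS; do
        [ "$ip" = "$1" ] || printf '        %s\n' "$ip"
    done
}

# Usage on master2:
#   unicast_peers_for 192.168.6.102
```

The priority and mcast_src_ip lines still have to be changed by hand on each node.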

systemctl enable keepalived

systemctl start keepalived

systemctl status keepalived

Install docker

1. Install docker on each of the five servers

yum -y install docker

systemctl enable docker

systemctl start docker

Install the kubernetes cluster

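1. Download the docker images

Link: https://pan.baidu.com/s/1rahyOrU Password: kw12

2. Download the kubernetes rpm packages

Link: https://pan.baidu.com/s/1dgVjWU Password: 1ejn

3. Download the yaml files

Link: https://pan.baidu.com/s/1gfTnLJ1 Password: 9sbl

4. Move the downloaded files to /root/k8s and sync them to the other 4 servers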

scp -r /root/k8s master2:

scp -r /root/k8s master3:

scp -r /root/k8s node1:

scp -r /root/k8s node2:

5. Run the following commands on each of the 5 servers

cd /root/k8s/rpm

yum -y install *.rpm

cd /root/k8s/docker-image

for i in *; do docker load < "$i"; done

systemctl daemon-reload

systemctl enable kubelet

systemctl start kubelet

Note: kubelet and docker must use the same cgroup driver

$ grep driver /etc/systemd/system/kubelet.service.d/10-kubeadm.conf

Environment="KUBELET_CGROUP_ARGS=--cgroup-driver=systemd"

$ grep driver /usr/lib/systemd/system/docker.service

--exec-opt native.cgroupdriver=systemd \

6. Initialize kubernetes
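Before running kubeadm init, the cgroup driver comparison from the previous step can be scripted so that a mismatch is caught early. This is a minimal sketch: the helper names `extract_cgroup_driver` and `drivers_match` are hypothetical, and it assumes the driver appears in each file as `cgroup-driver=<name>` or `cgroupdriver=<name>`, as in the grep output above.

```shell
# Hypothetical helper: print the first cgroup driver name found in a file.
# Matches both "--cgroup-driver=systemd" (kubelet) and "native.cgroupdriver=systemd" (docker).
extract_cgroup_driver() {
    sed -n -E 's/.*cgroup-?driver=([a-z]+).*/\1/p' "$1" | head -n 1
}

# Hypothetical helper: succeed only if both files name the same, non-empty driver.
#   $1 = kubelet drop-in file, $2 = docker unit file
drivers_match() {
    kubelet_driver=$(extract_cgroup_driver "$1")
    docker_driver=$(extract_cgroup_driver "$2")
    [ -n "$kubelet_driver" ] && [ "$kubelet_driver" = "$docker_driver" ]
}

# Usage:
#   drivers_match /etc/systemd/system/kubelet.service.d/10-kubeadm.conf \
#                 /usr/lib/systemd/system/docker.service || echo "cgroup driver mismatch"
```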

cd /root/k8s/yaml

kubeadm init --config kubeadm-config.yaml --ignore-preflight-errors=swapPS:記住 kubeadm join --token 開頭的信息,加入節點用

如果初始化失敗或者想重置集羣,可以使用以下命令

$ kubeadm reset

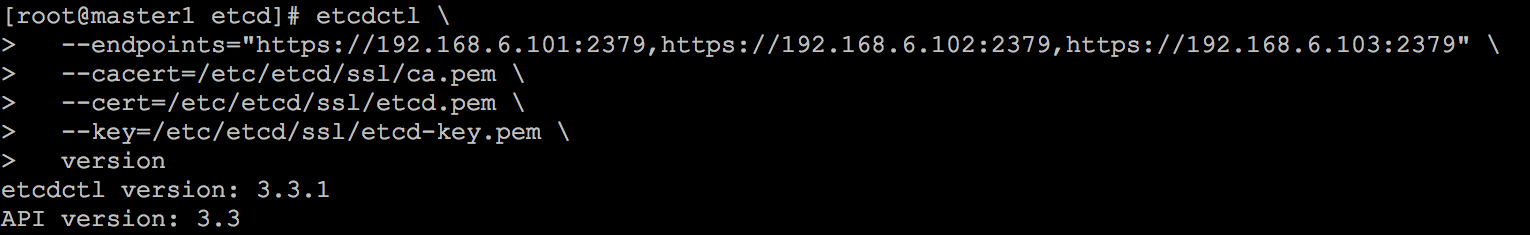

$ etcdctl \

--endpoints="https://192.168.6.101:2379,https://192.168.6.102:2379,https://192.168.6.103:2379" \

--cacert=/etc/etcd/ssl/ca.pem \

--cert=/etc/etcd/ssl/etcd.pem \

--key=/etc/etcd/ssl/etcd-key.pem \

del /registry --prefix7.kubernetes管理權限授權

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source ~/.bash_profile

8. Install the flannel network add-on

The Network value in kube-flannel.yaml must match the podSubnet value in kubeadm-config.yaml.
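The requirement that the two values agree can be checked with a short script before creating the flannel resources. The sketch below is an illustration under stated assumptions: the helper names are hypothetical, kube-flannel.yaml is assumed to contain a line of the form `"Network": "<cidr>"`, and kubeadm-config.yaml a line of the form `podSubnet: <cidr>`. Neither file is shown in this guide, so adjust the patterns if yours differ.

```shell
# Hypothetical helper: print the first "Network" value found in a flannel manifest.
flannel_network() {
    sed -n -E 's/.*"Network"[[:space:]]*:[[:space:]]*"([^"]+)".*/\1/p' "$1" | head -n 1
}

# Hypothetical helper: print the first podSubnet value found in a kubeadm config.
pod_subnet() {
    sed -n -E 's/^[[:space:]]*podSubnet:[[:space:]]*"?([^"[:space:]]+)"?.*/\1/p' "$1" | head -n 1
}

# Succeed only if both values were found and are identical.
#   $1 = kube-flannel.yaml, $2 = kubeadm-config.yaml
pod_network_matches() {
    net=$(flannel_network "$1")
    subnet=$(pod_subnet "$2")
    [ -n "$net" ] && [ "$net" = "$subnet" ]
}

# Usage:
#   pod_network_matches kube-flannel.yaml kubeadm-config.yaml || echo "Network and podSubnet differ"
```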

kubectl create -f kube-flannel.yaml

9. Deploy the other master nodes

Sync the kubernetes pki directory to the other two master nodes

scp -r /etc/kubernetes/pki master2:/etc/kubernetes/

scp -r /etc/kubernetes/pki master3:/etc/kubernetes/

Run the following commands on master2 and master3

cd /root/k8s/yaml

kubeadm init --config kubeadm-config.yaml --ignore-preflight-errors=swap

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source ~/.bash_profile

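PS: For easier testing, you can also remove the taint from the master nodes so that pods can be scheduled onto them. In this test environment I added two worker nodes instead.

kubectl taint nodes --all node-role.kubernetes.io/master-

To restore the taint:

kubectl taint nodes --all node-role.kubernetes.io/master=:NoSchedule

10. Add the kubernetes nodes by running the following command on node1 and node2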

kubeadm join --token be0204.4f256def3933a7d6 192.168.6.110:6443 --discovery-token-ca-cert-hash sha256:9b1677f2a9121e89341daa5ce0dad0da2214cf1210857e1369033c43ad60b559

The command above is printed when kubeadm init succeeds. If you have lost it, it can be retrieved with the following command:

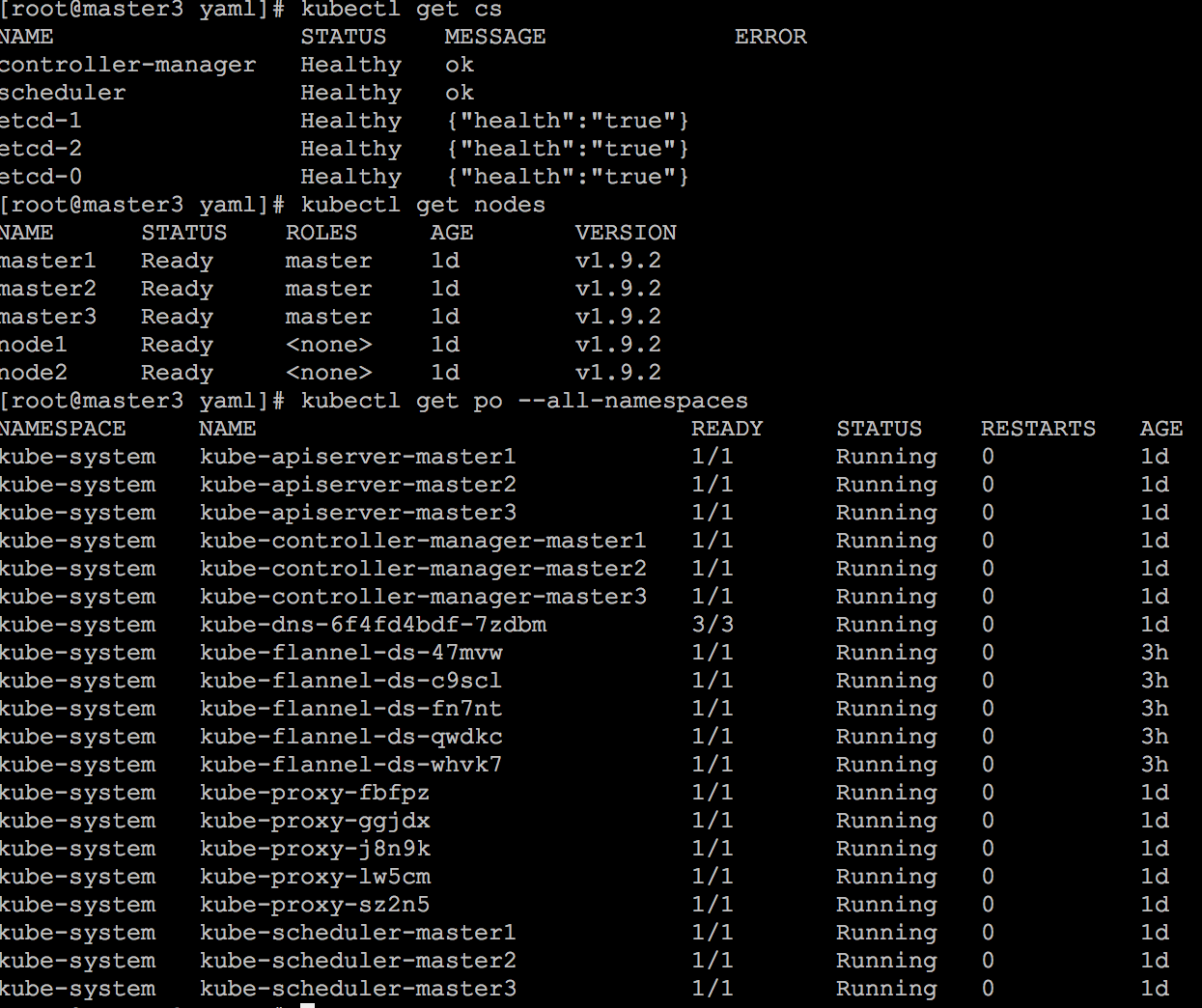

kubeadm token create --print-join-command

11. Verify the cluster
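One way to verify the cluster is to count the nodes that report Ready; with three masters and two workers the expected count is 5. The following is a minimal sketch, assuming kubectl is configured through the KUBECONFIG setting from the earlier step and that the second column of `kubectl get nodes` is STATUS. The helper name `count_ready_nodes` is hypothetical.

```shell
# Hypothetical helper: count nodes whose STATUS column is exactly "Ready".
# Nodes shown as "NotReady" or "Ready,SchedulingDisabled" are not counted.
count_ready_nodes() {
    kubectl get nodes --no-headers | awk '$2 == "Ready" { n++ } END { print n + 0 }'
}

# Usage:
#   [ "$(count_ready_nodes)" -eq 5 ] && echo "all nodes are Ready"
```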

Install the dashboard

1. Install dashboard, heapster, and related components

cd /root/k8s/yaml

kubectl create -f dashboard.yaml \
  -f dashboard-rbac-admin.yml \
  -f admin-user.yaml \
  -f grafana.yaml \
  -f heapster-rbac.yaml \
  -f heapster.yaml \
  -f influxdb.yaml

PS: To make login easier, the port is exposed on the host by changing the dashboard Service type to NodePort

kind: Service

apiVersion: v1

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

namespace: kube-system

spec:

type: NodePort

ports:

- port: 443

targetPort: 8443

nodePort: 32666

selector:

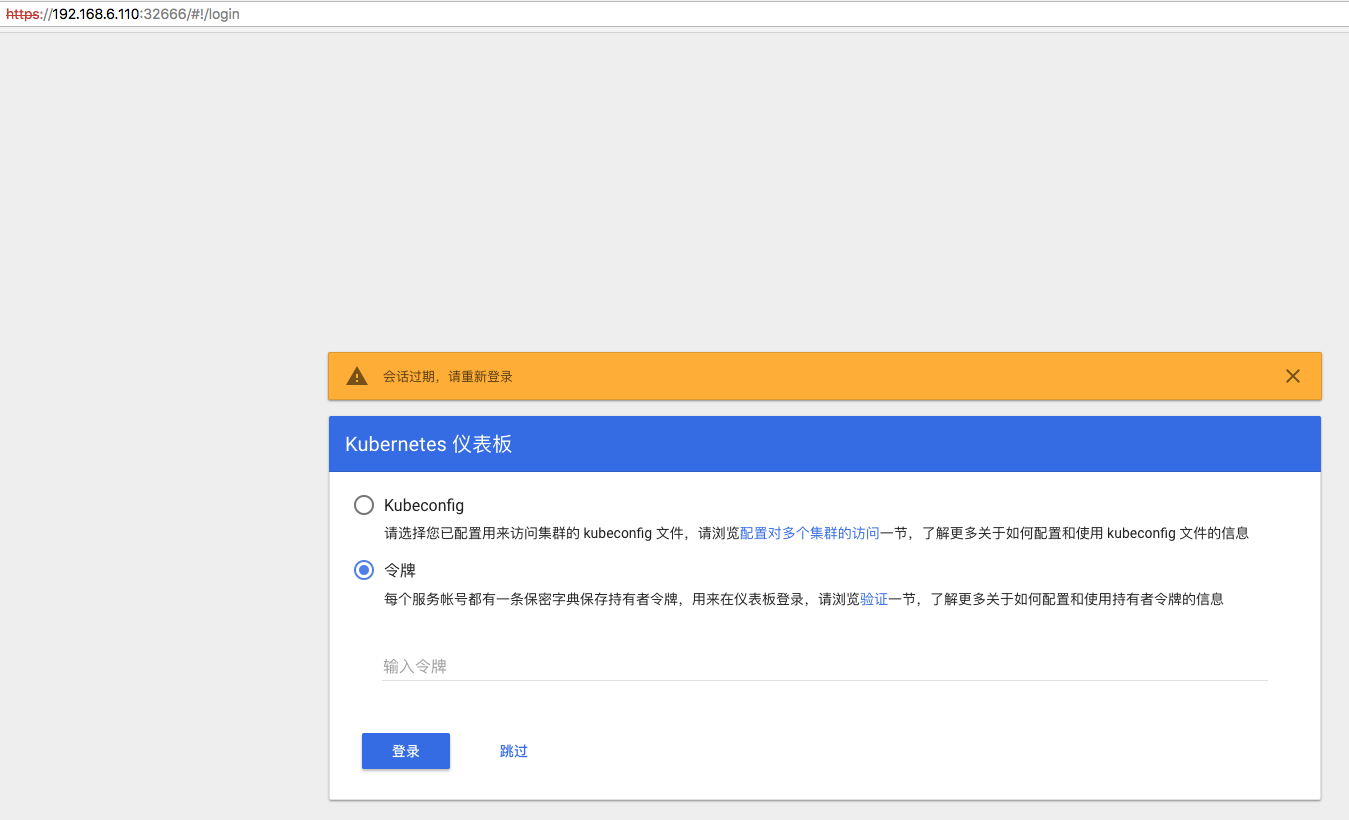

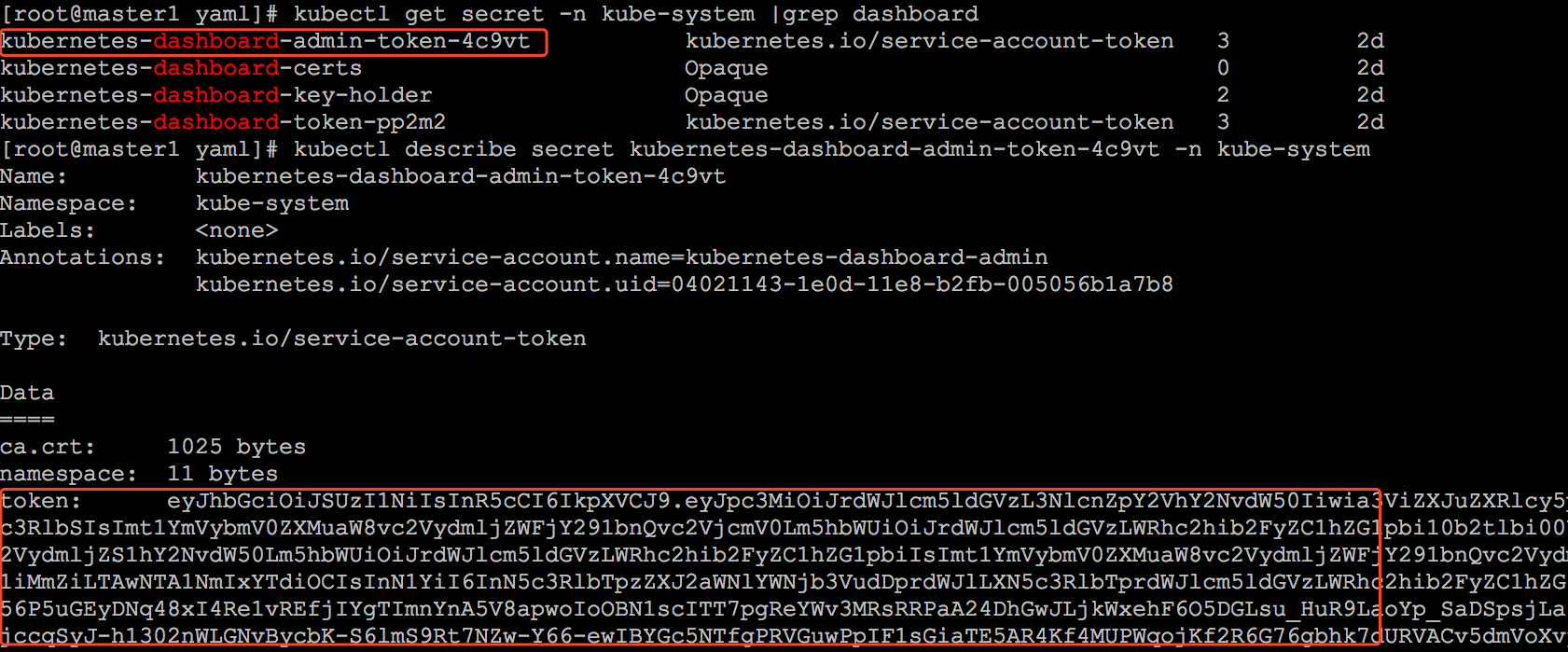

k8s-app: kubernetes-dashboard2.token方式(令牌登錄)登錄dashboard

Enter the token value to log in.
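The token itself can be read from the service account secret. The sketch below is an assumption-heavy illustration: it supposes that admin-user.yaml (whose contents are not shown in this guide) creates a service account named admin-user in the kube-system namespace, so that its secret name starts with admin-user-token. The helper name `admin_user_token` is hypothetical.

```shell
# Hypothetical helper: print the decoded bearer token of the assumed admin-user account.
# Assumes a secret whose name contains "admin-user-token" exists in kube-system.
admin_user_token() {
    secret=$(kubectl -n kube-system get secret -o name | grep 'admin-user-token' | head -n 1)
    [ -n "$secret" ] || { echo "no admin-user token secret found" >&2; return 1; }
    # Secret data is stored base64-encoded, so decode it before printing.
    kubectl -n kube-system get "$secret" -o jsonpath='{.data.token}' | base64 -d
}

# Usage:
#   admin_user_token
```

Paste the printed value into the token field of the dashboard login page.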