I. Purpose of the Experiment

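Software load balancing is generally implemented in one of two ways: at the operating-system level or by a third-party application. LVS is a software load balancer implemented in the Linux operating system itself, whereas HAProxy is a third-party application. HAProxy is much simpler to use than LVS, but like LVS it cannot provide high availability on its own: if the HAProxy node fails, the whole site is affected. This article shows how to combine HAProxy with Keepalived to build a highly available web service with dynamic/static content separation.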

II. Lab Environment and Preparation

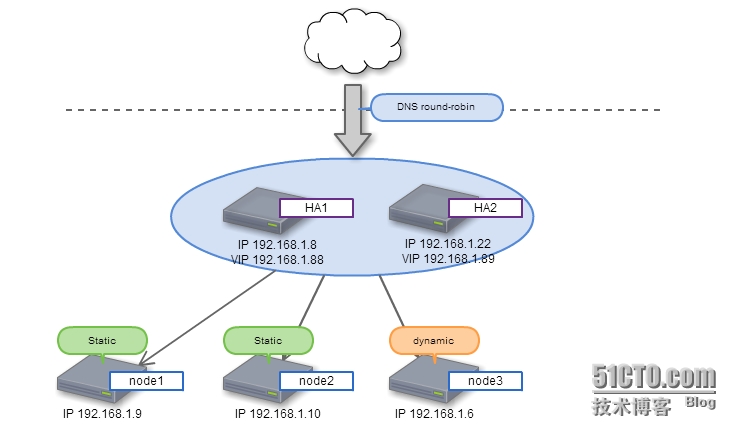

1. Lab topology

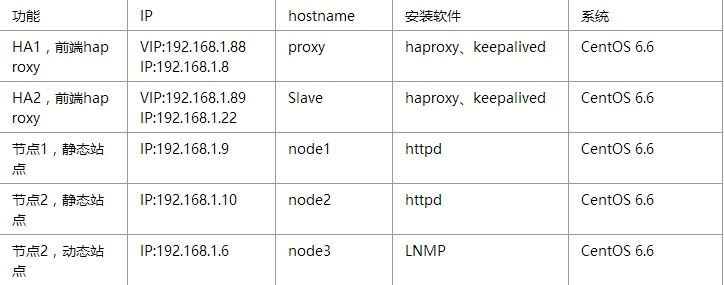

2. Environment overview

3. Synchronize the clocks

[root@proxy ~]# ntpdate 202.120.2.101

[root@node1 ~]# ntpdate 202.120.2.101

[root@node2 ~]# ntpdate 202.120.2.101

[root@hpf-linux ~]# ntpdate 202.120.2.101

[root@Slave ~]# ntpdate 202.120.2.101

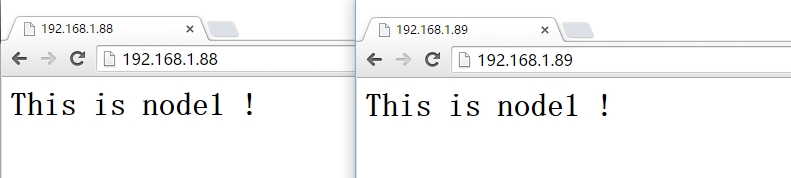

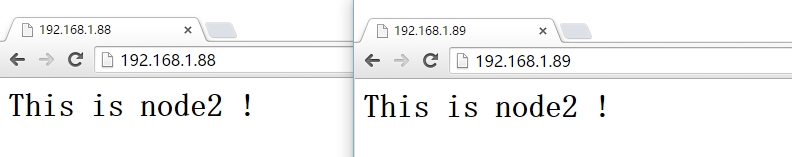

4. Install and start httpd on node1 and node2 and provide test pages

[root@node1 ~]# rpm -q httpd

httpd-2.2.15-45.el6.centos.x86_64

[root@node1 ~]# cat /www/a.com/htdoc/index.html

<h1>This is node1 !</h1>

[root@node1 ~]# service httpd start

[root@node2 ~]# rpm -q httpd

httpd-2.2.15-45.el6.centos.x86_64

[root@node2 ~]# cat /www/a.com/htdoc/index.html

<h1>This is node2 !</h1>

[root@node2 ~]# service httpd start

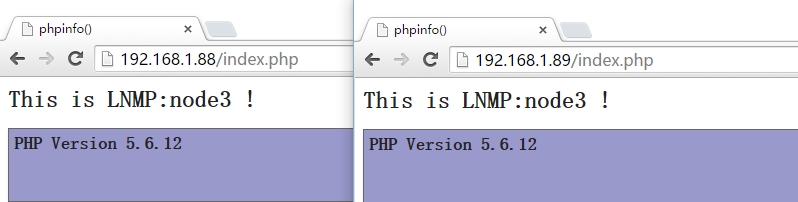

5. Install the LNMP dynamic site and provide a test page

Installing LNMP is not covered here. The test page is as follows:

[root@hpf-linux ~]# cat /www/a.com/index.php

<h1>This is LNMP:node3 !</h1>

<?php phpinfo(); ?>

6. Verify that the service on each node is running

[root@proxy htdoc]# curl http://192.168.1.9

<h1>This is node1 !</h1>

[root@proxy htdoc]# curl http://192.168.1.10

<h1>This is node2 !</h1>

[root@proxy htdoc]# curl http://192.168.1.6 |head

% Total % Received % Xferd Average Speed Time Time Time Current

Dload Upload Total Spent Left Speed

100 75128 0 75128 0 0 1044k 0 --:--:-- --:--:-- --:--:-- 1063k

<h1>This is LNMP:node3 !</h1>
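The manual curl checks above can be wrapped in a small helper. The following is only a sketch: the function name check_backends is hypothetical and not part of the original setup, and it assumes that curl is installed on the proxy node and that a healthy node answers with HTTP 200.

```shell
# check_backends URL...
# Hypothetical helper: print OK/FAIL for each URL and return non-zero
# if any of them did not answer with HTTP 200.
check_backends() {
    local url code rc
    rc=0
    for url in "$@"; do
        # -s: silent, -o /dev/null: discard the body,
        # -w '%{http_code}': print only the HTTP status code
        code=$(curl -s -o /dev/null -w '%{http_code}' --max-time 3 "$url")
        if [ "$code" = "200" ]; then
            echo "OK   $url"
        else
            echo "FAIL $url (status: ${code:-none})"
            rc=1
        fi
    done
    return "$rc"
}
```

For the nodes in this lab it could be called as `check_backends http://192.168.1.9 http://192.168.1.10 http://192.168.1.6`.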

III. Install and Configure HAProxy

1. Install haproxy on the HA1 node and provide the configuration file

[root@proxy ~]# rpm -q haproxy

haproxy-1.5.4-2.el6_7.1.x86_64

[root@proxy ~]# cat /etc/haproxy/haproxy.cfg

global

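log 127.0.0.1 local0 # logging: send all logs to the local syslog daemon using facility local0

log 127.0.0.1 local1 notice

maxconn 25600 # maximum number of concurrent connections

chroot /usr/share/haproxy # chroot the HAProxy process into this directory

uid 99 # UID the process runs as

gid 99 # GID the process runs as

nbproc 1 # number of processes (can be set to more than one)

daemon # run HAProxy in the background as a daemon

#debug # uncomment to enable debug mode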

defaults

log global

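mode http # default mode, one of {http|tcp|health}; http: layer 7, tcp: layer 4, health: only returns OK

option httplog # log HTTP requests in detail

option dontlognull # do not log connections on which no data was exchanged (for example bare health-check probes)

retries 3 # retry a failed connection to a server up to 3 times

option redispatch # if the server a session is bound to goes down, redispatch the request to another healthy server

maxconn 30000 # default maximum number of connections

# contimeout 5000 # connect timeout (legacy keyword)

# clitimeout 5000 # client timeout (legacy keyword)

# srvtimeout 5000 # server timeout (legacy keyword)

timeout check 1s # health-check timeout

timeout http-request 10s # default timeout for receiving a complete HTTP request

timeout queue 1m # default timeout for requests waiting in the queue

timeout connect 10s # default timeout for connecting to a server

timeout client 1m # default client inactivity timeout

timeout server 1m # default server inactivity timeout

timeout http-keep-alive 10s # default keep-alive timeout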

listen stats

mode http

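bind 0.0.0.0:8090 # listening IP address and port

stats enable # enable the HAProxy statistics page

stats refresh 3s # auto-refresh interval of the statistics page

stats hide-version # do not show the HAProxy version on the statistics page

stats uri /haproxyadmin?stats # URI of the statistics page is "/haproxyadmin?stats"

stats realm Haproxy\ Statistics # realm text shown in the authentication prompt

stats auth admin:admin # user name and password for the statistics page

stats admin if TRUE # allow admin actions (such as enabling or disabling servers) from the statistics page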

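frontend allen # define the frontend

bind *:80

mode http

option httpclose # close the HTTP connection after each request

option forwardfor # add an X-Forwarded-For header so that backend servers can obtain the client IP address

acl url_static path_end -i .html .jpg .gif # ACL matching paths that end in ".html", ".jpg" or ".gif"; -i: case-insensitive

acl url_dynamic path_end -i .php

default_backend webservers # backend pool used when no use_backend rule matches

use_backend lamp if url_dynamic # send the request to the lamp backend when the url_dynamic ACL matches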

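backend webservers # define the backend server pool

balance roundrobin # balancing algorithm: weighted round robin

server node1 192.168.1.9:80 check rise 2 fall 1 weight 2

server node2 192.168.1.10:80 check rise 2 fall 1 weight 2

backend lamp

balance source # balancing algorithm: hash of the source address, similar to Nginx's ip_hash

server lamp 192.168.1.6:80 check rise 2 fall 1

#####Note: check: enable health checks for the backend server; rise: number of consecutive successful checks before a server that was down is considered up again; fall: number of consecutive failed checks before a server is considered unavailable; weight: server weight, the larger the value, the larger the share of requests.

Reload the configuration: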

[root@proxy ~]# service haproxy restart
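To make the dynamic/static split easier to reason about, here is a minimal shell sketch of the decision expressed by `acl url_dynamic path_end -i .php`, `use_backend lamp if url_dynamic` and `default_backend webservers`. The function name pick_backend is hypothetical. It only imitates the matching rule and says nothing about how HAProxy implements it.

```shell
# pick_backend REQUEST_URI
# Hypothetical helper: print the backend name that the frontend rules
# above would select for the given request URI.
pick_backend() {
    local path lower
    # path_end looks at the URL path only, so drop any query string first
    path=${1%%\?*}
    # -i makes the match case-insensitive: compare in lower case
    lower=$(printf '%s' "$path" | tr '[:upper:]' '[:lower:]')
    case "$lower" in
        *.php) echo "lamp" ;;        # url_dynamic matched
        *)     echo "webservers" ;;  # fall through to default_backend
    esac
}

pick_backend /index.php          # prints: lamp
pick_backend /index.html         # prints: webservers
pick_backend '/INFO.PHP?page=1'  # prints: lamp
```

Note that url_static is defined in the frontend but no rule refers to it, so static requests reach the webservers pool only because it is the default backend.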

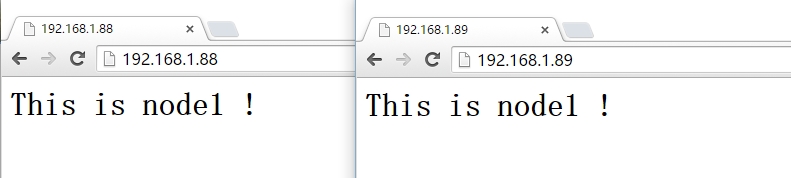

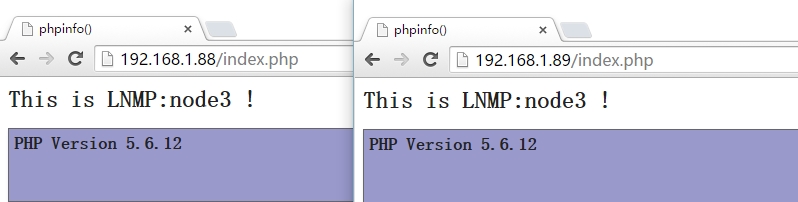

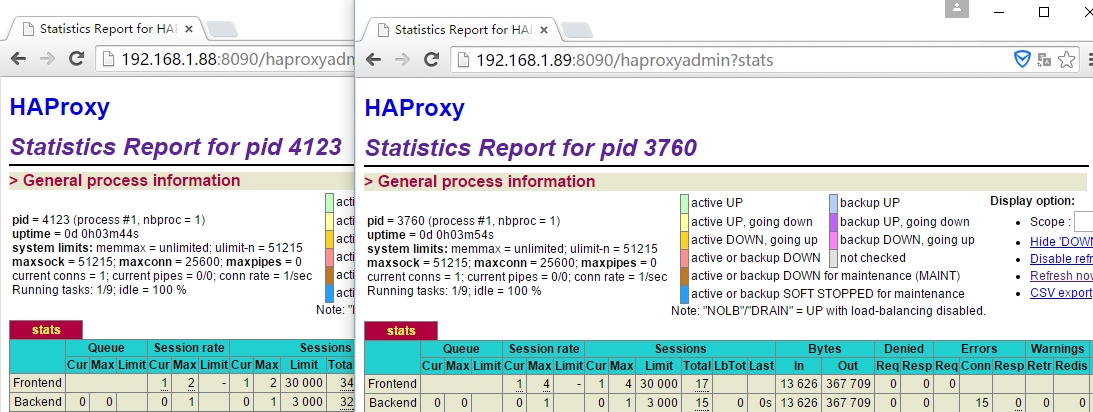

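Browser test:

2. Install HAProxy on the HA2 server. The installation and configuration are the same as on HA1, so they are not repeated here.

IV. Install and Configure Keepalived

1. Installation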

[root@proxy ~]# rpm -q keepalived

keepalived-1.2.13-5.el6_6.x86_64

[root@Slave ~]# rpm -q keepalived

keepalived-1.2.13-5.el6_6.i686

2. Edit the main configuration file on the HA1 server

[root@proxy ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

[email protected]

}

notification_email_from Master

smtp_connect_timeout 3

smtp_server 127.0.0.1

router_id LVS_DEVEL

}

vrrp_script chk_haproxy {

script "killall -0 haproxy"

interval 1

weight 2

}

vrrp_instance VI_1 {

interface eth0

state MASTER

priority 201

virtual_router_id 109

garp_master_delay 1

authentication {

auth_type PASS

auth_pass password

}

track_interface {

eth0

}

virtual_ipaddress {

192.168.1.88/16 dev eth0 label eth0:0

}

track_script {

chk_haproxy

}

notify_master "/etc/keepalived/notify.sh master"

notify_backup "/etc/keepalived/notify.sh backup"

notify_fault "/etc/keepalived/notify.sh fault"

}

vrrp_instance VI_2 {

interface eth0

state BACKUP

priority 99

virtual_router_id 52

garp_master_delay 1

authentication {

auth_type PASS

auth_pass password

}

track_interface {

eth0

}

virtual_ipaddress {

192.168.1.89/16 dev eth0 label eth0:1

}

track_script {

chk_haproxy

}

}

Configure the notify.sh script on the HA1 server:

[root@proxy ~]# cat /etc/keepalived/notify.sh

#!/bin/bash

# description: An example of notify script

#

vip=192.168.1.88

contact='[email protected]'

notify() {

mailsubject="`hostname` to be $1: $vip floating"

mailbody="`date '+%F %T'`: vrrp transition, `hostname` changed to be $1"

echo "$mailbody" | mail -s "$mailsubject" "$contact"

}

case "$1" in

master)

notify master

/etc/rc.d/init.d/haproxy start

exit 0

;;

backup)

notify backup

/etc/rc.d/init.d/haproxy stop

exit 0

;;

fault)

notify fault

/etc/rc.d/init.d/haproxy stop

exit 0

;;

*)

echo "Usage: `basename $0` {master|backup|fault}"

exit 1

;;

esac

3. Edit the main configuration file on the HA2 server

[root@Slave ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

[email protected]

}

notification_email_from Slave

smtp_connect_timeout 3

smtp_server 127.0.0.1

router_id LVS_DEVEL

}

vrrp_script chk_haproxy {

script "killall -0 haproxy"

interval 1

weight 2

}

vrrp_instance VI_1 {

interface eth0

state BACKUP

priority 200

virtual_router_id 109

garp_master_delay 1

authentication {

auth_type PASS

auth_pass password

}

track_interface {

eth0

}

virtual_ipaddress {

192.168.1.88/16 dev eth0 label eth0:0

}

track_script {

chk_haproxy

}

}

vrrp_instance VI_2 {

interface eth0

state MASTER

priority 100

virtual_router_id 52

garp_master_delay 1

authentication {

auth_type PASS

auth_pass password

}

track_interface {

eth0

}

virtual_ipaddress {

192.168.1.89 dev eth0 label eth0:1

}

track_script {

chk_haproxy

}

notify_master "/etc/keepalived/notify.sh master"

notify_backup "/etc/keepalived/notify.sh backup"

notify_fault "/etc/keepalived/notify.sh fault"

}

Configure the notify.sh script on the HA2 server:

[root@Slave ~]# cat /etc/keepalived/notify.sh

#!/bin/bash

# description: An example of notify script

#

vip=192.168.1.89

contact='[email protected]'

notify() {

mailsubject="`hostname` to be $1: $vip floating"

mailbody="`date '+%F %T'`: vrrp transition, `hostname` changed to be $1"

echo "$mailbody" | mail -s "$mailsubject" "$contact"

}

case "$1" in

master)

notify master

/etc/rc.d/init.d/haproxy start

exit 0

;;

backup)

notify backup

/etc/rc.d/init.d/haproxy stop

exit 0

;;

fault)

notify fault

/etc/rc.d/init.d/haproxy stop

exit 0

;;

*)

echo "Usage: `basename $0` {master|backup|fault}"

exit 1

;;

esac

Start keepalived and check the VIPs:

[root@proxy ~]# service keepalived start

[root@Slave ~]# service keepalived start

[root@proxy ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:b0:04:27 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.8/24 brd 192.168.1.255 scope global eth0

inet 192.168.1.88/16 scope global eth0:0

inet6 fe80::20c:29ff:feb0:427/64 scope link

valid_lft forever preferred_lft forever

[root@Slave ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UNKNOWN qlen 1000

link/ether 00:0c:29:df:1e:04 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.22/24 brd 192.168.1.255 scope global eth0

inet 192.168.1.89/32 scope global eth0:1

inet6 fe80::20c:29ff:fedf:1e04/64 scope link

valid_lft forever preferred_lft forever
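Instead of reading the full `ip addr show` output by eye, the VIP check can be scripted. This is a small sketch that assumes the output format shown above. has_vip is a hypothetical helper that reads that output on standard input.

```shell
# has_vip VIP
# Hypothetical helper: succeed if the `ip addr show` output read from
# standard input contains an IPv4 address line for VIP.
has_vip() {
    # -F: match a fixed string; the trailing "/" keeps 192.168.1.8
    # from matching the line for 192.168.1.88
    grep -qF "inet $1/"
}
```

On either node it could be used as `ip addr show dev eth0 | has_vip 192.168.1.88 && echo "this node holds 192.168.1.88"`.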

4. Test:

5. Simulate an HAProxy failure

[root@proxy ~]# service haproxy stop

Check the VIPs:

[root@proxy ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:b0:04:27 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.8/24 brd 192.168.1.255 scope global eth0

inet6 fe80::20c:29ff:feb0:427/64 scope link

valid_lft forever preferred_lft forever

[root@Slave ~]# ip addr show

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UNKNOWN qlen 1000

link/ether 00:0c:29:df:1e:04 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.22/24 brd 192.168.1.255 scope global eth0

inet 192.168.1.89/32 scope global eth0:1

inet 192.168.1.88/16 scope global eth0:0

inet6 fe80::20c:29ff:fedf:1e04/64 scope link

valid_lft forever preferred_lft forever
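The VIP moves because of how Keepalived combines an instance's priority with the weight of a tracked script: with a positive weight, the weight is added to the priority while the script succeeds. The sketch below redoes that arithmetic for VI_1 with the values from the two configuration files (priority 201 on HA1, 200 on HA2, weight 2). effective_priority is a hypothetical helper name, not a Keepalived command.

```shell
# effective_priority BASE WEIGHT CHECK_OK
# Hypothetical helper: priority of a VRRP instance whose tracked script
# has a positive weight. The weight is added only while the check succeeds.
effective_priority() {
    if [ "$3" = "yes" ]; then
        echo $(( $1 + $2 ))
    else
        echo "$1"
    fi
}

# VI_1 with haproxy running on both nodes: HA1 wins (203 > 202)
echo "HA1: $(effective_priority 201 2 yes)  HA2: $(effective_priority 200 2 yes)"

# VI_1 after "service haproxy stop" on HA1: HA2 wins (201 < 202)
echo "HA1: $(effective_priority 201 2 no)  HA2: $(effective_priority 200 2 yes)"
```

This also shows why the two base priorities must differ by less than the weight: with a gap of 2 or more, a failed check on HA1 would not push its priority below that of HA2, and the VIP would stay on the node without a running haproxy.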

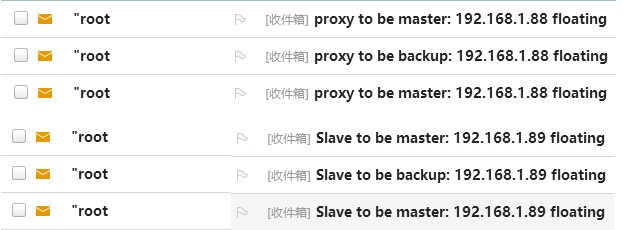

Check the mail: