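I. Basic Concepts of RAID

RAID (Redundant Array of Inexpensive Disks, today usually expanded as Redundant Array of Independent Disks) combines multiple independent physical disks in various ways to form a single virtual disk.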

II. RAID Levels

Commonly used levels: RAID 0, RAID 1, and RAID 5.

RAID 0: stripes data evenly across all disks in the array.

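RAID 0 characteristics: requires at least 2 disks; usable capacity is the sum of all members; reads and writes are spread across all disks, so throughput is high; there is no redundancy, so the failure of any one disk destroys the whole array.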
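To make striping concrete, here is a minimal sketch assuming equal-sized members and a simple round-robin layout (real md RAID 0 also handles unequal members and on-disk metadata, which are ignored here). The helper name raid0_locate is hypothetical; it maps a logical chunk number to the member disk and stripe row that hold it.

```sh
#!/bin/sh
# raid0_locate CHUNK NDISKS  (hypothetical helper)
# Maps logical chunk number CHUNK (counting from 0) to the member disk and
# stripe row that hold it in a simple round-robin RAID 0 layout.
raid0_locate() {
    chunk=$1
    ndisks=$2
    disk=$((chunk % ndisks))    # consecutive chunks rotate across the disks
    stripe=$((chunk / ndisks))  # one pass over all disks forms one stripe
    echo "disk=$disk stripe=$stripe"
}

# Usage: logical chunks 0..5 on a 3-disk RAID 0
for c in 0 1 2 3 4 5; do
    echo "chunk $c -> $(raid0_locate "$c" 3)"
done
```

Because consecutive chunks land on different disks, a large sequential read keeps every member busy at once; the same layout is why losing any one disk damages data across the whole array.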

RAID 1: uses mirroring for redundancy, keeping a full copy of the virtual disk's data on each member disk.

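RAID 1 characteristics: requires at least 2 disks; usable capacity equals that of one member (50% utilization with two disks); reads can be served by any copy, while every write must go to all members; the array keeps working as long as one member survives.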

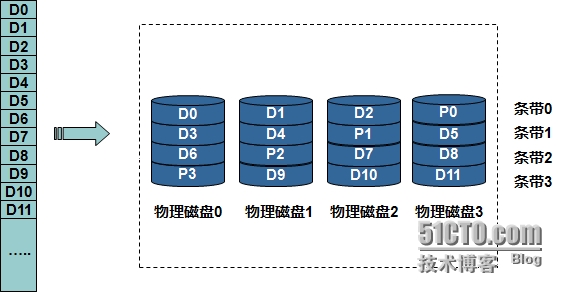

RAID 5: the member disks are accessed independently, and parity information is distributed evenly across all disks in the array.

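RAID 5 characteristics: requires at least 3 disks; utilization is (n-1)/n; the array survives the failure of any one disk; reads are fast, but every write must also update parity, which costs extra I/O.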
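The parity that RAID 5 stores is the bitwise XOR of the data blocks in each stripe, which is what lets it survive one failed disk. A toy sketch on single bytes (the values and the helper name xor_parity are made up for illustration; real arrays do this per chunk and rotate the parity block across the disks):

```sh
#!/bin/sh
# xor_parity VALUE...  (hypothetical helper)
# XORs all arguments together. Parity is the XOR of the data blocks in a
# stripe; any single missing block is the XOR of all surviving blocks.
xor_parity() {
    p=0
    for b in "$@"; do
        p=$((p ^ b))
    done
    echo "$p"
}

d0=165   # first data "block" (0xA5)
d1=60    # second data "block" (0x3C)
parity=$(xor_parity "$d0" "$d1")   # stored on the third disk of the stripe
echo "parity=$parity"              # prints parity=153

# Simulate losing d0 and rebuilding it from d1 and the parity
rebuilt=$(xor_parity "$d1" "$parity")
echo "rebuilt d0=$rebuilt (original $d0)"
```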

Nested RAID (combining different levels):

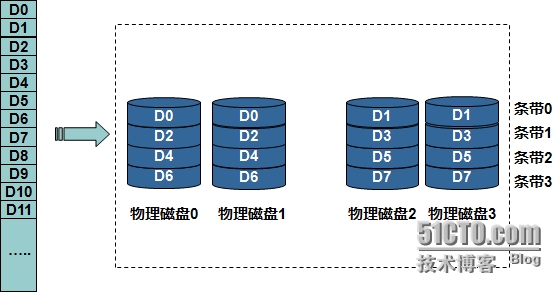

RAID 10: combines RAID 1 and RAID 0: disks are first mirrored, then data is striped across the mirrors.

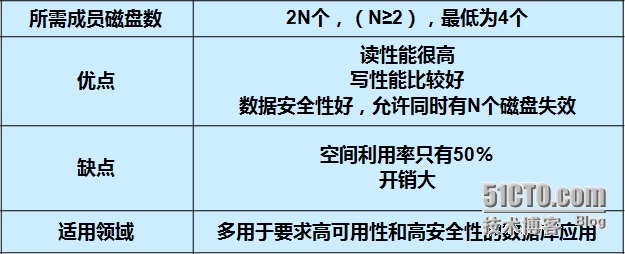

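RAID 10 characteristics: requires at least 4 disks (an even number); utilization is 50%; the array survives one disk failure in each mirror pair; read and write performance are both good.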

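RAID 50: combines RAID 5 and RAID 0: two or more RAID 5 groups are built first, then data is striped across them.

RAID 50 characteristics: requires at least 6 disks; each RAID 5 group gives up one disk's capacity to parity; the array survives one disk failure per RAID 5 group.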

Comparison of common RAID levels
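Comparing levels mostly comes down to usable capacity and how many failed disks each one tolerates. A small sketch, assuming n identical disks and, for RAID 10, classic nested RAID 1+0 with 2-way mirrors; raid_usable is a hypothetical helper name, and the fault tolerance of each level is noted in the comments.

```sh
#!/bin/sh
# raid_usable LEVEL NDISKS DISK_SIZE [GROUPS]  (hypothetical helper)
# Prints the usable capacity of an array built from NDISKS identical disks,
# in the same unit as DISK_SIZE. GROUPS is the number of RAID 5 sub-arrays
# and is only used for RAID 50 (default 2). Returns 1 if the disk count is
# invalid for the level or the level is unknown.
raid_usable() {
    level=$1; n=$2; size=$3; groups=${4:-2}
    case $level in
        0)  # striping only: no disk may fail
            [ "$n" -ge 2 ] || return 1
            echo $(( n * size )) ;;
        1)  # n-way mirror: survives n-1 failures
            [ "$n" -ge 2 ] || return 1
            echo "$size" ;;
        5)  # one disk's worth of parity: survives 1 failure
            [ "$n" -ge 3 ] || return 1
            echo $(( (n - 1) * size )) ;;
        10) # striped 2-way mirrors: survives 1 failure per mirror pair
            [ "$n" -ge 4 ] && [ $(( n % 2 )) -eq 0 ] || return 1
            echo $(( n / 2 * size )) ;;
        50) # striped RAID 5 groups: survives 1 failure per group
            [ $(( n % groups )) -eq 0 ] && [ $(( n / groups )) -ge 3 ] || return 1
            echo $(( (n - groups) * size )) ;;
        *)  return 1 ;;
    esac
}

# Usage: six 1000 GB disks at each level
for lvl in 0 1 5 10 50; do
    echo "RAID $lvl: $(raid_usable "$lvl" 6 1000) GB usable"
done
```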

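III. RAID Implementations and Operating States

Software RAID

→ All RAID functions are performed by the host CPU; there is no separate controller processor or I/O chip.

Hardware RAID

→ A dedicated RAID controller processor and I/O processing chip handle the RAID work, so no host CPU resources are consumed.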

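IV. Implementing Software RAID

On Linux, software RAID is built with the mdadm command.

mdadm: turns any block devices into a RAID array.

mdadm is a modal command; its modes are: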

1. Create mode: -C

2. Manage mode

3. Monitor mode: -F

4. Grow mode: -G

5. Assemble mode: -A

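-D|--detail: show detailed information about a RAID device.

Manage mode:

--add: add a block device

--remove: remove a block device

-f|--fail|--set-faulty: mark a block device as faulty (simulates a disk failure)

-S: mdadm -S /dev/md# stops the RAID array

mdadm -D --scan >> /etc/mdadm.conf: appends the definitions of the existing arrays to the configuration file so they can be assembled later.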
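The manage-mode options are typically combined to swap out a failed member. A hedged sketch that only prints the commands by default; the helper names run and replace_member, the DRY_RUN variable, and the replacement device /dev/sdc1 are all hypothetical, and running for real (DRY_RUN=0) needs root and real devices.

```sh
#!/bin/sh
# Hypothetical helpers for replacing a failed member of an md array.
# DRY_RUN=1 (the default) only prints each command; DRY_RUN=0 runs it.
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = 1 ]; then
        echo "$*"
    else
        "$@"
    fi
}

# replace_member MD OLD NEW
replace_member() {
    md=$1; old=$2; new=$3
    run mdadm "$md" --fail "$old" || return    # mark OLD faulty, if md has not already
    run mdadm "$md" --remove "$old" || return  # detach OLD from the array
    run mdadm "$md" --add "$new"               # NEW is rebuilt onto, or kept as a spare
}

# Usage: print the commands for swapping /dev/sdb1 out of /dev/md2
replace_member /dev/md2 /dev/sdb1 /dev/sdc1
```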

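When formatting, mke2fs -j -E stride=16 -b 4096 /dev/md# aligns the filesystem with the RAID chunks and can improve performance. stride = chunk size / filesystem block size; with the 64K chunk used below and 4K blocks, that is 64 / 4 = 16.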
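A minimal sketch of the stride arithmetic, assuming sizes in KiB (ext_raid_opts is a hypothetical helper; it only prints the mke2fs command). It also derives stripe_width, the stride times the number of data-bearing disks, which newer e2fsprogs releases accept as another -E option; older releases such as the mke2fs 1.39 in the walkthrough may not recognise it, in which case pass stride alone.

```sh
#!/bin/sh
# ext_raid_opts CHUNK_KIB BLOCK_KIB NDISKS PARITY_DISKS  (hypothetical helper)
# Prints the mke2fs extended options that align ext2/3/4 with an md array:
#   stride       = chunk size / filesystem block size
#   stripe_width = stride * data-bearing disks (NDISKS - PARITY_DISKS)
ext_raid_opts() {
    chunk=$1; block=$2; ndisks=$3; parity=$4
    stride=$(( chunk / block ))
    width=$(( stride * (ndisks - parity) ))
    echo "stride=$stride,stripe_width=$width"
}

# Usage: the 3-disk RAID 5 in the walkthrough (64 KiB chunk, 4 KiB blocks,
# one disk's worth of parity per stripe)
echo "mke2fs -j -b 4096 -E $(ext_raid_opts 64 4 3 1) /dev/md2"
```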

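Create mode:

mdadm -C /dev/md# -l LEVEL -n COUNT DEVICES... -a yes|no -c CHUNK -x COUNT SPARES...

-l: RAID level

-n: number of active devices, followed by the devices themselves

-a: automatically create the device file (yes|no)

-c: chunk size

-x: number of spare devices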
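Because -n and -x are counts rather than per-device flags, it is easy to list the wrong number of devices. A hedged sketch of a hypothetical helper, build_create_cmd, that checks the device count and prints (does not run) the create command; with the tutorial's values it reproduces the command used below, with the brace expansion written out.

```sh
#!/bin/sh
# build_create_cmd MD LEVEL NACTIVE NSPARE DEV...  (hypothetical helper)
# Prints an mdadm create command, refusing if the number of devices does
# not equal NACTIVE + NSPARE.
build_create_cmd() {
    md=$1; level=$2; nact=$3; nspare=$4
    shift 4
    if [ $# -ne $(( nact + nspare )) ]; then
        echo "expected $(( nact + nspare )) devices, got $#" >&2
        return 1
    fi
    cmd="mdadm -C $md -l $level -a yes -n $nact"
    i=0
    for dev in "$@"; do
        # this sketch lists the active members first, then the spares,
        # as the tutorial does
        if [ "$i" -eq "$nact" ] && [ "$nspare" -gt 0 ]; then
            cmd="$cmd -x $nspare"
        fi
        cmd="$cmd $dev"
        i=$(( i + 1 ))
    done
    echo "$cmd"
}

# Usage: the RAID 5 from the walkthrough, 3 active members plus 1 spare
build_create_cmd /dev/md2 5 3 1 /dev/sdb1 /dev/sdb2 /dev/sdb3 /dev/sdb4
```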

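Walkthrough (using RAID 5 as an example):

First prepare three or more block devices or partitions. In real use they must be partitions on different physical disks; otherwise the redundancy is meaningless.

Only the basic procedure is demonstrated here, so all partitions are on the single disk /dev/sdb.

Create a 2 GB RAID 5 array: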

```
[root@localhost ~]# fdisk /dev/sdb

The number of cylinders for this disk is set to 2610.
There is nothing wrong with that, but this is larger than 1024,
and could in certain setups cause problems with:
1) software that runs at boot time (e.g., old versions of LILO)
2) booting and partitioning software from other OSs
   (e.g., DOS FDISK, OS/2 FDISK)

Command (m for help): p

Disk /dev/sdb: 21.4 GB, 21474836480 bytes
255 heads, 63 sectors/track, 2610 cylinders
Units = cylinders of 16065 * 512 = 8225280 bytes

   Device Boot      Start         End      Blocks   Id  System
/dev/sdb1               1         123      987966   fd  Linux raid autodetect
/dev/sdb2             124         246      987997+  fd  Linux raid autodetect
/dev/sdb3             247         369      987997+  fd  Linux raid autodetect
/dev/sdb4             370         492      987997+  fd  Linux raid autodetect

Command (m for help): q
[root@localhost ~]# partprobe /dev/sdb
[root@localhost ~]# cat /proc/partitions
major minor  #blocks  name
   8     0   20971520 sda
   8     1     305203 sda1
   8     2   19615365 sda2
   8     3    1044225 sda3
   8    16   20971520 sdb
   8    17     987966 sdb1
   8    18     987997 sdb2
   8    19     987997 sdb3
   8    20     987997 sdb4
[root@localhost ~]#
```

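The four partitions needed are ready (one of them will be the spare), each about 1 GB, with partition type fd (Linux raid autodetect). RAID 5 utilization is (n-1)/n, so three active 1 GB members yield about 2 GB.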
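The (n-1)/n rule can be checked against the numbers mdadm reports later in the walkthrough: "Used Dev Size" is the space used on each member, and the spare adds no capacity. A minimal sketch (the variable names are illustrative):

```sh
#!/bin/sh
# RAID 5 usable size = (active members - 1) * per-member size.
# Values (KiB) come from the mdadm -D output in this walkthrough.
member_kib=987840   # "Used Dev Size"
active=3            # "Raid Devices"; the spare /dev/sdb4 adds no capacity
array_kib=$(( (active - 1) * member_kib ))
echo "expected Array Size: $array_kib KiB"   # prints 1975680, as mdadm reports
```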

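Steps. (The "mdadm: Unknown keyword ..." warnings in the output below come from unparseable lines in /etc/mdadm.conf, apparently left over from an earlier experiment with /dev/md3. They are harmless here, and the file is overwritten at the end of the walkthrough.)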

```
[root@localhost ~]# mdadm -C /dev/md2 -l 5 -a yes -n 3 /dev/sdb{1,2,3} -x 1 /dev/sdb4
mdadm: Unknown keyword /dev/md3:
mdadm: Unknown keyword Preferred
mdadm: Unknown keyword Working
mdadm: /dev/sdb1 appears to be part of a raid array:
    level=raid0 devices=2 ctime=Wed Feb 25 23:54:13 2015
mdadm: /dev/sdb2 appears to be part of a raid array:
    level=raid0 devices=2 ctime=Wed Feb 25 23:54:13 2015
Continue creating array? y
mdadm: array /dev/md2 started.
[root@localhost ~]# mdadm -D /dev/md2
mdadm: Unknown keyword /dev/md3:
mdadm: Unknown keyword Preferred
mdadm: Unknown keyword Working
/dev/md2:
        Version : 0.90
  Creation Time : Sun Mar 1 21:56:37 2015
     Raid Level : raid5
     Array Size : 1975680 (1929.70 MiB 2023.10 MB)
  Used Dev Size : 987840 (964.85 MiB 1011.55 MB)
   Raid Devices : 3
  Total Devices : 4
Preferred Minor : 2
    Persistence : Superblock is persistent
    Update Time : Sun Mar 1 21:56:50 2015
          State : clean
 Active Devices : 3
Working Devices : 4
 Failed Devices : 0
  Spare Devices : 1
         Layout : left-symmetric
     Chunk Size : 64K
           UUID : 97957132:c644253c:b9eb8d83:a8bae7d1
         Events : 0.4

    Number   Major   Minor   RaidDevice State
       0       8       17        0      active sync   /dev/sdb1
       1       8       18        1      active sync   /dev/sdb2
       2       8       19        2      active sync   /dev/sdb3
       3       8       20        -      spare   /dev/sdb4
```

```
[root@localhost ~]# mke2fs -j /dev/md2    # format /dev/md2 as ext3
mke2fs 1.39 (29-May-2006)
Filesystem label=
OS type: Linux
Block size=4096 (log=2)
Fragment size=4096 (log=2)
247296 inodes, 493920 blocks
24696 blocks (5.00%) reserved for the super user
First data block=0
Maximum filesystem blocks=507510784
16 block groups
32768 blocks per group, 32768 fragments per group
15456 inodes per group
Superblock backups stored on blocks:
        32768, 98304, 163840, 229376, 294912

Writing inode tables: done
Creating journal (8192 blocks): done
Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 37 mounts or
180 days, whichever comes first. Use tune2fs -c or -i to override.
[root@localhost ~]# mount /dev/md2 /mnt/    # mount it
[root@localhost ~]# ls -lh /mnt/    # the mount succeeded
total 16K
drwx------ 2 root root 16K Mar 1 21:59 lost+found
```
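The figures mke2fs printed follow directly from the array size. A small cross-check using only numbers from this walkthrough (sizes in KiB; the 5% reserve is mke2fs's default, and the variable names are illustrative):

```sh
#!/bin/sh
# Cross-check of the mke2fs output against the RAID 5 array size.
array_kib=1975680        # "Array Size" from mdadm -D
block_kib=4              # mke2fs chose Block size=4096
blocks_per_group=32768   # "32768 blocks per group"

blocks=$(( array_kib / block_kib ))
reserved=$(( blocks * 5 / 100 ))                                   # 5% root reserve
groups=$(( (blocks + blocks_per_group - 1) / blocks_per_group ))   # round up

echo "blocks=$blocks reserved=$reserved groups=$groups"
# prints blocks=493920 reserved=24696 groups=16, matching the output above
```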

```
[root@localhost mnt]# mdadm /dev/md2 -f /dev/sdb1    # simulate a failure of /dev/sdb1; the spare /dev/sdb4 takes over automatically
mdadm: Unknown keyword /dev/md3:
mdadm: Unknown keyword Preferred
mdadm: Unknown keyword Working
mdadm: set /dev/sdb1 faulty in /dev/md2
[root@localhost mnt]# mdadm -D /dev/md2
mdadm: Unknown keyword /dev/md3:
mdadm: Unknown keyword Preferred
mdadm: Unknown keyword Working
/dev/md2:
        Version : 0.90
  Creation Time : Sun Mar 1 21:56:37 2015
     Raid Level : raid5
     Array Size : 1975680 (1929.70 MiB 2023.10 MB)
  Used Dev Size : 987840 (964.85 MiB 1011.55 MB)
   Raid Devices : 3
  Total Devices : 4
Preferred Minor : 2
    Persistence : Superblock is persistent
    Update Time : Sun Mar 1 22:06:40 2015
          State : clean, degraded, recovering
 Active Devices : 2
Working Devices : 3
 Failed Devices : 1
  Spare Devices : 1
         Layout : left-symmetric
     Chunk Size : 64K
 Rebuild Status : 31% complete
           UUID : 97957132:c644253c:b9eb8d83:a8bae7d1
         Events : 0.6

    Number   Major   Minor   RaidDevice State
       3       8       20        0      spare rebuilding   /dev/sdb4
       1       8       18        1      active sync   /dev/sdb2
       2       8       19        2      active sync   /dev/sdb3
       4       8       17        -      faulty spare   /dev/sdb1
```
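The degraded state above can also be read by a script: Raid Devices minus Active Devices gives the number of missing members. A hedged sketch (md_health is a hypothetical helper; it assumes the "Name : value" layout of mdadm -D shown above) fed an excerpt of that output:

```sh
#!/bin/sh
# md_health  (hypothetical helper)
# Reads `mdadm -D` output on stdin and prints
# "missing=<Raid Devices - Active Devices> rebuild=<Rebuild Status or none>".
md_health() {
    awk -F' : ' '
        { gsub(/^[ \t]+|[ \t]+$/, "", $1) }   # trim the field name
        $1 == "Raid Devices"   { raid = $2 + 0 }
        $1 == "Active Devices" { active = $2 + 0 }
        $1 == "Rebuild Status" { rebuild = $2; sub(/^[ \t]+/, "", rebuild) }
        END {
            if (rebuild == "") rebuild = "none"
            printf "missing=%d rebuild=%s\n", raid - active, rebuild
        }
    '
}

# Usage on an excerpt of the degraded output above
# (on a live system: mdadm -D /dev/md2 | md_health)
md_health <<'EOF'
   Raid Devices : 3
 Active Devices : 2
 Rebuild Status : 31% complete
EOF
```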

```
[root@localhost mnt]# mdadm -D --scan >/etc/mdadm.conf    # write the array definitions to the config file
[root@localhost /]# umount /dev/md2
[root@localhost /]# mdadm -S /dev/md2
mdadm: stopped /dev/md2
[root@localhost /]# mdadm -A /dev/md2
mdadm: /dev/md2 has been started with 3 drives.
[root@localhost /]#
```
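Running mdadm -A /dev/md2 without naming the members relies on the ARRAY line for /dev/md2 that was just written to /etc/mdadm.conf. A hedged sketch of a check for that line (conf_has_array is a hypothetical name; the sample line is a minimal illustrative entry built from the UUID shown earlier, not the file actually written in this walkthrough):

```sh
#!/bin/sh
# conf_has_array CONF MD  (hypothetical helper)
# Succeeds if CONF contains an "ARRAY MD ..." line, which is what lets
# `mdadm -A MD` find the array without listing its member devices.
conf_has_array() {
    awk -v md="$2" '$1 == "ARRAY" && $2 == md { found = 1 } END { exit !found }' "$1"
}

# Usage on a throwaway file with a minimal illustrative ARRAY line
conf=$(mktemp)
echo 'ARRAY /dev/md2 UUID=97957132:c644253c:b9eb8d83:a8bae7d1' > "$conf"
if conf_has_array "$conf" /dev/md2; then
    echo "/dev/md2 is defined in $conf"
fi
rm -f "$conf"
```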

That completes the tutorial.