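I. Prerequisites for configuring a high-availability cluster (using a two-node heartbeat setup as the example)

⑴ The nodes' clocks must stay synchronized.

⑵ The nodes must communicate with each other by name.

Use /etc/hosts for name resolution rather than DNS.

The host name the cluster uses is the one reported by `uname -n`.

⑶ A ping node (only needed when the cluster has an even number of nodes).

⑷ SSH key-based authentication, so the nodes can reach each other without password prompts.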
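The name-resolution prerequisite can be checked before heartbeat is installed. The following is a minimal sketch, not part of heartbeat: `host_in_file` is a hypothetical helper that looks for a node name in a hosts-format file, and it assumes entries carry no trailing inline comments.

```shell
#!/bin/sh
# Sketch: check that a node name appears in a hosts-format file.
# host_in_file is a hypothetical helper, not a heartbeat tool.
host_in_file() {
    # $1 = node name, $2 = hosts file (defaults to /etc/hosts)
    awk -v name="$1" '
        /^[[:space:]]*#/ { next }     # skip comment lines
        { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
        END { exit found ? 0 : 1 }
    ' "${2:-/etc/hosts}"
}

# Usage: warn if this node's own cluster name is not in /etc/hosts.
host_in_file "$(uname -n)" || echo "warning: $(uname -n) missing from /etc/hosts" >&2
```

Running the same check for the peer's name on each node catches the most common cause of nodes failing to recognize each other.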

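II. Configuring heartbeat

Main configuration file: ha.cf

Authentication key file: authkeys; its permissions must deny all access to group and others (for example, mode 600).

Resource configuration file for heartbeat v1: haresources

A resource line looks like this:

node4 192.168.30.100/24/eth0/192.168.30.255 Filesystem::192.168.30.13:/mydata::/mydata::nfs mysqld

Note that resources must be listed in the order in which they should be started.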
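To make the line format easier to read, here is a small sketch that splits the two compound fields of the example above. `parse_ipspec` and `parse_resource` are hypothetical helper names used only for illustration; the field labels (prefix, dev, bcast) are my own naming for the slash-separated parts.

```shell
#!/bin/sh
# Sketch: split the address shorthand ip/prefix/device/broadcast.
parse_ipspec() {
    # $1 looks like 192.168.30.100/24/eth0/192.168.30.255
    old_ifs=$IFS
    IFS=/
    set -- $1
    IFS=$old_ifs
    echo "ip=$1 prefix=$2 dev=$3 bcast=$4"
}

# Sketch: split agent::arg1::arg2... into the agent name and its arguments.
parse_resource() {
    # $1 looks like Filesystem::192.168.30.13:/mydata::/mydata::nfs
    echo "$1" | awk -F'::' '{
        printf "agent=%s args=", $1
        for (i = 2; i <= NF; i++) printf "%s%s", $i, (i < NF ? " " : "")
        print ""
    }'
}

parse_ipspec 192.168.30.100/24/eth0/192.168.30.255
# prints: ip=192.168.30.100 prefix=24 dev=eth0 bcast=192.168.30.255
parse_resource Filesystem::192.168.30.13:/mydata::/mydata::nfs
# prints: agent=Filesystem args=192.168.30.13:/mydata /mydata nfs
```

Read this way, the example line says: node4 is the preferred owner, and the resources are the address, then the NFS mount, then mysqld, in that start order.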

Templates for all three files are in the /usr/share/doc/heartbeat-VERSION directory and can be copied to /etc/ha.d/.
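The permission requirement on authkeys is easy to get wrong when the file is created by hand. A minimal sketch, assuming a sha1 key and a 10-byte random string; `make_authkeys` is a hypothetical helper, and the `od` fallback is only there for systems without openssl.

```shell
#!/bin/sh
# Sketch: write an authkeys file with a random sha1 key, readable by the owner only.
make_authkeys() {
    # $1 = target path (on a real node this would be /etc/ha.d/authkeys)
    key=$(openssl rand -hex 10 2>/dev/null) ||
        key=$(od -An -N10 -tx1 /dev/urandom | tr -d ' \n')
    # umask 077 ensures the file is never group- or world-readable, even briefly
    ( umask 077; printf 'auth 1\n1 sha1 %s\n' "$key" > "$1" )
    chmod 600 "$1"
}

# Usage (as root): make_authkeys /etc/ha.d/authkeys
```

The same file must then be copied to every node with its permissions preserved, for example with `scp -p`.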

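Note: the haresources-based resource manager of heartbeat v1 (which v2 also uses when crm is not enabled) has a limitation: it does not monitor the running state of resources. For example, with two nodes providing a highly available httpd, if the httpd service stops while the heartbeat program itself keeps running (so the peer node still receives heartbeats normally), no resource failover takes place.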
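One common workaround for this gap is an external check that hands the resources over when the service has died. The sketch below is an assumption-laden illustration, not a heartbeat feature: `STATUS_CMD` and `STANDBY_CMD` are hypothetical variables, the hb_standby path assumes the /usr/lib64/heartbeat location used later in this article, and the check should only run on the node that currently holds the resources, since hb_standby gives up everything the node owns.

```shell
#!/bin/sh
# Sketch: give up resources when the managed service is no longer running.
# STATUS_CMD and STANDBY_CMD are overridable assumptions, not heartbeat settings.
STATUS_CMD=${STATUS_CMD:-"service httpd status"}
STANDBY_CMD=${STANDBY_CMD:-"/usr/lib64/heartbeat/hb_standby"}

check_once() {
    if $STATUS_CMD >/dev/null 2>&1; then
        echo "service ok"
    else
        echo "service down, requesting standby"
        $STANDBY_CMD
    fi
}

# Usage: call check_once from cron or a loop on the active node, for example
#   while true; do check_once; sleep 10; done
```

Heartbeat v2's crm can monitor resources itself, so this kind of script is only relevant to haresources-style configurations.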

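III. Selected ha.cf parameters explained

logfile /var/log/ha-log # where heartbeat writes its log

keepalive 2 # send a heartbeat every 2 seconds

deadtime 30 # if the backup node receives no heartbeat from the primary node for 30 seconds, it takes over the primary node's resources immediately

warntime 10 # heartbeat delay threshold of 10 seconds: if the backup node receives no heartbeat from the primary node for 10 seconds, a warning is written to the log, but no failover happens yet

initdead 120 # on some systems the network needs some time to come up after a boot or reboot; this option covers that interval. It should be at least twice deadtime.

udpport 694 # 694 is the default port

baud 19200 # baud rate for serial communication

#bcast eth0 # Linux # send heartbeats as broadcasts through eth0

#mcast eth0 225.0.0.1 694 1 0 # send heartbeats as multicast through eth0; generally used when there is more than one backup node. bcast, ucast, and mcast (broadcast, unicast, multicast) are the three ways to carry heartbeats; any one of them is sufficient.

#ucast eth0 192.168.1.2 # send heartbeats as unicast through eth0; the address that follows is the IP address of the peer node

auto_failback on # whether services automatically move back once the primary node recovers. The two heartbeat hosts act as primary and backup. Under normal conditions the primary node holds the resources and runs all services; on failure it hands the resources to the backup node, which then runs the services. With this option set to on, the primary node reclaims the resources from the backup node as soon as it is running again. With it set to off, the recovered primary node becomes the backup, and the former backup stays primary.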

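#stonith baytech /etc/ha.d/conf/stonith.baytech

#watchdog /dev/watchdog # optional; lets heartbeat monitor the health of the system itself. To use it, the "softdog" kernel module must be loaded so that the actual device file can be created. If the kernel does not provide this module, enable it and rebuild the kernel. Then load the module with "insmod softdog", run "grep misc /proc/devices" (should show 10) and "cat /proc/misc | grep watchdog" (should show 130), and finally create the device file with "mknod /dev/watchdog c 10 130". The feature is then available.

node node1.magedu.com node2.magedu.com # names of the cluster nodes, as shown by "uname -n"

ping 192.168.12.237 # address of the ping node. The better the choice of ping node, the more robust the HA cluster. A fixed router is a good ping node; it is best not to use a cluster member. The ping node is only used to test network connectivity.

ping_group group1 192.168.12.120 192.168.12.237 # ping group

apiauth pingd gid=haclient uid=hacluster

respawn hacluster /usr/local/ha/lib/heartbeat/pingd -m 100 -d 5s

# Optional: lists processes that are started and stopped together with heartbeat. These are usually plugins integrated with heartbeat, and they are restarted automatically if they fail. The most commonly used one is pingd, which checks and monitors the state of the network interface; it works together with the ping nodes named in the ping directive to test network connectivity. hacluster is the user that the pingd process runs as.

# The following setting is the key one: it activates crm management and switches to the v2-style configuration

# crm respawn # crm on/yes also works, but then a faulty cib.xml later on makes heartbeat reboot the server outright, so respawn is recommended while testing

# The following compresses the transmitted data; optional

compression bz2

compression_threshold 2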
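The timing directives above depend on one another, so a quick consistency check can be useful. This is a minimal sketch: `check_timing` is a hypothetical helper, it assumes plain integer seconds in the file, and the "warntime below deadtime" rule is my own reading of the descriptions above rather than something the article states.

```shell
#!/bin/sh
# Sketch: sanity-check heartbeat timing directives in an ha.cf-style file.
check_timing() {
    # $1 = path to ha.cf; commented-out directives ("#deadtime ...") are ignored
    awk '
        $1 == "deadtime" { dead = $2 }
        $1 == "warntime" { warn = $2 }
        $1 == "initdead" { init = $2 }
        END {
            ok = 1
            if (warn + 0 >= dead + 0) { print "warntime should be below deadtime"; ok = 0 }
            if (init + 0 < 2 * dead)  { print "initdead should be at least 2 x deadtime"; ok = 0 }
            exit ok ? 0 : 1
        }
    ' "$1"
}

# Usage: check_timing /etc/ha.d/ha.cf && echo "timing looks consistent"
```

With the values shown above (deadtime 30, warntime 10, initdead 120) the check passes.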

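Case 1: a dual-master high-availability setup for mysql and httpd with heartbeat v1, both sharing data over nfs

1. Lab environment:

node4: 192.168.30.14, primary node for mysql, backup node for httpd

node5: 192.168.30.15, backup node for mysql, primary node for httpd

node3: 192.168.30.13, nfs

node1: 192.168.30.10, client used for testing

Resources needed for the highly available mysql:

ip: 192.168.30.100

mysqld

nfs: /mydata

Resources needed for the highly available httpd:

ip: 192.168.30.101

httpd

nfs: /web

Note: to keep things simple, the iptables rules have been flushed on all hosts in this example.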

2. Preparation

⑴ Synchronize the clocks of the nodes and make sure they can reach each other by name without password prompts

ntpdate 0.centos.pool.ntp.org

vim /etc/hosts

ssh-keygen -t rsa

ssh-copy-id -i .ssh/id_rsa.pub root@node5

[root@node4 ~]# ntpdate 0.centos.pool.ntp.org    # synchronize the clock
13 Apr 23:08:47 ntpdate[2613]: the NTP socket is in use, exiting

[root@node4 ~]# date
Wed Apr 13 23:09:25 CST 2016

[root@node4 ~]# crontab -e
*/10 * * * * /usr/sbin/ntpdate 0.centos.pool.ntp.org &> /dev/null

[root@node4 ~]# vim /etc/hosts    # edit the local hosts file
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.30.10 node1
192.168.30.20 node2
192.168.30.13 node3
192.168.30.14 node4
192.168.30.15 node5

[root@node4 ~]# scp /etc/hosts root@node5:/etc/
The authenticity of host 'node5 (192.168.30.15)' can't be established.
RSA key fingerprint is a3:d3:a0:9d:f0:3b:3e:53:4e:ee:61:87:b9:3a:1c:8c.
Are you sure you want to continue connecting (yes/no)? yes
Warning: Permanently added 'node5,192.168.30.15' (RSA) to the list of known hosts.
root@node5's password:
hosts 100% 262 0.3KB/s 00:00

[root@node4 ~]# ping node5
PING node5 (192.168.30.15) 56(84) bytes of data.
64 bytes from node5 (192.168.30.15): icmp_seq=1 ttl=64 time=0.419 ms
64 bytes from node5 (192.168.30.15): icmp_seq=2 ttl=64 time=0.706 ms
^C
--- node5 ping statistics ---
2 packets transmitted, 2 received, 0% packet loss, time 1888ms
rtt min/avg/max/mdev = 0.419/0.562/0.706/0.145 ms

[root@node4 ~]# ssh-keygen -t rsa    # generate a key pair
Generating public/private rsa key pair.
Enter file in which to save the key (/root/.ssh/id_rsa):
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /root/.ssh/id_rsa.
Your public key has been saved in /root/.ssh/id_rsa.pub.
The key fingerprint is:
bc:b8:e6:78:6d:51:91:30:4d:d4:dd:50:c0:18:f1:28 root@node4
The key's randomart image is:
+--[ RSA 2048]----+
| o=ooo*o=.|
| .+ ooo .|
| E.. . |
| . .. |
| S. |
| ... |
| .... |
| .o.o |
| .+o. |
+-----------------+

[root@node4 ~]# ssh-copy-id -i .ssh/id_rsa.pub root@node5    # add the public key to the peer node's authorized_keys file
The authenticity of host 'node5 (192.168.30.15)' can't be established.
RSA key fingerprint is a3:d3:a0:9d:f0:3b:3e:53:4e:ee:61:87:b9:3a:1c:8c.
Are you sure you want to continue connecting (yes/no)? yes
Warning: Permanently added 'node5,192.168.30.15' (RSA) to the list of known hosts.
root@node5's password:
Now try logging into the machine, with "ssh 'root@node5'", and check in:
.ssh/authorized_keys
to make sure we haven't added extra keys that you weren't expecting.

[root@node4 ~]# ssh root@node5 hostname    # connecting to the peer no longer asks for a password
node5

# Perform the same steps on the other node

[root@node5 ~]# ntpdate 0.centos.pool.ntp.org
...

[root@node5 ~]# crontab -e
*/10 * * * * /usr/sbin/ntpdate 202.120.2.101

[root@node5 ~]# cat /etc/hosts
127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
192.168.30.10 node1
192.168.30.20 node2
192.168.30.13 node3
192.168.30.14 node4
192.168.30.15 node5

[root@node5 ~]# ssh-keygen -t rsa
...

[root@node5 ~]# ssh-copy-id -i .ssh/id_rsa.pub root@node4
...

[root@node5 ~]# ssh root@node4 hostname
node4

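⑵ Set up an nfs server that exports two directories, one for the mysql service and one for the httpd service

vim /etc/exports

/mydata 192.168.30.0/24(rw,no_root_squash) # mysql has to run its initialization as root, so no_root_squash is needed here; remove it once initialization is done

/web 192.168.30.0/24(rw)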

[root@node3 ~]# mkdir -p /mydata/{data,binlogs} /web

[root@node3 ~]# vim /web/index.html

hello

[root@node3 ~]# ls /mydata

binlogs data

[root@node3 ~]# useradd -r mysql

[root@node3 ~]# id mysql

uid=27(mysql) gid=27(mysql) groups=27(mysql)

[root@node3 ~]# useradd -r apache

[root@node3 ~]# id apache

uid=48(apache) gid=48(apache) groups=48(apache)

[root@node3 ~]# chown -R mysql.mysql /mydata

[root@node3 ~]# setfacl -R -m u:apache:rwx /web # remember to grant the apache user access

[root@node3 ~]# vim /etc/exports

/mydata 192.168.30.0/24(rw,no_root_squash)

/web 192.168.30.0/24(rw)

[root@node3 ~]# service rpcbind status

rpcbind (pid 1337) is running...

[root@node3 ~]# service nfs start # start the nfs service

Starting NFS services: [ OK ]

Starting NFS quotas: [ OK ]

Starting NFS mountd: [ OK ]

Starting NFS daemon: [ OK ]

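Starting RPC idmapd: [ OK ]

⑶ Install the services to be made highly available on both nodes

chkconfig mysqld off # note: a service that is to be made highly available must not start automatically at boot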

[root@node4 ~]# useradd -u 27 -r mysql

[root@node4 ~]# useradd -u 48 -r apache

[root@node4 ~]# yum -y install mysql-server httpd
...

[root@node4 ~]# chkconfig mysqld off    # note: a service that is to be made highly available must not start automatically at boot

[root@node4 ~]# chkconfig httpd off

[root@node4 ~]# vim /etc/my.cnf
[mysqld]
datadir=/mydata/data
socket=/var/lib/mysql/mysql.sock
user=mysql
log-bin=/mydata/binlogs/mysql-bin
innodb_file_per_table=ON
# Disabling symbolic-links is recommended to prevent assorted security risks
symbolic-links=0
skip-name-resolve    # stop mysql from doing reverse name lookups
[mysqld_safe]
log-error=/var/log/mysqld.log
pid-file=/var/run/mysqld/mysqld.pid

[root@node4 ~]# scp /etc/my.cnf root@node5:/etc/    # the service configuration must be identical on both nodes
my.cnf 100% 308 0.3KB/s

[root@node4 ~]# mkdir /mydata

[root@node4 ~]# showmount -e 192.168.30.13
Export list for 192.168.30.13:
/web 192.168.30.0/24
/mydata 192.168.30.0/24

[root@node4 ~]# mount -t nfs 192.168.30.13:/mydata /mydata

[root@node4 ~]# service mysqld start    # first start, which runs the initialization
Initializing MySQL database: Installing MySQL system tables...
OK
Filling help tables...
OK

[root@node4 ha.d]# mysql
...
mysql> grant all on *.* to root@'192.168.30.%' identified by 'magedu';
Query OK, 0 rows affected (0.04 sec)
mysql> flush privileges;
Query OK, 0 rows affected (0.01 sec)
mysql> \q
Bye

[root@node4 ~]# cd /mydata

[root@node4 mydata]# ls
binlogs data

[root@node4 mydata]# ls data
ibdata1 ib_logfile0 ib_logfile1 mysql test

[root@node4 mydata]# ls binlogs
mysql-bin.000001 mysql-bin.000002 mysql-bin.000003 mysql-bin.index

[root@node4 mydata]# cd

[root@node4 ~]# service mysqld stop
Stopping mysqld: [ OK ]

[root@node4 ~]# umount /mydata

# Perform similar steps on the other node, except that the mysql initialization does not need to be run again

Once mysql has been initialized, the no_root_squash option can be removed on the nfs server

[root@node3 ~]# vim /etc/exports

/mydata 192.168.30.0/24(rw)

/web 192.168.30.0/24(rw)
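The same edit can be scripted instead of done in vim. A minimal sketch, assuming the option appears as `,no_root_squash` after another option, as in the export line above; `drop_no_root_squash` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: remove ",no_root_squash" from the export line of one path.
drop_no_root_squash() {
    # $1 = exported path, $2 = exports file
    # \|...| is a sed address with | as the delimiter, so the path's slashes need no escaping
    sed "\\|^$1[[:space:]]|s/,no_root_squash//" "$2" > "$2.new" && mv "$2.new" "$2"
}

# Usage on the NFS server, as root:
#   drop_no_root_squash /mydata /etc/exports && exportfs -ra
```

`exportfs -ra` re-reads /etc/exports so the change takes effect without restarting the nfs service.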

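⑷ Install heartbeat on every node and configure the resources

This example installs heartbeat v2. v2 is compatible with v1 and can use haresources as its configuration interface.

Notes:

① Do not install heartbeat-pils with yum; otherwise it is automatically replaced by cluster-glue, which is not compatible with heartbeat v2

② The /usr/share/doc/heartbeat-VERSION directory contains templates for ha.cf, haresources, and authkeys, which can be copied to /etc/ha.d/:

cp /usr/share/doc/heartbeat-2.1.4/{authkeys,ha.cf,haresources} /etc/ha.d/

...

scp -p authkeys ha.cf haresources root@node5:/etc/ha.d/ # the main configuration file, the message authentication file, and the resource configuration file must be identical on all nodes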

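③ The /etc/ha.d/resource.d directory contains resource agents

IPaddr: configures the address with the ifconfig command

IPaddr2: configures the address with the ip addr command (use ip addr show to see it)

④ The /usr/lib64/heartbeat directory contains utility scripts

hb_standby: turns the current node into the backup node

hb_takeover: takes over the resources

ha_propagate: copies ha.cf and authkeys to the other nodes, preserving permissions

send_arp: whenever a node takes over an address, the upstream router must be told to refresh its ARP cache

haresources2cib.py: converts haresources to the cib format and writes the result to /var/lib/heartbeat/crm/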

[root@node4 ~]# rpm -ivh heartbeat-pils-2.1.4-12.el6.x86_64.rpm

...

[root@node4 ~]# yum -y install PyXML libnet perl-TimeDate

...

[root@node4 ~]# rpm -ivh heartbeat-stonith-2.1.4-12.el6.x86_64.rpm heartbeat-2.1.4-12.el6.x86_64.rpm

Preparing... ########################################### [100%]

1:heartbeat-stonith ########################################### [ 50%]

2:heartbeat ########################################### [100%]

[root@node4 ~]# ls /usr/share/doc/heartbeat-2.1.4/

apphbd.cf ChangeLog DirectoryMap.txt GettingStarted.html HardwareGuide.html hb_report.html heartbeat_api.txt Requirements.html rsync.txt

authkeys COPYING faqntips.html GettingStarted.txt HardwareGuide.txt hb_report.txt logd.cf Requirements.txt startstop

AUTHORS COPYING.LGPL faqntips.txt ha.cf haresources heartbeat_api.html README rsync.html

[root@node4 ~]# cp /usr/share/doc/heartbeat-2.1.4/{authkeys,ha.cf,haresources} /etc/ha.d/

[root@node4 ~]# cd /etc/ha.d

[root@node4 ha.d]# ls

authkeys ha.cf harc haresources rc.d README.config resource.d shellfuncs

[root@node4 ha.d]# ls resource.d/

apache db2 Filesystem ICP IPaddr IPsrcaddr LinuxSCSI LVSSyncDaemonSwap OCF Raid1 ServeRAID WinPopup

AudibleAlarm Delay hto-mapfuncs ids IPaddr2 IPv6addr LVM MailTo portblock SendArp WAS Xinetd

[root@node4 ha.d]# vim ha.cf # edit the configuration file and set the following items; the defaults are fine for everything else

...

logfile /var/log/ha-log # also comment out logfacility local0

...

auto_failback on

mcast eth0 225.1.1.1 694 1 0

node node4 node5

ping 192.168.30.2

...

[root@node4 ha.d]# openssl rand -hex 10

392fa6f47a05ed67a0f7

[root@node4 ha.d]# vim authkeys

...

auth 1

1 sha1 392fa6f47a05ed67a0f7 # choose the hash algorithm and append the generated random string after it

[root@node4 ha.d]# chmod 600 authkeys

[root@node4 ha.d]# vim haresources # configure the resources

...

node4 192.168.30.100/24/eth0/192.168.30.255 Filesystem::192.168.30.13:/mydata::/mydata::nfs mysqld

node5 192.168.30.101/24/eth0/192.168.30.255 Filesystem::192.168.30.13:/web::/var/www/html::nfs httpd

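[root@node4 ha.d]# scp -p authkeys ha.cf haresources root@node5:/etc/ha.d/ # the main configuration file, the message authentication file, and the resource configuration file must be identical on all nodes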

authkeys 100% 680 0.7KB/s 00:00

ha.cf 100% 10KB 10.3KB/s 00:00

haresources 100% 6105 6.0KB/s 00:00

[root@node4 ha.d]# service heartbeat start;ssh root@node5 'service heartbeat start' # start heartbeat on both nodes

Starting High-Availability services:

2016/04/14_02:38:06 INFO: Resource is stopped

2016/04/14_02:38:06 INFO: Resource is stopped

Done.

Starting High-Availability services:

2016/04/14_02:38:05 INFO: Resource is stopped

2016/04/14_02:38:06 INFO: Resource is stopped

Done.

[root@node4 ha.d]# ifconfig

...

eth0:0 Link encap:Ethernet HWaddr 00:0C:29:32:52:1C

inet addr:192.168.30.100 Bcast:192.168.30.255 Mask:255.255.255.0

UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

...

[root@node4 ha.d]# service mysqld status # mysqld has started normally on node4

mysqld (pid 4094) is running...

[root@node4 ha.d]# service httpd status

httpd is stopped

[root@node5 ~]# ifconfig
...
eth0:0 Link encap:Ethernet HWaddr 00:0C:29:96:45:92
inet addr:192.168.30.101 Bcast:192.168.30.255 Mask:255.255.255.0
UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
...

[root@node5 ~]# service httpd status    # httpd has started normally on node5
httpd (pid 3820) is running...

[root@node5 ~]# service mysqld status
mysqld is stopped
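Checking by eye which node holds which address gets tedious during repeated failover tests. A minimal sketch that scans ifconfig output for an address; `has_addr` is a hypothetical helper, and it assumes the older net-tools output format shown above ("inet addr:...").

```shell
#!/bin/sh
# Sketch: succeed if the given address appears in ifconfig-style output on stdin.
has_addr() {
    # $1 = address to look for; -F makes the dots literal, the trailing space
    # prevents 192.168.30.10 from matching 192.168.30.100
    grep -qF "inet addr:$1 "
}

# Usage on a node:
#   ifconfig | has_addr 192.168.30.100 && echo "this node holds the mysql address"
# Or from one node for its peer:
#   ssh root@node5 ifconfig | has_addr 192.168.30.101 && echo "node5 holds the httpd address"
```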

⑸ Testing from the client:

[root@node1 ~]# curl 192.168.30.101
hello

[root@node1 ~]# mysql -u root -h 192.168.30.100 -p
...
mysql> create database hellodb;
Query OK, 1 row affected (0.03 sec)
mysql> show databases;
+--------------------+
| Database           |
+--------------------+
| information_schema |
| hellodb            |
| mysql              |
| test               |
+--------------------+
4 rows in set (0.00 sec)

⑹ Simulating a resource failover

[root@node4 ~]# /usr/lib64/heartbeat/hb_standby    # force node4 to become the backup node
2016/04/14_07:07:52 Going standby [all].

[root@node4 ~]# ifconfig
eth0 Link encap:Ethernet HWaddr 00:0C:29:32:52:1C
inet addr:192.168.30.14 Bcast:192.168.30.255 Mask:255.255.255.0
inet6 addr: fe80::20c:29ff:fe32:521c/64 Scope:Link
UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
RX packets:91672 errors:0 dropped:0 overruns:0 frame:0
TX packets:87427 errors:0 dropped:0 overruns:0 carrier:0
collisions:0 txqueuelen:1000
RX bytes:56564639 (53.9 MiB) TX bytes:38563809 (36.7 MiB)

lo Link encap:Local Loopback
inet addr:127.0.0.1 Mask:255.0.0.0
inet6 addr: ::1/128 Scope:Host
UP LOOPBACK RUNNING MTU:16436 Metric:1
RX packets:48 errors:0 dropped:0 overruns:0 frame:0
TX packets:48 errors:0 dropped:0 overruns:0 carrier:0
collisions:0 txqueuelen:0
RX bytes:3120 (3.0 KiB) TX bytes:3120 (3.0 KiB)

[root@node5 ~]# ifconfig    # the mysql resources have moved to node5
...
eth0:0 Link encap:Ethernet HWaddr 00:0C:29:96:45:92
inet addr:192.168.30.101 Bcast:192.168.30.255 Mask:255.255.255.0
UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

eth0:1 Link encap:Ethernet HWaddr 00:0C:29:96:45:92
inet addr:192.168.30.100 Bcast:192.168.30.255 Mask:255.255.255.0
UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
...

mysql> select version();
ERROR 2006 (HY000): MySQL server has gone away
No connection. Trying to reconnect...
Connection id: 2
Current database: *** NONE ***
+------------+
| version()  |
+------------+
| 5.1.73-log |
+------------+
1 row in set (0.13 sec)
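The interactive mysql client reconnects on its own, as the output above shows, but scripts that talk to the floating address need to tolerate the short outage themselves. A generic sketch of a retry wrapper; `retry` is a hypothetical helper, and the attempt count and delay in the usage line are arbitrary.

```shell
#!/bin/sh
# Sketch: run a command until it succeeds or the attempts are used up.
retry() {
    # $1 = maximum attempts, $2 = delay between attempts in seconds, rest = command
    max=$1
    delay=$2
    shift 2
    n=1
    while [ "$n" -le "$max" ]; do
        if "$@"; then
            return 0
        fi
        n=$((n + 1))
        if [ "$n" -le "$max" ]; then
            sleep "$delay"
        fi
    done
    return 1
}

# Usage from the test client, with the account created earlier:
#   retry 10 2 mysql -u root -h 192.168.30.100 -pmagedu -e 'select version()'
```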

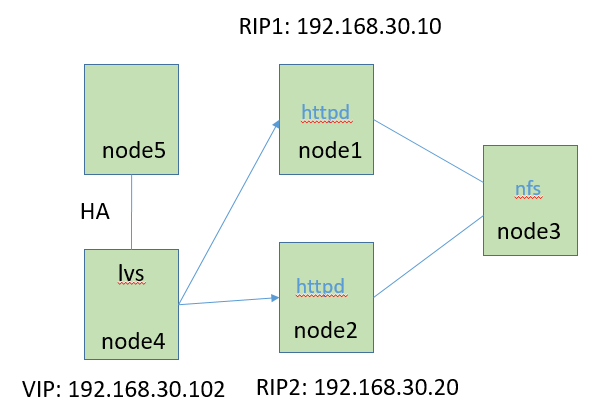

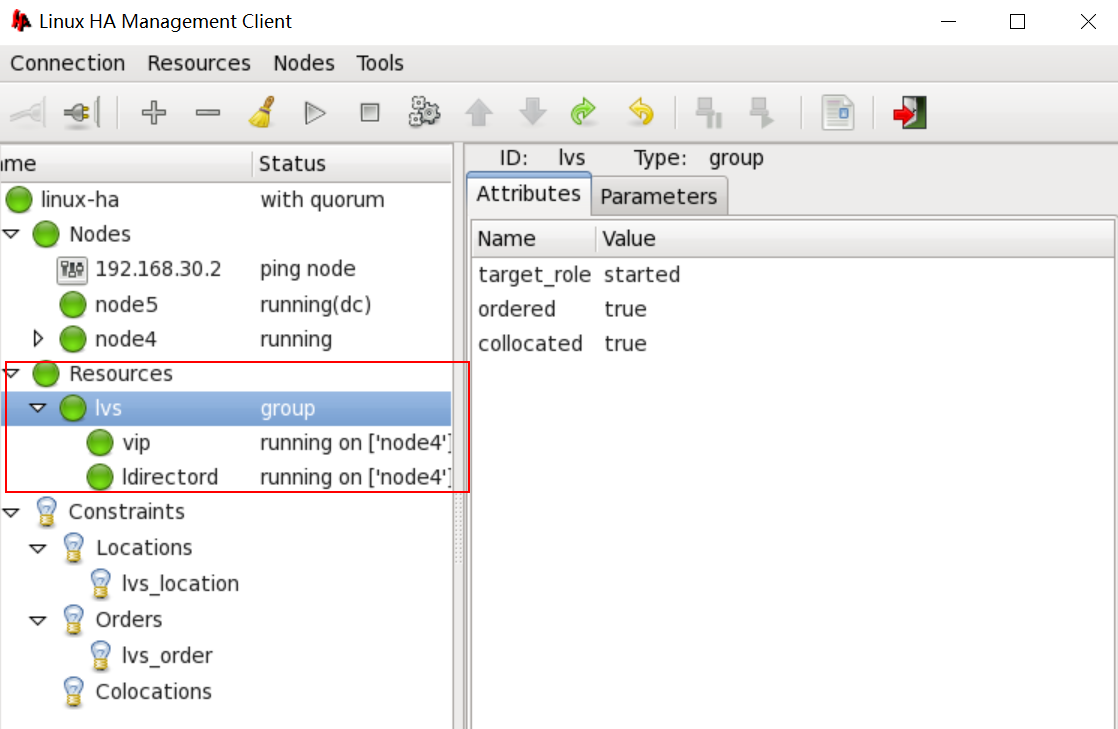

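Case 2: a highly available lvs director with heartbeat v2

Compared with the haresources mechanism of heartbeat v1, the crm resource manager of heartbeat v2 can express where resources prefer to run much more flexibly, and it is more powerful overall.

This example uses hb_gui, the GUI configuration interface for crm, to configure the resources.

We continue with the lab environment from case 1: node4 and node5 become the highly available lvs director nodes, node1 and node2 are the backend RSes (real servers), and node3 provides shared storage for RS1 and RS2. Installing and configuring lvs is not covered here (see the blog post http://9124573.blog.51cto.com/9114573/1759997).

1. Lab environment:

node4: 192.168.30.14, lvs director HA node

node5: 192.168.30.15, lvs director HA node

node1: 192.168.30.10, RS1

node2: 192.168.30.20, RS2

node3: 192.168.30.13, nfs, shared storage for RS1 and RS2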

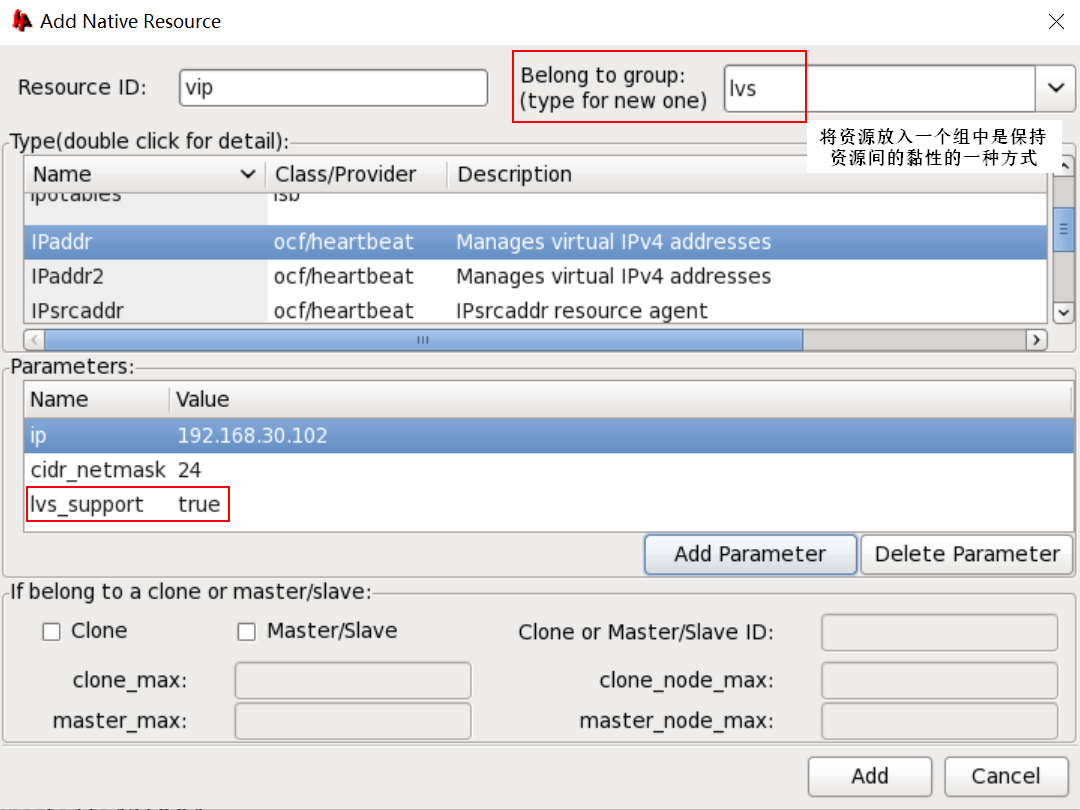

Resources needed for the highly available lvs director:

vip: 192.168.30.102

ipvsadm

2. Prepare the backend RSes

[root@node1 ~]# mount -t nfs 192.168.30.13:/web /var/www/html

[root@node1 ~]# ls /var/www/html
index.html hello

[root@node1 ~]# service httpd start
Starting httpd: [ OK ]

# Perform the same steps on the other RS

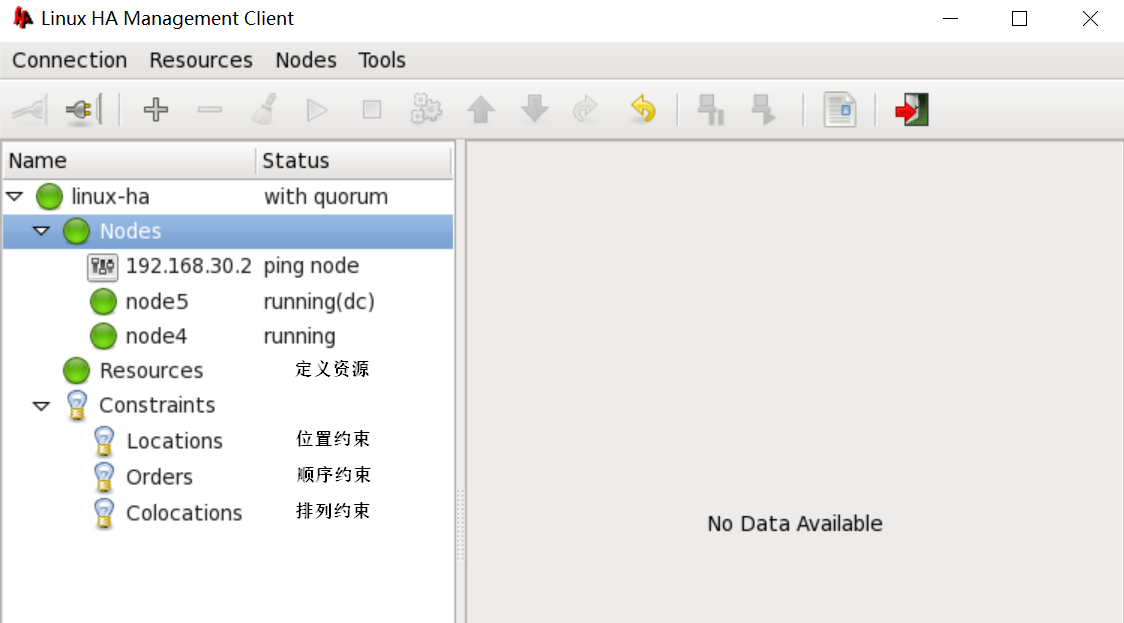

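3. Enable the crm resource manager and configure the resources with the GUI interface hb_gui

service ipvsadm save # the lvs rules must be saved this way so that the resource agent can load and clear them with service ipvsadm start/stop

vim /etc/ha.d/ha.cf

crm on # enable the crm resource manager; once crm is enabled, haresources no longer has any effect

rpm -ivh heartbeat-gui-2.1.4-12.el6.x86_64.rpm

passwd hacluster # installing heartbeat-gui creates a new user, hacluster, which the GUI client uses to connect to crm. Set a password for this user on whichever node you intend to connect to for resource configuration.

service heartbeat start # the crm management interface runs as the mgmtd daemon, listening on 5560/tcp

hb_gui & # start the GUI client and connect to the crm daemon to configure resources

The resulting resource configuration file is cib.xml, located in /var/lib/heartbeat/crm by default; it is synchronized between the nodes automatically.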

[root@node4 ~]# service heartbeat stop;ssh root@node5 'service heartbeat stop'

[root@node4 ~]# ipvsadm -L -n
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 192.168.30.102:80 rr
  -> 192.168.30.10:80 Route 1 0 0
  -> 192.168.30.20:80 Route 1 0 0

[root@node4 ~]# service ipvsadm save
ipvsadm: Saving IPVS table to /etc/sysconfig/ipvsadm: [ OK ]

[root@node4 ~]# scp /etc/sysconfig/ipvsadm root@node5:/etc/sysconfig/    # synchronize the lvs rules file between the nodes
ipvsadm

[root@node4 ~]# rpm -ivh heartbeat-gui-2.1.4-12.el6.x86_64.rpm
Preparing... ########################################### [100%]
1:heartbeat-gui ########################################### [100%]

[root@node4 ~]# tail -1 /etc/passwd    # installing the heartbeat-gui package has created a new user, hacluster
hacluster:x:496:493:heartbeat user:/var/lib/heartbeat/cores/hacluster:/sbin/nologin

[root@node4 ~]# passwd hacluster    # set a password for this user
Changing password for user hacluster.
New password:
BAD PASSWORD: it is based on a dictionary word
BAD PASSWORD: is too simple
Retype new password:
passwd: all authentication tokens updated successfully.

[root@node5 ~]# rpm -ivh heartbeat-gui-2.1.4-12.el6.x86_64.rpm    # heartbeat-gui must be installed on the other node as well
Preparing... ########################################### [100%]
1:heartbeat-gui ########################################### [100%]

[root@node4 ~]# vim /etc/ha.d/ha.cf
crm on    # enable the crm resource manager

[root@node4 ~]# scp /etc/ha.d/ha.cf root@node5:/etc/ha.d/    # synchronize the main configuration file
ha.cf 100% 10KB 10.3KB/s 00:00

[root@node4 ~]# service heartbeat start;ssh root@node5 'service heartbeat start'    # start heartbeat
Starting High-Availability services:
Done.
Starting High-Availability services:
Done.

[root@node4 ~]# netstat -tuanp    # a new listening port has appeared: the mgmtd daemon listens on 5560/tcp
...
tcp 0 0 0.0.0.0:5560 0.0.0.0:* LISTEN 23134/mgmtd
udp 0 0 225.1.1.1:694 0.0.0.0:* 23122/heartbeat: wr
...

[root@node4 ~]# hb_gui &    # start the GUI client and begin configuring resources

[root@node4 ~]# ls /var/lib/heartbeat/crm
cib.xml cib.xml.last cib.xml.sig cib.xml.sig.last

[root@node4 ~]# ifconfig    # the address has been configured
...
eth0:0 Link encap:Ethernet HWaddr 00:0C:29:32:52:1C
inet addr:192.168.30.102 Bcast:192.168.30.255 Mask:255.255.255.0
UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
...

[root@node4 ~]# ipvsadm    # the lvs rules have been loaded as well
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 192.168.30.102:http rr
  -> node1:http Route 1 0 0
  -> node2:http Route 1 0 0

[root@node4 ~]# crm_mon    # this command monitors the state of the HA nodes
Last updated: Mon Apr 18 16:17:55 2016
Current DC: node5 (71a77c25-9cc0-4169-9d88-cb224979fa27)
2 Nodes configured.
1 Resources configured.
============
Node: node5 (71a77c25-9cc0-4169-9d88-cb224979fa27): online
Node: node4 (5eb11525-5dcd-4546-8ed1-ad0bc562a8be): online
Resource Group: lvs
    vip (ocf::heartbeat:IPaddr): Started node4
    ipvsadm (lsb:ipvsadm): Started node4

[root@node3 ~]# curl 192.168.30.102    # the test succeeds
hello

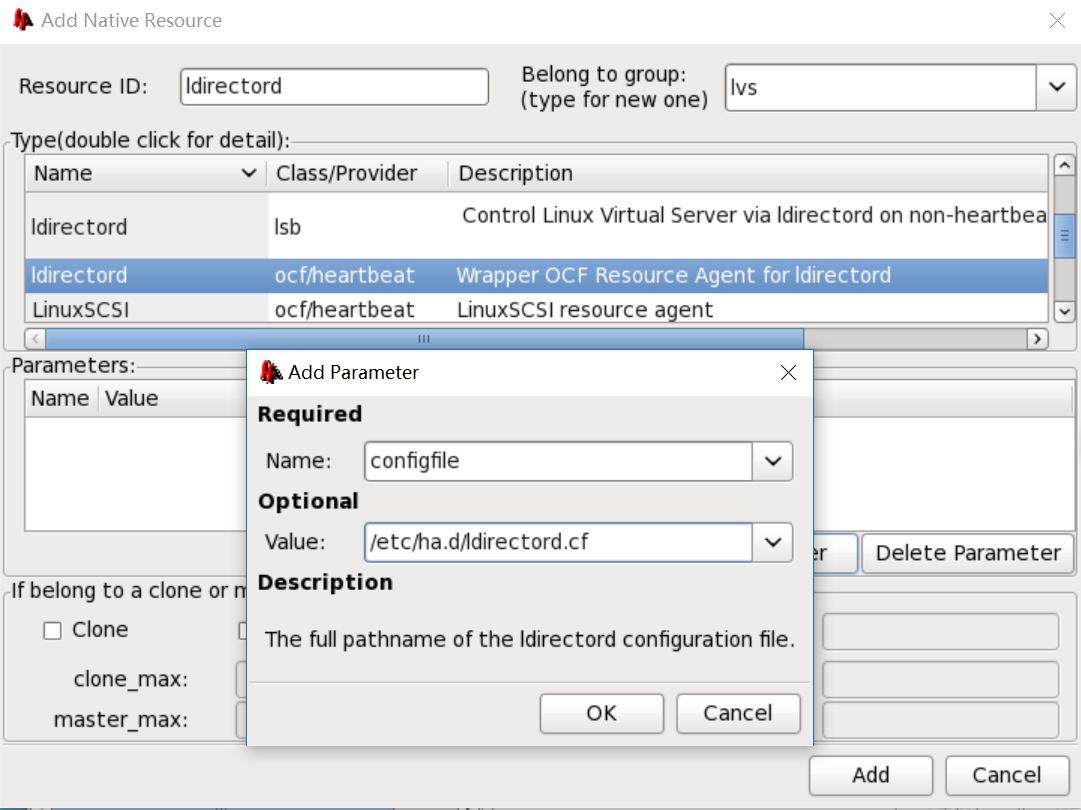

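4. With ipvsadm as the resource agent, as above, the director has no way of knowing the health of the backend RSes. For lvs, heartbeat provides a dedicated package, heartbeat-ldirectord, which makes lvs highly available and also health-checks the backend RSes.

yum -y install heartbeat-ldirectord-2.1.4-12.el6.x86_64.rpm

cp /usr/share/doc/heartbeat-ldirectord-2.1.4/ldirectord.cf /etc/ha.d/

vim /etc/ha.d/ldirectord.cf # the lvs rules and the health-check method for the backend RSes are both defined directly in the ldirectord configuration file

scp /etc/ha.d/ldirectord.cf root@node5:/etc/ha.d/

When configuring the ldirectord resource, add a parameter that gives the path of its configuration file.

With haresources, the ldirectord resource can be written as: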

node4 192.168.30.102/24/eth0/192.168.30.255 ldirectord::/etc/ha.d/ldirectord.cf

# Install the heartbeat-ldirectord package on both nodes

[root@node5 ~]# yum -y install heartbeat-ldirectord-2.1.4-12.el6.x86_64.rpm
...
Installed:
heartbeat-ldirectord.x86_64 0:2.1.4-12.el6

[root@node4 ~]# yum -y install heartbeat-ldirectord-2.1.4-12.el6.x86_64.rpm
...
Installed:
heartbeat-ldirectord.x86_64 0:2.1.4-12.el6

[root@node4 ~]# rpm -ql heartbeat-ldirectord
/etc/ha.d/resource.d/ldirectord
/etc/init.d/ldirectord
/etc/logrotate.d/ldirectord
/usr/sbin/ldirectord
/usr/share/doc/heartbeat-ldirectord-2.1.4
/usr/share/doc/heartbeat-ldirectord-2.1.4/COPYING
/usr/share/doc/heartbeat-ldirectord-2.1.4/README
/usr/share/doc/heartbeat-ldirectord-2.1.4/ldirectord.cf    # template for the ldirectord configuration file
/usr/share/man/man8/ldirectord.8.gz

[root@node4 ~]# ls /etc/ha.d/resource.d/    # a new resource agent, ldirectord, has appeared
apache db2 Filesystem ICP IPaddr IPsrcaddr ldirectord LVM MailTo portblock SendArp WAS Xinetd
AudibleAlarm Delay hto-mapfuncs ids IPaddr2 IPv6addr LinuxSCSI LVSSyncDaemonSwap OCF Raid1

[root@node4 ~]# cp /usr/share/doc/heartbeat-ldirectord-2.1.4/ldirectord.cf /etc/ha.d/

[root@node4 ~]# vim /etc/ha.d/ldirectord.cf
...
# Global Directives
checktimeout=3
checkinterval=1    # interval between checks
#fallback=127.0.0.1:80
autoreload=yes
#logfile="/var/log/ldirectord.log"
#logfile="local0"
#emailalert="[email protected]"
#emailalertfreq=3600
#emailalertstatus=all
quiescent=yes
# Sample for an http virtual service
virtual=192.168.30.102:80
        real=192.168.30.10:80 gate
        real=192.168.30.20:80 gate
        fallback=127.0.0.1:80 gate
        service=http    # protocol used to probe the backend RSes
        request="test.html"
        receive="OK"
        scheduler=rr
        #persistent=600
        #netmask=255.255.255.255
...

[root@node4 ~]# scp /etc/ha.d/ldirectord.cf root@node5:/etc/ha.d/    # synchronize ldirectord.cf between the nodes
ldirectord.cf

[root@node4 ~]# service httpd start;ssh root@node5 'service httpd start'    # start httpd on the HA nodes to act as the fallback server

[root@node3 ~]# vim /web/test.html    # provide a check page on the nfs share; its file name and content must match the definitions in ldirectord.cf
OK
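Before handing the check over to ldirectord, it can help to confirm by hand that each RS serves the check page with the expected content. The sketch below only approximates the check with curl and a substring match; it is not how ldirectord is implemented. `rs_alive` and `FETCH` are hypothetical names, and the 3-second limit mirrors checktimeout=3 from the configuration above.

```shell
#!/bin/sh
# Sketch: approximate an HTTP health check for one real server.
FETCH=${FETCH:-"curl -s --max-time 3"}   # fetch command; overridable for testing

rs_alive() {
    # $1 = URL of the check page, $2 = string the response must contain
    body=$($FETCH "$1") || return 1
    case $body in
        *"$2"*) return 0 ;;
        *) return 1 ;;
    esac
}

# Usage:
#   rs_alive http://192.168.30.10/test.html OK && echo "RS1 passes the check"
#   rs_alive http://192.168.30.20/test.html OK && echo "RS2 passes the check"
```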

[root@node4 ha.d]# hb_gui &    # start the GUI client to configure the resources
[1] 30759

[root@node3 ~]# curl 192.168.30.102
hello

Simulating a backend RS failure

[root@node1 ~]# service httpd stop
Stopping httpd: [ OK ]

[root@node2 ~]# service httpd stop
Stopping httpd: [ OK ]

[root@node4 ~]# ipvsadm -L -n    # the director has detected that the backend RSes are down and has automatically set the weight of the fallback server to 1
IP Virtual Server version 1.2.1 (size=4096)
Prot LocalAddress:Port Scheduler Flags
  -> RemoteAddress:Port Forward Weight ActiveConn InActConn
TCP 192.168.30.102:80 rr
  -> 127.0.0.1:80 Local 1 0 6
  -> 192.168.30.10:80 Route 0 0 1
  -> 192.168.30.20:80 Route 0 0 0
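Because quiescent=yes keeps failed servers in the table with weight 0 instead of removing them, the failed servers can be listed by filtering the ipvsadm output. A minimal sketch; `quiesced_servers` is a hypothetical helper and assumes the numeric column layout of `ipvsadm -L -n` shown above.

```shell
#!/bin/sh
# Sketch: print real servers whose weight is 0 in `ipvsadm -L -n` output (stdin).
quiesced_servers() {
    # Real-server rows start with "->"; the header row is skipped because its
    # second field does not begin with a digit. Field 4 is the weight.
    awk '$1 == "->" && $2 ~ /^[0-9]/ && $4 == 0 { print $2 }'
}

# Usage on the director, as root:
#   ipvsadm -L -n | quiesced_servers
```

Applied to the output above, it would list 192.168.30.10:80 and 192.168.30.20:80, the two stopped RSes.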

[root@node3 ~]# curl 192.168.30.102    # the client can still reach the service
hello