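I've recently been deploying Hadoop and ran into a problem with the Hive component when deploying it through Ambari. I'm not sure whether anyone else has hit this.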

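Problem description: I used Ambari to build a fully distributed Hadoop 2.0 cluster. While testing Hive, I followed the official documentation and ran the command below to check the file system root, but it always failed with an error saying it could not connect to MySQL (java.sql.SQLException: Access denied for user 'hive'@'hdb3.yc.com' (using password: YES)).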

[root@hdb3 bin]# /usr/lib/hive/bin/metatool -listFSRoot

Key part of the error output:

14/02/20 13:21:09 WARN bonecp.BoneCPConfig: Max Connections < 1. Setting to 20
14/02/20 13:21:09 ERROR Datastore.Schema: Failed initialising database.
Unable to open a test connection to the given database. JDBC url = jdbc:mysql://hdb3.yc.com/hive?createDatabaseIfNotExist=true, username = hive. Terminating connection pool. Original Exception: ------
java.sql.SQLException: Access denied for user 'hive'@'hdb3.yc.com' (using password: YES)
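MySQL checks privileges per user@host pair, so it can help to pull the exact account and client host out of a message like the one above before comparing it with the grants. The following is only a minimal sketch: the function name `parse_access_denied` and the returned dictionary keys are made up for illustration and are not part of Hive or MySQL.

```python
import re

# Matches the MySQL "Access denied" message as it appears in the log above.
ACCESS_DENIED = re.compile(
    r"Access denied for user '(?P<user>[^']*)'@'(?P<host>[^']*)' "
    r"\(using password: (?P<used_password>YES|NO)\)"
)


def parse_access_denied(log_text):
    """Return user, host and whether a password was sent, or None if no match.

    Hypothetical helper for illustration only.
    """
    match = ACCESS_DENIED.search(log_text)
    if match is None:
        return None
    return {
        "user": match.group("user"),
        "host": match.group("host"),
        "used_password": match.group("used_password") == "YES",
    }


if __name__ == "__main__":
    line = (
        "java.sql.SQLException: Access denied for user "
        "'hive'@'hdb3.yc.com' (using password: YES)"
    )
    print(parse_access_denied(line))
```

The extracted user and host can then be checked against the accounts created in MySQL.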

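Judging from the error message, my first guess was that the account had no permission to access the MySQL database. But when I tested it, connecting to the MySQL server with the hive user and its password worked fine. I had also granted privileges to the hive user in MySQL before the installation.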

#Create the Hive account and grant privileges:

mysql> CREATE USER 'hive'@'%' IDENTIFIED BY 'hive_passwd';

Query OK, 0 rows affected (0.00 sec)

mysql> GRANT ALL PRIVILEGES ON *.* TO 'hive'@'%';

Query OK, 0 rows affected (0.00 sec)

mysql> CREATE USER 'hive'@'hdb3.yc.com' IDENTIFIED BY 'hive_passwd';

mysql> GRANT ALL PRIVILEGES ON *.* TO 'hive'@'hdb3.yc.com';

Query OK, 0 rows affected (0.00 sec)

The connection test works:

[root@hda3 ~]# mysql -h hdb3.yc.com -u hive -p
Enter password:
Welcome to the MySQL monitor. Commands end with ; or \g.
Your MySQL connection id is 556
Server version: 5.1.73 Source distribution
Copyright (c) 2000, 2012, Oracle and/or its affiliates. All rights reserved.
Oracle is a registered trademark of Oracle Corporation and/or its affiliates. Other names may be trademarks of their respective owners.
Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.
mysql>

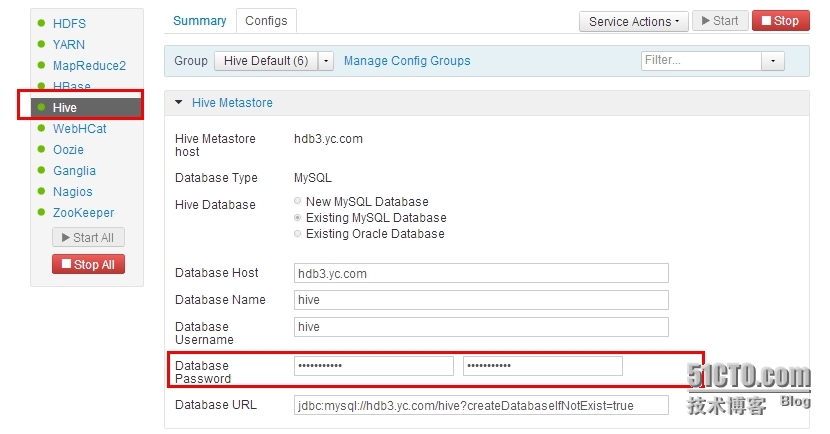

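So I checked the Hive configuration. On the Ambari management page (see the screenshot), the correct password had been entered in the Hive configuration. After restarting the Hive service, the test still failed with the same error.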

Later, checking the configuration files on the server revealed the cause:

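The web page above had the Hive database connection password configured, but the configuration file on the Hive server, /usr/lib/hive/conf/hive-site.xml, contained no password setting at all. In other words, it was missing the following property: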

<property>
  <name>javax.jdo.option.ConnectionPassword</name>
  <value>hive_passwd</value>
</property>
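To check quickly whether a given hive-site.xml actually contains this property, something like the following could be used. It is a rough sketch that assumes the usual Hadoop layout of `<property>` elements with `<name>` and `<value>` children; the helper names `get_hive_property` and `has_connection_password` are my own and not part of Hive or Ambari.

```python
import xml.etree.ElementTree as ET

PASSWORD_KEY = "javax.jdo.option.ConnectionPassword"


def get_hive_property(xml_text, name):
    """Return the <value> text of the named <property>, or None if it is absent.

    Hypothetical helper; assumes <property><name/><value/></property> entries.
    """
    root = ET.fromstring(xml_text)
    for prop in root.iter("property"):
        if prop.findtext("name") == name:
            return prop.findtext("value")
    return None


def has_connection_password(xml_text):
    """True if the metastore connection password property is present."""
    return get_hive_property(xml_text, PASSWORD_KEY) is not None


if __name__ == "__main__":
    # Sample content standing in for /usr/lib/hive/conf/hive-site.xml.
    sample = """
    <configuration>
      <property>
        <name>javax.jdo.option.ConnectionUserName</name>
        <value>hive</value>
      </property>
    </configuration>
    """
    print(has_connection_password(sample))
```

To run it against the real file, read /usr/lib/hive/conf/hive-site.xml into a string and pass it to `has_connection_password`.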

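I edited the configuration file by hand to add this password property. Without restarting the Hive service, I ran the command below again to check the file system root, and this time there was no error.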

[root@hdb3 bin]# /usr/lib/hive/bin/metatool -listFSRoot
Initializing HiveMetaTool..
14/02/20 14:29:22 INFO Configuration.deprecation: mapred.input.dir.recursive is deprecated. Instead, use mapreduce.input.fileinputformat.input.dir.recursive
14/02/20 14:29:22 INFO Configuration.deprecation: mapred.max.split.size is deprecated. Instead, use mapreduce.input.fileinputformat.split.maxsize
14/02/20 14:29:22 INFO Configuration.deprecation: mapred.min.split.size is deprecated. Instead, use mapreduce.input.fileinputformat.split.minsize
14/02/20 14:29:22 INFO Configuration.deprecation: mapred.min.split.size.per.rack is deprecated. Instead, use mapreduce.input.fileinputformat.split.minsize.per.rack
14/02/20 14:29:22 INFO Configuration.deprecation: mapred.min.split.size.per.node is deprecated. Instead, use mapreduce.input.fileinputformat.split.minsize.per.node
14/02/20 14:29:22 INFO Configuration.deprecation: mapred.reduce.tasks is deprecated. Instead, use mapreduce.job.reduces
14/02/20 14:29:22 INFO Configuration.deprecation: mapred.reduce.tasks.speculative.execution is deprecated. Instead, use mapreduce.reduce.speculative
14/02/20 14:29:23 INFO metastore.ObjectStore: ObjectStore, initialize called
14/02/20 14:29:23 INFO DataNucleus.Persistence: Property datanucleus.cache.level2 unknown - will be ignored
SLF4J: Class path contains multiple SLF4J bindings.
SLF4J: Found binding in [jar:file:/usr/lib/hadoop/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: Found binding in [jar:file:/usr/lib/hive/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]
SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]
14/02/20 14:29:24 WARN bonecp.BoneCPConfig: Max Connections < 1. Setting to 20
14/02/20 14:29:24 INFO metastore.ObjectStore: Setting MetaStore object pin classes with hive.metastore.cache.pinobjtypes="Table,Database,Type,FieldSchema,Order"
14/02/20 14:29:24 INFO metastore.ObjectStore: Initialized ObjectStore
14/02/20 14:29:25 WARN bonecp.BoneCPConfig: Max Connections < 1. Setting to 20
Listing FS Roots..
hdfs://hda1.yc.com:8020/apps/hive/warehouse

However, after the Hive service is restarted through the Ambari management interface, the property is automatically removed again.

As a result, /usr/lib/hive/bin/metatool -listFSRoot cannot connect to the MySQL database. I don't know whether something in my configuration is wrong or whether this is an Ambari bug.
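As a stopgap, the manual edit described above could be scripted so it is easy to re-apply. This is only a sketch of the hand edit, not a fix: it does not explain why Ambari drops the setting, and the change would presumably be lost again on the next Ambari-managed restart. The function name `ensure_property` is made up for illustration.

```python
import xml.etree.ElementTree as ET


def ensure_property(xml_text, name, value):
    """Add <property><name/><value/></property> if no property has this name.

    Returns (xml_text, changed). Hypothetical helper for illustration.
    Note: re-serializing with ElementTree drops the XML declaration and
    any comments from the original file.
    """
    root = ET.fromstring(xml_text)
    for prop in root.findall("property"):
        if prop.findtext("name") == name:
            # Already present: return the input untouched.
            return xml_text, False
    prop = ET.SubElement(root, "property")
    ET.SubElement(prop, "name").text = name
    ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode"), True


if __name__ == "__main__":
    # Sample content standing in for /usr/lib/hive/conf/hive-site.xml.
    sample = (
        "<configuration><property>"
        "<name>javax.jdo.option.ConnectionUserName</name><value>hive</value>"
        "</property></configuration>"
    )
    updated, changed = ensure_property(
        sample, "javax.jdo.option.ConnectionPassword", "hive_passwd"
    )
    print(changed)
    print(updated)
```

Back up the real hive-site.xml before writing any result to it, since the file is managed by Ambari.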