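I. Overview

1. Summary: MariaDB Galera Cluster is a system architecture that implements multi-master operation and real-time data synchronization on top of the MySQL InnoDB storage engine. The application layer does not need to implement read/write splitting; database read and write load can be distributed across the nodes according to predefined rules. At the data level it is fully compatible with MariaDB, Percona Server, and MySQL.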

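2. Features:

(1) Synchronous replication

(2) Active-active multi-master topology

(3) Reads and writes can go to any node in the cluster

(4) Automatic membership control; failed nodes are removed from the cluster automatically

(5) Automatic node joining

(6) True parallel replication, at row level

(7) Direct client connections through the native MySQL interface

(8) Every node holds a complete copy of the data

(9) Data synchronization between the database servers is implemented through the wsrep interface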

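3. Limitations:

(1) Replication currently supports only the InnoDB storage engine. Writes to tables in any other engine, including the mysql.* tables, are not replicated. DDL statements are replicated, however, so creating a user is replicated, whereas insert into mysql.user… is not.

(2) DELETE is not supported on tables without a primary key. Rows in such tables can be ordered differently on different nodes, so SELECT…LIMIT… may return different result sets.

(3) LOCK/UNLOCK TABLES is not supported in a multi-master setup, and neither are the locking functions GET_LOCK(), RELEASE_LOCK()…

(4) The query log cannot be stored in a table. If the query log is enabled, it can only be written to a file.

(5) The maximum transaction size is defined by wsrep_max_ws_rows and wsrep_max_ws_size. Any operation that exceeds these limits, such as a large LOAD DATA, is rejected.

(6) Because the cluster uses optimistic concurrency control, a transaction can still be aborted at the commit stage. If two transactions on different nodes write to the same row and both commit, the one that loses is aborted. For such cluster-level aborts, the cluster returns the deadlock error code (Error: 1213 SQLSTATE: 40001 (ER_LOCK_DEADLOCK)).

(7) XA transactions are not supported, because a rollback may occur on commit.

(8) The write throughput of the whole cluster is limited by its weakest node. If one node becomes slow, the whole cluster becomes slow. For stable high performance, all nodes should use identical hardware.

(9) A cluster should have at least 3 nodes.

(10) A faulty DDL statement can break the cluster.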
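Limitation (6) implies that a client may see error 1213 even though its own statement was valid, and simply running the transaction again is often enough. The following is a minimal sketch of that idea, not part of MariaDB or Galera: `run_with_retry` and the `RETRY_DELAY` variable are hypothetical names, and the check relies on the assumption that the wrapped command prints the usual `ERROR 1213` text of the mysql client when it hits the deadlock error.

```shell
#!/bin/bash
# run_with_retry MAX_ATTEMPTS COMMAND [ARGS...]
# Hypothetical helper: re-runs COMMAND while it fails with the cluster-level
# deadlock error (1213). Any other failure is returned immediately.
run_with_retry() {
    local max_attempts="$1"
    shift
    local attempt=1 output status
    while :; do
        output=$("$@" 2>&1)
        status=$?
        if [ "$status" -eq 0 ]; then
            printf '%s\n' "$output"
            return 0
        fi
        # Give up on non-deadlock errors or when the attempts are used up.
        if ! printf '%s' "$output" | grep -q 'ERROR 1213' ||
           [ "$attempt" -ge "$max_attempts" ]; then
            printf '%s\n' "$output" >&2
            return "$status"
        fi
        attempt=$((attempt + 1))
        # RETRY_DELAY (seconds) is an assumption of this sketch; default 1.
        sleep "${RETRY_DELAY:-1}"
    done
}
```

A call could look like `run_with_retry 3 mysql -h 172.16.21.188 -uappuser -p -e "UPDATE ..."`, where the user name and the statement are placeholders. Retrying is only safe when the whole transaction is re-executed, so multi-statement transactions should be wrapped as one unit.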

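II. Architecture

1. The classic Keepalived + LVS combination provides front-end load balancing and high availability. It can run on two dedicated hosts acting as master and backup. If the database cluster is small, for example two nodes, the combination can also run directly on the database hosts.

2. There are five hosts in total: two act as the Keepalived + LVS master and backup, and the other three are mdb1, mdb2, and mdb3. mdb1 serves as the reference node and executes no client SQL. This has the following benefits:

(1) Data consistency: because the reference node executes no client SQL, it is the node least likely to see transaction conflicts. If the cluster's data is ever found to be inconsistent, the data on the reference node should be the most accurate in the cluster.

(2) Data safety: because the reference node executes no client SQL, it is the node least likely to suffer a disastrous event. If the whole cluster goes down, the reference node should be the best node from which to recover the cluster.

(3) High availability: the reference node can act as a dedicated state snapshot donor. Because it does not serve clients, using it for SST does not affect users, and the front-end load balancer does not need to be reconfigured.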

III. Environment Preparation

1. System and software

| System environment | |
| --- | --- |
| OS | CentOS release 6.5 |
| Architecture | x86_64 |
| Kernel version | 2.6.32-431 |

| Software version | |
| --- | --- |
| Keepalived | 1.2.13 |
| LVS | 1.24 |
| MariaDB | 10.0.16 |
| socat | 1.7.3.0 |

2. Hosts

| Host | IP address |
| --- | --- |
| mdb1 (reference node) | 172.16.21.180 |
| mdb2 | 172.16.21.181 |
| mdb3 | 172.16.21.182 |
| ha1 (keepalived + lvs master) | 172.16.21.201 |
| ha2 (keepalived + lvs backup) | 172.16.21.202 |
| VIP | 172.16.21.188 |

IV. Cluster Installation and Configuration

Using host mdb1 as the example:

1. Configure the hosts file

Edit /etc/hosts and add the following content:

    [root@mdb1 ~]# vi /etc/hosts
    127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
    ::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
    172.16.21.201 ha1
    172.16.21.202 ha2
    172.16.21.180 mdb1
    172.16.21.181 mdb2
    172.16.21.182 mdb3

2. Prepare the YUM repositories

In addition to the official repositories that ship with the system, add the EPEL, Percona, and MariaDB repositories:

    [root@mdb1 ~]# vi /etc/yum.repos.d/MariaDB.repo
    # MariaDB 10.0 RedHat repository list - created 2015-03-04 02:45 UTC
    # http://mariadb.org/mariadb/repositories/
    [mariadb]
    name = MariaDB
    baseurl = http://yum.mariadb.org/10.0/rhel6-amd64
    gpgkey=https://yum.mariadb.org/RPM-GPG-KEY-MariaDB
    gpgcheck=1

    [root@mdb1 ~]# rpm -ivh http://dl.fedoraproject.org/pub/epel/6/x86_64/epel-release-6-8.noarch.rpm
    [root@mdb1 ~]# rpm --import https://yum.mariadb.org/RPM-GPG-KEY-MariaDB

    [root@mdb1 ~]# vi /etc/yum.repos.d/Percona.repo
    [percona]
    name = CentOS $releasever - Percona
    baseurl=http://repo.percona.com/centos/$releasever/os/$basearch/
    enabled = 1
    gpgkey = file:///etc/pki/rpm-gpg/RPM-GPG-KEY-percona
    gpgcheck = 1

    [root@mdb1 ~]# wget -O /etc/pki/rpm-gpg/RPM-GPG-KEY-percona http://www.percona.com/downloads/RPM-GPG-KEY-percona
    [root@mdb1 ~]# yum clean all

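3. Install socat

socat is a multipurpose networking tool. Its name comes from "Socket CAT", and it can be seen as a much more powerful version of netcat.

In practice, if socat is not installed, the final data synchronization of MariaDB-Galera-server fails with an error. Many configuration guides online do not mention this, so be sure to install it.

    [root@mdb1 ~]# tar -xzvf socat-1.7.3.0.tar.gz
    [root@mdb1 ~]# cd socat-1.7.3.0
    [root@mdb1 socat-1.7.3.0]# ./configure --prefix=/usr/local/socat
    [root@mdb1 socat-1.7.3.0]# make && make install
    [root@mdb1 socat-1.7.3.0]# ln -s /usr/local/socat/bin/socat /usr/sbin/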

4. Install MariaDB, Galera, and xtrabackup

    [root@mdb1 ~]# rpm -e --nodeps mysql-libs
    [root@mdb1 ~]# yum install MariaDB-Galera-server galera MariaDB-client xtrabackup
    [root@mdb1 ~]# chkconfig mysql on
    [root@mdb1 ~]# service mysql start

5. Set the MariaDB root password and harden the installation

    [root@mdb1 ~]# /usr/bin/mysql_secure_installation

6. Create the SST account used for database synchronization

    [root@mdb1 ~]# mysql -uroot -p
    Enter password:
    Welcome to the MariaDB monitor. Commands end with ; or \g.
    Your MariaDB connection id is 12
    Server version: 10.0.16-MariaDB-wsrep-log MariaDB Server, wsrep_25.10.r4144

    Copyright (c) 2000, 2015, Oracle, MariaDB Corporation Ab and others.

    Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

    MariaDB [(none)]> grant all privileges on *.* to sst@'%' identified by '123456';
    MariaDB [(none)]> flush privileges;
    MariaDB [(none)]> quit

7. Create the wsrep.cnf file

    [root@mdb1 ~]# cp /usr/share/mysql/wsrep.cnf /etc/my.cnf.d/
    [root@mdb1 ~]# vi /etc/my.cnf.d/wsrep.cnf

Only the following four lines need to be changed:

    wsrep_provider=/usr/lib64/galera/libgalera_smm.so
    wsrep_cluster_address="gcomm://"
    wsrep_sst_auth=sst:123456
    wsrep_sst_method=xtrabackup
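When the same four settings have to be applied on several hosts, editing by hand gets error-prone. The sketch below shows one way to script the change. `set_cnf_option` and `apply_wsrep_settings` are hypothetical helper names, and the code assumes GNU sed and values that contain no `|`, `&`, or backslash characters. It rewrites any existing line for a key, including a commented-out one, and appends the key if it is missing.

```shell
#!/bin/bash
# set_cnf_option FILE KEY VALUE
# Hypothetical helper: sets KEY=VALUE in FILE, replacing an existing
# (possibly commented-out) KEY line, or appending one if none exists.
set_cnf_option() {
    local file="$1" key="$2" value="$3"
    if grep -q "^[#[:space:]]*${key}[[:space:]]*=" "$file"; then
        sed -i "s|^[#[:space:]]*${key}[[:space:]]*=.*|${key}=${value}|" "$file"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Apply the four values from step 7 to the given wsrep.cnf.
apply_wsrep_settings() {
    local file="$1"
    set_cnf_option "$file" wsrep_provider /usr/lib64/galera/libgalera_smm.so
    set_cnf_option "$file" wsrep_cluster_address '"gcomm://"'
    set_cnf_option "$file" wsrep_sst_auth sst:123456
    set_cnf_option "$file" wsrep_sst_method xtrabackup
}
```

On mdb1 this would be run as `apply_wsrep_settings /etc/my.cnf.d/wsrep.cnf`. It is worth reviewing the resulting file afterwards, since a commented example line for the same key is rewritten as well.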

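Note:

"gcomm://" is a special address that is used only when the Galera cluster is bootstrapped for the first time.

If the first node is shut down after the cluster is up, "gcomm://" must be changed to the cluster address of another node before the first node is started again. For example, for the next start change it to:

    wsrep_cluster_address="gcomm://172.16.21.182:4567"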

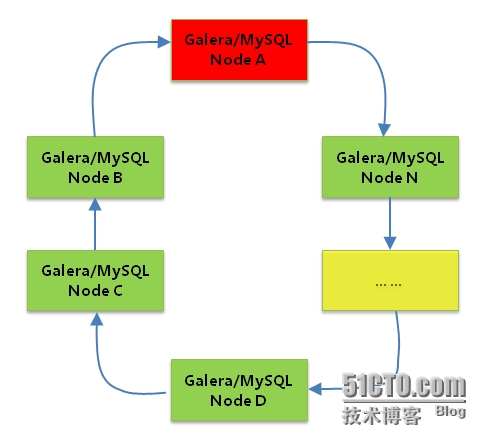

In the figure, Node A is our mdb1, and Node N is the host mdb3 that is added later.

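8. Edit /etc/my.cnf

Add the following line:

    !includedir /etc/my.cnf.d/

It is also best to set the datadir path explicitly in /etc/my.cnf:

    datadir = /var/lib/mysql

Without it, the server may fail with an error saying the path cannot be found.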

9. Disable the iptables firewall and SELinux

Cluster startup failures are very often caused by a firewall that is still active or by required ports that have not been opened. The simplest fix is to flush iptables and disable SELinux:

    [root@mdb1 ~]# iptables -F
    [root@mdb1 ~]# iptables-save > /etc/sysconfig/iptables
    [root@mdb1 ~]# setenforce 0
    [root@mdb1 ~]# vi /etc/selinux/config
    # This file controls the state of SELinux on the system.
    # SELINUX= can take one of these three values:
    #     enforcing - SELinux security policy is enforced.
    #     permissive - SELinux prints warnings instead of enforcing.
    #     disabled - No SELinux policy is loaded.
    SELINUX=disabled
    # SELINUXTYPE= can take one of these two values:
    #     targeted - Targeted processes are protected,
    #     mls - Multi Level Security protection.
    SELINUXTYPE=targeted
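Where flushing the firewall is not acceptable, opening only the required ports is the alternative mentioned above. The sketch below prints candidate rules rather than applying them. `galera_iptables_rules` is a hypothetical helper, and the port list is an assumption based on Galera's usual defaults: 3306 for clients, 4567 for replication traffic, 4568 for IST, and 4444 for SST. The subnet 172.16.21.0/24 is inferred from the host table and the 255.255.255.0 netmask used in this setup.

```shell
#!/bin/bash
# galera_iptables_rules SUBNET
# Hypothetical helper: prints (does not run) iptables commands that accept
# cluster traffic from SUBNET. Ports are Galera's usual defaults.
galera_iptables_rules() {
    local subnet="$1" port
    for port in 3306 4567 4568 4444; do
        echo "iptables -I INPUT -s $subnet -p tcp --dport $port -j ACCEPT"
    done
    # Galera can also use UDP on 4567 (multicast replication).
    echo "iptables -I INPUT -s $subnet -p udp --dport 4567 -j ACCEPT"
}

galera_iptables_rules 172.16.21.0/24
```

After reviewing the printed commands, they can be run as root and saved with `iptables-save > /etc/sysconfig/iptables`, as in the step above. Printing first keeps the sketch safe to try on a production host.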

10. Restart MariaDB

    [root@mdb1 ~]# service mysql restart
    [root@mdb1 ~]# netstat -tulpn | grep -e 4567 -e 3306
    tcp 0 0 0.0.0.0:4567 0.0.0.0:* LISTEN 11325/mysqld
    tcp 0 0 0.0.0.0:3306 0.0.0.0:* LISTEN 11325/mysqld

This completes the configuration of a single node.

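11. Add mdb2 and mdb3 to the cluster

The cluster is linked head to tail. Put simply, each node uses a different IP in "gcomm://": mdb3 -> mdb2 -> mdb1 -> mdb3. In production, consider using mdb1 as the reference node that executes no client SQL, so that it can safeguard data consistency and be used for data recovery. The procedure is as follows:

(1) Install and configure the other two hosts by following steps 1-10 above.

(2) The only difference is in step 7, where wsrep_cluster_address must be set to the address of the corresponding host:

mdb2: wsrep_cluster_address="gcomm://172.16.21.180:4567"

mdb3: wsrep_cluster_address="gcomm://172.16.21.181:4567"

If more hosts are to join the cluster, continue the same pattern: point wsrep_cluster_address on each host at the previous host, and point the first host of the cluster at the last one.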
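The head-to-tail pattern can be generated mechanically once the node list is known. The following sketch prints, for each node, the wsrep_cluster_address line that points at the previous node in the list, with the first node pointing at the last. `ring_addresses` is a hypothetical helper name, and port 4567 is taken from the examples in this step.

```shell
#!/bin/bash
# ring_addresses NODE...
# Hypothetical helper: for each node, print the wsrep_cluster_address that
# points at the previous node; the first node points at the last one.
ring_addresses() {
    local node prev
    # Walk the arguments once so that prev ends up as the last node.
    for prev in "$@"; do :; done
    for node in "$@"; do
        printf '%s: wsrep_cluster_address="gcomm://%s:4567"\n' "$node" "$prev"
        prev=$node
    done
}

ring_addresses 172.16.21.180 172.16.21.181 172.16.21.182
```

For the three hosts in this setup, the output pairs 172.16.21.180 with 172.16.21.182, 172.16.21.181 with 172.16.21.180, and 172.16.21.182 with 172.16.21.181, which matches the values given for mdb1, mdb2, and mdb3. Adding a fourth address to the argument list extends the ring without further changes.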

12. Finally, start mdb2 and mdb3

    [root@mdb2 ~]# service mysql start
    [root@mdb3 ~]# service mysql start

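13. Add a Galera arbitrator to the cluster

A Galera Cluster with only two nodes, like other cluster software, has to deal with "split-brain" in extreme situations.

To avoid this problem, Galera provides the "arbitrator".

An arbitrator node holds no data. Its role is to arbitrate when the cluster is partitioned, and a cluster can have more than one arbitrator node.

Adding an arbitrator node to the cluster is simple; run the following command:

    [root@mdb1 ~]# garbd -a gcomm://172.16.21.180:4567 -g my_wsrep_cluster -d

Parameters:

-d run as a daemon

-a cluster address

-g cluster name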

14. Verify that the Galera cluster is installed and running correctly

    MariaDB [(none)]> show status like 'ws%';

| Variable_name | Value | Note |
| --- | --- | --- |
| wsrep_local_state_uuid | 64784714-c23a-11e4-b7d7-5edbdea0e62c | UUID that uniquely identifies the cluster state |
| wsrep_protocol_version | 5 | |
| wsrep_last_committed | 94049 | sequence number of the last committed transaction |
| wsrep_replicated | 0 | |
| wsrep_replicated_bytes | 0 | |
| wsrep_repl_keys | 0 | |
| wsrep_repl_keys_bytes | 0 | |
| wsrep_repl_data_bytes | 0 | |
| wsrep_repl_other_bytes | 0 | |
| wsrep_received | 3 | |
| wsrep_received_bytes | 287 | |
| wsrep_local_commits | 0 | transactions committed locally |
| wsrep_local_cert_failures | 0 | local transactions that failed certification |
| wsrep_local_replays | 0 | |
| wsrep_local_send_queue | 0 | |
| wsrep_local_send_queue_avg | 0.333333 | average send queue length |
| wsrep_local_recv_queue | 0 | |
| wsrep_local_recv_queue_avg | 0.000000 | |
| wsrep_local_cached_downto | 18446744073709551615 | |
| wsrep_flow_control_paused_ns | 0 | |
| wsrep_flow_control_paused | 0.000000 | |
| wsrep_flow_control_sent | 0 | |
| wsrep_flow_control_recv | 0 | |
| wsrep_cert_deps_distance | 0.000000 | degree of possible parallelism |
| wsrep_apply_oooe | 0.000000 | |
| wsrep_apply_oool | 0.000000 | |
| wsrep_apply_window | 0.000000 | |
| wsrep_commit_oooe | 0.000000 | |
| wsrep_commit_oool | 0.000000 | |
| wsrep_commit_window | 0.000000 | |
| wsrep_local_state | 4 | |
| wsrep_local_state_comment | Synced | |
| wsrep_cert_index_size | 0 | |
| wsrep_causal_reads | 0 | |
| wsrep_cert_interval | 0.000000 | |
| wsrep_incoming_addresses | 172.16.21.180:3306,172.16.21.182:3306,172.16.21.188:3306 | |
| wsrep_cluster_conf_id | 19 | |
| wsrep_cluster_size | 3 | number of cluster members |
| wsrep_cluster_state_uuid | 64784714-c23a-11e4-b7d7-5edbdea0e62c | |
| wsrep_cluster_status | Primary | the node belongs to the primary component |
| wsrep_connected | ON | whether the node is currently connected |
| wsrep_local_bf_aborts | 0 | |
| wsrep_local_index | 0 | |
| wsrep_provider_name | Galera | |
| wsrep_provider_vendor | Codership Oy <[email protected]> | |
| wsrep_provider_version | 25.3.5(rXXXX) | |
| wsrep_ready | ON | |
| wsrep_thread_count | 3 | |

If wsrep_ready is ON, the MariaDB Galera cluster is running correctly.

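Notes on the monitoring variables:

(1) Cluster integrity checks:

wsrep_cluster_state_uuid: should be identical on every node in the cluster. A node with a different value is not connected to the cluster.

wsrep_cluster_conf_id: normally identical on all nodes. A different value means the node is temporarily "partitioned"; the values should converge again once network connectivity between the nodes is restored.

wsrep_cluster_size: if this matches the expected number of nodes, all cluster nodes are connected.

wsrep_cluster_status: the status of the cluster component. Any value other than "Primary" indicates a "partition" or "split-brain" condition.

(2) Node status checks:

wsrep_ready: ON means the node can accept SQL load. If it is OFF, check wsrep_connected.

wsrep_connected: if this is OFF and wsrep_ready is also OFF, the node is not connected to the cluster. (A misconfigured wsrep_cluster_address or wsrep_cluster_name is a likely cause; check the error log for the exact error.)

wsrep_local_state_comment: if wsrep_connected is ON but wsrep_ready is OFF, this variable shows the reason.

(3) Replication health checks:

wsrep_flow_control_paused: the fraction of time replication has been paused, which shows how much slave lag is slowing the cluster down. The value ranges from 0 to 1; the closer to 0 the better, and 1 means replication has stopped completely. Tuning wsrep_slave_threads can improve it.

wsrep_cert_deps_distance: how many transactions can be applied in parallel. wsrep_slave_threads should not be set much higher than this value.

wsrep_flow_control_sent: how many times this node has asked for replication to be paused.

wsrep_local_recv_queue_avg: the average length of the slave transaction queue. A high value is an early sign of a slave bottleneck.

The slowest node has the highest values of wsrep_flow_control_sent and wsrep_local_recv_queue_avg. Lower values for both are better.

(4) Detecting slow network problems:

wsrep_local_send_queue_avg: an early sign of a network bottleneck. A high value suggests that the network may be the bottleneck.

Number of conflicts or deadlocks:

wsrep_last_committed: the sequence number of the last committed transaction

wsrep_local_cert_failures and wsrep_local_bf_aborts: the number of rollbacks, that is, detected conflicts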
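The integrity and node status checks above lend themselves to a small script, for example as the basis of a monitoring probe. The sketch below reads tab-separated `name value` pairs, which is the shape the mysql client produces with its `-N -B` options, and applies the checks on wsrep_ready, wsrep_cluster_status, wsrep_local_state_comment, and wsrep_cluster_size. `check_wsrep_health`, its exit codes, and its message format are assumptions of this sketch rather than anything defined by Galera.

```shell
#!/bin/bash
# check_wsrep_health EXPECTED_SIZE
# Hypothetical helper: reads "name TAB value" lines on stdin and applies the
# checks described above. Exit codes (0 ok, 1 warning, 2 critical) are this
# sketch's own convention.
check_wsrep_health() {
    local expected_size="$1"
    local tab name value
    local ready="" status="" size="" state=""
    tab=$(printf '\t')
    while IFS="$tab" read -r name value; do
        case "$name" in
            wsrep_ready) ready=$value ;;
            wsrep_cluster_status) status=$value ;;
            wsrep_cluster_size) size=$value ;;
            wsrep_local_state_comment) state=$value ;;
        esac
    done
    if [ "$ready" != "ON" ]; then
        echo "CRITICAL: wsrep_ready=$ready"
        return 2
    fi
    if [ "$status" != "Primary" ]; then
        echo "CRITICAL: wsrep_cluster_status=$status"
        return 2
    fi
    if [ "$state" != "Synced" ]; then
        echo "WARNING: wsrep_local_state_comment=$state"
        return 1
    fi
    if [ "$size" != "$expected_size" ]; then
        echo "WARNING: wsrep_cluster_size=$size, expected $expected_size"
        return 1
    fi
    echo "OK: $status, $state, $size nodes"
    return 0
}
```

On a cluster node it could be fed with `mysql -uroot -p -N -B -e "SHOW STATUS LIKE 'wsrep%'" | check_wsrep_health 3`. Keeping the parsing separate from the mysql call makes the checks easy to try against saved output.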

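15. Test that data is synchronized

On each node in turn, create a database and a table, then drop them, and check whether the other nodes follow. With a correct configuration they should stay in sync; the detailed steps are omitted here.

V. Keepalived + LVS Configuration

1. Install with YUM

    [root@ha1 ~]# yum install keepalived ipvsadm
    [root@ha2 ~]# yum install keepalived ipvsadm

2. Keepalived configuration

Configuration for host ha1:

[root@ha1 ~]# vi /etc/keepalived/keepalived.conf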

global_defs {

notification_email {

[email protected]

}

notification_email_from root@localhost

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_201

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.16.21.188/24 dev eth0 label eth0:0

}

}

virtual_server 172.16.21.188 3306 {

delay_loop 6

lb_algo rr

lb_kind DR

nat_mask 255.255.255.0

persistence_timeout 50

protocol TCP

real_server 172.16.21.181 3306 {

weight 1

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 3306

}

}

real_server 172.16.21.182 3306 {

weight 1

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 3306

}

}

}

Configuration for the backup host ha2:

global_defs {

notification_email {

[email protected]

}

notification_email_from root@localhost

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_202

}

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 51

priority 99

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

172.16.21.188/24 dev eth0 label eth0:0

}

}

virtual_server 172.16.21.188 3306 {

delay_loop 6

lb_algo rr

lb_kind DR

nat_mask 255.255.255.0

persistence_timeout 50

protocol TCP

real_server 172.16.21.181 3306 {

weight 1

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 3306

}

}

real_server 172.16.21.182 3306 {

weight 1

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

connect_port 3306

}

}

}

3. LVS script configuration

Configure the following script on both real servers:

[root@mdb2 ~]# vi /etc/init.d/lvsdr.sh

#!/bin/bash

# description: Config realserver lo and apply noarp

VIP=172.16.21.188

. /etc/rc.d/init.d/functions

case "$1" in

start)

/sbin/ifconfig lo down

/sbin/ifconfig lo up

echo "1" >/proc/sys/net/ipv4/conf/lo/arp_ignore

echo "2" >/proc/sys/net/ipv4/conf/lo/arp_announce

echo "1" >/proc/sys/net/ipv4/conf/all/arp_ignore

echo "2" >/proc/sys/net/ipv4/conf/all/arp_announce

/sbin/sysctl -p >/dev/null 2>&1

/sbin/ifconfig lo:0 $VIP netmask 255.255.255.255 up

/sbin/route add -host $VIP dev lo:0

echo "LVS-DR real server starts successfully."

;;

stop)

/sbin/ifconfig lo:0 down

/sbin/route del $VIP >/dev/null 2>&1

echo "0" >/proc/sys/net/ipv4/conf/lo/arp_ignore

echo "0" >/proc/sys/net/ipv4/conf/lo/arp_announce

echo "0" >/proc/sys/net/ipv4/conf/all/arp_ignore

echo "0" >/proc/sys/net/ipv4/conf/all/arp_announce

echo "LVS-DR real server stopped."

;;

status)

isLoOn=`/sbin/ifconfig lo:0 | grep "$VIP"`

isRoOn=`/bin/netstat -rn | grep "$VIP"`

if [ "$isLoOn" == "" -a "$isRoOn" == "" ]; then

echo "LVS-DR real server is not running."

# Exit with 3 only when the VIP is neither configured nor routed.

exit 3

else

echo "LVS-DR real server is running."

fi

;;

*)

echo "Usage: $0 {start|stop|status}"

exit 1

esac

exit 0

[root@mdb2 ~]# chmod +x /etc/init.d/lvsdr.sh

[root@mdb3 ~]# chmod +x /etc/init.d/lvsdr.sh

4. Start Keepalived and LVS

    [root@mdb2 ~]# /etc/init.d/lvsdr.sh start
    [root@mdb3 ~]# /etc/init.d/lvsdr.sh start
    [root@ha1 ~]# service keepalived start
    [root@ha2 ~]# service keepalived start

5. Enable start at boot

    [root@mdb2 ~]# echo "/etc/init.d/lvsdr.sh start" >> /etc/rc.d/rc.local
    [root@mdb3 ~]# echo "/etc/init.d/lvsdr.sh start" >> /etc/rc.d/rc.local
    [root@ha1 ~]# chkconfig keepalived on
    [root@ha2 ~]# chkconfig keepalived on

6. Testing

Stop keepalived on the master ha1, then watch the log and the IP addresses on the backup ha2:

    [root@ha1 ~]# service keepalived stop
    [root@ha2 ~]# tail -f /var/log/messages
    Mar 5 10:36:03 ha2 Keepalived_healthcheckers[11249]: Opening file '/etc/keepalived/keepalived.conf'.
    Mar 5 10:36:03 ha2 Keepalived_healthcheckers[11249]: Configuration is using : 14697 Bytes
    Mar 5 10:36:03 ha2 Keepalived_vrrp[11250]: Opening file '/etc/keepalived/keepalived.conf'.
    Mar 5 10:36:03 ha2 Keepalived_vrrp[11250]: Configuration is using : 63250 Bytes
    Mar 5 10:36:03 ha2 Keepalived_vrrp[11250]: Using LinkWatch kernel netlink reflector...
    Mar 5 10:36:03 ha2 Keepalived_healthcheckers[11249]: Using LinkWatch kernel netlink reflector...
    Mar 5 10:36:03 ha2 Keepalived_healthcheckers[11249]: Activating healthchecker for service [172.16.21.181]:3306
    Mar 5 10:36:03 ha2 Keepalived_healthcheckers[11249]: Activating healthchecker for service [172.16.21.182]:3306
    Mar 5 10:36:03 ha2 Keepalived_vrrp[11250]: VRRP_Instance(VI_1) Entering BACKUP STATE
    Mar 5 10:36:03 ha2 Keepalived_vrrp[11250]: VRRP sockpool: [ifindex(2), proto(112), unicast(0), fd(10,11)]
    Mar 6 08:41:53 ha2 Keepalived_vrrp[11250]: VRRP_Instance(VI_1) Transition to MASTER STATE
    Mar 6 08:41:54 ha2 Keepalived_vrrp[11250]: VRRP_Instance(VI_1) Entering MASTER STATE
    Mar 6 08:41:54 ha2 Keepalived_vrrp[11250]: VRRP_Instance(VI_1) setting protocol VIPs.
    Mar 6 08:41:54 ha2 Keepalived_vrrp[11250]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 172.16.21.188
    Mar 6 08:41:54 ha2 Keepalived_healthcheckers[11249]: Netlink reflector reports IP 172.16.21.188 added
    Mar 6 08:41:59 ha2 Keepalived_vrrp[11250]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 172.16.21.188

    [root@ha2 ~]# ifconfig
    eth0      Link encap:Ethernet HWaddr 00:0C:29:1D:77:9C
              inet addr:172.16.21.202 Bcast:172.16.21.255 Mask:255.255.255.0
              inet6 addr: fe80::20c:29ff:fe1d:779c/64 Scope:Link
              UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
              RX packets:2969375670 errors:0 dropped:0 overruns:0 frame:0
              TX packets:2966841735 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:1000
              RX bytes:225643845081 (210.1 GiB) TX bytes:222421642143 (207.1 GiB)

    eth0:0    Link encap:Ethernet HWaddr 00:0C:29:1D:77:9C
              inet addr:172.16.21.188 Bcast:0.0.0.0 Mask:255.255.255.0
              UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

    lo        Link encap:Local Loopback
              inet addr:127.0.0.1 Mask:255.0.0.0
              inet6 addr: ::1/128 Scope:Host
              UP LOOPBACK RUNNING MTU:16436 Metric:1
              RX packets:55694 errors:0 dropped:0 overruns:0 frame:0
              TX packets:55694 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:3176387 (3.0 MiB) TX bytes:3176387 (3.0 MiB)

Start keepalived on ha1 again, then watch the log and the IP addresses on ha1:

    [root@ha1 ~]# service keepalived start
    [root@ha1 ~]# tail -f /var/log/messages
    Mar 6 08:54:42 ha1 Keepalived[13310]: Starting Keepalived v1.2.13 (10/15,2014)
    Mar 6 08:54:42 ha1 Keepalived[13311]: Starting Healthcheck child process, pid=13312
    Mar 6 08:54:42 ha1 Keepalived[13311]: Starting VRRP child process, pid=13313
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: Netlink reflector reports IP 172.16.21.181 added
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: Netlink reflector reports IP fe80::20c:29ff:fe4d:8e83 added
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Netlink reflector reports IP 172.16.21.181 added
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: Registering Kernel netlink reflector
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Netlink reflector reports IP fe80::20c:29ff:fe4d:8e83 added
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: Registering Kernel netlink command channel
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: Registering gratuitous ARP shared channel
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Registering Kernel netlink reflector
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Registering Kernel netlink command channel
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: Opening file '/etc/keepalived/keepalived.conf'.
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Opening file '/etc/keepalived/keepalived.conf'.
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: Configuration is using : 63252 Bytes
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: Using LinkWatch kernel netlink reflector...
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Configuration is using : 14699 Bytes
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Using LinkWatch kernel netlink reflector...
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Activating healthchecker for service [172.16.21.181]:3306
    Mar 6 08:54:42 ha1 Keepalived_healthcheckers[13312]: Activating healthchecker for service [172.16.21.182]:3306
    Mar 6 08:54:42 ha1 Keepalived_vrrp[13313]: VRRP sockpool: [ifindex(2), proto(112), unicast(0), fd(10,11)]
    Mar 6 08:54:43 ha1 Keepalived_vrrp[13313]: VRRP_Instance(VI_1) Transition to MASTER STATE
    Mar 6 08:54:43 ha1 Keepalived_vrrp[13313]: VRRP_Instance(VI_1) Received lower prio advert, forcing new election
    Mar 6 08:54:44 ha1 Keepalived_vrrp[13313]: VRRP_Instance(VI_1) Entering MASTER STATE
    Mar 6 08:54:44 ha1 Keepalived_vrrp[13313]: VRRP_Instance(VI_1) setting protocol VIPs.
    Mar 6 08:54:44 ha1 Keepalived_vrrp[13313]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 172.16.21.188
    Mar 6 08:54:44 ha1 Keepalived_healthcheckers[13312]: Netlink reflector reports IP 172.16.21.188 added
    Mar 6 08:54:49 ha1 Keepalived_vrrp[13313]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth0 for 172.16.21.188

    [root@ha1 ~]# ifconfig
    eth0      Link encap:Ethernet HWaddr 00:0C:29:4D:8E:83
              inet addr:172.16.21.201 Bcast:172.16.21.255 Mask:255.255.255.0
              inet6 addr: fe80::20c:29ff:fe4d:8e83/64 Scope:Link
              UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1
              RX packets:2968402607 errors:0 dropped:0 overruns:0 frame:0
              TX packets:2966256067 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:1000
              RX bytes:224206102960 (208.8 GiB) TX bytes:221258814612 (206.0 GiB)

    eth0:0    Link encap:Ethernet HWaddr 00:0C:29:4D:8E:83
              inet addr:172.16.21.188 Bcast:0.0.0.0 Mask:255.255.255.0
              UP BROADCAST RUNNING MULTICAST MTU:1500 Metric:1

    lo        Link encap:Local Loopback
              inet addr:127.0.0.1 Mask:255.0.0.0
              inet6 addr: ::1/128 Scope:Host
              UP LOOPBACK RUNNING MTU:16436 Metric:1
              RX packets:54918 errors:0 dropped:0 overruns:0 frame:0
              TX packets:54918 errors:0 dropped:0 overruns:0 carrier:0
              collisions:0 txqueuelen:0
              RX bytes:3096422 (2.9 MiB) TX bytes:3096422 (2.9 MiB)

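This completes the whole configuration.

VI. Summary

When MySQL multi-master replication comes up, most people probably think of the MySQL + MMM architecture. MariaDB Galera Cluster is a good replacement for it and is more reliable. For a detailed comparison, see the article at http://www.oschina.net/translate/from-mysql-mmm-to-mariadb-galera-cluster-a-high-availability-makeover.

Of course, MariaDB Galera Cluster does not suit every situation that calls for replication; the choice has to follow from your own requirements. If data consistency matters most to you, and you have many writes and updates but the write volume is not very large, MariaDB Galera Cluster is a good fit. If your workload is mostly queries and read/write splitting is easy to implement, plain replication is the better choice: it is simple to use, a single master guarantees data consistency, and multiple slaves can serve reads and share the load. As long as data consistency and uniqueness are handled well, replication suits you better. MariaDB Galera Cluster follows the "wooden barrel" principle: under heavy write load, the synchronization speed is determined by the node with the lowest I/O capacity, so overall write speed is considerably slower than with replication.

If anything in this article is missing or wrong, please leave a comment or contact me. My email is [email protected]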
