Prerequisites:

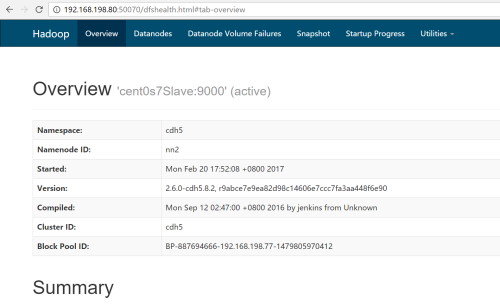

Deploy a Hadoop cluster

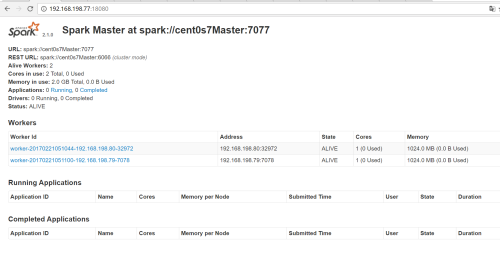

Deploy a Spark cluster

Install Python (I installed Anaconda3, with Python 3.6)

Configure the environment variables:

```bash
vi ~/.bashrc
# add the following lines:
export SPARK_HOME=/opt/spark/current
export PYTHONPATH=$SPARK_HOME/python/:$SPARK_HOME/python/lib/py4j-0.10.4-src.zip
# then reload the file in the current shell:
# source ~/.bashrc
```
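The `PYTHONPATH` above hard-codes `py4j-0.10.4-src.zip`, but the bundled py4j version changes between Spark releases. A minimal sketch that looks the zip up instead, assuming the usual `$SPARK_HOME/python/lib/py4j-*-src.zip` layout (`build_pythonpath` is a hypothetical helper, not part of Spark):

```python
import glob
import os


def build_pythonpath(spark_home):
    """Return a PYTHONPATH value for the PySpark bundled under spark_home.

    Hypothetical helper: it globs for the py4j source zip instead of
    hard-coding its version number.
    """
    python_dir = os.path.join(spark_home, "python")
    pattern = os.path.join(python_dir, "lib", "py4j-*-src.zip")
    py4j_zips = sorted(glob.glob(pattern))
    if not py4j_zips:
        raise FileNotFoundError("no py4j-*-src.zip found under " + python_dir)
    # A Spark distribution normally ships exactly one py4j zip; if there
    # are several, this picks the lexicographically last one.
    return os.pathsep.join([python_dir, py4j_zips[-1]])
```

You could then print the line to paste into `.bashrc`, e.g. `print("export PYTHONPATH=" + build_pythonpath("/opt/spark/current"))`.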

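PS: Spark ships with its own pyspark module, but the pyspark bundled with the Spark 2.1 I downloaded from the official site is incompatible with Python 3.6 because of a bug. If you are also on Python 3, I suggest downloading the latest pyspark from GitHub and using it to replace the pyspark under $SPARK_HOME/python.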
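If you want a script to warn you before running into this, here is a rough guard. It assumes, to my understanding, that the Python 3.6 breakage was fixed in Spark 2.1.1; `FIRST_FIXED`, `parse_version` and `pyspark_ok_for_python` are hypothetical names, and the check deliberately treats every older release as affected:

```python
import re
import sys

# ASSUMPTION: first Spark release whose pyspark works on Python 3.6.
FIRST_FIXED = (2, 1, 1)


def parse_version(text):
    """Turn a version string such as '2.1.0' into a tuple of ints (2, 1, 0).

    Parsing stops at the first component without leading digits,
    so '2.2.0.dev0' becomes (2, 2, 0).
    """
    parts = []
    for piece in text.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def pyspark_ok_for_python(spark_version, python_version=sys.version_info[:2]):
    """Return False if this Spark version is assumed broken on this Python."""
    if tuple(python_version) < (3, 6):
        return True
    return parse_version(spark_version) >= FIRST_FIXED
```

Feed it the version string reported by `spark-submit --version`.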

Time to level up:

1. Start the Hadoop cluster and the Spark cluster

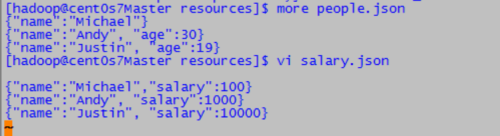
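For reference, here is a rough sketch of that startup. It assumes the stock `sbin` scripts and that HDFS is all this example needs from Hadoop (the program connects to a standalone Spark master, not YARN; add `$HADOOP_HOME/sbin/start-yarn.sh` if you also use YARN). `startup_commands` and `start_clusters` are hypothetical helpers and the install paths are placeholders:

```python
import os
import subprocess


def startup_commands(hadoop_home, spark_home):
    """Return the scripts that bring up HDFS and a standalone Spark cluster.

    Full paths are used on purpose: Hadoop and Spark both ship a script
    named start-all.sh, so relying on PATH can run the wrong one.
    """
    return [
        [os.path.join(hadoop_home, "sbin", "start-dfs.sh")],
        [os.path.join(spark_home, "sbin", "start-all.sh")],
    ]


def start_clusters(hadoop_home, spark_home, dry_run=True):
    """Print the commands (dry run) or execute them in order on the master node."""
    for cmd in startup_commands(hadoop_home, spark_home):
        if dry_run:
            print(" ".join(cmd))
        else:
            # check=True stops at the first script that exits non-zero
            subprocess.run(cmd, check=True)
```

Calling `start_clusters("/opt/hadoop/current", "/opt/spark/current")` with the default `dry_run=True` only prints the two commands, so you can check the paths before actually running them.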

2. Upload the data to HDFS. people.json is the sample data shipped with Spark; salary.json is a file I created myself.
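One thing to watch when creating your own file such as salary.json: Spark 2.1's `spark.read.json` expects JSON Lines, meaning each line holds one complete JSON object, which is also how the bundled people.json is laid out. A pretty-printed, multi-line JSON document will not be parsed as intended. A minimal sketch for writing such a file; the salary values are HYPOTHETICAL because the real salary.json isn't shown (only Justin's 10000 appears in the final result), and `to_json_lines` / `write_json_lines` are hypothetical helpers:

```python
import json

# HYPOTHETICAL rows: only Justin's salary of 10000 is confirmed by the post.
salaries = [
    {"name": "Michael", "salary": 8000},
    {"name": "Andy", "salary": 6000},
    {"name": "Justin", "salary": 10000},
]


def to_json_lines(records):
    """Serialize records as JSON Lines: one complete JSON object per line."""
    return "".join(json.dumps(record) + "\n" for record in records)


def write_json_lines(path, records):
    """Write records to path in the line-delimited format spark.read.json reads."""
    with open(path, "w", encoding="utf-8") as f:
        f.write(to_json_lines(records))
```

`write_json_lines("salary.json", salaries)` produces a file you can then upload with `hadoop fs -put`.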

```bash
hadoop fs -mkdir -p /user/hadoop/examples/src/main/resources/
hadoop fs -put people.json /user/hadoop/examples/src/main/resources/
hadoop fs -put salary.json /user/hadoop/examples/src/main/resources/
```
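The program in step 3 reads `examples/src/main/resources/people.json` without a leading slash, yet the files were uploaded under `/user/hadoop/`. That works because HDFS resolves a relative path against the client user's home directory, `/user/<user>` by default. A small sketch of that rule, assuming `fs.defaultFS` points at HDFS and the job runs as user `hadoop` (`resolve_hdfs_path` is a hypothetical helper; it ignores full `hdfs://` URIs):

```python
import posixpath


def resolve_hdfs_path(path, user):
    """Resolve a path the way an HDFS client running as `user` would.

    Absolute paths are kept as-is; relative paths are taken relative to
    the default home directory /user/<user>. Full URIs are not handled.
    """
    if path.startswith("/"):
        return posixpath.normpath(path)
    return posixpath.normpath(posixpath.join("/user", user, path))
```

If you submit the job as a different Linux user, the same relative path points at that user's home directory and the files will not be found.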

3. Write the Python Spark SQL program

# -*- coding: utf-8 -*-

"""

Created on Wed Feb 22 15:07:44 2017

練習SparkSQL

@author: wanghuan

"""

from pyspark.sql import SparkSession

spark = SparkSession.builder.master("spark://cent0s7Master:7077").appName("Python Spark SQL basic example").config("spark.some.config.option", "some-value")

.getOrCreate()

#ssc=SparkContext("local[2]","sparksqltest")

peopleDF = spark.read.json("examples/src/main/resources/people.json")

salaryDF = spark.read.json("examples/src/main/resources/salary.json")

#peopleDF.printSchema()

# Creates a temporary view using the DataFrame

peopleDF.createOrReplaceTempView("people")

salaryDF.createOrReplaceTempView("salary")

# SQL statements can be run by using the sql methods provided by spark

teenagerNamesDF = spark.sql("SELECT a.name,a.age,b.salary FROM people a,salary b where a.name=b.name and a.age <30 and b.salary>5000")

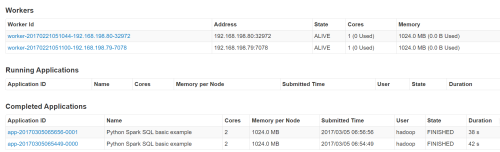

teenagerNamesDF.show()4.運行SparkSQL 應用

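The run took 42 seconds. That feels a bit long to me, and it is probably down to my modest VM performance, since I'm running four VMs on a single Dell laptop. The result is in: Justin, only 19, already earns 10000. Young and accomplished indeed.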
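Why only Justin? A quick way to reason about the query without a cluster is to run the same SQL text in Python's built-in `sqlite3`. This swaps in SQLite for Spark SQL purely as an illustration; both follow standard SQL NULL semantics for this query. The people rows match Spark's bundled people.json (Michael has no age), while the salaries for Michael and Andy are HYPOTHETICAL; `run_query` is a hypothetical helper:

```python
import sqlite3

# Rows from Spark's bundled people.json; Michael has no age, i.e. NULL.
PEOPLE = [("Michael", None), ("Andy", 30), ("Justin", 19)]
# HYPOTHETICAL salaries; only Justin's 10000 matches the post's result.
SALARY = [("Michael", 8000), ("Andy", 6000), ("Justin", 10000)]

QUERY = ("SELECT a.name, a.age, b.salary FROM people a, salary b "
         "WHERE a.name = b.name AND a.age < 30 AND b.salary > 5000")


def run_query(people, salary):
    """Load both tables into an in-memory SQLite database and run QUERY."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE people (name TEXT, age INTEGER)")
        conn.execute("CREATE TABLE salary (name TEXT, salary INTEGER)")
        conn.executemany("INSERT INTO people VALUES (?, ?)", people)
        conn.executemany("INSERT INTO salary VALUES (?, ?)", salary)
        return conn.execute(QUERY).fetchall()
    finally:
        conn.close()


print(run_query(PEOPLE, SALARY))  # [('Justin', 19, 10000)]
```

Michael drops out because `NULL < 30` is not true, and Andy drops out because 30 is not less than 30; the salary filter never gets a say for either of them.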

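PS: I originally planned to develop Spark applications in Java or Scala, but setting up the development environment was a painful ordeal. The worst part was the Scala build toolchain: sbt or Maven has to download a lot of packages, and the ones hosted overseas simply would not download (for reasons everyone understands). So I switched to Python, an interpreted language, which at least spares me from downloading overseas build dependencies.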