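Sometimes you need to collect a large number of URLs that share a particular pattern from a search engine (the script below queries Baidu). Paging through the results by hand is tedious, so I wrote a script that collects the URLs and then fetches the title of each page it finds.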
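Replacing the manual paging comes down to turning a page range into result-page URLs. The sketch below assumes that Baidu's `pn` parameter is a result offset with 10 results per page, which is the step size the script below uses. The function names `page_offsets` and `result_page_urls`, and the minimal `wd`/`pn`/`ie` parameter set, are illustrative choices and not part of the original script.

```python
from urllib.parse import urlencode

BAIDU_SEARCH = "https://www.baidu.com/s"
RESULTS_PER_PAGE = 10  # assumption: Baidu shows 10 results per page


def page_offsets(start_page, stop_page):
    """Return the pn offsets for 1-based pages start_page..stop_page (inclusive)."""
    start_page = max(int(start_page), 1)  # page 0 or below is treated as page 1
    stop_page = int(stop_page)
    return list(range((start_page - 1) * RESULTS_PER_PAGE,
                      stop_page * RESULTS_PER_PAGE,
                      RESULTS_PER_PAGE))


def result_page_urls(query, start_page, stop_page):
    """Build one search URL per results page; urlencode percent-escapes the query."""
    return [BAIDU_SEARCH + "?" + urlencode({"wd": query, "pn": pn, "ie": "utf-8"})
            for pn in page_offsets(start_page, stop_page)]


if __name__ == "__main__":
    for url in result_page_urls("inurl:asp?id=1", 1, 3):
        print(url)
```

Because `urlencode` escapes `:`, `?` and `=`, a query such as `inurl:asp?id=1` travels as a single `wd` value instead of being split into extra URL parameters.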

Environment:

- Python 3
- requests
- bs4 (BeautifulSoup 4)
- lxml

from bs4 import BeautifulSoup

import requests

import sys

def get_url(google_hack,start,stop):

headers = {

'Host':'www.baidu.com',

'User-Agent':'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.87 Safari/537.36',

}

indexUrl = 'https://www.baidu.com'

num = int(start) +int(stop)-1

for stop in range((int(start) -1) *10, num*10, 10):

targetUrl = indexUrl + '/s?wd=' + google_hack + '&pn=' + str(stop) + '&oq=' + google_hack + '&tn=93063693_hao_pg&ie=utf-8&usm=1&rsv_pq=93cdb6350000eadd&rsv_t=150bff5LzGew8qDr0ARHTq%2BNBvCwnE7s0KgrfxwcY5Sqc4xAsDyOFQIo%2FUOfuybbSkFMa5Cz&rsv_jmp=slow'

r = requests.get(targetUrl, headers=headers, timeout=15)

detail = BeautifulSoup(r.content, 'lxml')

for x in detail.find_all('div'):

link = x.get('data-tools')

if link:

try:

url = str(link)[link.find('"url"'):]

url = url[7:-2]#截取url中的内容

r = requests.get(url)

final_url = r.url

title = get_title(r.content.decode(r.apparent_encoding,'replace').encode('utf-8','replace').decode('utf-8'))

print(final_url+' '+title)

except Exception as e:

print(e)

def get_title(data):

title = ''

try:

title = data.split('</title>')[0].split('<title>')[1].strip()

except Exception as e:

print(e)

return title

if __name__ == '__main__':

msg = '''

python3 %s google_hack start_page stop_page

example:

python3 %s "inurl:asp?id=1" 0 20

'''%(sys.argv[0],sys.argv[0])

if len(sys.argv) < 4:

print(msg)

exit(1)

google_hack = sys.argv[1]

start = sys.argv[2]

stop = sys.argv[3]

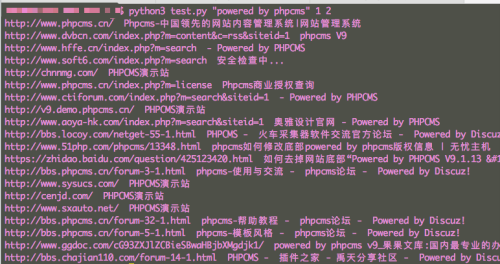

get_url(google_hack,start,stop)使用效果图
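The two parsing steps, pulling result links out of the `data-tools` attributes and reading a page's `<title>`, can be checked offline without sending any requests. The sketch below is a stdlib-only variant that swaps BeautifulSoup/lxml for Python's `html.parser`. It assumes that `data-tools` holds a JSON object with a `url` key, which is what the script's URL extraction relies on. The class and function names (`ResultParser`, `TitleParser`, `extract_urls`, `extract_title`) are hypothetical.

```python
import json
from html.parser import HTMLParser


class ResultParser(HTMLParser):
    """Collect the "url" field from every element's data-tools JSON attribute."""

    def __init__(self):
        super().__init__()
        self.urls = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:  # attribute names arrive lowercased
            if name == "data-tools" and value:
                try:
                    url = json.loads(value).get("url")
                except (ValueError, AttributeError):
                    continue  # not JSON, or JSON that is not an object
                if url:
                    self.urls.append(url)


class TitleParser(HTMLParser):
    """Capture the text of the first <title> element (tag names arrive lowercased)."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.done = False
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag == "title" and not self.done:
            self.in_title = True

    def handle_endtag(self, tag):
        if tag == "title" and self.in_title:
            self.in_title = False
            self.done = True

    def handle_data(self, data):
        if self.in_title:
            self.parts.append(data)


def extract_urls(html):
    parser = ResultParser()
    parser.feed(html)
    parser.close()
    return parser.urls


def extract_title(html):
    parser = TitleParser()
    parser.feed(html)
    parser.close()
    return "".join(parser.parts).strip()


if __name__ == "__main__":
    page = ('<html><head><TITLE> Demo </TITLE></head><body>'
            '<div data-tools=\'{"title":"Demo","url":"http://www.baidu.com/link?url=abc"}\'>r</div>'
            '</body></html>')
    print(extract_urls(page), extract_title(page))
```

Since the links found in `data-tools` are typically Baidu redirect URLs, the script still has to request each one and take the final address from the response.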