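Introduction to the kube-apiserver component

- kube-apiserver exposes the HTTP REST interfaces for creating, deleting, updating, querying, and watching every kind of Kubernetes resource object (Pod, RC, Service, and so on). It is the data bus and data hub of the whole system.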
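To make the REST interface more concrete, here is a minimal sketch of how the URL path for a namespaced core (v1) resource is laid out. The helper name `rest_path` is hypothetical and exists only for this illustration; the path layout `/api/v1/namespaces/<namespace>/<resource>` and the `watch=true` query parameter are the parts that come from the Kubernetes API.

```shell
# rest_path is a hypothetical helper, not part of kubectl or Kubernetes.
# It prints the REST path of a namespaced core (v1) resource collection.
# Usage: rest_path <namespace> <resource> [watch]
rest_path() {
    ns="$1"; resource="$2"; watch="${3:-}"
    path="/api/v1/namespaces/${ns}/${resource}"
    if [ "$watch" = "watch" ]; then
        # A watch is a list request with watch=true added to the query string.
        path="${path}?watch=true"
    fi
    printf '%s\n' "$path"
}

rest_path default pods             # list Pods in the "default" namespace
rest_path default services watch   # stream change events for Services
```

A GET on the first path lists the Pods; POST, PUT, and DELETE on the same family of paths cover create, update, and delete.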

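Functions of kube-apiserver

- Provides the REST API for cluster management (including authentication and authorization, data validation, and cluster state changes)

- Serves as the hub for data exchange and communication between the other modules (they query or modify data through the API Server, and only the API Server operates on etcd directly)

- Is the entry point for resource quota control

- Provides a complete cluster security mechanism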

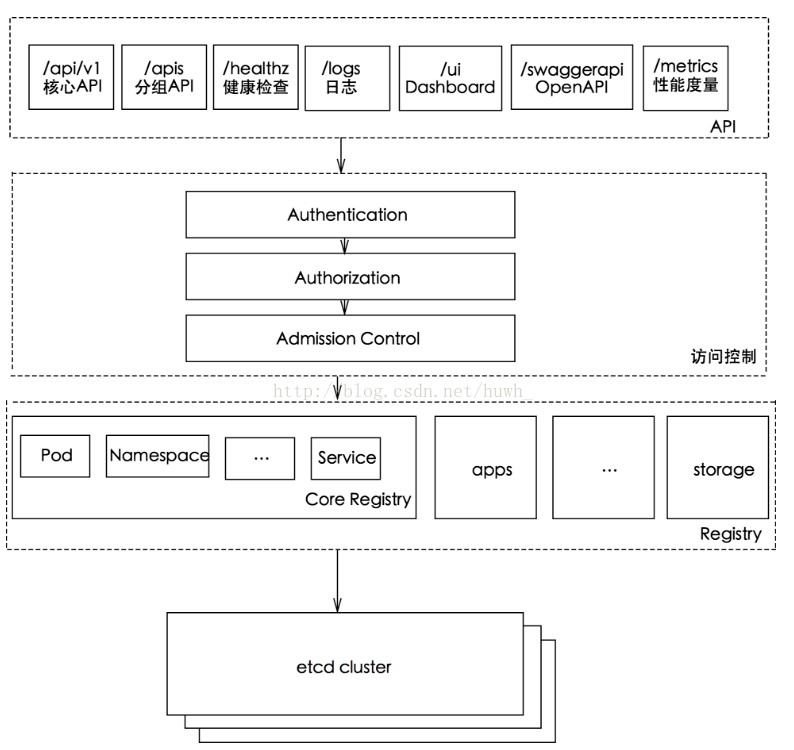

Figure: how kube-apiserver works

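Accessing the Kubernetes API

- Kubernetes serves its API through the kube-apiserver process, which in this setup runs on a single k8s-master node. It has two ports by default:

  - Local port

    - Receives HTTP requests

    - Defaults to 8080; change it with the API Server startup flag “--insecure-port”

    - The default IP address is “localhost”; change it with the startup flag “--insecure-bind-address”

    - Unauthenticated and unauthorized HTTP requests reach the API Server through this port

  - Secure port

    - Defaults to 6443; change it with the startup flag “--secure-port”

    - The default IP address is a non-localhost network interface; set it with the startup flag “--bind-address”

    - Receives HTTPS requests

    - Used for authentication based on a token file, client certificates, or HTTP Basic

    - Used for policy-based authorization

    - HTTPS secure access control is not enabled by default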
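The two ports can be summarized as two base URLs. The sketch below assumes the default values listed above (8080 for the local port, 6443 for the secure port) and uses a hypothetical helper name, `api_base_url`. The local insecure port belongs to older Kubernetes releases such as the one deployed in this article, so treat that branch as specific to this setup.

```shell
# api_base_url is a hypothetical helper for illustration.
# Usage: api_base_url local|secure [host]
api_base_url() {
    mode="$1"; host="${2:-localhost}"
    case "$mode" in
        local)  printf 'http://%s:8080\n' "$host" ;;    # plain HTTP, no authentication
        secure) printf 'https://%s:6443\n' "$host" ;;   # HTTPS, authenticated and authorized
        *)      echo "unknown mode: $mode" >&2; return 1 ;;
    esac
}

api_base_url local
api_base_url secure 192.168.80.12
```

On the master itself, something like `curl "$(api_base_url local)/version"` would query the local port, while requests to the secure port additionally need the CA certificate and client credentials.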

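Introduction to the kube-controller-manager component

- kube-controller-manager is the management and control center inside the cluster. It manages Nodes, Pod replicas, service endpoints (Endpoint), namespaces (Namespace), service accounts (ServiceAccount), and resource quotas (ResourceQuota). When a Node goes down unexpectedly, the Controller Manager detects it promptly and runs an automated repair flow so that the cluster stays in its expected working state.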
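The repair behaviour described above boils down to comparing the expected state with the observed state and correcting the difference. The following is a minimal sketch of that idea for a replica count only. The `reconcile` helper is hypothetical and is not how kube-controller-manager is implemented; the real controllers read and write state through the API Server.

```shell
# reconcile is a hypothetical helper that illustrates one control-loop step.
# Usage: reconcile <desired replicas> <observed replicas>
reconcile() {
    desired="$1"; actual="$2"
    if [ "$actual" -lt "$desired" ]; then
        echo "create $((desired - actual))"    # too few replicas: start more
    elif [ "$actual" -gt "$desired" ]; then
        echo "delete $((actual - desired))"    # too many replicas: remove some
    else
        echo "noop"                            # already in the expected state
    fi
}

reconcile 3 1   # e.g. a Node went down and two replicas were lost
reconcile 3 3   # nothing to do
```

Running such a step repeatedly is what keeps the cluster converging on the expected state after a failure.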

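Introduction to the kube-scheduler component

- kube-scheduler is a pluggable component, and that is what makes it extensible and customizable. It is the scheduling decision-maker for the whole cluster and picks the best placement for each Pod in two phases: predicates (filtering) and priorities (scoring).

- The main responsibility of kube-scheduler (the scheduler) is to find the most suitable Node in the cluster for each newly created Pod and to schedule the Pod onto that Node

  - First, the scheduling algorithm selects, from all Nodes in the cluster, every Node that can run the Pod

  - Then, the scheduling algorithm chooses the best Node from that set as the final result

- The scheduler runs on the master node. Its core function is to watch the apiserver for Pods whose PodSpec.NodeName is empty, create a binding for each one that states which Node it should be scheduled to, and write the scheduling result back to the apiserver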
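The two phases can be sketched with a small filter-then-score function. This is a simplification under stated assumptions: the input format (`name free-CPU-millicores free-memory-MiB`), the helper name `pick_node`, and the single scoring rule (prefer the Node with the most CPU left over) are all made up for illustration, and the real scheduler combines many predicates and priorities.

```shell
# pick_node is a hypothetical helper. It reads lines of
#   <node> <free CPU in millicores> <free memory in MiB>
# on stdin and prints the Node chosen for a Pod that requests
# $1 millicores and $2 MiB. It exits with status 1 if no Node fits.
pick_node() {
    awk -v cpu="$1" -v mem="$2" '
        $2 + 0 >= cpu + 0 && $3 + 0 >= mem + 0 {   # phase 1: filter out Nodes that cannot fit the Pod
            score = $2 - cpu                       # phase 2: score by CPU left after placement
            if (best == "" || score > best_score) {
                best = $1
                best_score = score
            }
        }
        END { if (best != "") print best; else exit 1 }
    '
}

# node01 lacks CPU and node03 lacks memory, so only node02 survives the filter.
printf '%s\n' \
    "node01 500 1024" \
    "node02 1500 2048" \
    "node03 2000 256" | pick_node 1000 512
```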

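Main responsibilities of kube-scheduler

- Cluster high availability: when kube-scheduler is started with the leader-elect flag, its instances elect a leader through a lock object that is written via kube-apiserver and therefore stored in etcd (both kube-scheduler and kube-controller-manager use this one-leader, multiple-standby high-availability scheme)

- Watching resources for scheduling: uses the List-Watch mechanism to watch kube-apiserver for resource changes; the resources here are mainly Pods and Nodes

- Assigning Nodes: uses the predicate (Predicates) and priority (Priorities) policies to assign a Node to each Pod waiting to be scheduled, binds the Pod to it and fills in nodeName, and writes the result to etcd through kube-apiserver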
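The "binding" mentioned above is itself an API object. As a rough sketch, the body that assigns a Pod to a Node looks like the JSON printed below. The helper name `binding_json` and the Pod name `nginx-1` are hypothetical, while the `Binding` kind and its `target` field are part of the core v1 API.

```shell
# binding_json is a hypothetical helper that prints a v1 Binding object.
# Usage: binding_json <pod name> <node name>
binding_json() {
    pod="$1"; node="$2"
    printf '{"apiVersion":"v1","kind":"Binding","metadata":{"name":"%s"},"target":{"apiVersion":"v1","kind":"Node","name":"%s"}}\n' \
        "$pod" "$node"
}

binding_json nginx-1 node01
```

A body like this is POSTed to the Pod's `binding` subresource (`/api/v1/namespaces/<namespace>/pods/<pod>/binding`); once the apiserver accepts it, the Pod's nodeName is set and the kubelet on that Node takes over.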

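Lab deployment

Lab environment

- Master01: 192.168.80.12

- Node01: 192.168.80.13

- Node02: 192.168.80.14

- This lab continues from the previous article, which deployed Flannel, so the environment is unchanged. This part mainly deploys the components that the master node needs.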
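The three addresses above are reused later, when apiserver.sh is given the etcd endpoints as one comma-separated list. A small sketch of building that list, assuming etcd listens on port 2379 over HTTPS on every host as it does in this lab; the helper name `etcd_endpoints` is hypothetical.

```shell
# etcd_endpoints is a hypothetical helper.
# It joins the given IP addresses into "https://IP:2379,https://IP:2379,...".
etcd_endpoints() {
    out=""
    for ip in "$@"; do
        # ${out:+$out,} adds a comma separator only when out is non-empty.
        out="${out:+$out,}https://${ip}:2379"
    done
    printf '%s\n' "$out"
}

etcd_endpoints 192.168.80.12 192.168.80.13 192.168.80.14
```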

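Deploying the kube-apiserver component

- On the master01 server, create the self-signed certificates for the apiserver (in the terminal sessions, text after // is the author's annotation and not part of the command or file)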

      [root@master01 k8s]# cd /mnt/    //enter the directory mounted from the host
      [root@master01 mnt]# ls
      etcd-cert     etcd-v3.3.10-linux-amd64.tar.gz     k8s-cert.sh                           master.zip
      etcd-cert.sh  flannel.sh                          kubeconfig.sh                         node.zip
      etcd.sh       flannel-v0.10.0-linux-amd64.tar.gz  kubernetes-server-linux-amd64.tar.gz
      [root@master01 mnt]# cp master.zip /root/k8s/    //copy the archive to the k8s working directory
      [root@master01 mnt]# cd /root/k8s/    //enter the k8s working directory
      [root@master01 k8s]# ls
      cfssl.sh   etcd-v3.3.10-linux-amd64            kubernetes-server-linux-amd64.tar.gz
      etcd-cert  etcd-v3.3.10-linux-amd64.tar.gz     master.zip
      etcd.sh    flannel-v0.10.0-linux-amd64.tar.gz
      [root@master01 k8s]# unzip master.zip    //unpack the archive
      Archive:  master.zip
        inflating: apiserver.sh
        inflating: controller-manager.sh
        inflating: scheduler.sh
      [root@master01 k8s]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p    //create the working directories on master01; the same directories were created on the node machines earlier
      [root@master01 k8s]# mkdir k8s-cert    //create a directory for the self-signed certificates
      [root@master01 k8s]# cp /mnt/k8s-cert.sh /root/k8s/k8s-cert    //copy the certificate script from the mounted directory into the certificate directory
      [root@master01 k8s]# cd k8s-cert    //enter the directory
      [root@master01 k8s-cert]# vim k8s-cert.sh    //edit the copied script
      ...
      cat > server-csr.json <<EOF
      {
          "CN": "kubernetes",
          "hosts": [
            "10.0.0.1",
            "127.0.0.1",
            "192.168.80.12",    //change to the IP address of master01
            "192.168.80.11",    //add the IP address of master02, in preparation for the multi-master setup later
            "192.168.80.100",   //add the VRRP address, in preparation for the load balancing later
            "192.168.80.13",    //change to the IP address of node01
            "192.168.80.14",    //change to the IP address of node02
            "kubernetes",
            "kubernetes.default",
            "kubernetes.default.svc",
            "kubernetes.default.svc.cluster",
            "kubernetes.default.svc.cluster.local"
          ],
          "key": {
              "algo": "rsa",
              "size": 2048
          },
          "names": [
              {
                  "C": "CN",
                  "L": "BeiJing",
                  "ST": "BeiJing",
                  "O": "k8s",
                  "OU": "System"
              }
          ]
      }
      EOF
      ...
      :wq
      [root@master01 k8s-cert]# bash k8s-cert.sh    //run the script to generate the certificates
      2020/02/10 10:59:17 [INFO] generating a new CA key and certificate from CSR
      2020/02/10 10:59:17 [INFO] generate received request
      2020/02/10 10:59:17 [INFO] received CSR
      2020/02/10 10:59:17 [INFO] generating key: rsa-2048
      2020/02/10 10:59:17 [INFO] encoded CSR
      2020/02/10 10:59:17 [INFO] signed certificate with serial number 10087572098424151492431444614087300651068639826
      2020/02/10 10:59:17 [INFO] generate received request
      2020/02/10 10:59:17 [INFO] received CSR
      2020/02/10 10:59:17 [INFO] generating key: rsa-2048
      2020/02/10 10:59:17 [INFO] encoded CSR
      2020/02/10 10:59:17 [INFO] signed certificate with serial number 125779224158375570229792859734449149781670193528
      2020/02/10 10:59:17 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for websites. For more information see the Baseline Requirements for the Issuance and Management of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org); specifically, section 10.2.3 ("Information Requirements").
      2020/02/10 10:59:17 [INFO] generate received request
      2020/02/10 10:59:17 [INFO] received CSR
      2020/02/10 10:59:17 [INFO] generating key: rsa-2048
      2020/02/10 10:59:17 [INFO] encoded CSR
      2020/02/10 10:59:17 [INFO] signed certificate with serial number 328087687681727386760831073265687413205940136472
      2020/02/10 10:59:17 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for websites. For more information see the Baseline Requirements for the Issuance and Management of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org); specifically, section 10.2.3 ("Information Requirements").
      2020/02/10 10:59:17 [INFO] generate received request
      2020/02/10 10:59:17 [INFO] received CSR
      2020/02/10 10:59:17 [INFO] generating key: rsa-2048
      2020/02/10 10:59:18 [INFO] encoded CSR
      2020/02/10 10:59:18 [INFO] signed certificate with serial number 525069068228188747147886102005817997066385735072
      2020/02/10 10:59:18 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for websites. For more information see the Baseline Requirements for the Issuance and Management of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org); specifically, section 10.2.3 ("Information Requirements").
      [root@master01 k8s-cert]# ls *pem    //check: 8 certificate and key files are generated
      admin-key.pem  admin.pem  ca-key.pem  ca.pem  kube-proxy-key.pem  kube-proxy.pem  server-key.pem  server.pem
      [root@master01 k8s-cert]# cp ca*pem server*pem /opt/kubernetes/ssl/    //copy the CA and server certificates into the ssl directory under the k8s working directory
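Because the server certificate is only valid for the addresses listed in the "hosts" array, it can help to check the CSR file before generating the certificates. The sketch below is one possible check, not part of the original scripts: `missing_hosts` is a hypothetical helper, and the temporary file only stands in for the server-csr.json that k8s-cert.sh writes.

```shell
# missing_hosts is a hypothetical helper.
# It prints every address from the argument list that does NOT appear
# as a quoted entry in the given CSR file.
# Usage: missing_hosts <csr file> <address>...
missing_hosts() {
    file="$1"; shift
    for h in "$@"; do
        # -F matches the quoted string literally, so "192.168.80.1"
        # does not accidentally match "192.168.80.12".
        grep -qF "\"$h\"" "$file" || printf '%s\n' "$h"
    done
}

# Demo against a throwaway file that stands in for server-csr.json.
csr=$(mktemp)
cat > "$csr" <<'EOF'
{ "hosts": [ "10.0.0.1", "127.0.0.1", "192.168.80.12" ] }
EOF
missing_hosts "$csr" 192.168.80.12 192.168.80.100   # reports the address that is absent
rm -f "$csr"
```

An empty result means every address that will be used to reach the apiserver is covered by the certificate request.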

- Configure the apiserver
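This step needs a token file whose lines have the form `token,user,uid,"group"`. In the session the token is generated and pasted into token.csv by hand; the sketch below shows one way to script the same thing. Both helper names are hypothetical, and `od -t x1` is used here instead of the `-t x` form from the session, which yields the same 32 hexadecimal characters for 16 random bytes.

```shell
# gen_token is a hypothetical helper: 16 random bytes as 32 hex characters.
gen_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
    echo
}

# token_csv_line is a hypothetical helper that formats one static-token entry.
# Usage: token_csv_line <token> <user> <uid> <group>
token_csv_line() {
    printf '%s,%s,%s,"%s"\n' "$1" "$2" "$3" "$4"
}

# Same user, UID, and group as in the article; the token differs on every run.
token_csv_line "$(gen_token)" kubelet-bootstrap 10001 system:kubelet-bootstrap
```

Redirecting that last line to /opt/kubernetes/cfg/token.csv would produce a file equivalent to the one edited with vim in the session.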

      [root@master01 k8s-cert]# cd ..    //back to the k8s working directory
      [root@master01 k8s]# tar zxvf kubernetes-server-linux-amd64.tar.gz    //unpack the package
      kubernetes/
      kubernetes/server/
      kubernetes/server/bin/
      ...
      [root@master01 k8s]# cd kubernetes/server/bin/    //enter the directory that holds the unpacked binaries
      [root@master01 bin]# ls
      apiextensions-apiserver              kube-apiserver.docker_tag           kube-proxy
      cloud-controller-manager             kube-apiserver.tar                  kube-proxy.docker_tag
      cloud-controller-manager.docker_tag  kube-controller-manager             kube-proxy.tar
      cloud-controller-manager.tar         kube-controller-manager.docker_tag  kube-scheduler
      hyperkube                            kube-controller-manager.tar         kube-scheduler.docker_tag
      kubeadm                              kubectl                             kube-scheduler.tar
      kube-apiserver                       kubelet                             mounter
      [root@master01 bin]# cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/    //copy the key binaries into the bin directory under the k8s working directory
      [root@master01 bin]# cd /root/k8s/
      [root@master01 k8s]# head -c 16 /dev/urandom | od -An -t x | tr -d ' '    //generate a random token
      c37758077defd4033bfe95a071689272
      [root@master01 k8s]# vim /opt/kubernetes/cfg/token.csv    //create token.csv; think of it as creating an administrative role
      c37758077defd4033bfe95a071689272,kubelet-bootstrap,10001,"system:kubelet-bootstrap"    //user, UID, and group for the token; the first field is the token generated above
      :wq
      [root@master01 k8s]# bash apiserver.sh 192.168.80.12 https://192.168.80.12:2379,https://192.168.80.13:2379,https://192.168.80.14:2379    //binaries, token, and certificates are ready: run the apiserver script, which also generates the configuration file
      Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.
      [root@master01 k8s]# ps aux | grep kube    //check that the process started
      root      17088  8.7 16.7 402260 312192 ?       Ssl  11:17   0:08 /opt/kubernetes/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.80.12:2379,https://192.168.80.13:2379,https://192.168.80.14:2379 --bind-address=192.168.80.12 --secure-port=6443 --advertise-address=192.168.80.12 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --kubelet-https=true --enable-bootstrap-token-auth --token-auth-file=/opt/kubernetes/cfg/token.csv --service-node-port-range=30000-50000 --tls-cert-file=/opt/kubernetes/ssl/server.pem --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem --client-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem
      root      17101  0.0  0.0 112676    980 pts/0   S+   11:19   0:00 grep --color=auto kube
      [root@master01 k8s]# cat /opt/kubernetes/cfg/kube-apiserver    //view the generated configuration file
      KUBE_APISERVER_OPTS="--logtostderr=true \
      --v=4 \
      --etcd-servers=https://192.168.80.12:2379,https://192.168.80.13:2379,https://192.168.80.14:2379 \
      --bind-address=192.168.80.12 \
      --secure-port=6443 \
      --advertise-address=192.168.80.12 \
      --allow-privileged=true \
      --service-cluster-ip-range=10.0.0.0/24 \
      --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \
      --authorization-mode=RBAC,Node \
      --kubelet-https=true \
      --enable-bootstrap-token-auth \
      --token-auth-file=/opt/kubernetes/cfg/token.csv \
      --service-node-port-range=30000-50000 \
      --tls-cert-file=/opt/kubernetes/ssl/server.pem \
      --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \
      --client-ca-file=/opt/kubernetes/ssl/ca.pem \
      --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \
      --etcd-cafile=/opt/etcd/ssl/ca.pem \
      --etcd-certfile=/opt/etcd/ssl/server.pem \
      --etcd-keyfile=/opt/etcd/ssl/server-key.pem"
      [root@master01 k8s]# netstat -ntap | grep 6443    //check that the secure port is listening
      tcp        0      0 192.168.80.12:6443      0.0.0.0:*               LISTEN      17088/kube-apiserve
      tcp        0      0 192.168.80.12:48320     192.168.80.12:6443      ESTABLISHED 17088/kube-apiserve
      tcp        0      0 192.168.80.12:6443      192.168.80.12:48320     ESTABLISHED 17088/kube-apiserve
      [root@master01 k8s]# netstat -ntap | grep 8080    //check that the local port is listening
      tcp        0      0 127.0.0.1:8080          0.0.0.0:*               LISTEN      17088/kube-apiserve

- Configure the scheduler service

      [root@master01 k8s]# ./scheduler.sh 127.0.0.1    //run the script directly; it starts the service and generates the configuration file
      Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.
      [root@master01 k8s]# systemctl status kube-scheduler.service    //check the service status
      ● kube-scheduler.service - Kubernetes Scheduler
         Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: disabled)
         Active: active (running) since 一 2020-02-10 11:22:13 CST; 2min 46s ago    //running successfully (一 is Monday in this system's locale)
           Docs: https://github.com/kubernetes/kubernetes
      ...

- Configure the controller-manager service

      [root@master01 k8s]# chmod +x controller-manager.sh    //make the script executable
      [root@master01 k8s]# ./controller-manager.sh 127.0.0.1    //run the script; it starts the service and generates the configuration file
      Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.
      [root@master01 k8s]# systemctl status kube-controller-manager.service    //check the service status
      ● kube-controller-manager.service - Kubernetes Controller Manager
         Loaded: loaded (/usr/lib/systemd/system/kube-controller-manager.service; enabled; vendor preset: disabled)
         Active: active (running) since 一 2020-02-10 11:28:21 CST; 7min ago    //running successfully
      ...
      [root@master01 k8s]# /opt/kubernetes/bin/kubectl get cs    //check the status of the master components and etcd
      NAME                 STATUS    MESSAGE              ERROR
      scheduler            Healthy   ok
      controller-manager   Healthy   ok
      etcd-2               Healthy   {"health":"true"}
      etcd-0               Healthy   {"health":"true"}
      etcd-1               Healthy   {"health":"true"}

The master node components are now deployed.
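The final health check can also be scripted. The sketch below reads the table printed by `kubectl get cs` and lists any component whose STATUS column is not "Healthy". The helper name `unhealthy_components` is hypothetical, and the sample input simply repeats the healthy output shown in the session.

```shell
# unhealthy_components is a hypothetical helper.
# It reads `kubectl get cs` output on stdin, skips the header row, and
# prints the name of every component whose STATUS is not "Healthy".
unhealthy_components() {
    awk 'NR > 1 && $2 != "Healthy" { print $1 }'
}

# With the output from the session, nothing is printed: all components are healthy.
printf '%s\n' \
    'NAME                 STATUS    MESSAGE              ERROR' \
    'scheduler            Healthy   ok' \
    'controller-manager   Healthy   ok' \
    'etcd-2               Healthy   {"health":"true"}' \
    'etcd-0               Healthy   {"health":"true"}' \
    'etcd-1               Healthy   {"health":"true"}' | unhealthy_components
```

On the master this would be used as `/opt/kubernetes/bin/kubectl get cs | unhealthy_components`, where an empty result means the deployment is in the state shown above.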