4.1 Core classes of the analyzer

1. Analyzer

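Lucene ships with several built-in analyzers, including SimpleAnalyzer, StopAnalyzer, WhitespaceAnalyzer, StandardAnalyzer, and KeywordAnalyzer.

What each one does:

KeywordAnalyzer emits the whole input unchanged as a single token;

SimpleAnalyzer splits at non-letter characters and lowercases the result, which works poorly for Chinese because a run of Chinese characters is not segmented into words;

StandardAnalyzer splits Chinese text into single characters;

StopAnalyzer behaves much like SimpleAnalyzer, but also removes stop words;

WhitespaceAnalyzer splits on whitespace only.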
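To make these differences concrete, here is a minimal plain-Java sketch that imitates the splitting rules described above. It does not use Lucene: the class AnalyzerBehaviorSketch and its method names are hypothetical stand-ins, and the real analyzers handle many more cases. The sample text in the usage note contains the two characters 中國, written as Unicode escapes.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical stand-ins that imitate the splitting rules; these are not Lucene classes.
class AnalyzerBehaviorSketch {

    // KeywordAnalyzer-like: the whole input is a single token.
    static List<String> keyword(String text) {
        return Collections.singletonList(text);
    }

    // WhitespaceAnalyzer-like: split on whitespace only, keeping case and punctuation.
    static List<String> whitespace(String text) {
        List<String> out = new ArrayList<>();
        for (String part : text.trim().split("\\s+")) {
            if (!part.isEmpty()) {
                out.add(part);
            }
        }
        return out;
    }

    // SimpleAnalyzer-like: maximal runs of letters, lowercased.
    static List<String> simple(String text) {
        List<String> out = new ArrayList<>();
        StringBuilder run = new StringBuilder();
        for (char c : text.toCharArray()) {
            if (Character.isLetter(c)) {
                run.append(Character.toLowerCase(c));
            } else if (run.length() > 0) {
                out.add(run.toString());
                run.setLength(0);
            }
        }
        if (run.length() > 0) {
            out.add(run.toString());
        }
        return out;
    }

    // StandardAnalyzer-like, for this comparison only: every Han character
    // becomes its own token, other letter runs are lowercased.
    static List<String> singleHanCharacters(String text) {
        List<String> out = new ArrayList<>();
        StringBuilder run = new StringBuilder();
        for (char c : text.toCharArray()) {
            boolean han = Character.UnicodeScript.of(c) == Character.UnicodeScript.HAN;
            if (Character.isLetter(c) && !han) {
                run.append(Character.toLowerCase(c));
                continue;
            }
            if (run.length() > 0) {
                out.add(run.toString());
                run.setLength(0);
            }
            if (han) {
                out.add(String.valueOf(c));
            }
        }
        if (run.length() > 0) {
            out.add(run.toString());
        }
        return out;
    }
}
```

For the input "Hello \u4e2d\u570b, Lucene", simple yields three tokens (hello, the two Chinese characters as one token, lucene), while singleHanCharacters yields four because each Chinese character is emitted separately.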

2. TokenStream

分詞器做好處理之後得到的一個流,這個流中存儲了分詞的各種信息,可以通過TokenStream有效的獲取到分詞單元信息

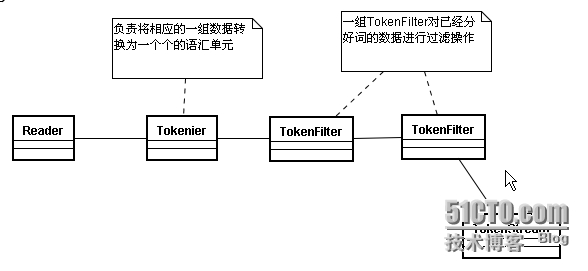

生成的流程

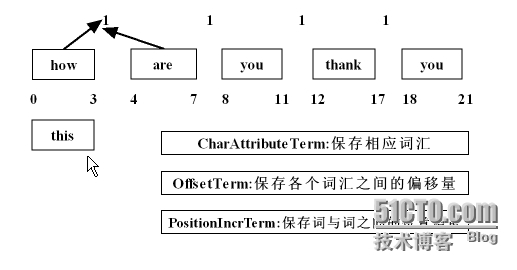

在這個流中所需要存儲的數據

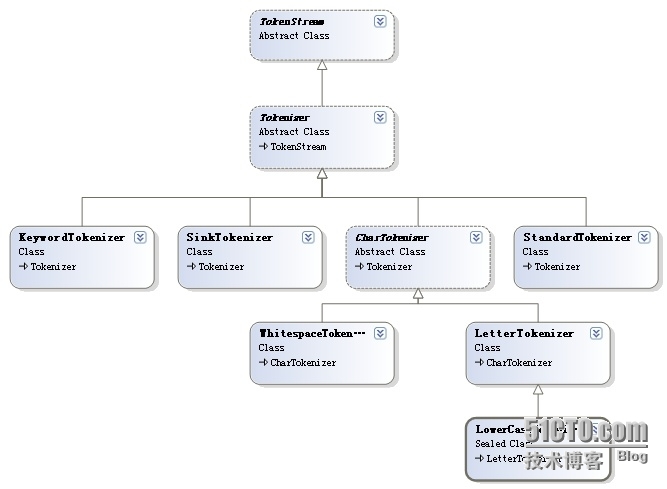
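As a rough illustration of the per-token data such a stream carries (term text, character offsets, position increment, and type, the same four values printed in section 4.2), consider the plain-Java sketch below. The class TokenInfoSketch, its fields, the whitespace splitting rule, and the fixed type "word" are assumptions made for illustration; Lucene exposes these values through attribute objects, not through a single record like this.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical record of what a stream stores for one token; not a Lucene class.
class TokenInfoSketch {
    final String term;
    final int startOffset;       // index of the token's first character in the input
    final int endOffset;         // index one past the token's last character
    final int positionIncrement; // distance from the previous token, in positions
    final String type;

    TokenInfoSketch(String term, int startOffset, int endOffset,
                    int positionIncrement, String type) {
        this.term = term;
        this.startOffset = startOffset;
        this.endOffset = endOffset;
        this.positionIncrement = positionIncrement;
        this.type = type;
    }

    @Override
    public String toString() {
        return positionIncrement + ":" + term
                + "[" + startOffset + "-" + endOffset + "]-->" + type;
    }

    // Splits on whitespace and records offsets; every token advances one position.
    static List<TokenInfoSketch> tokenize(String text) {
        List<TokenInfoSketch> tokens = new ArrayList<>();
        int i = 0;
        while (i < text.length()) {
            if (Character.isWhitespace(text.charAt(i))) {
                i++;
                continue;
            }
            int start = i;
            while (i < text.length() && !Character.isWhitespace(text.charAt(i))) {
                i++;
            }
            tokens.add(new TokenInfoSketch(text.substring(start, i), start, i, 1, "word"));
        }
        return tokens;
    }
}
```

Calling TokenInfoSketch.tokenize("how are you") gives three tokens; the second prints as 1:are[4-7]-->word.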

3. Tokenizer

Receives a character stream (a Reader) and splits it into tokens. Its implementations include:

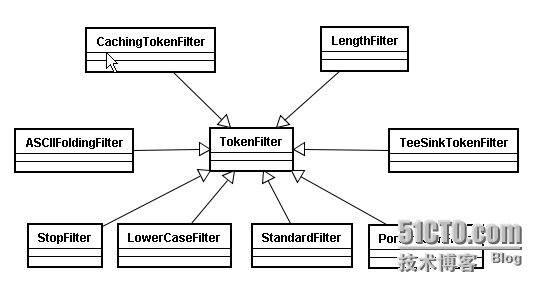

4. TokenFilter

Applies filtering and transformation steps to the tokens produced by the tokenizer.

5. Extension: an overview of TokenFilter classes

(1) TokenFilter

A TokenStream whose input is another TokenStream. Subclasses must override incrementToken().

(2) LowerCaseFilter

Converts the text of each token to lowercase.

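(3) FilteringTokenFilter

An abstract TokenFilter base class for filters that may drop tokens. Subclasses implement accept(), which returns true when the current token should be kept. incrementToken() calls accept() to decide whether the current token is returned to the caller.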
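The accept()/incrementToken() contract can be sketched in plain Java as follows. This is a simplified imitation and not Lucene's FilteringTokenFilter: the classes FilteringStreamSketch and MinLengthFilterSketch are hypothetical, and tokens are plain strings read from an Iterator.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

// Hypothetical base class imitating the accept()/incrementToken() contract.
abstract class FilteringStreamSketch {
    private final Iterator<String> input;
    // The current token; valid after incrementToken() has returned true.
    protected String term;

    FilteringStreamSketch(Iterator<String> input) {
        this.input = input;
    }

    // Return true to keep the current token, false to drop it.
    protected abstract boolean accept();

    // Advances to the next accepted token; false means the input is exhausted.
    final boolean incrementToken() {
        while (input.hasNext()) {
            term = input.next();
            if (accept()) {
                return true;
            }
        }
        return false;
    }

    // Drains the remaining accepted tokens into a list.
    final List<String> collect() {
        List<String> out = new ArrayList<>();
        while (incrementToken()) {
            out.add(term);
        }
        return out;
    }
}

// Example subclass: keeps only tokens with at least minLength characters.
class MinLengthFilterSketch extends FilteringStreamSketch {
    private final int minLength;

    MinLengthFilterSketch(Iterator<String> input, int minLength) {
        super(input);
        this.minLength = minLength;
    }

    @Override
    protected boolean accept() {
        return term.length() >= minLength;
    }
}
```

The subclass only states the keep-or-drop rule; the loop that skips rejected tokens lives once in the base class, which is the point of the design.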

(4) StopFilter

Removes stop words from the token stream.

    protected boolean accept() {
        // Keep only tokens that are not stop words.
        return !stopWords.contains(termAtt.buffer(), 0, termAtt.length());
    }

(5) TypeTokenFilter

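Removes tokens of the specified types from the token stream.

    protected boolean accept() {
        // In whitelist mode only the listed types are kept; otherwise the listed types are removed.
        return useWhiteList == stopTypes.contains(typeAttribute.type());
    }

(6) LetterTokenizer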

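A tokenizer (not a filter) that divides text at non-letter characters. In other words, it defines a token as a maximal run of adjacent letters.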

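(7) TokenFilter ordering

The order of the filters matters. If StopFilter runs before LowerCaseFilter, the stop word "the" is not removed from input that contains "The", because "The" does not match the lowercase entry in the stop set. Convert everything to lowercase first and filter stop words afterwards, so that "The" becomes "the" and matches. Without case normalization, the stop set would need every capitalization variant of every word.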
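The effect of the ordering can be reproduced with a small plain-Java sketch. It assumes a case-sensitive stop set that holds only lowercase entries; the class FilterOrderSketch and its helpers are hypothetical and operate on lists of strings, not on Lucene token streams.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Locale;
import java.util.Set;

// Plain-Java illustration of filter ordering; not Lucene classes.
class FilterOrderSketch {
    // Assumption: a case-sensitive stop set with lowercase entries only.
    static final Set<String> STOP_WORDS = new HashSet<>(Arrays.asList("the", "a", "of"));

    static List<String> lowerCase(List<String> tokens) {
        List<String> out = new ArrayList<>();
        for (String t : tokens) {
            out.add(t.toLowerCase(Locale.ROOT));
        }
        return out;
    }

    static List<String> removeStopWords(List<String> tokens) {
        List<String> out = new ArrayList<>();
        for (String t : tokens) {
            if (!STOP_WORDS.contains(t)) {
                out.add(t);
            }
        }
        return out;
    }

    // Wrong order: "The" slips past the stop filter and is lowercased afterwards.
    static List<String> stopThenLower(List<String> tokens) {
        return lowerCase(removeStopWords(tokens));
    }

    // Right order: "The" becomes "the" first and is then removed.
    static List<String> lowerThenStop(List<String> tokens) {
        return removeStopWords(lowerCase(tokens));
    }
}
```

For the tokens The, quick, fox, stopThenLower returns the, quick, fox, whereas lowerThenStop returns quick, fox.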

In Lucene, an analyzer that applies the filters in the correct order looks like this:

    import java.io.Reader;
    import java.util.Set;

    import org.apache.lucene.analysis.Analyzer;
    import org.apache.lucene.analysis.LetterTokenizer;
    import org.apache.lucene.analysis.LowerCaseFilter;
    import org.apache.lucene.analysis.StopAnalyzer;
    import org.apache.lucene.analysis.StopFilter;
    import org.apache.lucene.analysis.TokenStream;
    import org.apache.lucene.util.Version;

    public class MyStopAnalyzer extends Analyzer {

        private Set<Object> words;

        // No custom words: use only the default English stop words.
        // (An empty constructor would leave words null and break tokenStream().)
        public MyStopAnalyzer() {
            this(new String[0]);
        }

        public MyStopAnalyzer(String[] words) {
            // The third argument (true) makes the set ignore case.
            this.words = StopFilter.makeStopSet(Version.LUCENE_35, words, true);
            this.words.addAll(StopAnalyzer.ENGLISH_STOP_WORDS_SET);
        }

        @Override
        public TokenStream tokenStream(String fieldName, Reader reader) {
            // Tokenize on letters, lowercase, then remove stop words.
            return new StopFilter(Version.LUCENE_35,
                    new LowerCaseFilter(Version.LUCENE_35,
                            new LetterTokenizer(Version.LUCENE_35, reader)),
                    this.words);
        }
    }

4.2 Attribute

A helper that prints the attributes of every token an analyzer produces:

    public static void displayAllTokenInfo(String str, Analyzer a) {
        try {
            TokenStream stream = a.tokenStream("content", new StringReader(str));
            // Position increment: the distance between this token and the previous one.
            PositionIncrementAttribute pia =
                    stream.addAttribute(PositionIncrementAttribute.class);
            // Start and end character offsets of each token.
            OffsetAttribute oa =
                    stream.addAttribute(OffsetAttribute.class);
            // The text of each token.
            CharTermAttribute cta =
                    stream.addAttribute(CharTermAttribute.class);
            // The type assigned to the token by the tokenizer.
            TypeAttribute ta =
                    stream.addAttribute(TypeAttribute.class);
            while (stream.incrementToken()) {
                System.out.print(pia.getPositionIncrement() + ":");
                System.out.print(cta + "[" + oa.startOffset() + "-" + oa.endOffset() + "]-->" + ta.type() + "\n");
            }
        } catch (Exception e) {
            e.printStackTrace();
        }
    }

4.3 Custom analyzers

1. Custom stop analyzer

    package com.mzsx.analyzer;

    import java.io.Reader;
    import java.util.Set;

    import org.apache.lucene.analysis.Analyzer;
    import org.apache.lucene.analysis.LetterTokenizer;
    import org.apache.lucene.analysis.LowerCaseFilter;
    import org.apache.lucene.analysis.StopAnalyzer;
    import org.apache.lucene.analysis.StopFilter;
    import org.apache.lucene.analysis.TokenStream;
    import org.apache.lucene.util.Version;

    public class MyStopAnalyzer extends Analyzer {

        private Set<Object> words;

        // No custom words: use only the default English stop words.
        // (An empty constructor would leave words null and break tokenStream().)
        public MyStopAnalyzer() {
            this(new String[0]);
        }

        public MyStopAnalyzer(String[] words) {
            // Custom stop words (case-insensitive) plus the default English set.
            this.words = StopFilter.makeStopSet(Version.LUCENE_35, words, true);
            this.words.addAll(StopAnalyzer.ENGLISH_STOP_WORDS_SET);
        }

        @Override
        public TokenStream tokenStream(String fieldName, Reader reader) {
            // Tokenize on letters, lowercase, then remove stop words.
            return new StopFilter(Version.LUCENE_35,
                    new LowerCaseFilter(Version.LUCENE_35,
                            new LetterTokenizer(Version.LUCENE_35, reader)),
                    this.words);
        }
    }

    // Test code

    @Test
    public void myStopAnalyzer() {
        Analyzer a1 = new MyStopAnalyzer(new String[]{"I", "you", "hate"});
        Analyzer a2 = new MyStopAnalyzer();
        String txt = "how are you thank you I hate you";
        AnalyzerUtils.displayAllTokenInfo(txt, a1);
        //AnalyzerUtils.displayToken(txt, a2);
    }
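Removing stop words also interacts with the position increment described in section 4.2. The sketch below illustrates the idea in plain Java: each dropped token adds one to the increment of the next token that is kept, so the gap stays visible. Whether Lucene's StopFilter records gaps this way depends on its position-increment setting, so treat the numbers as an illustration of the concept and not as the expected output of the test above. The class StopGapSketch and the hard-coded stop set (i, you, hate, are) are assumptions made for the example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Set;

// Hypothetical illustration of position-increment gaps; not a Lucene class.
class StopGapSketch {

    // Returns "increment:term" strings. Every removed stop word adds 1 to the
    // increment of the next token that survives.
    static List<String> filter(List<String> tokens, Set<String> stopWords) {
        List<String> out = new ArrayList<>();
        int skipped = 0;
        for (String token : tokens) {
            if (stopWords.contains(token)) {
                skipped++;
                continue;
            }
            out.add((1 + skipped) + ":" + token);
            skipped = 0;
        }
        return out;
    }
}
```

With the lowercased tokens of "how are you thank you I hate you" and the stop set above, the result is 1:how followed by 3:thank; the increment of 3 records that two tokens were removed between them.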

2. A simple synonym index

    // SamewordContext.java
    package com.mzsx.analyzer;

    // Looks up the synonyms of a term; returns null when there are none.
    public interface SamewordContext {
        public String[] getSamewords(String name);
    }

    // SimpleSamewordContext.java
    package com.mzsx.analyzer;

    import java.util.HashMap;
    import java.util.Map;

    // A hard-coded, in-memory synonym table.
    public class SimpleSamewordContext implements SamewordContext {

        Map<String, String[]> maps = new HashMap<String, String[]>();

        public SimpleSamewordContext() {
            maps.put("中國", new String[]{"天朝", "大陸"});
            maps.put("我", new String[]{"咱", "俺"});
            maps.put("china", new String[]{"chinese"});
        }

        @Override
        public String[] getSamewords(String name) {
            return maps.get(name);
        }
    }

    // MySameTokenFilter.java
    package com.mzsx.analyzer;

    import java.io.IOException;
    import java.util.Stack;

    import org.apache.lucene.analysis.TokenFilter;
    import org.apache.lucene.analysis.TokenStream;
    import org.apache.lucene.analysis.tokenattributes.CharTermAttribute;
    import org.apache.lucene.analysis.tokenattributes.PositionIncrementAttribute;
    import org.apache.lucene.util.AttributeSource;

    public class MySameTokenFilter extends TokenFilter {

        private CharTermAttribute cta = null;
        private PositionIncrementAttribute pia = null;
        // Saved state of the token whose synonyms are still waiting to be emitted.
        private AttributeSource.State current;
        // Synonyms of the most recent token that have not been emitted yet.
        private Stack<String> sames = null;
        private SamewordContext samewordContext;

        protected MySameTokenFilter(TokenStream input, SamewordContext samewordContext) {
            super(input);
            cta = this.addAttribute(CharTermAttribute.class);
            pia = this.addAttribute(PositionIncrementAttribute.class);
            sames = new Stack<String>();
            this.samewordContext = samewordContext;
        }

        @Override
        public boolean incrementToken() throws IOException {
            if (sames.size() > 0) {
                // Pop the next pending synonym off the stack.
                String str = sames.pop();
                // Restore the state of the original token.
                restoreState(current);
                cta.setEmpty();
                cta.append(str);
                // Increment 0 places the synonym at the same position as the original token.
                pia.setPositionIncrement(0);
                return true;
            }
            if (!this.input.incrementToken()) return false;
            if (addSames(cta.toString())) {
                // The token has synonyms: save its state so they can be emitted next.
                current = captureState();
            }
            return true;
        }

        // Pushes the synonyms of name onto the stack; returns whether any were found.
        private boolean addSames(String name) {
            String[] sws = samewordContext.getSamewords(name);
            if (sws != null) {
                for (String str : sws) {
                    sames.push(str);
                }
                return true;
            }
            return false;
        }
    }

    // MySameAnalyzer.java
    package com.mzsx.analyzer;

    import java.io.Reader;

    import org.apache.lucene.analysis.Analyzer;
    import org.apache.lucene.analysis.TokenStream;

    import com.chenlb.mmseg4j.Dictionary;
    import com.chenlb.mmseg4j.MaxWordSeg;
    import com.chenlb.mmseg4j.analysis.MMSegTokenizer;

    public class MySameAnalyzer extends Analyzer {

        private SamewordContext samewordContext;

        public MySameAnalyzer(SamewordContext swc) {
            samewordContext = swc;
        }

        @Override
        public TokenStream tokenStream(String fieldName, Reader reader) {
            // Segment Chinese text with mmseg4j, then expand synonyms.
            Dictionary dic = Dictionary.getInstance("D:/luceneIndex/dic");
            return new MySameTokenFilter(
                    new MMSegTokenizer(new MaxWordSeg(dic), reader), samewordContext);
        }
    }

    // Test code

    @Test
    public void testSameAnalyzer() {
        try {
            Analyzer a2 = new MySameAnalyzer(new SimpleSamewordContext());
            String txt = "我來自中國海南儋州第一中學,welcome to china !";
            // Index one document in memory using the synonym analyzer.
            Directory dir = new RAMDirectory();
            IndexWriter writer = new IndexWriter(dir, new IndexWriterConfig(Version.LUCENE_35, a2));
            Document doc = new Document();
            doc.add(new Field("content", txt, Field.Store.YES, Field.Index.ANALYZED));
            writer.addDocument(doc);
            writer.close();
            // Search for a synonym ("咱") that does not occur in the original text.
            IndexSearcher searcher = new IndexSearcher(IndexReader.open(dir));
            TopDocs tds = searcher.search(new TermQuery(new Term("content", "咱")), 10);
            Document d = searcher.doc(tds.scoreDocs[0].doc);
            System.out.println("Original text: " + d.get("content"));
            AnalyzerUtils.displayAllTokenInfo(txt, a2);
        } catch (CorruptIndexException e) {
            e.printStackTrace();
        } catch (LockObtainFailedException e) {
            e.printStackTrace();
        } catch (IOException e) {
            e.printStackTrace();
        }
    }
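The stack-based expansion in MySameTokenFilter can be followed more easily in a stripped-down form. The sketch below is plain Java and does not use Lucene: SynonymExpansionSketch is a hypothetical class, the "china" entry is taken from SimpleSamewordContext, and the "quick" entry is invented to show what happens with two synonyms. Each original token is emitted with increment 1, and each of its synonyms with increment 0, meaning it shares the original token's position.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical, Lucene-free imitation of the synonym filter's logic.
class SynonymExpansionSketch {
    private final Map<String, String[]> synonyms = new HashMap<>();

    SynonymExpansionSketch() {
        synonyms.put("china", new String[]{"chinese"});       // from SimpleSamewordContext
        synonyms.put("quick", new String[]{"fast", "rapid"}); // invented for this example
    }

    // Returns "increment:term" strings for the expanded token sequence.
    List<String> expand(List<String> tokens) {
        List<String> out = new ArrayList<>();
        Deque<String> pending = new ArrayDeque<>();
        for (String token : tokens) {
            // The original token advances the position by one.
            out.add("1:" + token);
            String[] same = synonyms.get(token);
            if (same != null) {
                for (String s : same) {
                    pending.push(s);
                }
            }
            // Synonyms are popped in last-in, first-out order and stay at the
            // same position as the original token (increment 0).
            while (!pending.isEmpty()) {
                out.add("0:" + pending.pop());
            }
        }
        return out;
    }
}
```

Expanding welcome, to, china gives 1:welcome, 1:to, 1:china, 0:chinese. Because the synonym is indexed at the same position as the original term, a term query for the synonym can match a document that contains only the original word, which is what the test above relies on.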