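A DStream is a template for RDDs: once every batchInterval, a corresponding RDD is generated from the DStream template. The RDD is then stored in the DStream's generatedRDDs data structure, keyed by batch time: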

    // RDDs generated, marked as private[streaming] so that testsuites can access it
    @transient
    private[streaming] var generatedRDDs = new HashMap[Time, RDD[T]]()
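To make the role of this map concrete, here is a minimal sketch in plain Scala, not Spark code. It assumes a `Seq[String]` can stand in for an `RDD[T]` and a `Long` millisecond timestamp for `Time`; `GeneratedCacheSketch`, `compute`, and `computeCalls` are hypothetical names introduced only for illustration.

```scala
import scala.collection.mutable

// Toy stand-in for DStream.generatedRDDs: batch time (ms) -> data for that batch.
// Seq[String] plays the role of RDD[T]; nothing here is Spark API.
object GeneratedCacheSketch {
  val generated = mutable.HashMap[Long, Seq[String]]()
  var computeCalls = 0

  // Hypothetical "template": describes how to build the data for any batch time.
  private def compute(timeMs: Long): Seq[String] = {
    computeCalls += 1
    Seq(s"record-for-batch-$timeMs")
  }

  // Return the entry for this batch, generating and storing it on first access.
  def getOrCompute(timeMs: Long): Seq[String] =
    generated.getOrElseUpdate(timeMs, compute(timeMs))
}
```

Calling `getOrCompute` for batch times 1000, 2000, and 3000 leaves three entries in the map, and asking again for 2000 reuses the stored entry instead of calling `compute` a fourth time.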

Below we use a concrete example to walk through the full lifecycle of RDD generation in a DStream.

    val lines = ssc.socketTextStream("localhost", 9999)
    val words = lines.flatMap(_.split(" "))
    val pairs = words.map(word => (word, 1))
    val wordCounts = pairs.reduceByKey(_ + _)
    wordCounts.print()

Conceptually, this code can be "translated" into the following (constructor arguments are simplified):

    val lines = new SocketInputDStream("localhost", 9999)    // type: SocketInputDStream
    val words = new FlatMappedDStream(lines, _.split(" "))   // type: FlatMappedDStream
    val pairs = new MappedDStream(words, word => (word, 1))  // type: MappedDStream
    val wordCounts = new ShuffledDStream(pairs, _ + _)       // type: ShuffledDStream
    new ForEachDStream(wordCounts, cnt => cnt.print())       // type: ForEachDStream

Let us first look at the print method of DStream:

    def print(num: Int): Unit = ssc.withScope {
      def foreachFunc: (RDD[T], Time) => Unit = {
        (rdd: RDD[T], time: Time) => {
          // Fetch one extra record so we can tell whether more than num exist
          val firstNum = rdd.take(num + 1)
          // scalastyle:off println
          println("-------------------------------------------")
          println("Time: " + time)
          println("-------------------------------------------")
          firstNum.take(num).foreach(println)
          if (firstNum.length > num) println("...")
          println()
          // scalastyle:on println
        }
      }
      foreachRDD(context.sparkContext.clean(foreachFunc), displayInnerRDDOps = false)
    }

The method first defines a function that takes the first num records from the RDD and prints them together with the batch time. It then passes this function to foreachRDD.
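The reason print takes `num + 1` records rather than `num` is easier to see in isolation. The following is a small sketch of the same truncation logic on a plain `Seq` instead of an RDD; `PrintSketch` and `firstLines` are hypothetical names, and the function returns the lines rather than printing them so the behaviour can be checked.

```scala
object PrintSketch {
  // Mirrors the truncation logic of print(num) on a plain Seq instead of an RDD.
  // Fetching num + 1 records is enough to know whether more than num exist.
  def firstLines[T](records: Seq[T], num: Int): Seq[String] = {
    val firstNum = records.take(num + 1)
    val shown = firstNum.take(num).map(_.toString)
    // Append the "..." marker only when there was at least one extra record.
    if (firstNum.length > num) shown :+ "..." else shown
  }
}
```

With three records and `num = 2`, the result is the first two records followed by `"..."`; with exactly two records, no marker is added.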

The private foreachRDD overload that it calls looks like this:

    private def foreachRDD(
        foreachFunc: (RDD[T], Time) => Unit,
        displayInnerRDDOps: Boolean): Unit = {
      new ForEachDStream(this,
        context.sparkContext.clean(foreachFunc, false), displayInnerRDDOps).register()
    }

foreachRDD creates a new ForEachDStream object and registers it with the DStreamGraph. This ForEachDStream object becomes one of the outputStreams in the DStreamGraph.

    private[streaming] def register(): DStream[T] = {
      ssc.graph.addOutputStream(this)
      this
    }

    def addOutputStream(outputStream: DStream[_]) {
      this.synchronized {
        outputStream.setGraph(this)
        outputStreams += outputStream
      }
    }

Each time a batchInterval elapses, the generateJobs method of DStreamGraph is called:

    def generateJobs(time: Time): Seq[Job] = {
      logDebug("Generating jobs for time " + time)
      val jobs = this.synchronized {
        // Each output stream yields at most one job for this batch
        outputStreams.flatMap { outputStream =>
          val jobOption = outputStream.generateJob(time)
          jobOption.foreach(_.setCallSite(outputStream.creationSite))
          jobOption
        }
      }
      logDebug("Generated " + jobs.length + " jobs for time " + time)
      jobs
    }

This in turn calls generateJob(time) on each outputStream, which in our example is the ForEachDStream:

override def generateJob(time: Time): Option[Job] = {

parent.getOrCompute(time) match {

case Some(rdd) =>

val jobFunc = () => createRDDWithLocalProperties(time, displayInnerRDDOps) {

foreachFunc(rdd, time)

}

Some(new Job(time, jobFunc))

case None => None

}

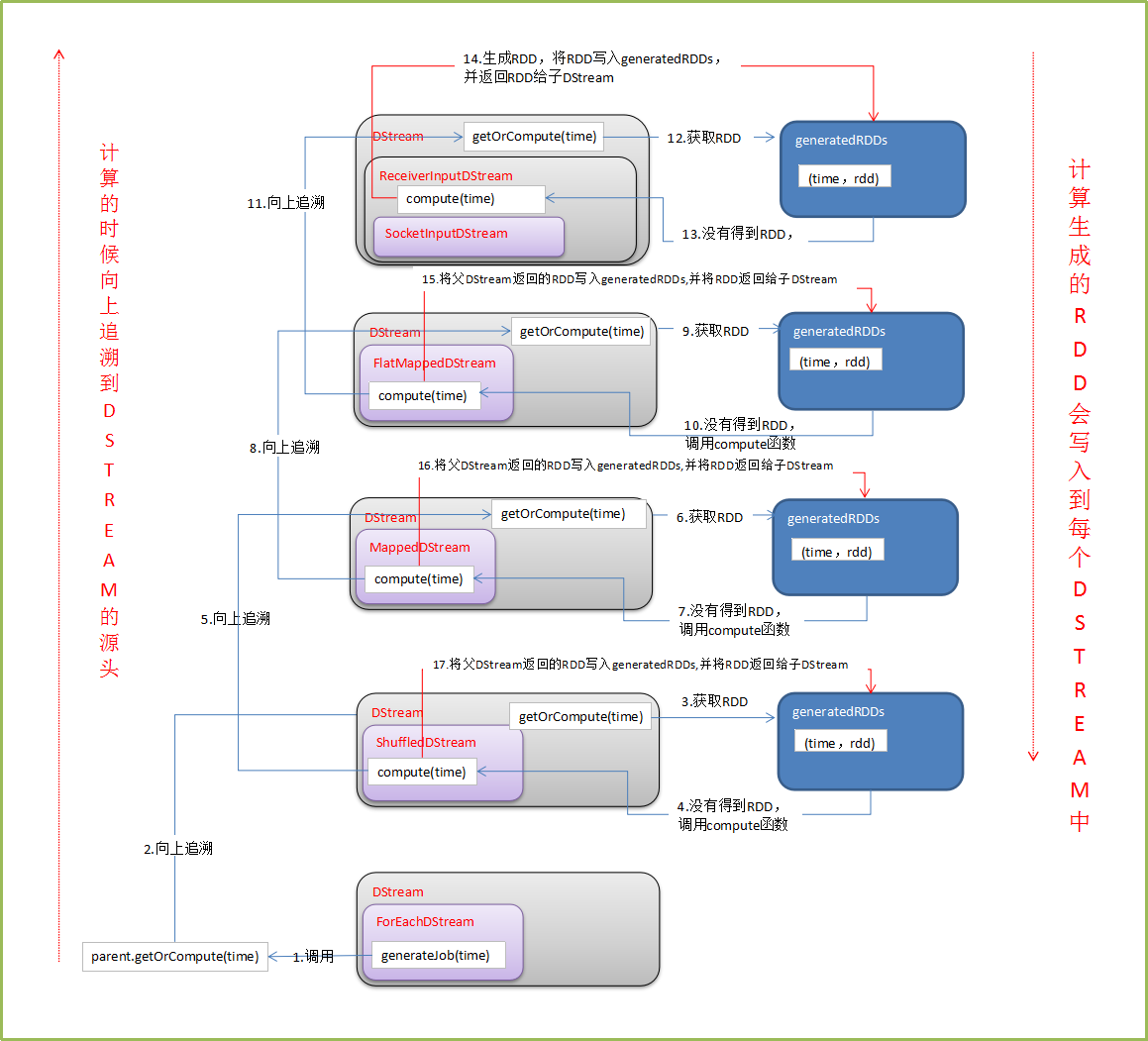

}從這個方法開始,一直向DStream的依賴關係追溯上去。到最初的DStream,然後生成新的RDD,並將RDD寫入generatedRDDs中。過程如下圖:
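The walk up the dependency chain can be imitated with a toy model in plain Scala. This is only a sketch under stated assumptions, not Spark's implementation: `Seq[A]` stands in for `RDD[A]`, `Long` for `Time`, a job is just a `() => Unit`, and every class name (`ToyStream`, `ToyInput`, `ToyMapped`, `ToyOutput`, `ToyGraph`, `LineageDemo`) is hypothetical.

```scala
import scala.collection.mutable

object LineageSketch {
  abstract class ToyStream[A] {
    val generated = mutable.HashMap[Long, Seq[A]]() // plays the role of generatedRDDs
    def compute(time: Long): Option[Seq[A]]
    // Reuse the batch if it was already generated; otherwise compute and cache it.
    def getOrCompute(time: Long): Option[Seq[A]] =
      generated.get(time).orElse {
        val result = compute(time)
        result.foreach(data => generated.put(time, data))
        result
      }
  }

  // Input stream: the root of the chain; `source` is a hypothetical data feed.
  class ToyInput(source: Long => Seq[String]) extends ToyStream[String] {
    def compute(time: Long): Option[Seq[String]] = Some(source(time))
  }

  // Transformed stream: asks its parent for the batch, then applies f.
  class ToyMapped[A, B](parent: ToyStream[A], f: Seq[A] => Seq[B]) extends ToyStream[B] {
    def compute(time: Long): Option[Seq[B]] = parent.getOrCompute(time).map(f)
  }

  // Output stream: wraps the parent's batch in a deferred job.
  class ToyOutput[A](parent: ToyStream[A], action: (Seq[A], Long) => Unit) {
    def generateJob(time: Long): Option[() => Unit] =
      parent.getOrCompute(time).map(data => () => action(data, time))
  }

  // Graph: at most one job per registered output stream per batch.
  class ToyGraph {
    private val outputStreams = mutable.ArrayBuffer[ToyOutput[_]]()
    def addOutputStream(out: ToyOutput[_]): Unit = outputStreams += out
    def generateJobs(time: Long): Seq[() => Unit] =
      outputStreams.flatMap(_.generateJob(time)).toSeq
  }
}

object LineageDemo {
  import LineageSketch._
  def run(): Seq[String] = {
    val log = mutable.ArrayBuffer[String]()
    val lines = new ToyInput(_ => Seq("a b", "b c"))
    val words = new ToyMapped[String, String](lines, ls => ls.flatMap(_.split(" ").toSeq))
    val graph = new ToyGraph
    graph.addOutputStream(
      new ToyOutput[String](words, (data, time) => log += s"$time: ${data.mkString(",")}"))
    val jobs = graph.generateJobs(1000L) // batches are generated and cached here
    log += s"cached before run: ${words.generated.contains(1000L)}"
    jobs.foreach(job => job())           // the output action only runs here
    log.toSeq
  }
}
```

In this sketch, `LineageDemo.run()` returns `Seq("cached before run: true", "1000: a,b,b,c")`: generating the jobs already fills the per-stream caches from the input stream downward, while the output action itself runs only when the job is executed.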
