ItemWriter

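Reading works one item at a time: the reader is called in a loop and returns a single item per call. Writing works one chunk at a time: the writer receives a whole chunk of items and writes them together.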
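The difference between item-wise reading and chunk-wise writing can be sketched in plain Java. This is only a simplified model under stated assumptions, not Spring Batch's actual implementation: the class `ChunkLoopSketch` and its `run` method are hypothetical names, the reader is modeled as an `Iterator`, and the writer as a `Consumer` of a list.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.function.Consumer;

// Simplified model of a chunk-oriented step (hypothetical, not Spring Batch code).
class ChunkLoopSketch {

    // Reads items one at a time and hands them to the writer one chunk at a time.
    // Returns how many times the writer was called.
    static <T> int run(Iterator<T> reader, int chunkSize, Consumer<List<T>> writer) {
        int writes = 0;
        List<T> chunk = new ArrayList<>(chunkSize);
        while (reader.hasNext()) {
            chunk.add(reader.next());              // read: one item per call
            if (chunk.size() == chunkSize) {
                writer.accept(new ArrayList<>(chunk)); // write: the whole chunk at once
                writes++;
                chunk.clear();
            }
        }
        if (!chunk.isEmpty()) {                    // last chunk may be smaller
            writer.accept(new ArrayList<>(chunk));
            writes++;
        }
        return writes;
    }

    public static void main(String[] args) {
        List<Integer> items = new ArrayList<>();
        for (int i = 1; i <= 25; i++) {
            items.add(i);
        }
        int writes = run(items.iterator(), 10,
                chunk -> System.out.println("writing " + chunk.size() + " items"));
        System.out.println("writer calls: " + writes);
    }
}
```

With 25 items and a chunk size of 10, the reader is asked for an item 25 times while the writer is called only three times.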

First, define a Job and an ItemReader to use as the example:

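```java
import org.springframework.batch.core.Job;
import org.springframework.batch.core.Step;
import org.springframework.batch.core.configuration.annotation.JobBuilderFactory;
import org.springframework.batch.core.configuration.annotation.StepBuilderFactory;
import org.springframework.batch.item.ItemReader;
import org.springframework.batch.item.ItemWriter;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.beans.factory.annotation.Qualifier;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;

// Wires the reader and writer beans into a single-step job
@Configuration
public class DbOutputDemoJobConfiguration {

    @Autowired
    private JobBuilderFactory jobBuilderFactory;

    @Autowired
    private StepBuilderFactory stepBuilderFactory;

    @Autowired
    @Qualifier("dbOutputDemoJobFlatFileReader")
    private ItemReader<Customer> dbOutputDemoJobFlatFileReader;

    @Autowired
    @Qualifier("dbOutputDemoJobFlatFileWriter")
    private ItemWriter<Customer> dbOutputDemoJobFlatFileWriter;

    @Bean
    public Step dbOutputDemoStep() {
        return stepBuilderFactory.get("dbOutputDemoStep")
                // Items are read one by one and written in chunks of 10
                .<Customer, Customer>chunk(10)
                .reader(dbOutputDemoJobFlatFileReader)
                .writer(dbOutputDemoJobFlatFileWriter)
                .build();
    }

    @Bean
    public Job dbOutputDemoJob() {
        return jobBuilderFactory.get("dbOutputDemoJob")
                .start(dbOutputDemoStep())
                .build();
    }
}
```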

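```java
import org.springframework.batch.item.file.FlatFileItemReader;
import org.springframework.batch.item.file.mapping.DefaultLineMapper;
import org.springframework.batch.item.file.transform.DelimitedLineTokenizer;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.io.ClassPathResource;

@Configuration
public class DbOutputDemoJobReaderConfiguration {

    @Bean
    public FlatFileItemReader<Customer> dbOutputDemoJobFlatFileReader() {
        FlatFileItemReader<Customer> reader = new FlatFileItemReader<>();
        reader.setResource(new ClassPathResource("customerInit.csv"));

        // Split each line into named fields
        DelimitedLineTokenizer tokenizer = new DelimitedLineTokenizer();
        tokenizer.setNames("id", "firstName", "lastName", "birthdate");

        // Map the fields of one line to a Customer object
        DefaultLineMapper<Customer> customerLineMapper = new DefaultLineMapper<>();
        customerLineMapper.setLineTokenizer(tokenizer);
        customerLineMapper.setFieldSetMapper(fieldSet -> Customer.builder()
                .id(fieldSet.readLong("id"))
                .firstName(fieldSet.readString("firstName"))
                .lastName(fieldSet.readString("lastName"))
                .birthdate(fieldSet.readString("birthdate"))
                .build());
        customerLineMapper.afterPropertiesSet();

        reader.setLineMapper(customerLineMapper);
        return reader;
    }
}
```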

Writing data to a database

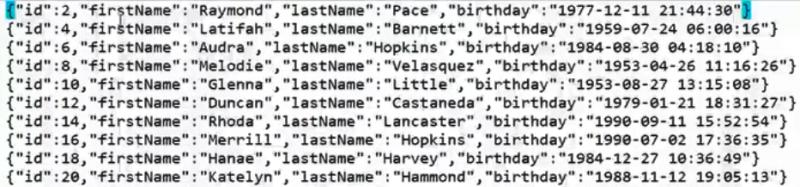

Text data to be loaded into the database

Database table

JdbcBatchItemWriter

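```java
import javax.sql.DataSource;

import org.springframework.batch.item.database.BeanPropertyItemSqlParameterSourceProvider;
import org.springframework.batch.item.database.JdbcBatchItemWriter;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;

@Configuration
public class DbOutputDemoJobWriterConfiguration {

    @Autowired
    private DataSource dataSource;

    @Bean
    public JdbcBatchItemWriter<Customer> dbOutputDemoJobFlatFileWriter() {
        JdbcBatchItemWriter<Customer> itemWriter = new JdbcBatchItemWriter<>();
        // Set the data source
        itemWriter.setDataSource(dataSource);
        // SQL statement to execute, with named parameters
        itemWriter.setSql("insert into customer(id,firstName,lastName,birthdate) values " +
                "(:id,:firstName,:lastName,:birthdate)");
        // Resolve each named parameter from the Customer property of the same name
        itemWriter.setItemSqlParameterSourceProvider(new BeanPropertyItemSqlParameterSourceProvider<>());
        return itemWriter;
    }
}
```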
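To make the role of the named parameters (`:id`, `:firstName`, and so on) more concrete, here is a rough plain-Java sketch of the idea: the placeholders are turned into positional `?` markers, and each name is resolved through the matching getter on the item. It is a simplified model under stated assumptions, not how Spring parses SQL internally; `NamedParameterSketch`, its methods, and the nested `Customer` stand-in are all hypothetical, and the naive regular expression ignores cases such as string literals.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Simplified model of named-parameter binding (hypothetical, not Spring code).
class NamedParameterSketch {

    // Matches placeholders such as :id or :firstName
    private static final Pattern NAMED = Pattern.compile(":([A-Za-z_][A-Za-z0-9_]*)");

    // Hypothetical stand-in for the article's Customer class
    public static class Customer {
        private final long id;
        private final String firstName;
        private final String lastName;
        private final String birthdate;

        public Customer(long id, String firstName, String lastName, String birthdate) {
            this.id = id;
            this.firstName = firstName;
            this.lastName = lastName;
            this.birthdate = birthdate;
        }

        public long getId() { return id; }
        public String getFirstName() { return firstName; }
        public String getLastName() { return lastName; }
        public String getBirthdate() { return birthdate; }
    }

    // Replaces every :name with a positional ? marker
    static String toPositional(String sql) {
        return NAMED.matcher(sql).replaceAll("?");
    }

    // Collects the placeholder names in order of appearance
    static List<String> parameterNames(String sql) {
        List<String> names = new ArrayList<>();
        Matcher matcher = NAMED.matcher(sql);
        while (matcher.find()) {
            names.add(matcher.group(1));
        }
        return names;
    }

    // Resolves each placeholder through the bean's getter, e.g. firstName -> getFirstName()
    static List<Object> bind(String sql, Object bean) {
        List<Object> values = new ArrayList<>();
        for (String name : parameterNames(sql)) {
            String getter = "get" + Character.toUpperCase(name.charAt(0)) + name.substring(1);
            try {
                values.add(bean.getClass().getMethod(getter).invoke(bean));
            } catch (ReflectiveOperationException e) {
                throw new IllegalStateException("No readable property: " + name, e);
            }
        }
        return values;
    }

    public static void main(String[] args) {
        String sql = "insert into customer(id,firstName,lastName,birthdate) values "
                + "(:id,:firstName,:lastName,:birthdate)";
        Customer customer = new Customer(1L, "Ada", "Lovelace", "1815-12-10");
        System.out.println(toPositional(sql));
        System.out.println(bind(sql, customer));
    }
}
```

The practical consequence is that the placeholder names in the SQL must match the property names of `Customer`; a misspelled placeholder has no getter to resolve against.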

Execution result

Writing data to a .data file

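FlatFileItemWriter can write objects of any type T to a plain file, as long as it is given a way to turn each object into a line of text.

Here we read the data from customerInit.csv and write it to a customerInfo .data file, which the configuration creates as a temporary file.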

FlatFileItemWriter

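```java
import java.io.File;

import org.springframework.batch.item.file.FlatFileItemWriter;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.io.FileSystemResource;

@Configuration
public class FlatFileDemoJobWriterConfiguration {

    @Bean
    public FlatFileItemWriter<Customer> flatFileDemoFlatFileWriter() throws Exception {
        FlatFileItemWriter<Customer> itemWriter = new FlatFileItemWriter<>();
        // Output file path (a temporary file)
        String path = File.createTempFile("customerInfo", ".data").getAbsolutePath();
        System.out.println(">> file is created in: " + path);
        itemWriter.setResource(new FileSystemResource(path));
        // Converts each Customer object into one line of text
        itemWriter.setLineAggregator(new MyCustomerLineAggregator());
        itemWriter.afterPropertiesSet();
        return itemWriter;
    }
}
```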

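```java
import com.fasterxml.jackson.core.JsonProcessingException;
import com.fasterxml.jackson.databind.ObjectMapper;
import org.springframework.batch.item.file.transform.LineAggregator;

// Serializes each Customer as one line of JSON
public class MyCustomerLineAggregator implements LineAggregator<Customer> {

    private final ObjectMapper mapper = new ObjectMapper();

    @Override
    public String aggregate(Customer customer) {
        try {
            return mapper.writeValueAsString(customer);
        } catch (JsonProcessingException e) {
            throw new RuntimeException("Unable to serialize.", e);
        }
    }
}
```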
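The aggregator's job is simply "one item in, one line out"; the file writer then emits each line followed by a line separator. A plain-Java sketch of that division of labour, using a comma-delimited format instead of JSON, might look like the following. All names here (`FlatFileSketch`, `writeChunk`, `DELIMITED`, and the nested `Customer` stand-in) are hypothetical and chosen for illustration only.

```java
import java.io.PrintWriter;
import java.util.Arrays;
import java.util.List;
import java.util.function.Function;

// Simplified model of aggregating items into lines (hypothetical, not Spring Batch code).
class FlatFileSketch {

    // Hypothetical stand-in for the article's Customer class
    static class Customer {
        final long id;
        final String firstName;
        final String lastName;
        final String birthdate;

        Customer(long id, String firstName, String lastName, String birthdate) {
            this.id = id;
            this.firstName = firstName;
            this.lastName = lastName;
            this.birthdate = birthdate;
        }
    }

    // An alternative "aggregator": joins the fields with commas
    static final Function<Customer, String> DELIMITED =
            c -> c.id + "," + c.firstName + "," + c.lastName + "," + c.birthdate;

    // Writes one line per item; println appends the platform line separator
    static <T> void writeChunk(PrintWriter out, List<T> chunk, Function<T, String> aggregator) {
        for (T item : chunk) {
            out.println(aggregator.apply(item));
        }
    }

    public static void main(String[] args) {
        PrintWriter out = new PrintWriter(System.out, true);
        writeChunk(out, Arrays.asList(
                new Customer(1, "Ada", "Lovelace", "1815-12-10"),
                new Customer(2, "Alan", "Turing", "1912-06-23")), DELIMITED);
        out.flush();
    }
}
```

Swapping the output format then only means swapping the aggregator; the writing loop stays the same.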

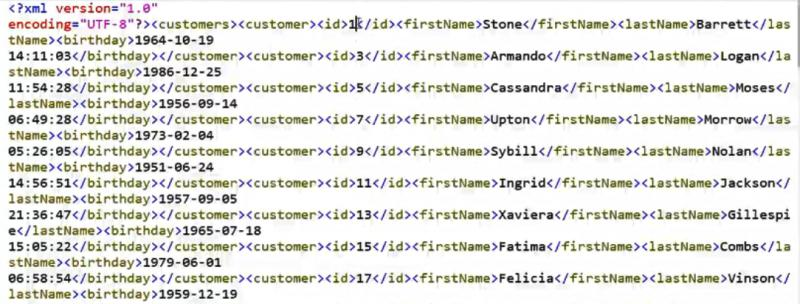

Writing data to an XML file

To write data to an XML file, use StaxEventItemWriter together with a marshaller that serializes the objects; this example uses XStreamMarshaller.

StaxEventItemWriter

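```java
import java.io.File;
import java.util.HashMap;
import java.util.Map;

import org.springframework.batch.item.xml.StaxEventItemWriter;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.io.FileSystemResource;
import org.springframework.oxm.xstream.XStreamMarshaller;

@Configuration
public class XMLFileDemoJobWriterConfiguration {

    @Bean
    public StaxEventItemWriter<Customer> xmlFileDemoXMLFileWriter() throws Exception {
        // Converts objects to XML; each Customer becomes a <customer> element
        XStreamMarshaller marshaller = new XStreamMarshaller();
        Map<String, Class<?>> aliases = new HashMap<>();
        aliases.put("customer", Customer.class);
        marshaller.setAliases(aliases);

        StaxEventItemWriter<Customer> itemWriter = new StaxEventItemWriter<>();
        // Root tag of the document
        itemWriter.setRootTagName("customers");
        itemWriter.setMarshaller(marshaller);
        // Output XML file path (a temporary file)
        String path = File.createTempFile("customerInfo", ".xml").getAbsolutePath();
        System.out.println(">> xml file is generated: " + path);
        itemWriter.setResource(new FileSystemResource(path));
        itemWriter.afterPropertiesSet();
        return itemWriter;
    }
}
```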
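The shape of the resulting document (a root tag wrapping one element per item) can be illustrated with the JDK's own StAX API. This is only a sketch of the document structure under stated assumptions, not the output of StaxEventItemWriter or XStream themselves: `StaxSketch` and `toXml` are hypothetical names, and items are modeled as maps from field name to value.

```java
import java.io.StringWriter;
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import javax.xml.stream.XMLOutputFactory;
import javax.xml.stream.XMLStreamException;
import javax.xml.stream.XMLStreamWriter;

// Simplified model of the XML structure: <root><item><field>value</field>...</item>...</root>
class StaxSketch {

    static String toXml(String rootTag, String itemTag, List<Map<String, String>> items) {
        StringWriter out = new StringWriter();
        try {
            XMLStreamWriter xml = XMLOutputFactory.newInstance().createXMLStreamWriter(out);
            xml.writeStartDocument();
            xml.writeStartElement(rootTag);              // e.g. <customers>
            for (Map<String, String> item : items) {
                xml.writeStartElement(itemTag);          // e.g. <customer>
                for (Map.Entry<String, String> field : item.entrySet()) {
                    xml.writeStartElement(field.getKey());
                    xml.writeCharacters(field.getValue());
                    xml.writeEndElement();
                }
                xml.writeEndElement();
            }
            xml.writeEndElement();
            xml.writeEndDocument();
            xml.close();
        } catch (XMLStreamException e) {
            throw new IllegalStateException("Unable to write XML", e);
        }
        return out.toString();
    }

    public static void main(String[] args) {
        Map<String, String> customer = new LinkedHashMap<>();
        customer.put("id", "1");
        customer.put("firstName", "Ada");
        System.out.println(toXml("customers", "customer", Collections.singletonList(customer)));
    }
}
```

In the configuration above, `setRootTagName("customers")` plays the role of `rootTag`, and the `customer` alias plays the role of `itemTag`.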

The output is as follows

Writing data to multiple files

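To write data to multiple files, use CompositeItemWriter or ClassifierCompositeItemWriter.

The difference between the two:

- CompositeItemWriter writes the full data set to every one of the files;
- ClassifierCompositeItemWriter applies a rule to each item and writes the item only to the file that the rule selects.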
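The two delegation strategies can be contrasted in a few lines of plain Java. This is a simplified model under stated assumptions, not the Spring Batch classes: `DelegationSketch` and its methods are hypothetical names, delegates are modeled as `Consumer`s of a list, and the even/odd rule in `main` is only an example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;
import java.util.function.Function;

// Simplified model of the two delegation strategies (hypothetical, not Spring Batch code).
class DelegationSketch {

    // Composite: every delegate receives the full chunk
    static <T> void writeComposite(List<T> chunk, List<Consumer<List<T>>> delegates) {
        for (Consumer<List<T>> delegate : delegates) {
            delegate.accept(chunk);
        }
    }

    // Classifier: each item goes to exactly one delegate, chosen by the rule
    static <T> void writeClassified(List<T> chunk, Function<T, Consumer<List<T>>> classifier) {
        Map<Consumer<List<T>>, List<T>> grouped = new LinkedHashMap<>();
        for (T item : chunk) {
            grouped.computeIfAbsent(classifier.apply(item), key -> new ArrayList<>()).add(item);
        }
        grouped.forEach((delegate, items) -> delegate.accept(items));
    }

    public static void main(String[] args) {
        List<Integer> chunk = Arrays.asList(1, 2, 3, 4);
        Consumer<List<Integer>> xml = items -> System.out.println("xml  <- " + items);
        Consumer<List<Integer>> json = items -> System.out.println("json <- " + items);

        writeComposite(chunk, Arrays.asList(xml, json));      // both see all four items
        writeClassified(chunk, i -> i % 2 == 0 ? xml : json); // each sees only its share
    }
}
```

With the composite strategy both targets end up with identical content; with the classifier strategy the targets together hold each item exactly once.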

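The examples here write the data to an XML file and a JSON file, once with CompositeItemWriter and once with ClassifierCompositeItemWriter. Both use the following two delegate writers: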

```java
@Bean
public StaxEventItemWriter<Customer> xmlFileWriter() throws Exception {
    // Converts objects to XML
    XStreamMarshaller marshaller = new XStreamMarshaller();
    Map<String, Class<?>> aliases = new HashMap<>();
    aliases.put("customer", Customer.class);
    marshaller.setAliases(aliases);

    StaxEventItemWriter<Customer> itemWriter = new StaxEventItemWriter<>();
    // Root tag of the document
    itemWriter.setRootTagName("customers");
    itemWriter.setMarshaller(marshaller);
    // Output path (a temporary file)
    String path = File.createTempFile("multiInfo", ".xml").getAbsolutePath();
    System.out.println(">> xml file is created in: " + path);
    itemWriter.setResource(new FileSystemResource(path));
    itemWriter.afterPropertiesSet();
    return itemWriter;
}

@Bean
public FlatFileItemWriter<Customer> jsonFileWriter() throws Exception {
    FlatFileItemWriter<Customer> itemWriter = new FlatFileItemWriter<>();
    // Output path (a temporary file)
    String path = File.createTempFile("multiInfo", ".json").getAbsolutePath();
    System.out.println(">> json file is created in: " + path);
    itemWriter.setResource(new FileSystemResource(path));
    // One JSON object per line
    itemWriter.setLineAggregator(new MyCustomerLineAggregator());
    itemWriter.afterPropertiesSet();
    return itemWriter;
}
```

CompositeItemWriter

Use CompositeItemWriter to write the data to multiple files:

```java
@Bean
public CompositeItemWriter<Customer> customerCompositeItemWriter() throws Exception {
    CompositeItemWriter<Customer> itemWriter = new CompositeItemWriter<>();
    // Register the delegate writers; each one receives every item
    itemWriter.setDelegates(Arrays.asList(xmlFileWriter(), jsonFileWriter()));
    itemWriter.afterPropertiesSet();
    return itemWriter;
}
```

Output

ClassifierCompositeItemWriter

Use ClassifierCompositeItemWriter to route the data to files according to a rule:

```java
@Bean
public ClassifierCompositeItemWriter<Customer> customerCompositeItemWriter() throws Exception {
    ClassifierCompositeItemWriter<Customer> itemWriter = new ClassifierCompositeItemWriter<>();
    // The classifier picks the delegate writer for each item
    itemWriter.setClassifier(new MyCustomerClassifier(xmlFileWriter(), jsonFileWriter()));
    return itemWriter;
}
```

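Define the rule that partitions the data; here the customers are classified by id:

```java
public class MyCustomerClassifier implements Classifier<Customer, ItemWriter<? super Customer>> {
    // ...
}
```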
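The body of `MyCustomerClassifier` is not shown here. A minimal sketch of what such a classifier could look like follows, with loud assumptions: the even/odd rule is invented for illustration (the original rule is unknown), and the nested `ItemWriter`, `Classifier`, and `Customer` types are local stand-ins that mirror only the single method needed from the Spring types, so that the example compiles on its own.

```java
import java.util.List;

// Sketch of a classifier that routes customers by id (assumed rule, local stand-in types).
class ClassifierSketch {

    // Stand-in for Spring Batch's ItemWriter, reduced to its write method
    interface ItemWriter<T> {
        void write(List<? extends T> items);
    }

    // Stand-in for Spring's Classifier interface
    interface Classifier<C, T> {
        T classify(C classifiable);
    }

    // Hypothetical stand-in for the article's Customer class
    static class Customer {
        final long id;

        Customer(long id) {
            this.id = id;
        }
    }

    static class MyCustomerClassifier implements Classifier<Customer, ItemWriter<? super Customer>> {

        private final ItemWriter<Customer> xmlWriter;
        private final ItemWriter<Customer> jsonWriter;

        MyCustomerClassifier(ItemWriter<Customer> xmlWriter, ItemWriter<Customer> jsonWriter) {
            this.xmlWriter = xmlWriter;
            this.jsonWriter = jsonWriter;
        }

        @Override
        public ItemWriter<? super Customer> classify(Customer customer) {
            // Assumed rule: even ids go to the XML writer, odd ids to the JSON writer
            return customer.id % 2 == 0 ? xmlWriter : jsonWriter;
        }
    }

    public static void main(String[] args) {
        ItemWriter<Customer> xml = items -> System.out.println("xml  <- " + items.size() + " item(s)");
        ItemWriter<Customer> json = items -> System.out.println("json <- " + items.size() + " item(s)");
        MyCustomerClassifier classifier = new MyCustomerClassifier(xml, json);
        System.out.println(classifier.classify(new Customer(2)) == xml);  // even id
        System.out.println(classifier.classify(new Customer(3)) == json); // odd id
    }
}
```

One point worth checking when wiring this into a real step: ClassifierCompositeItemWriter does not implement ItemStream, so file-based delegates such as `xmlFileWriter()` and `jsonFileWriter()` generally need to be registered on the step with `.stream(...)` so that they are opened and closed.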


Output

